Life as we know it today couldn’t exist without computers.

It’s hard to overstate the role computers play in our lives today. Our silicony friends have left their mark on every facet of life, changing everything from how we date, to deep space exploration. Computers keep our planes flying and make sure there’s always enough juice in the grid for your toaster to work in the morning and the TV when you come back home. Through them, the POTUS’ rant on Twitter can be read by millions of people mere seconds after it’s typed. They also help propel women as equal participants in the labor market and as equal, full-right members of civic society today.

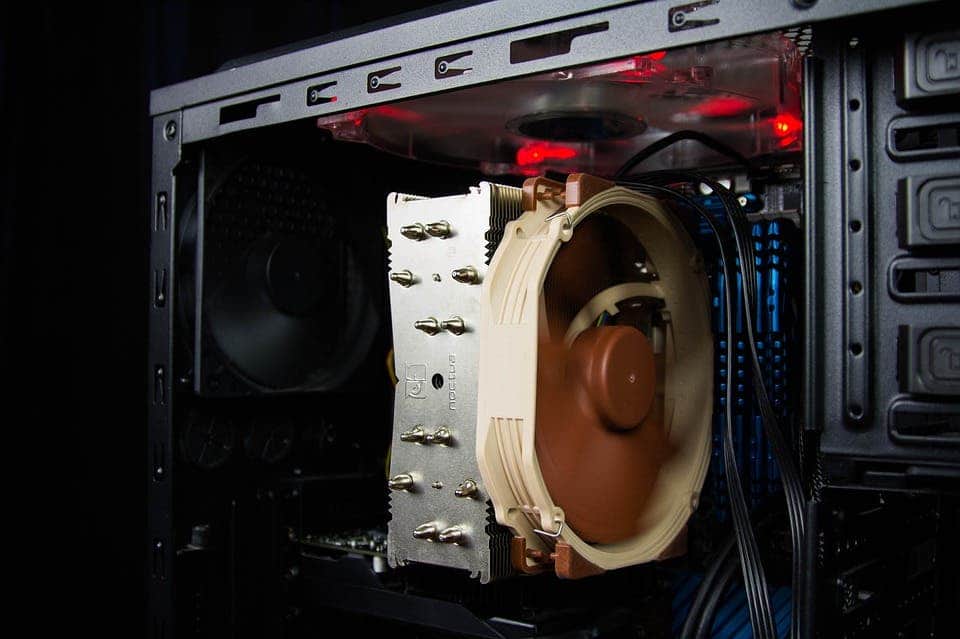

So let’s take a look at these literal wonders of technology, the boxes of metal and plastic that allowed the human race to outsource tedious work of the mind at an unprecedented pace.

The Brain Mk.1 Computer

When somebody today says ‘computer’, we instantly think of a PC, a personal computer. In other words, a machine, a device we’ve built to do calculations. We can make them do a lot of pretty spectacular stuff, such as predict asteroid orbits or run Skyrim, as long as we can describe it to them in terms of math.

But just 70 years ago, that wasn’t the case. While the first part of that abbreviation is pretty self-explanatory, the second one is a tip of the hat to the device’s heritage: the bygone profession of the computer. Human computers to be more exact, though of course, the distinction didn’t exist at that time. They were, in the broadest terms, people whose job was to perform all the mathematical computations society required by hand. And boy was it a lot of math.

Image via Pixabay.

For example, trigonometry tables. The one I’ve linked there is a pretty bare-bones version. It calculates 4 values (sine, cosine, tangent, and cotangent) for every degree from 0 to 45 degrees (because trigonometry is funny and these values repeat, sometimes going negative). So, it required 46 times 4 = 184 calculations to put together.

Now, it’s not actually hard to calculate trigonometry values, but they are tedious and prone to mistakes because they involve fractions and a lot of decimals. Another issue was the size of these things. A per degree table works well for teaching high-schoolers about trigo. For top notch science, however, tables working on a per .1, or .01 degree basis were required — meaning a single table could need up to tens of thousands of calculations.
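To get a feel for the scale, here is a minimal Python sketch that rebuilds such a table and counts its entries. The function names `trig_table` and `entry_count` are made up for this illustration, and the table layout (one row per step from 0 to 45 degrees, four values per row) is an assumption based on the description above, not a copy of any particular historical table.

```python
import math

def trig_table(step_deg=1.0, max_deg=45.0):
    """Return rows of (angle, sin, cos, tan, cot) from 0 to max_deg inclusive."""
    n_rows = round(max_deg / step_deg) + 1  # +1 because 0 degrees is a row too
    rows = []
    for i in range(n_rows):
        angle = i * step_deg
        rad = math.radians(angle)
        s, c = math.sin(rad), math.cos(rad)
        tan = s / c
        # Cotangent is undefined at 0 degrees; mark it as infinity.
        cot = c / s if s != 0 else float("inf")
        rows.append((angle, s, c, tan, cot))
    return rows

def entry_count(step_deg):
    """Number of individual values a human computer would have to work out."""
    return len(trig_table(step_deg)) * 4

if __name__ == "__main__":
    for step in (1.0, 0.1, 0.01):
        print(f"step {step} degrees: {entry_count(step)} entries")
```

A per-degree table comes out at 184 entries, a per-0.1-degree table at 1,804, and a per-0.01-degree table at 18,004. A laptop fills in all of them in well under a second; a human computer had to work each one out by hand and then check it.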

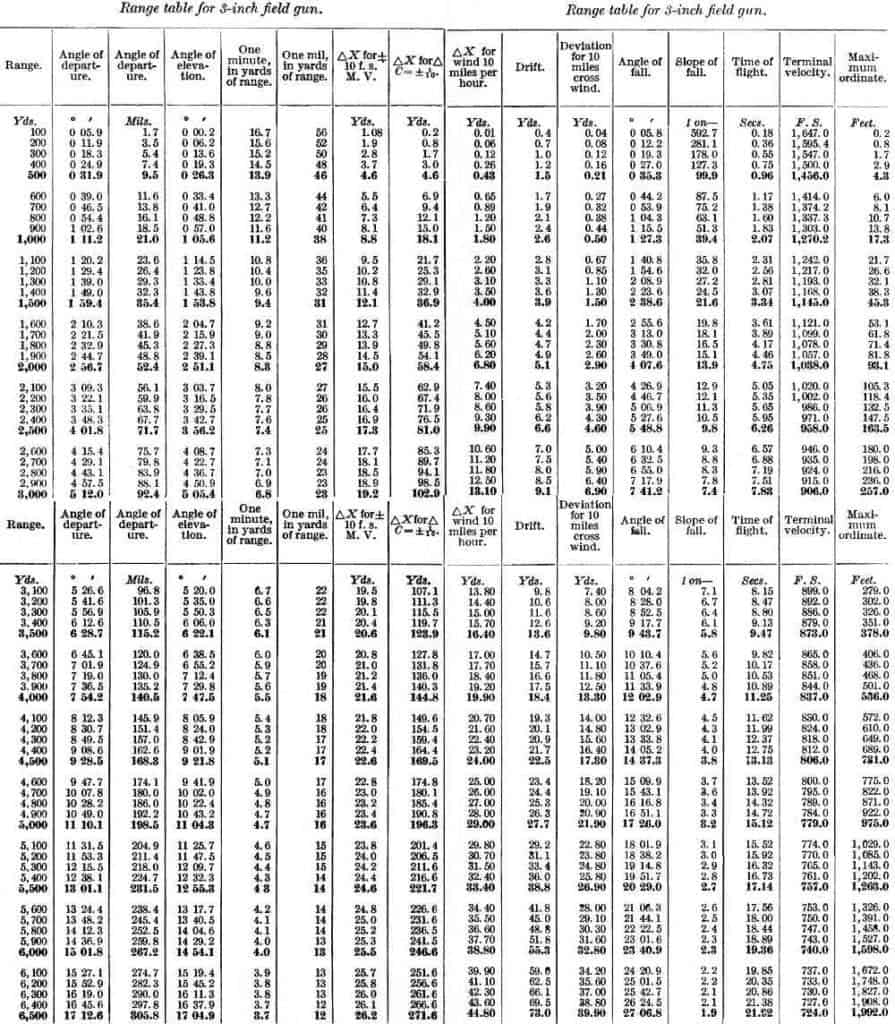

Then, you had stuff like artillery tables. These were meant to help soldiers in the field know exactly how high to point the barrel of a gun so that the shell would fall where the other guys were. They’d tell you how much a shell would likely deviate, at what angle it would hit, and how long it would take for it to get to a target. The fancier ones would even take weather into account, calculating how much you needed to tip the barrel in one direction or another to correct for wind.
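As a rough illustration of what a single row of such a table involves, here is a heavily simplified Python sketch. It assumes a shell flying in a vacuum over flat ground, which real range tables did not: they had to account for air resistance, and that is where most of the labor went. The function name `range_table_row` and the 500 m/s muzzle velocity are hypothetical values picked for the example, not figures for any real gun.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2

def range_table_row(target_range_m, muzzle_velocity_ms):
    """Return (elevation_deg, flight_time_s, angle_of_fall_deg) for one range.

    Vacuum ballistics only: no drag, no wind, flat ground (an assumption).
    """
    ratio = G * target_range_m / muzzle_velocity_ms ** 2
    if ratio > 1:
        raise ValueError("target is beyond the maximum range for this velocity")
    # Low-angle solution of: range = v^2 * sin(2 * elevation) / g
    elevation = 0.5 * math.asin(ratio)
    flight_time = 2 * muzzle_velocity_ms * math.sin(elevation) / G
    # Without drag the trajectory is symmetric, so the shell lands
    # at the same angle it was fired at.
    angle_of_fall = elevation
    return math.degrees(elevation), flight_time, math.degrees(angle_of_fall)

if __name__ == "__main__":
    velocity = 500.0  # hypothetical muzzle velocity in m/s
    for distance in range(1000, 6000, 1000):
        elev, t, fall = range_table_row(distance, velocity)
        print(f"{distance:>5} m: elevation {elev:5.2f} deg, "
              f"flight {t:5.2f} s, fall {fall:5.2f} deg")
```

Even this toy version needs an inverse sine and a couple of multiplications per row. Add drag, wind, and a separate table for every gun and shell combination, and the amount of hand calculation grows very quickly.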

I don’t even want to think how much work went into making these charts. It wasn’t the fun kind of work where you cheekily browse memes when the boss isn’t looking, either — it’s hours upon thousands of hours of repetitive, elbow-breaking math. When you were finally done, well guess who’s getting seconds (protip: it’s computer-you) because each chart had to be tailor-made for each type of gun, and each type of ammo. After that, you had to check every result to see if you messed up even by a few decimals. And then, then, if some guys crunched a table you used as reference wrong, you’d have to do it all over again.

Gun range tables for the US 3-inch field gun, models 1902-1905, 15 lb shell.

Image credits William Westervelt, “Gunnery and explosives for field artillery officers,” via US Army.

It’s not just the narrow profession of computers we’re talking about, though. They were only the tip of the iceberg. Businesses needed accountants, designers and architects, people to deliver mail, people to type and copy stuff, organize files, keep inventory, and innumerable other tasks that PCs today do for us. Their job contracts didn’t read ‘computers’ but they performed a lot of the tasks we now turn to PCs for. It’s all this work of gathering, processing, and transmitting data that I’ll be referring to when I use the term “background computational cost”.

Lipstick computers

Engineers, being the smart people that we are, soon decided all this number crunching wasn’t going to work for us — that’s how the computer job was born. Overall, this division of labor went down pretty well. Let’s look at the National Advisory Committee for Aeronautics, or NACA, the precursor of the NASA we all know and love today. The committee’s role was “to supervise and direct the scientific study of the problems of flight with a view to their practical solution.” In other words, they were the rocket scientists of a world which didn’t yet have rockets. Their main research center was the Langley Memorial Aeronautical Laboratory (LMAL), which in 1935 employed five people in its “Computer Pool.”

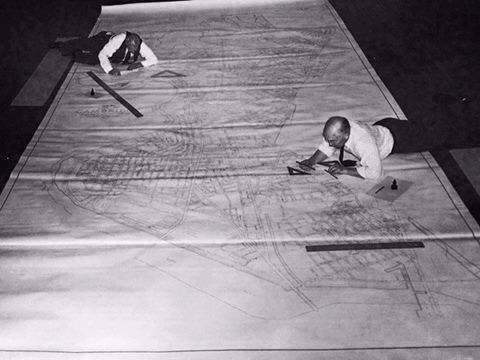

Image via Quora.

The basic research path at LMAL was an iterative process. Engineers would design a new wing shape, for example, and then send it to a wind tunnel for testing. The raw data results would then be sent to the computers to be processed into all sorts of useful information: how much lift it would produce, how much load it could take, potential flaws, any graphics that would be needed, and so on. The computers were so useful to the research efforts, and so appreciated by the engineers, that by 1946 the LMAL would employ 400 ‘girl’ computers.

“Engineers were free to devote their attention to other aspects of research projects, while the computers received praise for calculating […] more in a morning than an engineer alone could finish in a day,” NASA recounts of a Langley memo.

“The engineers admit themselves that the girl computers do their work more rapidly and accurately than they would,” Paul Ceruzzi from Air and Space writes, citing the same document.

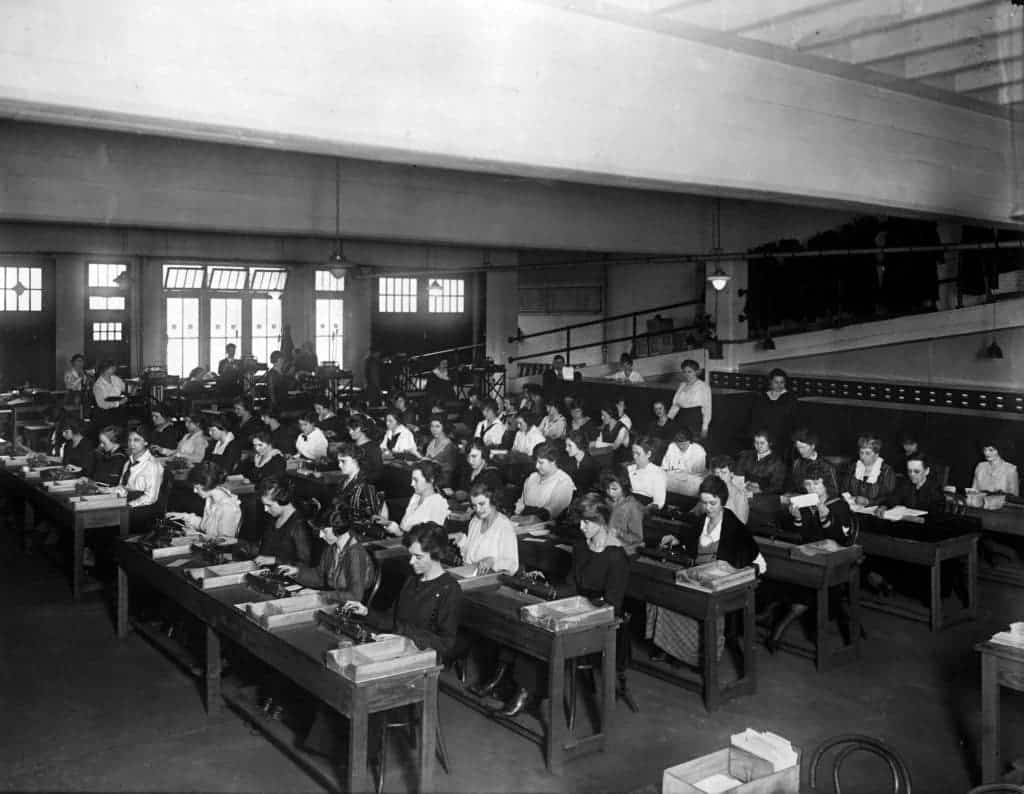

Computing, in tandem with the restrictions and manpower drain of WW2, would prove to be the key to liberalizing the job market for both sexes: all of those computers LMAL employed were women. People of both sexes used to take up the computing mantle, and women in particular did so because they could work from home, receiving and returning their tasks via mail. But in a world where they were expected to be mothers, educated only as far as housekeeping, raising children, and social etiquette were concerned, the women at LMAL were working in cutting-edge science. This was a well-educated group of women on which the whole research process relied, and they showed they could pull their weight just as well as their male counterparts, if not better.

Image via Computer History Museum.

In the 1940s, Langley also began recruiting African-American women with college degrees to work as computers. Picture that: in a world where racial segregation went as far as separating bathrooms, dining rooms, and seats on buses, their work kept planes in the air and would eventually put a man on the Moon.

Human computers were a massive boon to industry and research at the time. They’d make some mistakes, sure, but they were pretty good at spotting and fixing them. They weren’t very fast, but they were as fast as they had to be for the needs of the day. Finally, the processing boost they offered institutions, both public and private, justified their expense for virtually every business that needed them — so human computers drove the economy forward, helping create jobs for everyone else.

But they had one other monumental effect on society: they brought women and minorities into the job market. They showed that brainpower doesn’t care about sex or race, that anyone can turn their mind to bettering society.

Actual computers

But as science advanced and economies expanded and became more complicated, the background computational cost increased exponentially. While this was happening, people started to figure out that brain power also doesn’t care about species. Or biology, for that matter.

Image credits Fifaliana Rakotoarison.

The simple fact is that I can type this sentence in 10 or so seconds. I can make as many mistakes as I want, ’cause I have a backspace button. I can even scratch the whole thing and tell you to go read about the oldest tree instead. I can put those blue hyperlinks in and you’ll find that article by literally moving your finger a little. It’s extremely easy for me. That’s because it has a huge background computational cost. To understand just how much of an absolute miracle this black box I’m working on is, let’s try to translate what it does in human-computer terms.

I could probably make do with one typewriter if I don’t plan to backspace anything and just leave the mistakes in instead. I backspace a lot, though, so I’d say about five people would be enough to let me write and re-write this article at a fraction of the speed I manage now. Somewhere between 15 and 20 people would allow me to keep a comparable speed if I really tried to limit edits to a minimum.

So far, I’ve used about 4 main sources of inspiration, Wiki for those tasty link trees, and nibbled around 11ish secondary sources of information. Considering I know exactly what I want to read and where to find it (I never do) I’d need an army of people to substitute Google, source and carry the papers, find the exact paragraphs I want, at least one person per publication to serve the role links do now, and so on. But let’s be conservative, let’s say I can make do with 60 people for this bit. When I’m done, I’ll click a button and you will be able to read this from the other face of the planet across the span of time. No printing press, no trucks and ships to shuttle the Daily ZME, no news stands needed.

That all adds up to what, between 66 people and ‘a small village’? I can do their work from home while petting a cat, or from the office while petting three cats. All because my computer, working with yours, and god knows how many others in between, substitutes the work these people would have to do as a background computational cost and then carries that with no extra effort on my part.

Hello, I’m Mr. Computer and I’ll be your replacement. (Help me I’m a slave!)

Image credits Department of Defense.

The thing is that you, our readers, come to ZME Science for information. That’s our product. Well, information and a pleasing turn of phrase. We don’t need to produce prints to sell since that would mix what you’re here for with a lot of other things you don’t necessarily want, such as printing and transport, which translate to higher costs on your part. Instead, I can use the internet to make that information available to you at your discretion with no extra cost. It makes perfect economic sense both for me, since I know almost 90% of American households have a PC, and you too, since you get news when you want it without paying a dime.

But it also cuts out a lot of the middlemen. That, in short, is why computers are taking our jobs.

By their nature, industries dealing with information can easily substitute manpower with background computational cost, which is why tech companies have incredible revenue per employee: they make a lot of money, but they only employ a few people with a lot of computers to help.

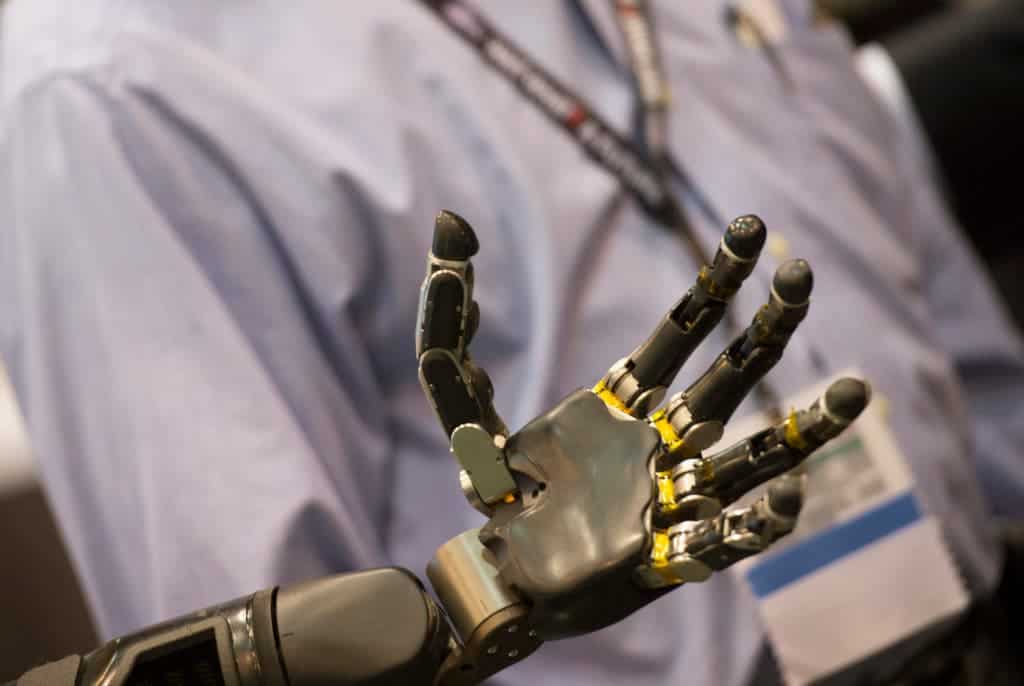

This effect, however, is seeping into all three main areas of the economy: agriculture, industry, and services. Smart agriculture and robot use will increase yields, and if they don’t drive the number of jobs down, they’ll at least lower the overall density of jobs in the sector. Industry is investing heavily in robots from simple ones to man (robot?) assembly lines, or highly specialized ones to perform underwater repairs. Service jobs, such as retail, delivery, and transport are also enlisting more and more robotic help in the shape of drones, autonomous shops, robot cooks. Not only can they substitute us, but the bots are actually much better at doing those things than we are.

That’s a problem I feel will eventually hit our society, and hit it very hard. If all you’re looking at is the bottom line, replacing human workers with computers makes perfect sense. It’s synonymous with replacing paid human labor with slave computational load, which costs nothing in comparison. That’s very good for business, but ruinous for the society at large: our economies, for better or for worse, are tailored around consumption. Without a large percentage of everyone having disposable income (in the form of wages) to spend on the things we produce, our whole way of doing things goes up in flames really fast. There’s glaring wealth inequality in the world even now, and a lot of people are concerned that robots will collapse the system — that the ultra rich of today will end up owning all the machines, all the money, all the goods.

But I’m an idealist. Collapse might not be that bad a thing, considering that our economies simply aren’t sustainable. The secret is changing for the better.

Image credits Ben Husmann.

Computers are so embedded into our lives today and have become so widespread because they do free thinking and free labor for us. Everywhere around you, right now, there are computers doing stuff you want and need. Stuff that you or someone else had to do for you, but not anymore. A continuous background computational cost that’s now, well, free. Dial somebody up, and a computer is handling that call for you — we don’t pay it anything. A processor is taking care that your clothes come out squeaky clean and not too wet from the washing machine at the laundromat. Another one handles your bank account. Press a button and you’ll get money out of a box at the instructions of a computer. We don’t pay them anything. We pay the people who ‘own’ them, however. They’re basically slaves. Maybe that has to change.

In a society where robots can do virtually infinite work for almost no cost, does work still have value? If we can make all the pots and pans we need without anyone actually putting in any effort to make them, should pots and pans still have a cost? We wouldn’t think it’s right to take somebody’s labor as our own, so why would we pay ‘someone’ for the work robots do for free? And if nobody has to work because we have all we need, what’s the role of money? Should we look towards a basic income model or are works of fiction, such as Iain M. Banks’ Culture series, which do away with money altogether, the best source of inspiration?

Oh, and we’re at a point where self-aware artificial intelligence is crossing the boundary between ‘fiction’ and ‘we’ll probably see this soon-ish’. That’s going to complicate the matters even further and, I think, put a very thick blanket of moral and ethical concerns on top. So these aren’t easy questions, but they’re questions we’re going to have to sit down and debate sooner rather than later.

No matter where the future takes us, however, I have to say that I’m amazed at what we’ve managed to achieve so far. Humanity, the species that managed to outsource thought, risks making labor obsolete.

Now that’s a headline I’m pining for.