Machines are starting to peer into the brain, see what we’re thinking, and re-create it.

Full disclosure: I’m not the hardest worker out there. I also have a frustrating habit of timing my bouts of inspiration to a few minutes after my head hits the pillow.

In other words, I wave most of those bouts goodbye on my way to dream town.

But the work of researchers from Japan’s Advanced Telecommunications Research Institute (ATR) and Kyoto University could finally let me sleep my bouts away and also make the most of them — at the same time. The team has created a first-of-its-kind algorithm that can interpret and accurately reproduce images seen or imagined by a person.

Despite still being “decades” away from practical use, the technology brings us one step closer to systems that can read and understand what’s going on in our minds.

Eyes on the mind

Training a computer to decode mental images isn’t a new idea. It has been in the works for a few years now: researchers have been recreating movie clips, photos, and even dream imagery from brain activity since 2011. However, all previous systems have been limited in scope and ability. Some can only handle narrow domains like facial shape, while others can only rebuild images from preprogrammed images or categories (‘bird’, ‘cake’, ‘person’, and so on). Until now, all such technologies needed pre-existing data; they worked by matching a subject’s brain activity to activity recorded earlier while the subject was viewing images.

According to the researchers, their new algorithm can generate new, recognizable images from scratch. It works even with shapes that aren’t seen but only imagined.

It all starts with functional magnetic resonance imaging (fMRI), a technique that measures blood flow in the brain and uses that to gauge neural activity. The team mapped out three subjects’ visual processing areas down to a resolution of 2 millimeters. This scan was performed several times. During every scan, each of the three subjects was asked to look at over 1,000 pictures. These included a fish, an airplane, and simple colored shapes.

The team’s goal here was to understand the activity that comes as a response to seeing an image, and eventually have a computer program generate an image that would stir a similar response in the brain.

Here’s where the team started flexing their muscles. Instead of showing their subjects image after image until the computer got it right, the researchers used a deep neural network (DNN) with several layers of simple processing elements.

“We believe that a deep neural network is a good proxy for the brain’s hierarchical processing,” says Yukiyasu Kamitani, senior author of the study.

“By using a DNN we can extract information from different levels of the brain’s visual system [from simple light contrast up to more meaningful content such as faces].”

Through the use of a “decoder”, the team created representations of the brain’s responses to the images in the DNN. From then on, they no longer needed the fMRI measurements and worked with the DNN translations alone as templates.
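To make the “decoder” idea a bit more concrete, here is a minimal sketch of translating brain scans into DNN feature values. It assumes a plain linear decoder fitted by gradient descent, which is a stand-in and not necessarily what the team used. The data is synthetic, and every name in it (`fit_decoder`, `predict`, `scans`, `true_weights`) is hypothetical.

```python
import random

def predict(weights, voxels):
    """Decode one DNN feature value from a voxel pattern (plain dot product)."""
    return sum(w * v for w, v in zip(weights, voxels))

def fit_decoder(samples, targets, epochs=1000):
    """Fit linear decoder weights by gradient descent on half the squared error."""
    n_voxels = len(samples[0])
    weights = [0.0] * n_voxels
    # A step of 1 / (total squared signal) is small enough to never overshoot.
    step = 1.0 / sum(v * v for voxels in samples for v in voxels)
    for _ in range(epochs):
        grad = [0.0] * n_voxels
        for voxels, target in zip(samples, targets):
            error = predict(weights, voxels) - target
            for j, v in enumerate(voxels):
                grad[j] += error * v
        weights = [w - step * g for w, g in zip(weights, grad)]
    return weights

# Synthetic stand-in data: 40 "scans" of 5 "voxels" each (assumed, not real fMRI).
rng = random.Random(0)
true_weights = [0.5, -1.0, 2.0, 0.0, 1.5]
scans = [[rng.gauss(0.0, 1.0) for _ in range(5)] for _ in range(40)]
feature_values = [predict(true_weights, scan) for scan in scans]

decoder = fit_decoder(scans, feature_values)

# Once fitted, the decoder turns a new scan into a DNN-style feature value.
new_scan = [rng.gauss(0.0, 1.0) for _ in range(5)]
decoded = predict(decoder, new_scan)
```

A real decoder would predict many thousands of feature values from many thousands of noisy voxels, but the principle in this toy version is the same: learn the mapping once, then work with the decoded features as the template.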

Software teaching software

Image credits: Elisa Riva.

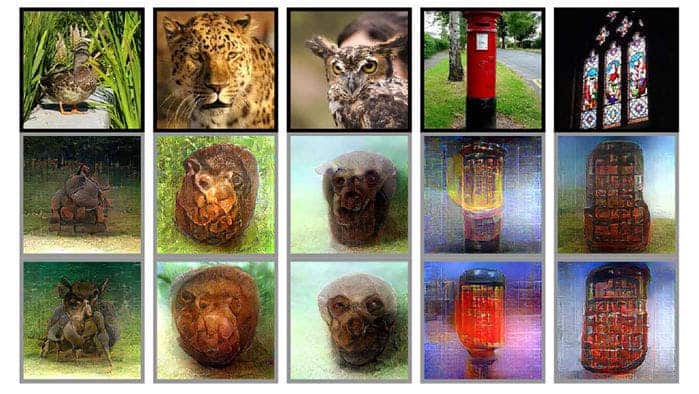

Next came an iterative process in which the system created images in an attempt to get the DNN to respond similarly to the desired templates, be they of an animal or a stained-glass window. It was a trial-and-error process in which the program started with neutral images (think TV static) and slowly refined them over the course of 200 rounds. To get an idea of how close it was to the desired image, the system measured the difference between the template and the DNN’s response to the generated picture. Such calculations allowed it to improve, pixel by pixel, towards the desired image.
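The refinement loop can be sketched in a few lines. This is a toy version under heavy assumptions: the “DNN” here is a single random linear layer (`toy_dnn`), the image is a list of 16 numbers, and the update rule is ordinary gradient descent. None of these names or sizes come from the paper; only the starting noise and the 200 rounds mirror the description above.

```python
import random

def features(weights, image):
    """Toy stand-in for a DNN layer: one linear projection per feature."""
    return [sum(w * p for w, p in zip(row, image)) for row in weights]

def reconstruct(weights, template, rounds=200, seed=0):
    """Refine a noise image until its features approach the template."""
    n_pixels = len(weights[0])
    noise = random.Random(seed)
    image = [noise.gauss(0.0, 1.0) for _ in range(n_pixels)]  # "TV static"
    # Step bounded by the total squared weight, so the loss never increases.
    step = 1.0 / sum(w * w for row in weights for w in row)
    losses = []
    for _ in range(rounds):
        residual = [f - t for f, t in zip(features(weights, image), template)]
        losses.append(sum(r * r for r in residual))
        for j in range(n_pixels):
            # Gradient of half the squared feature error for pixel j.
            gradient = sum(row[j] * r for row, r in zip(weights, residual))
            image[j] -= step * gradient
    final = [f - t for f, t in zip(features(weights, image), template)]
    losses.append(sum(r * r for r in final))
    return image, losses

# Hypothetical setup: 4 features, 16 pixels, and a hidden target image.
rng = random.Random(1)
toy_dnn = [[rng.gauss(0.0, 1.0) for _ in range(16)] for _ in range(4)]
target_image = [rng.random() for _ in range(16)]
template = features(toy_dnn, target_image)

image, losses = reconstruct(toy_dnn, template)
```

Note that matching the features does not guarantee matching the pixels: in this toy setup, many different images produce the same four feature values, which is one reason extra constraints on what counts as a plausible image are useful.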

To increase the accuracy of the final images, the team included a “deep generator network” (DGN), an algorithm that had been pre-trained to create realistic images from raw input. The DGN was, in essence, the one that put the finishing details on the images to make them look more natural.

After the DGN touched up the pictures, a neutral human observer was asked to rate the work. The observer was shown two images and asked which one a given reconstruction was meant to recreate. The authors report that the human observer was able to pick the correct image 99% of the time.
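The same two-way test can be automated by letting a similarity score play the role of the observer. The sketch below assumes pixel-wise Pearson correlation as that score, and the tiny four-pixel “images” are made up for illustration; it is a machine analogue of the test, not the human rating procedure itself.

```python
def correlation(a, b):
    """Pearson correlation between two equal-length pixel lists."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / (var_a * var_b) ** 0.5

def pairwise_accuracy(reconstructions, originals):
    """Fraction of two-way choices where the true original beats a distractor."""
    correct = total = 0
    for i, recon in enumerate(reconstructions):
        for j, distractor in enumerate(originals):
            if i == j:
                continue  # an image cannot be its own distractor
            total += 1
            if correlation(recon, originals[i]) > correlation(recon, distractor):
                correct += 1
    return correct / total

# Made-up data: three originals and slightly noisy reconstructions of each.
originals = [[0, 0, 1, 1], [1, 0, 1, 0], [1, 1, 0, 0]]
reconstructions = [
    [0.1, 0.0, 0.9, 0.8],
    [0.9, 0.2, 0.8, 0.1],
    [0.7, 0.9, 0.2, 0.1],
]

accuracy = pairwise_accuracy(reconstructions, originals)
```

Chance level in a two-way choice is 50%, so scores are best read against that baseline rather than against zero.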

The next step was to integrate all this work with the ‘mind-reading’ bit of the process. The team asked three subjects to recall the images that had been previously displayed to them and scanned their brains as they did so. It got a bit tricky at this point, but the results are still exciting: the method didn’t work well for photos, but for the shapes, the generator created a recognizable image 83% of the time.

It’s important to note that the team’s work seems very tidy and carefully executed. It’s possible that their system actually works really well, and the bottleneck isn’t in the software but in our ability to measure brain activity. We’ll have to wait for better fMRI and other brain imaging techniques to come along before we can tell, however.

In the meantime, I get to enjoy my long-held dream of having a pen that can write or draw anything in my drowsy mind as I’m lying half-asleep in bed. And, to an equal extent, ponder the immense consequences such tech will have on humanity, both for good and evil.

The paper “Deep image reconstruction from human brain activity” has been published on the preprint server bioRxiv.