If you also dislike fake news, you should probably find a mirror and put on a stern look. A new study found that people unconsciously twist information on controversial topics to better fit widely held beliefs.

In one study, people were shown figures indicating that the number of Mexican immigrants in the U.S. has been declining for a few years now, which is true but runs contrary to what the general public believes. When asked later on, they tended to remember the exact opposite. Furthermore, such distortions of the facts tended to get progressively worse as people passed the (wrong) information along.

Don’t believe everything you think

“People can self-generate their own misinformation. It doesn’t all come from external sources,” said Jason Coronel, lead author of the study and assistant professor of communication at Ohio State University.

“They may not be doing it purposely, but their own biases can lead them astray. And the problem becomes larger when they share their self-generated misinformation with others.”

The team conducted two studies for their research. In the first one, they had 110 participants read short descriptions of four societal issues that could be quantified numerically. The general consensus on these issues was established with pre-tests. Data for two of them fit in with the broad societal view: for example, many people expect more Americans to support same-sex marriage than oppose it, and public opinion polls seem to indicate that this is true.

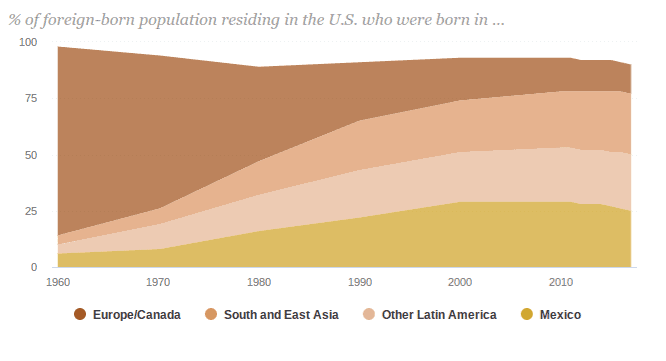

However, the team also used two topics where the facts don’t match up to the public’s perception. For example, the number of Mexican immigrants living in the U.S. fell from 12.8 million to 11.7 million between 2007 and 2014, but most people in the U.S. believe the number kept growing.

After reading the descriptions, the participants were asked to write down the numbers given (they weren’t informed of this step at the beginning of the test). For the first two issues (those consistent with public perception), the participants kept the relationship true, even if they didn’t remember the exact numbers. For example, they wrote a larger number for the percentage of people supporting same-sex marriage than for those who oppose it.

For the other two topics, however, they flipped the relationship around to make the facts align with their “probable biases” (i.e., popular perception of the issue). The team also used eye-tracking technology to track participants’ attention as they read the descriptions.

“We had instances where participants got the numbers exactly correct—11.7 and 12.8—but they would flip them around,” Coronel said. “They weren’t guessing—they got the numbers right. But their biases were leading them to misremember the direction they were going.”

“We could tell when participants got to numbers that didn’t fit their expectations. Their eyes went back and forth between the numbers, as if they were asking ‘what’s going on.’ They generally didn’t do that when the numbers confirmed their expectations,” Coronel said.

For the second study, participants took part in a process modeled on the game of telephone. The first person in a telephone chain would see the accurate statistics about the number of Mexican immigrants living in the United States. They then had to write those numbers down from memory and pass them along to the second person in the chain, and so on. The team reports that the first person tended to flip the numbers, stating that the number of Mexican immigrants increased by 900,000 from 2007 to 2014 (it actually decreased by about 1.1 million). By the end of the chain, the average participant had said the number of Mexican immigrants increased by about 4.6 million over those seven years.

“These memory errors tended to get bigger and bigger as they were transmitted between people,” said Matthew Sweitzer, a doctoral student in communication at Ohio State and co-author of the study.

Coronel said the study did have limitations. It’s possible that the participants would have remembered the numbers better if the team had explained why they didn’t match expectations. Furthermore, the researchers didn’t measure each participant’s biases going into the tests. Finally, the telephone game study did not capture important features of real-life conversations that may have limited the spread of misinformation. However, it does showcase the mechanisms in our own minds that can spread misinformation.

“We need to realize that internal sources of misinformation can possibly be as significant as or more significant than external sources,” said Shannon Poulsen, also a doctoral student in communication at Ohio State and co-author of the study. “We live with our biases all day, but we only come into contact with false information occasionally.”

The paper “Investigating the generation and spread of numerical misinformation: A combined eye movement monitoring and social transmission approach” has been published in the journal Human Communication Research.