In his book “Do Androids Dream of Electric Sheep?”, Philip K. Dick, one of my favorite writers, explores what sets humans apart from androids. The theme is more relevant today than it ever was, considering the great leaps in artificial intelligence coming out of major tech labs around the world, like Google’s. Take for instance how the company employs advanced artificial neural networks to zap through a gazillion images, interpret them and return the one you’re looking for when you run a query in the search engine. Though nothing like a human brain, the network uses 10-30 stacked layers of artificial neurons, with each layer doing its job in sequence, so that an “answer” emerges by the time the final output layer is finished. While not dead-on, the network seems to return better results than anything we’ve seen before and, as a by-product, it can also “dream.” These artificial dreams produce some fascinating images, to say the least, going from virtually nothing (white noise) to something that looks like it came out of a surrealist painting. Who says computers can’t be creative?

Engineers at Google were gracious enough to explain how the AI works in a recent blog post, where they also shared some images that show how Google’s neural network “sees” or “dreams.”

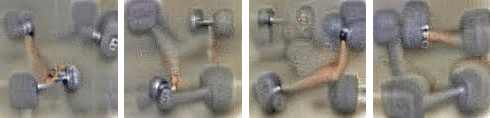

Before it can identify objects on its own, the neural network is trained to understand what those objects are. By feeding it millions of photos, researchers teach the network what a fork is, for instance. The network learns that an object with a handle and 2-4 tines is likely a fork, without getting too caught up in details like colour, shape or size. Once trained, it passes an image through its layers one by one, each layer analyzing something different or amplifying a particular feature, until it can identify that fork. Along the way, the AI can fail, though. So, to know whether it’s doing a good job, or to learn which layer isn’t working as it’s supposed to, it helps to visualize the network’s representation of a fork. In the image below, you can see what the AI thought a dumbbell looked like.

As you can see, the AI can’t extract the essence of what a dumbbell is without attaching some human biceps or muscles to the picture as well. That’s probably because most of the dumbbell images it’s been spoon-fed featured people lifting the weights. By visualizing these mishaps, the engineers themselves learn how their system works (it’s so complex that, even though they designed it, the AI can sometimes seem to have a mind of its own) and can rebuild it to better fit their expectations.

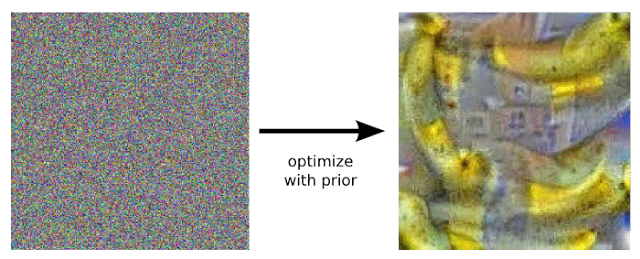

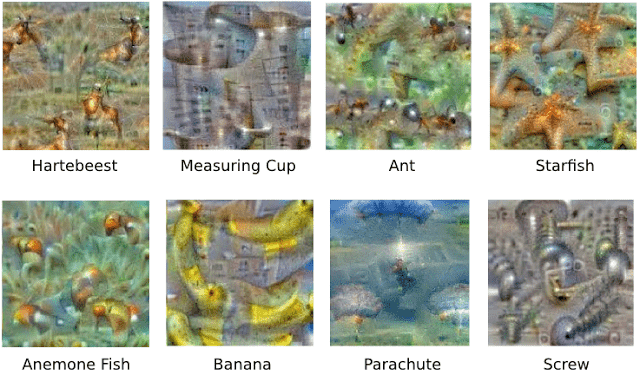

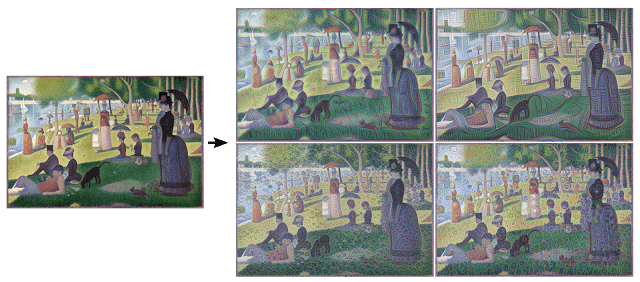

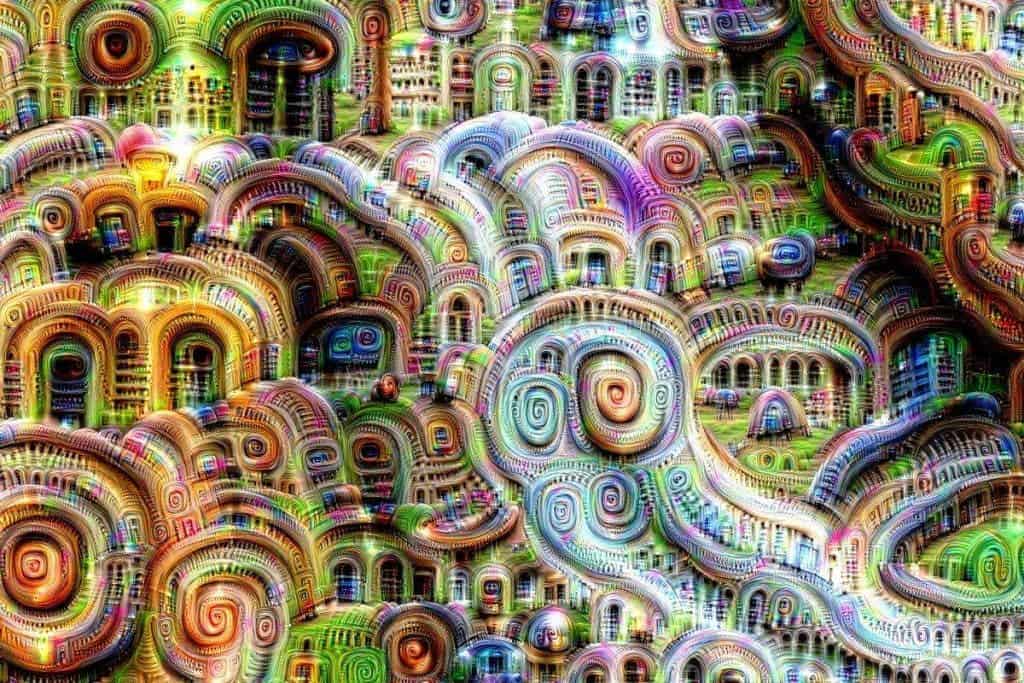

Typically, the first layer looks for edges and corners by analyzing contrast; intermediate layers look for basic features like a door or a leaf; the final layer assembles all of this into a complete interpretation. Another approach Google uses to understand exactly what goes on at each layer is to turn the network upside down and ask the AI to enhance an input image in a way that elicits a particular interpretation. For instance, the engineers asked the neural network what it thinks an image of a banana should look like, starting from nothing but random noise. In the blog post, they write that this “doesn’t work very well, but it does if we impose a prior constraint that the image should have similar statistics to natural images, such as neighboring pixels needing to be correlated.”
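To get a feel for why that prior matters, here is a minimal NumPy sketch. It is not Google's code: the "network" is a hypothetical stand-in that only looks at a coarse, block-averaged view of the image, and names like `pool`, `score_grad` and `synthesize` are made up for illustration. Starting from random noise, gradient ascent pushes the image toward whatever maximizes the toy score, with or without a penalty on differences between neighboring pixels.

```python
import numpy as np

K = 4  # side length of each block the toy "network" averages over (assumption)

def pool(img, k=K):
    """Average the image over non-overlapping k x k blocks (the coarse view)."""
    h, w = img.shape
    return img.reshape(h // k, k, w // k, k).mean(axis=(1, 3))

def score_grad(img, target, k=K):
    """Gradient of the toy score -0.5 * k*k * sum((pool(img) - target)**2)."""
    return np.kron(target - pool(img, k), np.ones((k, k)))

def roughness(img):
    """Half the sum of squared differences between neighboring pixels."""
    return 0.5 * (np.sum(np.diff(img, axis=0) ** 2) + np.sum(np.diff(img, axis=1) ** 2))

def smoothness_grad(img):
    """Gradient of -roughness(img): pulls every pixel toward its neighbors."""
    g = np.zeros_like(img)
    dy = np.diff(img, axis=0)
    dx = np.diff(img, axis=1)
    g[:-1, :] += dy
    g[1:, :] -= dy
    g[:, :-1] += dx
    g[:, 1:] -= dx
    return g

def synthesize(target, prior_weight, steps=300, lr=0.1, seed=0):
    """Gradient ascent from random noise on score - prior_weight * roughness."""
    rng = np.random.default_rng(seed)
    img = rng.normal(size=(target.shape[0] * K, target.shape[1] * K))
    for _ in range(steps):
        img += lr * (score_grad(img, target) + prior_weight * smoothness_grad(img))
    return img

# Hypothetical "class": a bright blob in the middle of the coarse view.
target = np.zeros((4, 4))
target[1:3, 1:3] = 1.0

plain = synthesize(target, prior_weight=0.0)   # no prior: noise survives
smooth = synthesize(target, prior_weight=1.0)  # neighboring pixels correlated

print("roughness without prior:", roughness(plain))
print("roughness with prior:   ", roughness(smooth))
```

In this toy setup, the run without the prior satisfies the "network" perfectly yet still looks like static, because the score never sees the fine-grained noise. The run with the prior ends up as a smooth blob. A real network is far more complicated, but the intuition is similar.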

Here are some other examples across different classes.

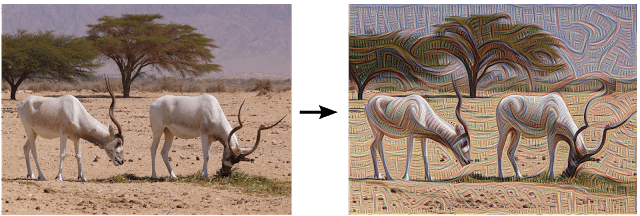

As mentioned earlier, each layer of the Google AI amplifies a certain feature. Here are some images that show how the AI amplifies features at a certain layer, for both photos and drawings or paintings.

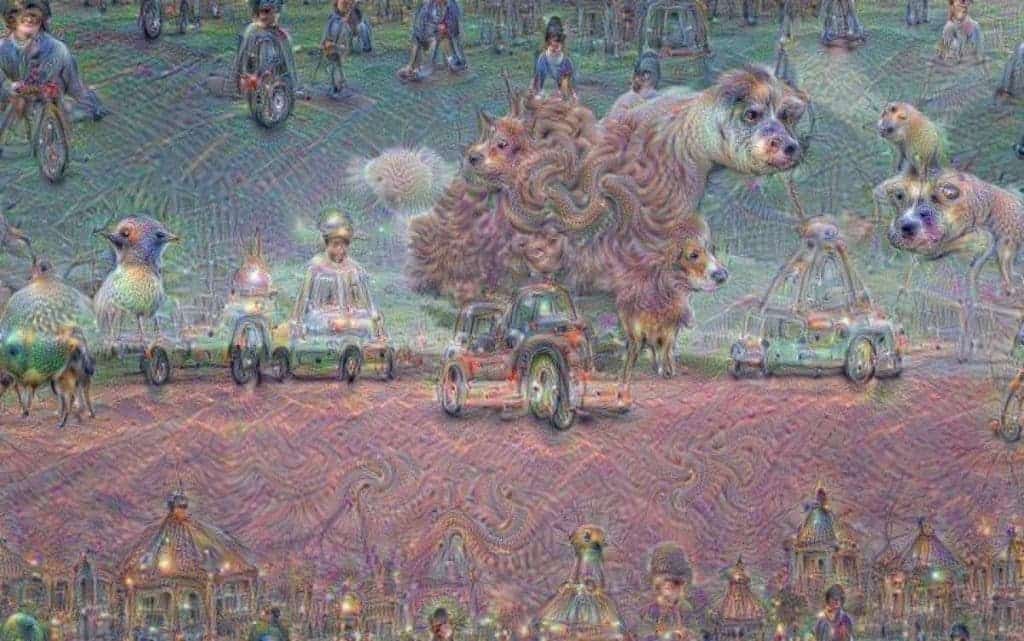

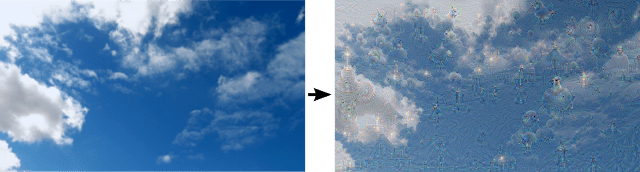

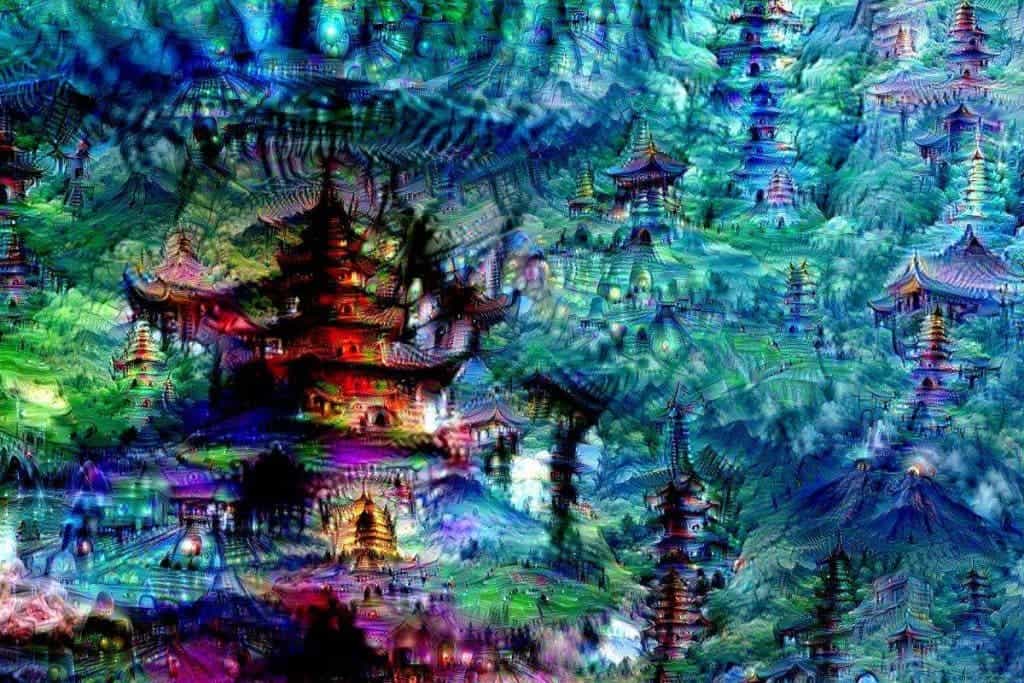

It gets far more interesting when you move farther up the neural layers, where the AI starts to interpret things at a more abstract level. Here’s what happened when the researchers told the AI “whatever you see there, I want more of it!”

This one I found really fascinating. You can see a lot of patterns and things popping up when you look at clouds in the sky: a country’s map, ducks, a woman’s breasts. The possibilities are endless. That’s because the human brain is great at finding and fitting patterns. This is how we’re able to make sense of the world and, to a great extent, that’s what makes us such a successful species. Of course, sometimes we confuse the patterns we see with reality. That’s why some people see things that look like pyramids on Mars and think intelligent Martian aliens are real. In the image above, the Google neural network interpreted some features as birds, amplified them and then started “seeing” birds everywhere. Here are some more interesting high abstractions.

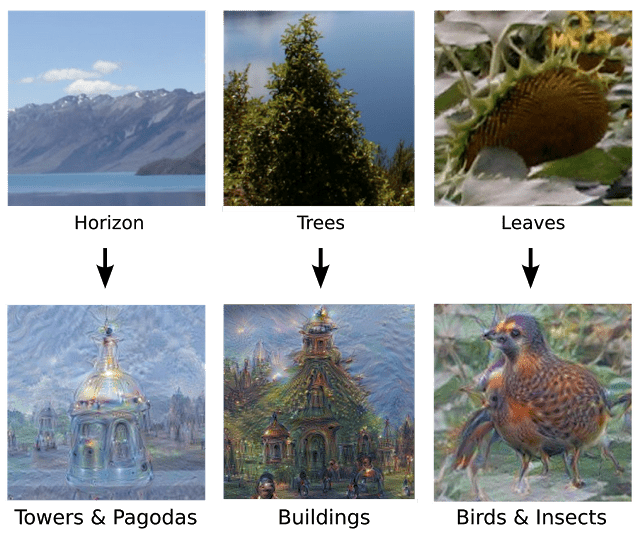
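The “whatever you see there, I want more of it” instruction can be read as: nudge the image so that the chosen layer’s activations get stronger. Below is a rough sketch of that idea, assuming a single made-up layer (random weights `W` followed by a ReLU) instead of a real trained network; the function name `amplify` and all the numbers are illustrative only.

```python
import numpy as np

def relu(z):
    return np.maximum(z, 0.0)

def amplify(x, W, steps=20, step_size=0.1):
    """Gradient ascent on 0.5 * ||relu(W @ x)||**2 with respect to the input x."""
    x = x.copy()
    for _ in range(steps):
        a = relu(W @ x)                  # what the layer currently "sees"
        grad = W.T @ a                   # direction that strengthens active features
        norm = np.linalg.norm(grad)
        if norm == 0:                    # nothing is active, nothing to amplify
            break
        x += step_size * grad / norm     # fixed-length step along the gradient
    return x

rng = np.random.default_rng(0)
W = rng.normal(size=(8, 32))             # 8 hypothetical feature detectors
# A flattened toy "image": faint noise plus a weak hint of feature 3.
x0 = 0.01 * rng.normal(size=32) + 0.05 * W[3]

before = relu(W @ x0)
after = relu(W @ amplify(x0, W))
print("activation strength before:", np.linalg.norm(before))
print("activation strength after: ", np.linalg.norm(after))
```

Whatever the layer already responds to gets reinforced at every step, which is one way to picture how a faint bird-like shape can turn into birds everywhere.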

Like humans, the Google neural network is also biased towards certain features because of the kind of images it’s been fed. Most of those images show animals, buildings, landmarks and so on. Naturally, it finds these features even when they aren’t present.

The Google researchers also got a bit creative themselves and instructed the network to take the final image interpretation it produced and use it as the new picture to process. This renders an endless stream of new impressions.
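That feedback loop is simple to express in code. The sketch below assumes a placeholder `toy_dream_step` that merely exaggerates contrast; in the real system, that step would be a full pass through the neural network. The generator `dream_stream` is likewise a hypothetical name.

```python
import itertools
import numpy as np

def toy_dream_step(img):
    """Placeholder for one network pass: exaggerate deviations from the mean."""
    out = img + 0.5 * (img - img.mean())
    return np.clip(out, 0.0, 1.0)

def dream_stream(img, step=toy_dream_step):
    """Yield an endless sequence of frames, each produced from the previous one."""
    while True:
        img = step(img)
        yield img

rng = np.random.default_rng(0)
start = rng.uniform(0.4, 0.6, size=(8, 8))   # a nearly flat grey toy image

# The stream never ends on its own, so take only the first five frames.
frames = list(itertools.islice(dream_stream(start), 5))
print("contrast at start:", start.std())
print("contrast after 5 passes:", frames[-1].std())
```

Because each output becomes the next input, small quirks compound from frame to frame, which is why the stream keeps drifting into new territory.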

Ultimately, these sorts of interpretations, while incredibly fun to witness, are very important. They help Google “understand and visualize how neural networks are able to carry out difficult classification tasks, improve network architecture, and check what the network has learned during training.” But the same process could also help humans better understand their own creative process. Nobody knows exactly what human creativity is. Is it inherent to humans, or can a machine also be creative? Google’s neural network, along with other examples like this extremely witty chatbot, hints at the latter. I don’t know about you, but that sounds both amazing and scary at the same time.