Eyes are said to be the window to the soul, but according to Google engineers, they’re also the window to your health.

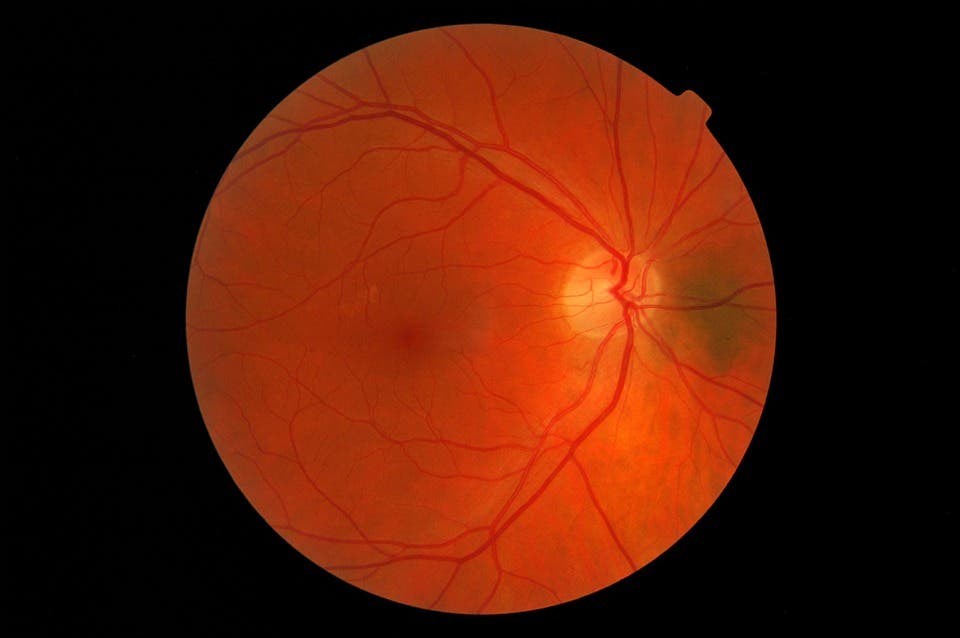

The engineers wanted to see whether they could determine some cardiovascular risks simply by looking at a picture of someone’s retina. They developed a convolutional neural network, a feed-forward algorithm commonly used in image analysis and inspired by biological processes, especially the connectivity pattern between neurons.

This type of artificial intelligence (AI) analyzes an image by sliding small learned filters across it to pick up local features such as edges and textures, then combining those features layer by layer into an understanding of the image as a whole.
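The core operation in such a network is the convolution: a small filter slides across the image and produces a score at each position. The snippet below is a minimal sketch of that one step in plain Python, not the model the Google team built. The toy image, the hand-picked filter, and the function name `convolve2d` are all illustrative assumptions; a real network learns thousands of filters from data.

```python
def convolve2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the feature map.

    Like most deep-learning libraries, this computes a cross-correlation:
    the kernel is not flipped before it is applied.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Weighted sum of the patch whose top-left corner is (i, j).
            total = 0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        feature_map.append(row)
    return feature_map


# Toy 4x4 "image": dark on the left (0), bright on the right (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hand-picked filter that responds to a dark-to-bright vertical edge.
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = convolve2d(image, kernel)
print(feature_map)  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The filter scores highest in the middle column, exactly where the dark-to-bright edge sits. Stacking many such filters, layer upon layer, is what lets a network move from edges to structures such as blood vessels.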

The approach has become quite popular in recent years, especially as Facebook and other tech giants began developing face-recognition software. Scientists have long proposed that this type of network could be used in other fields, but because of the processing complexity involved, progress has been slow. The fact that such algorithms can be applied to biology (and human biology, at that) is astonishing.

“It was unrealistic to apply machine learning to many areas of biology before,” says Philip Nelson, a director of engineering at Google Research in Mountain View, California. “Now you can — but even more exciting, machines can now see things that humans might not have seen before.”

Observing and quantifying associations in images can be difficult because of the wide variety of features, patterns, colors, values, and shapes in real data. In this case, Ryan Poplin, Machine Learning Technical Lead at Google, used AI trained on data from 284,335 patients. He and his colleagues then tested their neural network on two independent datasets of 12,026 and 999 patients, respectively. They were able to predict age (to within 3.26 years on average) and, within an acceptable margin, gender, smoking status, systolic blood pressure, and major adverse cardiac events. The researchers say the results were similar to those of the European SCORE system, a risk calculator that relies on a blood test.
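The age figure is reported in the paper as a mean absolute error: the average gap between predicted and true age, ignoring whether the guess was too high or too low. As a rough illustration, here is how that metric is computed; the ages below are made up for the example and are not taken from the study.

```python
def mean_absolute_error(actual, predicted):
    """Average of |actual - predicted| over all pairs."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


# Hypothetical ages, invented purely for illustration.
actual_ages = [52, 61, 47, 70]
predicted_ages = [55, 58, 49, 66]

mae = mean_absolute_error(actual_ages, predicted_ages)
print(mae)  # 3.0
```

An error of 3.0 years here means the invented predictions miss by three years on average, the same kind of statement the 3.26-year figure makes about the real model.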

To make things even more interesting, the algorithm uses distinct aspects of the anatomy, such as the optic disc or blood vessels, to generate each prediction. This means that, in time, each individual detection pattern can be improved and tailored for a specific purpose. Also, a data set of almost 300,000 patients is relatively small for a neural network, so feeding more data into the algorithm would very likely improve it.

Doctors today rely heavily on blood tests to determine cardiovascular risks, so a non-invasive alternative could save considerable cost and time while making visits to the doctor less unpleasant. Of course, for Google (or rather Google’s parent company, Alphabet), such an algorithm would be a significant achievement, and a potentially profitable one at that.

It’s not the first time Google engineers have dipped their toes into this type of technology: one of the authors, Lily Peng, published another paper last year in which she used AI to detect blindness associated with diabetes.

Journal Reference: Ryan Poplin et al. Predicting Cardiovascular Risk Factors from Retinal Fundus Photographs using Deep Learning. arXiv:1708.09843