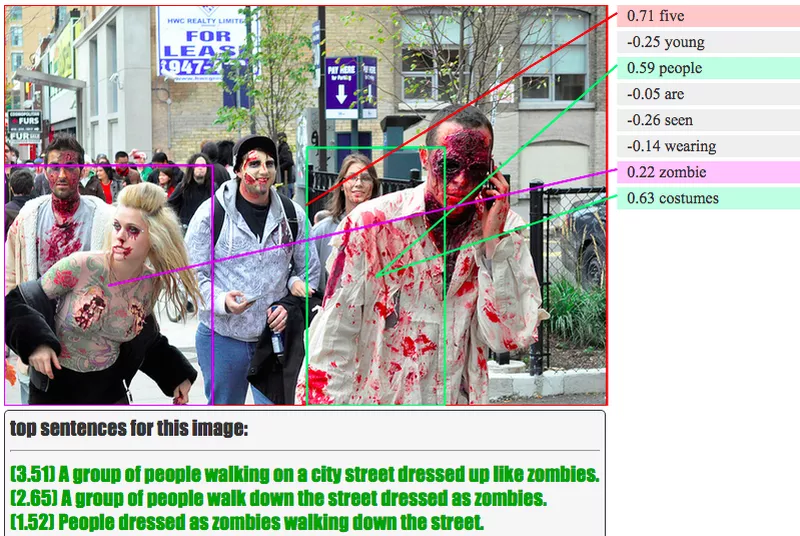

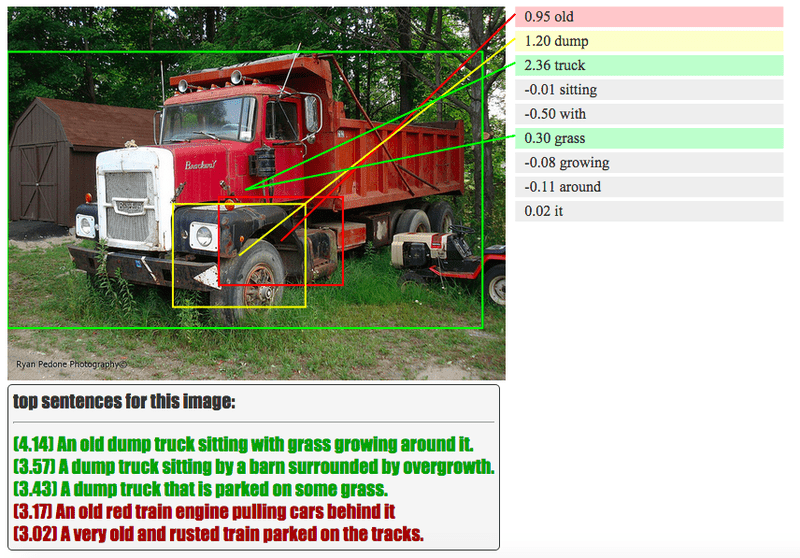

Facial recognition and motion tracking are already old news. The next level is describing what you do or what’s going on: for now, only in still pictures. Meet NeuralTalk, a deep learning image-processing algorithm developed by Stanford engineers that uses processes similar to those of the human brain to decipher and interpret photos. The software can easily describe, for instance, a group of people dressed up as zombies. It’s remarkably effective and freaking creepy at the same time.

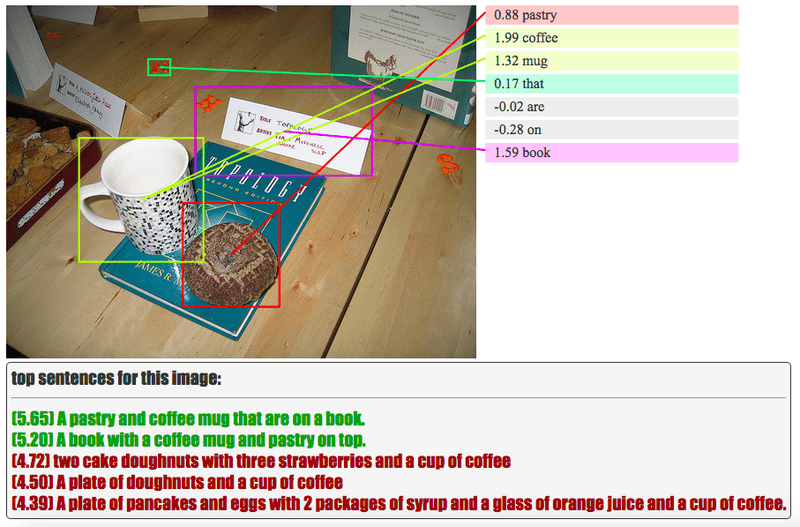

A while ago, ZME Science wrote about Google’s amazing neural networks and their inner workings. The network uses stacks of 10 to 30 layers of artificial neurons to dissect images and interpret them at a seemingly cognitive level. Like a child, the neural network first learns, for instance, what a book looks like and what it means, then uses this information to identify books in other pictures, no matter their shape, size or colour. It’s next-level image processing, and with each Google image query the software gets better.
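To make “stacks of layers” a bit more concrete, here is a toy Python sketch of how numbers flow through stacked layers of artificial neurons. It is not Google’s network: the two tiny layers and their weights are made up purely for illustration, while a real image network learns millions of weights and uses specialised (convolutional) layers. Each layer computes weighted sums of its inputs and passes the result through a simple non-linearity (ReLU) before handing it to the next layer.

```python
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]
Layer = Tuple[Matrix, Vector]  # (weights, biases) for one layer


def relu(v: Vector) -> Vector:
    # Non-linearity: negative activations are clipped to zero.
    return [max(0.0, x) for x in v]


def dense(weights: Matrix, bias: Vector, x: Vector) -> Vector:
    # Each output neuron is a weighted sum of all inputs plus its bias.
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def forward(layers: List[Layer], x: Vector) -> Vector:
    # Feed the input through every layer in turn; for simplicity this toy
    # applies ReLU after every layer, including the last one.
    for weights, bias in layers:
        x = relu(dense(weights, bias, x))
    return x


# Hypothetical, hand-picked weights: 2 inputs -> 2 neurons -> 1 neuron.
toy_layers: List[Layer] = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0]),
    ([[1.0, 1.0]], [-0.5]),
]

print(forward(toy_layers, [2.0, 1.0]))  # prints [2.0]
```

Stacking more layers just means a longer `toy_layers` list; in a trained network the early layers tend to respond to simple patterns like edges, and later layers combine them into larger shapes and objects.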

Working in a similar vein, NeuralTalk also employs a neural network to analyze images, only it also returns a sentence describing the gist of the image. And it’s eerily accurate.
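Roughly speaking, NeuralTalk pairs a convolutional network that “reads” the image with a recurrent network that writes the caption one word at a time. The toy Python sketch below illustrates only that last, word-by-word step, using greedy decoding, the simplest strategy: at each step, pick the highest-scoring next word until an end token appears. The names `NEXT_WORD_SCORES` and `greedy_caption` are hypothetical, and the score table is invented; it stands in for the scores a trained network might produce for one particular photo of a cat watching a laptop screen.

```python
from typing import Dict, List

# Hypothetical next-word scores: previous word -> {candidate next word: score}.
# In a real captioning model these scores come from a recurrent network
# conditioned on image features; here they are hard-coded for one imaginary photo.
NEXT_WORD_SCORES: Dict[str, Dict[str, float]] = {
    "<start>": {"a": 0.9, "the": 0.1},
    "a": {"cat": 0.7, "laptop": 0.3},
    "cat": {"looks": 0.8, "<end>": 0.2},
    "looks": {"at": 0.95, "<end>": 0.05},
    "at": {"a": 0.4, "the": 0.6},
    "the": {"screen": 0.9, "cat": 0.1},
    "screen": {"<end>": 1.0},
    "laptop": {"<end>": 1.0},
}


def greedy_caption(scores: Dict[str, Dict[str, float]], max_len: int = 10) -> List[str]:
    """Build a caption by always taking the single most likely next word."""
    words: List[str] = []
    prev = "<start>"
    for _ in range(max_len):  # cap the length in case <end> is never chosen
        candidates = scores[prev]
        nxt = max(candidates, key=candidates.get)
        if nxt == "<end>":
            break
        words.append(nxt)
        prev = nxt
    return words


print(" ".join(greedy_caption(NEXT_WORD_SCORES)))  # prints "a cat looks at the screen"
```

Greedy decoding can commit to an early word and miss a better overall sentence, which is one way a captioner can end up confidently describing a donut as a blow dryer; real systems often use beam search, which keeps several candidate sentences alive at once.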

Fei-Fei Li, director of the Stanford Artificial Intelligence Laboratory and a co-author of the published study, says NeuralTalk works similarly to the human brain. “I consider the pixel data in images and video to be the dark matter of the Internet,” Li told The New York Times last year. “We are now starting to illuminate it.”

A computer just captioned this as “man using his laptop while his cat looks at the screen” http://t.co/bfwr1wiiFn pic.twitter.com/1F18NCwVf9

— Tim McNamara (@timClicks) July 11, 2015

It’s not quite perfect, though. According to The Verge, a fully grown woman gingerly holding a huge donut is tagged as “a little girl holding a blow dryer next to her head,” while an inquisitive giraffe is mislabeled as a dog looking out of a window. But we’re only seeing the first steps of an infant technology with incredible transformative potential. Tasks that currently require human attention could be handed over to an equally effective algorithm, saving hundreds of thousands of collective man-hours. For instance, Google Maps previously relied on teams of employees who checked every address for accuracy. When Google Brain came online, it transcribed Street View data from France in under an hour.
