The human brain is possibly the most complex entity in the Universe. It’s remarkable and beautiful to contemplate, and the things we are capable of because of our brains are outstanding. Even though most people might seem like they’re using their brains trivially, the truth is that the brain is incredibly complex. Let’s look at the technicalities alone: the human brain is packed with some 100 billion nerve cells, and each neuron is simultaneously connected with another 1,000 or so. In total, the brain performs some 20 million billion calculations per second.
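For readers who want to see where a figure like that can come from, here is a back-of-the-envelope sketch in Python. The neuron and connection counts are the ones quoted above. The rate of 200 signals per second per connection is an assumption that is not stated in the article; it is chosen only because it makes the product land on the quoted total.

```python
# Figures quoted in the article.
NEURONS = 100_000_000_000        # about 100 billion nerve cells
CONNECTIONS_PER_NEURON = 1_000   # each neuron engages roughly 1,000 others

# ASSUMPTION (not from the article): each connection handles
# about 200 signals per second.
SIGNALS_PER_SECOND = 200


def total_connections(neurons=NEURONS, per_neuron=CONNECTIONS_PER_NEURON):
    """Rough count of neuron-to-neuron connections."""
    return neurons * per_neuron


def operations_per_second(rate=SIGNALS_PER_SECOND):
    """Rough count of signalling events per second across all connections."""
    return total_connections() * rate


print(f"{total_connections():,} connections")
print(f"{operations_per_second():,} operations per second")
```

With these inputs the estimate comes out at 20 million billion operations per second. Change the assumed rate and the total changes with it, so treat the number as an order of magnitude rather than a measurement.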

The unmatched computational strength of the human brain

That’s quite impressive. Some people think that just because they can’t add, multiply or differentiate an equation in a heartbeat like a computer does, the computer is ‘smarter’ than them. That couldn’t be farther from the truth. That machine can only do that – compute. Ask your scientific hand calculator to make you breakfast, write a novel or dig a hole. You can design a super scientific calculator with grippable limbs and program it to grab a shovel and dig – it will probably succeed, but it will then hit yet another limitation, since that’s all it has been designed to do – it doesn’t ‘think’ for itself. Imagine this: if you were to combine the whole computing power on our planet – virtually combine all the CPUs in the world – only then would you be able to reach the computing speed of a human brain. Building a machine similar to the human brain, by today’s sequential computational standards, would thus cost an enormous amount of money and energy. To cool such a machine you’d need to divert a whole river! In contrast, an adult human brain consumes only about 20 watts of power!
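To put the efficiency claim in numbers, the short sketch below divides the article’s calculation rate by the brain’s 20-watt power draw. Both inputs are the article’s own rough figures, and the function name is made up for this illustration.

```python
# Rough figures quoted in the article.
BRAIN_OPS_PER_SECOND = 2e16   # "20 million billion calculations per second"
BRAIN_POWER_WATTS = 20.0      # one watt is one joule per second


def operations_per_joule(ops_per_second, power_watts):
    """How many operations a system gets out of one joule of energy."""
    if power_watts <= 0:
        raise ValueError("power_watts must be positive")
    return ops_per_second / power_watts


brain_efficiency = operations_per_joule(BRAIN_OPS_PER_SECOND, BRAIN_POWER_WATTS)
print(f"{brain_efficiency:.0e} operations per joule")
```

On these figures the brain manages about a million billion operations for every joule it burns. The same function can be fed the specifications of any machine for comparison.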

Mimicking the computing power of the brain, the most complex computational ‘device’ in the Universe, is a priority for computer scientists and artificial intelligence enthusiasts. But we’re just beginning to learn how the brain works and what lies within its deepest recesses, and the challenges are numerous.

A new step forward in this direction has been made by scientists at the Harvard School of Engineering and Applied Sciences (SEAS) who reportedly built a transistor that behaves like a neuron, in some respects at least.

The brain is extremely plastic as it creates a coherent interpretation of the external world based on input from its sensory system. It’s always changing and highly adaptable. In fact, some neurons or whole brain regions can switch functions when needed, a fact attested by various medical cases involving severe trauma. One should remember a fellow named Phineas Gage. Gage worked in construction during the railroad boom of the mid-19th century. A freak accident propelled a large iron rod directly through his skull, destroying much of his brain’s left frontal lobe. He survived and lived for many years afterward, though his personality was severely altered – the prime example at the time that personality is deeply intertwined with the brain. What it also demonstrates, however, is that key brain functions were diverted to other parts of the brain.

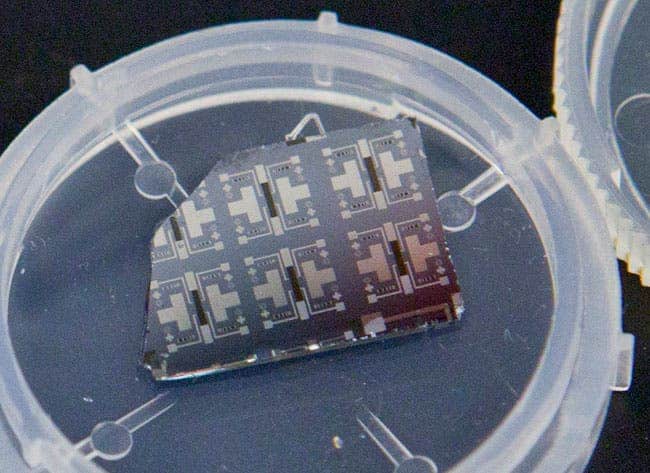

A synaptic transistor

So how do you mimic this amazing plasticity? Well, if you want a chip that behaves like a human brain, you first need its constituent elements to behave like the constituent elements of the brain: transistors standing in for neurons. A transistor in some ways behaves like a synapse, acting as a signal gate. When two neurons are connected (they’re never in direct contact!), electrochemical reactions involving neurotransmitters relay specific signals across the gap.

In a real synapse, calcium ions induce chemical signaling. The Harvard transistor instead uses oxygen ions, embedded in an 80-nanometer-thick layer of samarium nickelate crystal, which is the analog of the synapse channel. When a voltage is applied to the crystal, oxygen ions slip through, changing the conductive properties of the lattice and altering its ability to relay signals.

The strength of the connection depends on the time delay in the electric signal fed into it. It’s the same for real synapses, which get stronger as they relay more signals. Exploiting unusual properties in modern materials, the synaptic transistor could mark the beginning of a new kind of artificial intelligence: one embedded not in smart algorithms but in the very architecture of a computer.

“There’s extraordinary interest in building energy-efficient electronics these days,” says principal investigator Shriram Ramanathan, associate professor of materials science at Harvard SEAS. “Historically, people have been focused on speed, but with speed comes the penalty of power dissipation. With electronics becoming more and more powerful and ubiquitous, you could have a huge impact by cutting down the amount of energy they consume.”

“The transistor we’ve demonstrated is really an analog to the synapse in our brains,” says co-lead author Jian Shi, a postdoctoral fellow at SEAS. “Each time a neuron initiates an action and another neuron reacts, the synapse between them increases the strength of its connection. And the faster the neurons spike each time, the stronger the synaptic connection. Essentially, it memorizes the action between the neurons.”
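The learning rule Shi describes can be sketched as a toy software model. To be clear, this is not the Harvard device’s physics or the team’s own method: it is a simplified, textbook-style timing rule, and every name and number in it (`ToySynapse`, `weight_change`, the 20-millisecond time constant, the starting weight) is a hypothetical choice made for illustration. It only models strengthening; weakening is left out.

```python
import math


def weight_change(delay_ms, max_change=0.1, time_constant_ms=20.0):
    """Gain from one pair of spikes: the shorter the delay, the larger the gain.

    All parameter values are illustrative assumptions, not measured data.
    """
    if delay_ms < 0:
        raise ValueError("delay_ms must be non-negative")
    return max_change * math.exp(-delay_ms / time_constant_ms)


class ToySynapse:
    """A connection whose strength is an analog value between 0 and 1."""

    def __init__(self, weight=0.2):
        self.weight = weight

    def pair(self, delay_ms):
        """Register one spike pair and strengthen the connection, capped at 1."""
        self.weight = min(1.0, self.weight + weight_change(delay_ms))
        return self.weight


# Two synapses see the same number of spike pairs, but with different delays.
fast = ToySynapse()
slow = ToySynapse()
for _ in range(5):
    fast.pair(delay_ms=2.0)    # the second neuron reacts quickly
    slow.pair(delay_ms=40.0)   # the second neuron reacts slowly

print(f"fast: {fast.weight:.3f}, slow: {slow.weight:.3f}")
```

After the same five spike pairs, the quickly reacting pair ends up with the stronger connection. The weight is also a continuous value rather than a one or a zero.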

So it does in fact run a bit like a neuron, in the sense that it adapts, strengthening and weakening connections according to external stimuli. Also, unlike traditional transistors, the Harvard creation isn’t restricted to the binary system of ones and zeros and, interestingly enough, is non-volatile, which means that even when power is interrupted, the device remembers its state. Still, it can’t form new connections like a human neuron can.

“We exploit the extreme sensitivity of this material,” says Ramanathan. “A very small excitation allows you to get a large signal, so the input energy required to drive this switching is potentially very small. That could translate into a large boost for energy efficiency.”

It does have a significant advantage over the human brain – these transistors can run at high temperatures exceeding 160 degrees Celsius. This kind of heat typically boils the brain, so kudos.

So, in principle at least, integrating millions of tiny synaptic transistors and neuron terminals could take parallel computing into a new era of ultra-efficient high performance. We’re still a long way from something like this happening, but it hints at a future of highly efficient and fast parallel computing! This is the very first baby step – a proof of concept.

“You have to build new instrumentation to be able to synthesize these new materials, but once you’re able to do that, you really have a completely new material system whose properties are virtually unexplored,” Ramanathan says. “It’s very exciting to have such materials to work with, where very little is known about them and you have an opportunity to build knowledge from scratch.”

“This kind of proof-of-concept demonstration carries that work into the ‘applied’ world,” he adds, “where you can really translate these exotic electronic properties into compelling, state-of-the-art devices.”

The findings were reported in the journal Nature Communications.
