A new study suggests that our fMRI technology might be relying on faulty algorithms — a bug the researchers found in fMRI-specific software could invalidate the past 15 years of research into human brain activity.

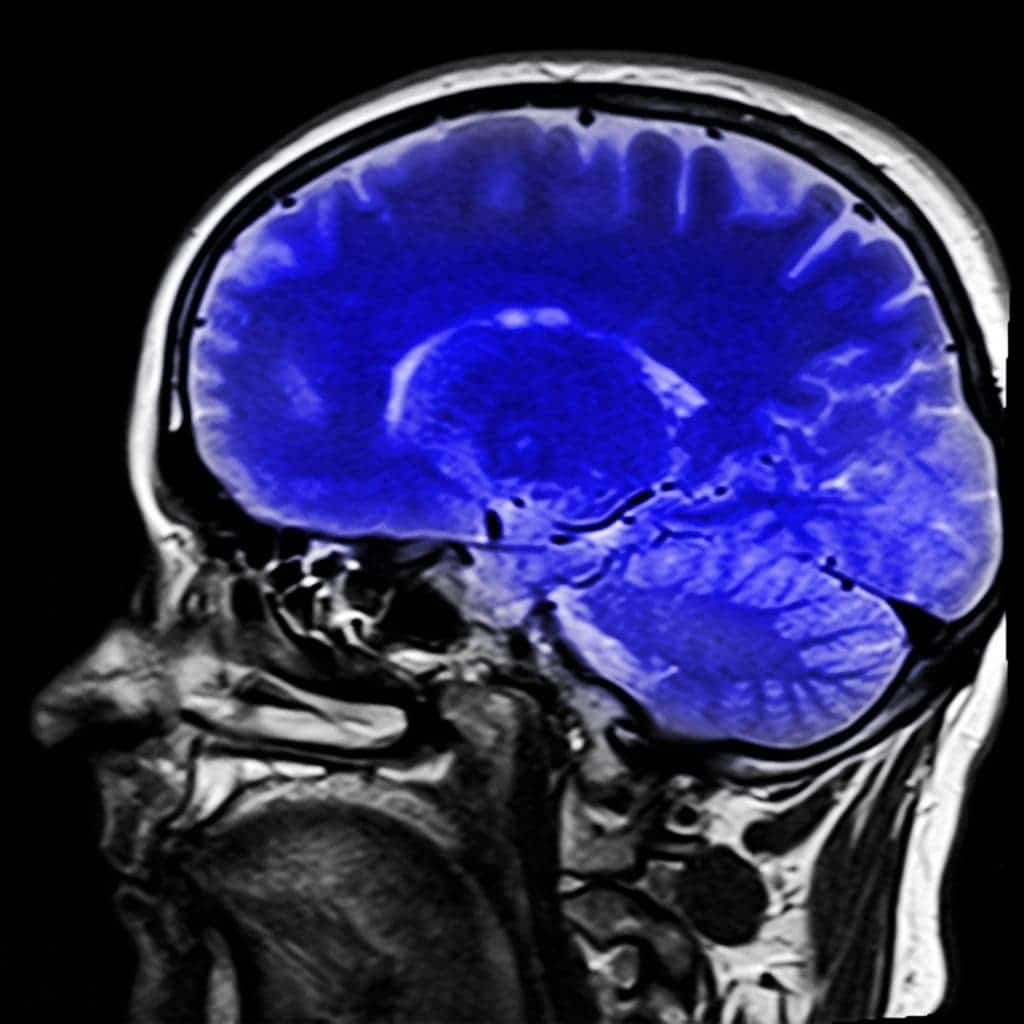

The best tool we have to measure brain activity today is functional magnetic resonance imaging (fMRI). It’s so good, in fact, that we’ve come to rely on it heavily, which isn’t a bad thing as long as the method is sound and provides accurate readings. But if the method is flawed, the results of years of research about what our brains look like during exercise, gaming, love, drug usage and more would be called into question. Researchers from Linköping University in Sweden have performed a study of unprecedented scale to test the reliability of fMRI analysis, and their results are not encouraging.

“Despite the popularity of fMRI as a tool for studying brain function, the statistical methods used have rarely been validated using real data,” the researchers write.

The team led by Anders Eklund gathered resting-state fMRI data from 499 healthy individuals from databases around the world and split them into 20 groups. They then measured the groups against each other, resulting in a staggering 3 million random comparisons. They used these comparisons to test the three most popular software packages for fMRI analysis: SPM, FSL, and AFNI.

Because the data came from healthy people at rest, comparing random groups should rarely turn up a difference, and the team expected a false-positive rate of around 5 percent. The findings stunned them: the software resulted in false-positive rates of up to 70 percent. This suggests that some of the results are so inaccurate that they might be showing brain activity where there is none. In other words, the activity they show is the product of the software’s algorithm, not of the brain being studied.
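The logic of this kind of test can be illustrated with a toy simulation. The sketch below is not the study’s pipeline: it assumes a single made-up number per subject instead of a brain image, a plain two-sample t-test instead of cluster-based inference, and an illustrative group size of 20. The function name and parameters are hypothetical. It simply shows that when no real difference exists, a well-calibrated test should flag roughly 5 percent of random comparisons.

```python
import math
import random
import statistics

# Two-sided 5% critical value of Student's t with 38 degrees of freedom
# (two groups of 20 subjects each).
T_CRIT_DF38 = 2.024


def false_positive_rate(n_subjects=499, group_size=20,
                        n_comparisons=2000, seed=0):
    """Estimate how often a t-test reports a difference in null data.

    Assumption: each subject is reduced to one random number, so any
    'significant' group difference is a false positive by construction.
    """
    rng = random.Random(seed)
    # Null data: every subject is drawn from the same distribution.
    subjects = [rng.gauss(0.0, 1.0) for _ in range(n_subjects)]

    hits = 0
    for _ in range(n_comparisons):
        # Draw two non-overlapping random groups from the subject pool.
        picked = rng.sample(subjects, 2 * group_size)
        a, b = picked[:group_size], picked[group_size:]

        # Equal-size two-sample t statistic.
        diff = statistics.mean(a) - statistics.mean(b)
        se = math.sqrt(
            (statistics.variance(a) + statistics.variance(b)) / group_size
        )
        if abs(diff / se) > T_CRIT_DF38:
            hits += 1

    return hits / n_comparisons


if __name__ == "__main__":
    rate = false_positive_rate()
    print(f"Empirical false-positive rate: {rate:.1%}")
```

A rate far above the nominal 5 percent in a setup like this would point to a problem in the statistical method rather than in the data, which is the kind of discrepancy the researchers report.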

“These results question the validity of some 40,000 fMRI studies and may have a large impact on the interpretation of neuroimaging results,” the paper reads.

One of the bugs they identified has been in the systems for the past 15 years. It was finally corrected in May 2015, at the time the team started writing their paper, but the results still call into question papers that relied on fMRI before this point.

So what is actually wrong with the method? Well, fMRI relies on a massive magnetic field pulsating through a subject’s body that can pick up on changes of blood flow in areas of the brain. These minute changes signal that certain brain regions have increased or decreased their activity, and the software interprets them as such. The issue is that when scientists are looking at the data they’re not looking at the actual brain. What they’re seeing is an image of the brain divided into tiny ‘voxels’, then interpreted by a computer program, as Richard Chirgwin explains for The Register.

“Software, rather than humans … scans the voxels looking for clusters,” says Chirgwin. “When you see a claim that ‘Scientists know when you’re about to move an arm: these images prove it,’ they’re interpreting what they’re told by the statistical software.”

Because fMRI machines are expensive to use — around US$600 per hour — studies usually employ small sample sizes and there are very few (if any) replication experiments done to confirm the findings. Validation technology has also been pretty limited up to now.

Since fMRI machines became available in the early ’90s, neuroscientists and psychologists have been faced with a whole lot of challenges when it comes to validating their results. But Eklund is confident that as fMRI results are being made freely available online and validation technology is finally picking up, more replication experiments can be done and bugs in the software identified much more quickly.

“It could have taken a single computer maybe 10 or 15 years to run this analysis,” Eklund told Motherboard. “But today, it’s possible to use a graphics card,” to lower the processing time “from 10 years to 20 days.”

So what about the nearly 40,000 papers that could now be in question? All we can do is try to replicate their findings, and see which hold up and which don’t.

The full paper, titled “Cluster failure: Why fMRI inferences for spatial extent have inflated false-positive rates,” has been published online in the journal PNAS.