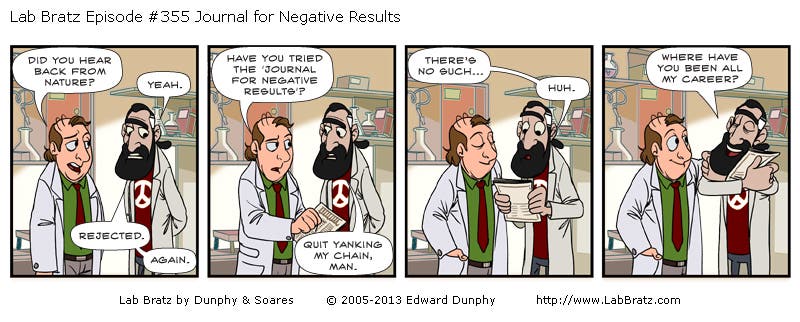

Science journals today seem to be dominated by positive results – that is, results that are statistically significant and lead to a dramatic finding. The devil’s in the details, they say, and the same holds true for the advances of science. While it’s true that groundbreaking research is what leads to leaps, these jumps are often ambiguous. Hundreds of other papers – some that check smaller details, others that replicate past findings – are paramount to filling in the blanks.

This is what researchers today call publication bias: the tendency of journals to favor positive results. Ultimately, this gives researchers a perverse incentive either to fit their data to a conclusion or to abandon their research altogether because it doesn’t match expectations. According to Nature, the social sciences and medicine are the most affected. After sifting through 221 sociological studies conducted between 2002 and 2012, Stanford researchers found that only 48% of the completed studies had been published. Of all the null studies, just 20% had appeared in a journal, and 65% had not even been written up. By contrast, roughly 60% of the studies with strong results had been published.

Daniele Fanelli, an evolutionary biologist who studies publication bias and misconduct, found a similar trend after analysing over 4,600 papers published across all disciplines between 1990 and 2007. He measured the frequency of papers that, having declared that they “tested” a hypothesis, reported positive support for it. Over this period, the frequency of positive support grew by 22%, with even larger increases in the social and biomedical fields. At the country level, the United States published significantly fewer positive results than Asian countries, but more than European countries.

Science in the file drawer

What’s disheartening is that this situation promotes a “failure is bad” outlook. Thomas Edison’s associate, the story goes, was frustrated after nearly a thousand unsuccessful experiments on a project. He was ready to throw in the towel, but Edison talked him out of it. “I cheerily assured him that we had learned something,” Edison is reported to have said in a 1921 interview. “We had learned for a certainty that the thing couldn’t be done that way, and that we would have to try some other way.” Having negative results isn’t bad; what’s bad is failing to report them.

There are a few explanations for why negative results might receive less consideration, although I’ve yet to find a journal that openly rejects them. Officially, negative and positive studies are given equal consideration. Off the books, editors are pressured by their publishers to increase the impact of their journals, and positive results are cited more often. Some also speculate that certain researchers withhold their results so they don’t help the “competition”.

“My sense is that the ‘file-drawer’ effect is a real problem,” says Neal Young, a research practices expert at the National Heart, Lung, and Blood Institute (NHLBI). “The entire publishing process has gotten more competitive and journals have much more power,” Young says. “That’s not a bad thing in itself, competition is good.”

However, the pressure on young scientists to present “novel” results clearly represents a potential problem, Young adds, particularly in clinical trials to test new medicines.

Recognizing this harmful bias, PLoS One last week launched a special collection of null, negative and incomplete results called “Missing Pieces”. One study found no evidence that support groups help with postpartum disorder in Bangladeshi mothers. Another failed to replicate the findings of four previous experiments supporting the “depletion model” of self-control, which holds that self-control is a limited resource that runs out.

“The publication of negative results, such as the works featured in the collection, is essential to research progress,” the journal writes. According to the journal, this new home for science’s “missing pieces” helps “prevent duplication of research effort” and “potentially expedites the process of finding positive results.”

Efforts made by PLoS One and other journals, like the Journal of Negative Results in Biomedicine, might prove effective in bridging the gap. The message that failure is good needs to stick – for science.