Researchers from Google Brain, the company’s inventive machine-learning lab, have developed new software that can generate Wikipedia-style articles by summarizing information from the web.

The software written by the Google engineers first scrapes the top ten web pages for a given subject, excluding the Wikipedia entry — think of it as a summary of the information found in the top 10 results of a Google search. Most of these pages are used to train the machine-learning algorithm, while a few are kept to test and validate the output of the software.
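To make the train/test idea concrete, here is a minimal sketch of how a batch of scraped pages could be divided up. The function name `split_pages`, the 80/10/10 proportions, and the fixed random seed are all assumptions chosen for illustration; the article does not say how the researchers split their data.

```python
import random


def split_pages(pages, train_frac=0.8, val_frac=0.1, seed=0):
    """Shuffle pages and split them into train / validation / test lists.

    The fractions are illustrative assumptions, not figures from the study.
    """
    shuffled = list(pages)
    random.Random(seed).shuffle(shuffled)  # reproducible shuffle
    n_train = int(len(shuffled) * train_frac)
    n_val = int(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # whatever is left over
    return train, val, test


# Hypothetical stand-ins for ten scraped web pages.
pages = [f"page_{i}" for i in range(10)]
train, val, test = split_pages(pages)
```

With ten pages and these assumed proportions, eight go to training and one each to validation and testing, which matches the "most for training, a few held back" description.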

Paragraphs from each page are collected and ranked to create a long document, which is then shortened by splitting it into 32,000 individual words. This long text is fed into an abstractive model that cuts the long sentences shorter, a trick for producing a summary of the text.
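The collect-rank-shorten step can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the researchers' code: the article does not say how paragraphs are ranked, so the sketch uses a simple TF-IDF score against the topic title, and it treats the 32,000 figure as a cap on input length, which is one reading of the description. The names `tokenize`, `rank_paragraphs`, and `build_input` are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def rank_paragraphs(paragraphs, query):
    """Order paragraphs by a smoothed TF-IDF score against the query words.

    TF-IDF is an assumed ranking method, used here only for illustration.
    """
    docs = [Counter(tokenize(p)) for p in paragraphs]
    n_docs = len(docs)
    query_words = set(tokenize(query))
    scores = []
    for doc in docs:
        score = 0.0
        for word in query_words:
            df = sum(1 for d in docs if word in d)  # document frequency
            if df == 0:
                continue
            score += doc[word] * math.log(1 + n_docs / df)
        scores.append(score)
    order = sorted(range(n_docs), key=lambda i: scores[i], reverse=True)
    return [paragraphs[i] for i in order]


def build_input(paragraphs, query, max_tokens=32000):
    """Concatenate ranked paragraphs and keep at most max_tokens words."""
    tokens = []
    for paragraph in rank_paragraphs(paragraphs, query):
        tokens.extend(tokenize(paragraph))
        if len(tokens) >= max_tokens:
            break
    return tokens[:max_tokens]


# Toy paragraphs standing in for text scraped from several pages.
paragraphs = [
    "The weather was nice and the food was great.",
    "Python is a programming language created by Guido van Rossum.",
    "Many Python programs use the standard library.",
]
ranked = rank_paragraphs(paragraphs, "Python programming language")
tokens = build_input(paragraphs, "Python programming language", max_tokens=12)
```

The most relevant paragraphs end up at the front of the document, so whatever falls off the end when the length cap is hit is the material least related to the topic.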

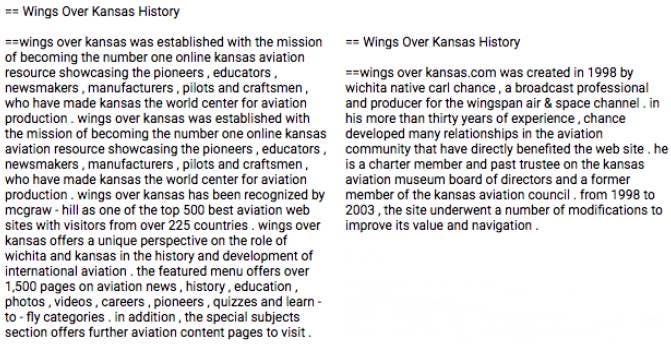

Because the sentences are shortened from the earlier extraction phase, rather than written from scratch, the end result can sound rather repetitive and dull. For instance, here’s what the AI’s Wikipedia-style blurb looks like compared with the human-edited text currently online.

Mohammad Saleh and colleagues at Google Brain hope that they can improve their bot by designing models and hardware that support longer input sequences. Their study will be presented at the upcoming International Conference on Learning Representations (ICLR).

As things stand now, it would be unwise to have Wiki entries written by this AI, but progress is good. Perhaps, one day, a hybrid solution that combines AI content generation with human supervision might populate Wikipedia at an unprecedented rate.

Currently, the English Wikipedia alone has over 5,573,495 articles of any length, and the combined Wikipedias for all other languages greatly exceed the English Wikipedia in size, giving more than 27 billion words in 40 million articles in 293 languages. That’s a lot, but an AI solution could come up with even more info, especially for the millions of Wiki pages that are unpopulated “stubs”.

And if an AI is one day good enough to populate Wikipedia, perhaps it will be good enough to “write” all sorts of other content. You wouldn’t have to pay someone to write a paper, or yours truly for the news. News-writing AIs are actually quite advanced nowadays. Reuters’ algorithmic prediction tool helps journalists gauge the integrity of a tweet, the BuzzBot collects information from on-the-ground sources at news events, and the Washington Post uses Heliograf, a bot built in-house that writes short news items.