The world’s most popular website security system may soon become obsolete.

Researchers at Lancaster University in the UK, and at Northwest University and Peking University (both in China), have developed a new AI that can defeat the majority of captcha systems in use today. The algorithm is not only very good at its job; it also requires minimal human effort or oversight to work.

The breakable code

“[The software] allows an adversary to launch an attack on services, such as Denial of Service attacks or sending spam or phishing messages, to steal personal data or even forge user identities,” says Mr Guixin Ye, the lead student author of the work. “Given the high success rate of our approach for most of the text captcha schemes, websites should be abandoning captchas.”

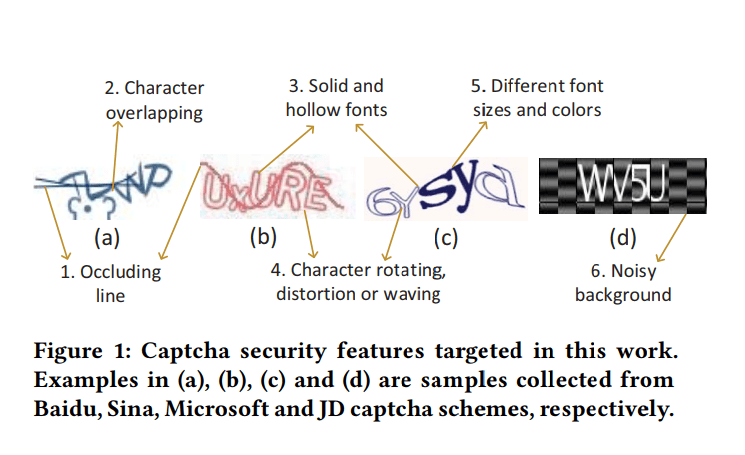

Text-based captchas (Completely Automated Public Turing test to tell Computers and Humans Apart) do pretty much what it says on the tin. They’re systems that typically use a hodge-podge of letters or numbers, which they run through additional security features such as occluding lines. The end goal is to generate images that a human can recognise as text but that confuse a computer. They rely on our much stronger pattern recognition abilities to weed out machines. All in all, they’re considered pretty effective.

Image credits Guixin Ye et al., 2018, CCS ’18.

The team, however, plans to change this. Their AI draws on a technique known as a ‘Generative Adversarial Network’, or GAN. In short, this approach uses a large number of (software-generated) captchas to train a neural network (known as the ‘solver’). After going through boot camp, this neural network is then further refined and pitted against real captcha codes.
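To get a feel for that two-stage schedule (bulk training on generated examples, then refinement on a handful of real ones), here is a toy sketch. It is not the team's solver, and almost everything in it is an assumption made for illustration: each "captcha" is a single number rather than an image, the "network" is a one-weight logistic classifier, and a plain random sampler stands in for the GAN-based generator. The function names (`sample`, `train`, `run_demo`) are hypothetical.

```python
import math
import random


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def sample(rng, n, shift):
    """Toy stand-in for captcha data: one feature per example, two classes.

    `shift` moves both class centres, mimicking the gap between generated
    and real examples (an assumption of this sketch).
    """
    data = []
    for i in range(n):
        label = i % 2
        centre = (2.0 if label else -2.0) + shift
        data.append((rng.gauss(centre, 0.6), label))
    return data


def train(model, data, epochs, rng, lr=0.1):
    """Plain SGD on the logistic loss; returns a new [w, b]."""
    w, b = model
    data = list(data)
    for _ in range(epochs):
        rng.shuffle(data)
        for x, y in data:
            err = sigmoid(w * x + b) - y
            w -= lr * err * x
            b -= lr * err
    return [w, b]


def accuracy(model, data):
    w, b = model
    hits = sum((sigmoid(w * x + b) >= 0.5) == bool(y) for x, y in data)
    return hits / len(data)


def run_demo(seed=0):
    rng = random.Random(seed)
    synthetic = sample(rng, 2000, shift=0.0)  # cheap, generated in bulk
    real_train = sample(rng, 20, shift=2.0)   # small hand-labelled set
    real_test = sample(rng, 1000, shift=2.0)  # held-out "real" examples

    base = train([0.0, 0.0], synthetic, epochs=5, rng=rng)  # stage 1
    tuned = train(base, real_train, epochs=200, rng=rng)    # stage 2
    return accuracy(base, real_test), accuracy(tuned, real_test)


before, after = run_demo()
print(f"accuracy on real data before fine-tuning: {before:.2f}")
print(f"accuracy on real data after fine-tuning:  {after:.2f}")
```

The point of the sketch is only the shape of the process: the model learns most of what it needs from generated data, and a small labelled set is enough to close the remaining gap to the real thing.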

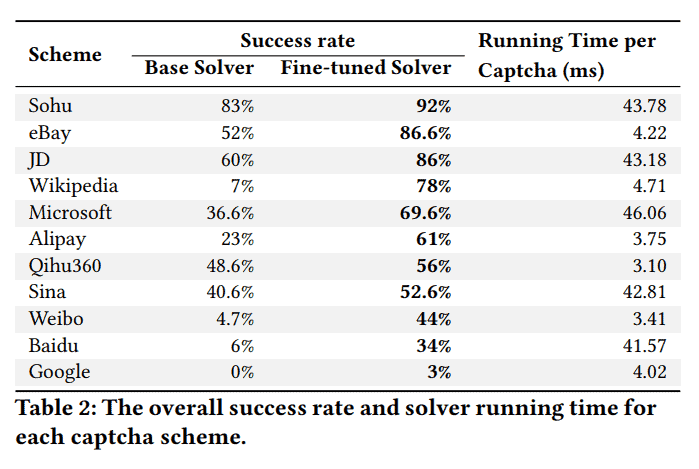

In the end, what the team created is a solver that works much faster and with greater accuracy than any of its predecessors. The programme only needs about 0.05 seconds to crack a captcha when running on a desktop PC, the team reports. Furthermore, it has successfully attacked and cracked versions of captcha that were previously machine-proof.

The programme was tested on 33 captcha schemes, of which 11 are used by many of the world’s most popular websites, including eBay, Wikipedia, and Microsoft. The system was far more successful than its counterparts, although it did have some difficulty breaking through certain “strong security features” used by Google. Still, even in this case, the system saw a success rate of 3%, which sounds pitiful but “is still above the 1% threshold for which a captcha is considered to be ineffective,” the team writes.
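Some back-of-the-envelope arithmetic shows why a 3% success rate is less pitiful than it sounds. The sketch below assumes that every attempt is independent and that the attacker is limited only by the reported 0.05 seconds of solving time per captcha; a real website would add network delays and rate limits, so treat the hourly figure as an upper bound. The function names are made up for this example.

```python
def expected_attempts(success_rate):
    """Average number of tries before the first success."""
    return 1.0 / success_rate


def p_at_least_one(success_rate, attempts):
    """Chance that at least one of `attempts` independent tries succeeds."""
    return 1.0 - (1.0 - success_rate) ** attempts


def solves_per_hour(success_rate, seconds_per_attempt):
    """Successful solves per hour if solving time is the only limit."""
    return 3600.0 / seconds_per_attempt * success_rate


print(expected_attempts(0.03))      # about 33 attempts per success
print(p_at_least_one(0.03, 100))    # about 0.95
print(solves_per_hour(0.03, 0.05))  # about 2,160 per hour
```

In other words, under these assumptions a bot that fails 97 times out of 100 still gets through roughly once every 33 tries, and a machine can make those tries far faster than any human.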

Image credits Guixin Ye et al., 2018, CCS ’18.

So the solver definitely delivers. But it’s also much easier to use than any of its competitors. Owing to the GAN approach the team used, it takes much less effort and time to train the AI, a process that would otherwise involve manually deciphering, tagging, and feeding captcha examples to the network. The team says it only takes 500 or so genuine captcha codes to adequately train their programme. Without the GAN, they add, it would take millions of manually labelled examples.

One further advantage of this approach is that it makes the AI system-independent (it can attack any variation of captcha out there). This comes in stark contrast to previous machine-learning captcha breakers. These manually-trained systems were both laborious to build and easily thrown off by minor changes in security features within the codes.

All in all, this software is very good at breaking codes; so good, in fact, that the team believes text captchas can no longer be considered a meaningful security measure.

“This is the first time a GAN-based approach has been used to construct solvers,” says Dr Zheng Wang, Senior Lecturer at Lancaster University’s School of Computing and Communications and co-author of the research. “Our work shows that the security features employed by the current text-based captcha schemes are particularly vulnerable under deep learning methods.”

“We show for the first time that an adversary can quickly launch an attack on a new text-based captcha scheme with very low effort. This is scary because it means that this first security defence of many websites is no longer reliable. This means captcha opens up a huge security vulnerability which can be exploited by an attack in many ways.”

The paper “Yet Another Text Captcha Solver: A Generative Adversarial Network Based Approach” has been published in CCS ’18: Proceedings of the 2018 ACM SIGSAC Conference on Computer and Communications Security.
