Computers can compute a lot faster than humans, but they’re pretty dumb when it comes to learning. In fact, machine learning itself is only now starting to take off, with real results beginning to show up. A team from New York University and the Massachusetts Institute of Technology is now leveling the field, though. They’ve devised an algorithm that lets computers recognize patterns much faster, and from far less data, than before.

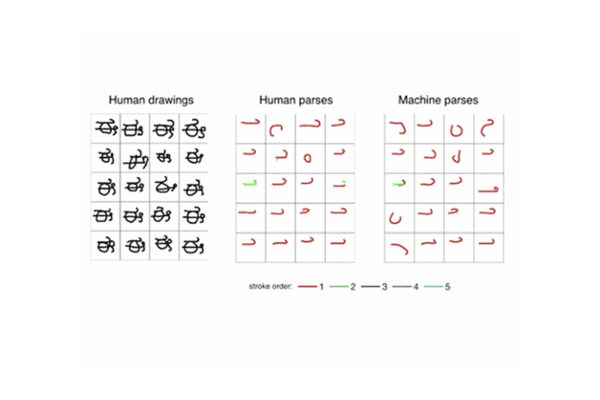

Image shows 20 different people drawing a novel character (left) and the algorithm predicting how those images were drawn (right)

When you tag someone in photos on Facebook, you might have noticed that the social network can recognize faces and suggest who you should tag. That’s pretty creepy, but also effective. Impressive as it is, however, it took millions and millions of photos, and plenty of trial and error, for Facebook’s DeepFace algorithm to take off. Humans, on the other hand, have no problem distinguishing faces; it’s hard-wired into us. See a face once and you’ll remember it for a lifetime. That’s the level of pattern recognition and retrieval the researchers were after.

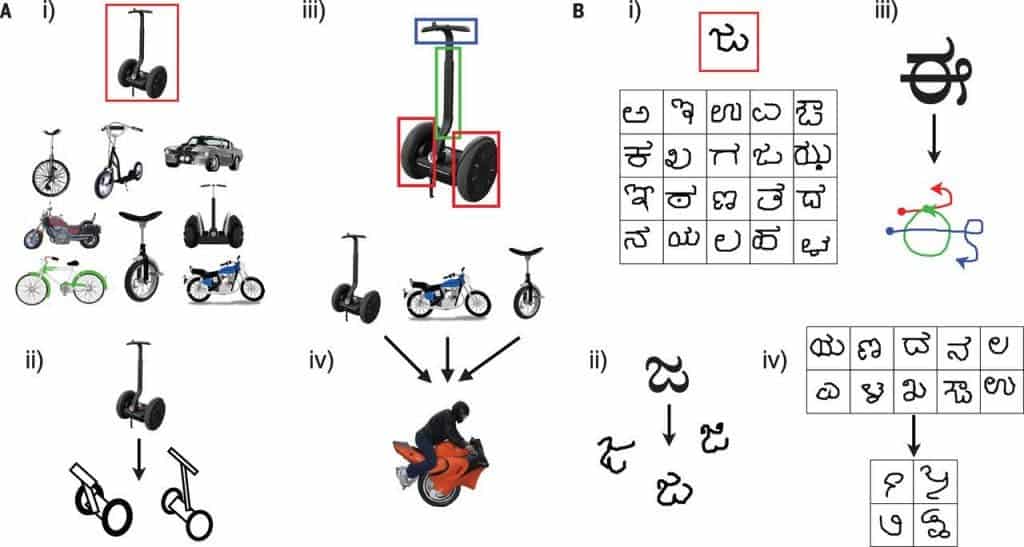

The framework the researchers presented in their paper is called Bayesian Program Learning (BPL). It can classify objects and generate concepts about them using a tiny amount of data, mirroring the way humans learn.

“It has been very difficult to build machines that require as little data as humans when learning a new concept,” Ruslan Salakhutdinov, an assistant professor of computer science at the University of Toronto, said in a news release. “Replicating these abilities is an exciting area of research connecting machine learning, statistics, computer vision, and cognitive science.”

BPL was put to the test with 20 handwritten characters from 10 different alphabets, and humans took the same test as a control. Both human and machine were asked to match each character to the same character written by someone else. BPL scored 97%, about as well as the humans and far better than other algorithms. For comparison, a deep (convolutional) learning model scored about 77%.
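The test format is easy to picture in code. The sketch below is a hypothetical illustration of how a one-shot matching trial can be scored: each candidate character is represented by a single example, the classifier picks the closest candidate, and accuracy is the fraction of trials answered correctly. It does not implement BPL. A plain nearest-neighbour distance on made-up two-number feature vectors stands in for BPL's scoring, and the names `classify_one_shot` and `accuracy` are illustrative only. The test described above used 20 characters; three keep the example short.

```python
import math

def classify_one_shot(test_image, support_set):
    """Return the label of the single support example closest to test_image.

    support_set maps each candidate character label to its one example.
    Euclidean nearest-neighbour distance is a stand-in here, NOT BPL's scoring.
    """
    return min(support_set, key=lambda label: math.dist(test_image, support_set[label]))

def accuracy(trials):
    """trials: list of (test_image, true_label, support_set) tuples."""
    correct = sum(classify_one_shot(x, s) == y for x, y, s in trials)
    return correct / len(trials)

# Hypothetical toy features: three candidate characters, one example each.
support = {"A": (0.0, 1.0), "B": (1.0, 0.0), "C": (1.0, 1.0)}
trials = [
    ((0.1, 0.9), "A", support),
    ((0.9, 0.2), "B", support),
    ((0.3, 0.5), "C", support),  # closest to "A", so this trial is answered wrongly
]
print(accuracy(trials))  # 2 of 3 trials correct
```

Swapping in a better scoring function (for example, one that reasons about how each character was drawn) changes only `classify_one_shot`; the trial and accuracy bookkeeping stays the same.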

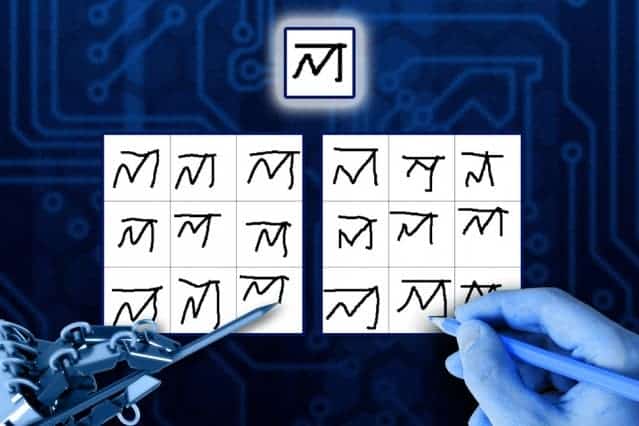

BPL also passed a visual form of the Turing test by drawing letters that most humans couldn’t distinguish from human handwriting. The Turing test was first proposed by British scientist Alan Turing in 1950 as a way to test whether the output of an artificial intelligence or computer program can fool humans into believing it was produced by a human.

“I think for the more creative tasks (where you ask somebody to draw something, or imagine something that they haven’t seen before, make something up), I don’t think that we have a better test,” Joshua Tenenbaum, a professor at MIT in the Department of Brain and Cognitive Sciences and the Center for Brains, Minds and Machines, told reporters on a conference call. “That’s partly why Turing proposed this. He wanted to test the more flexible, creative abilities of the human mind. [That’s] why people have long been drawn to some kind of Turing test.”

“We are still far from building machines as smart as a human child, but this is the first time we have had a machine able to learn and use a large class of real-world concepts, even simple visual concepts such as handwritten characters, in ways that are hard to tell apart from humans,” Tenenbaum said in the release.

“What’s distinctive about the way our system looks at handwritten characters or the way a similar type of system looks at speech recognition or speech analysis is that it does see it, in a sense, as a sort of intentional action … When you see a handwritten character on the page what your mind does, we think, [and] what our program does, is imagine the plan of action that led to it, in a sense, see the movements, that led to the final result,” said Tenenbaum. “The idea that we could make some sort of computational model of theory of mind that could look at people’s actions and work backwards to figure out what were the most likely goals and plans that led to what [the subject] did, that’s actually an idea that is common with some of the applications that you’ve pointed to. … We’ve been able to study [that] in a much more simple and, therefore, more immediately practical and actionable way with the paper that we have here.”
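Working backwards from a finished character to the plan that most likely produced it can be framed with Bayes' rule: score each candidate plan by how plausible it is before looking at the image (the prior) times how well it explains the image (the likelihood), then normalize. The sketch below is a toy illustration under loudly stated assumptions, not the researchers' model. The "plans" are hypothetical lists of straight horizontal or vertical strokes on a 5×5 grid, the prior simply favours fewer strokes, and the likelihood assumes each grid cell is flipped independently with a small noise probability. The function names `render` and `infer_plan` are made up for the example.

```python
import math

def render(strokes):
    """Rasterise straight horizontal/vertical strokes into a set of (x, y) grid cells."""
    cells = set()
    for (x0, y0), (x1, y1) in strokes:
        if x0 == x1:  # vertical stroke
            for y in range(min(y0, y1), max(y0, y1) + 1):
                cells.add((x0, y))
        elif y0 == y1:  # horizontal stroke
            for x in range(min(x0, x1), max(x0, x1) + 1):
                cells.add((x, y0))
        else:
            raise ValueError("this toy model only supports horizontal or vertical strokes")
    return cells

def infer_plan(observed, candidates, num_cells=25, noise=0.05):
    """Return the posterior probability of each hypothetical plan given the observed cells."""
    log_scores = {}
    for name, strokes in candidates.items():
        # Cells where the rendered plan and the observed image disagree.
        mismatches = len(render(strokes) ^ observed)
        matches = num_cells - mismatches
        # Assumed simplicity prior: each extra stroke halves the prior probability.
        log_prior = len(strokes) * math.log(0.5)
        # Assumed noise model: each cell flips independently with probability `noise`.
        log_lik = matches * math.log(1 - noise) + mismatches * math.log(noise)
        log_scores[name] = log_prior + log_lik
    # Normalise in log space for numerical stability.
    top = max(log_scores.values())
    weights = {name: math.exp(s - top) for name, s in log_scores.items()}
    total = sum(weights.values())
    return {name: w / total for name, w in weights.items()}

candidates = {
    "L": [((0, 0), (0, 4)), ((0, 4), (4, 4))],
    "I": [((0, 0), (0, 4))],
    "T": [((0, 0), (4, 0)), ((2, 0), (2, 4))],
}
observed = render(candidates["L"])  # pretend this image was drawn with the "L" plan
posterior = infer_plan(observed, candidates)
print(max(posterior, key=posterior.get))  # prints L
```

The two-stroke "L" wins despite its lower prior because the one-stroke "I" leaves four observed cells unexplained. That trade-off between simplicity and fit is what the Bayesian framing buys.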

The research was funded by the military to improve its ability to collect, analyze and act on image data. As with most major military research, though, it is likely to find civilian uses as well.