The word on every tech executive’s lips today is data. Curse or blessing, there’s so much data lying around, with about 2.5 quintillion bytes added each day, that it’s become increasingly difficult to make sense of it in a meaningful way. There’s a solution to the big data problem, though: machine learning algorithms that are fed countless variables and spot patterns that would otherwise elude humans. Researchers have already used machine learning to tackle challenges in medicine, cosmology and, most recently, crime. Tech giant Hitachi, for instance, has developed a machine learning system reminiscent of Philip K. Dick’s Minority Report that it says can predict when and where a crime might happen, and possibly who might commit it, before it takes place.

Machines listening for crime

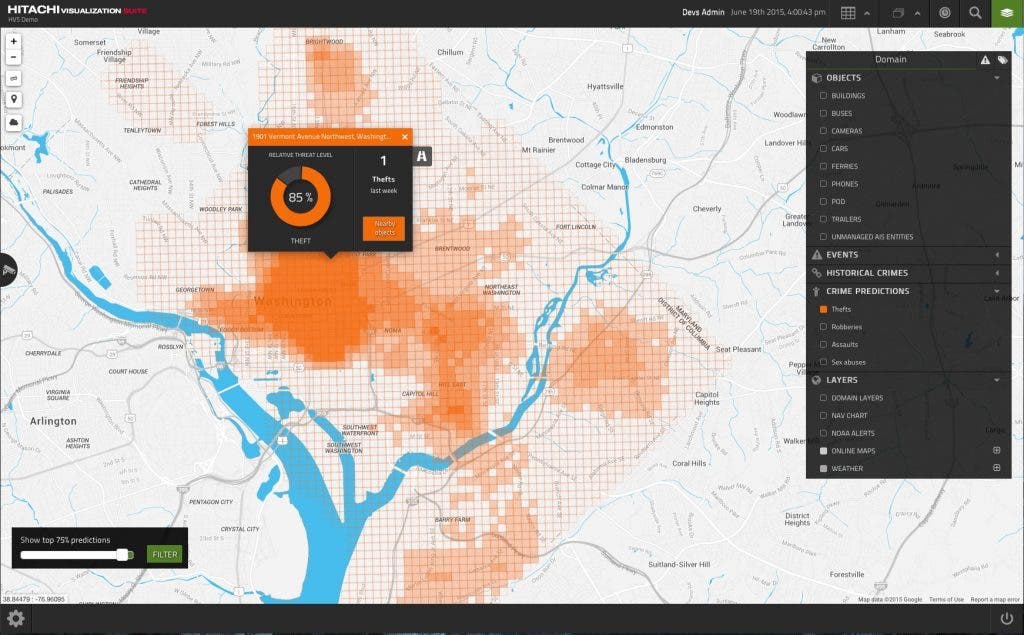

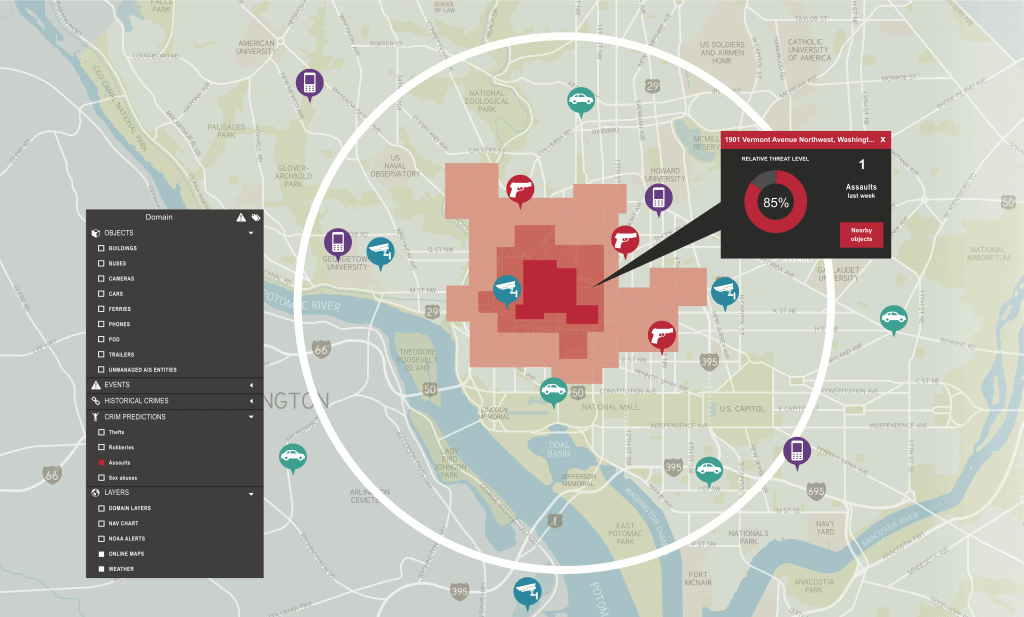

It’s called Visualization Predictive Crime Analytics (PCA). While it hasn’t been tested in the field yet, Hitachi claims it works by ingesting immense amounts of data from sensors spread across a city (like those that listen for gunshots), weather reports and social media to predict where crime is going to happen next. “A human just can’t handle when you get to the tens or hundreds of variables that could impact crime,” says Darrin Lipscomb, who is directly involved in the project, “like weather, social media, proximity to schools, Metro [subway] stations, gunshot sensors, 911 calls.”

Police today use all sorts of methods to either intervene rapidly when a crime is taking place or pick up cues and leads that might help them avert one. For instance, officers might use informers, scour social media for gang altercations or map thefts to predict when the next one might take place. This is a cumbersome process, and officers are only human after all. They will surely miss some valuable hints a computer might easily draw out. The reverse is also true, and often: a person will catch things a machine misses. But when it comes to volume, such as predicting thousands of possible felonies every single day in a big city, the machine learning system should beat even the most astute detective.

PCA is supposedly particularly effective at scouring social media, which Hitachi says improves accuracy by 15%. The company used a natural language processing algorithm to teach its machines to understand colloquial text or speech posted on Facebook or Twitter. It can, for instance, pull out geographical information and tell if a drug deal might take place in a neighborhood.

Officers would use PCA’s interface, again quite reminiscent of Minority Report, to see which areas are more vulnerable. A colored map shows where cameras and sensors are placed in a neighborhood and alerts the officer on duty if there’s a chance a crime might take place there, be it a robbery or a gang brawl. Dispatch would then send officers to the area to intervene or possibly deter would-be felons from engaging in criminal activity.

In any event, this is not precognition. The platform just flags vulnerable neighborhoods and alerts officers to a possible crime. You might have heard about New York City’s stop-and-frisk practice, where people deemed suspicious are searched for guns or drugs. PCA works in a fundamentally different way since it actually offers officers something to start with; it at least provides a more focused lead. “I don’t have to implement stop-and-frisk. I can use data and intelligence and software to really augment what police are doing,” Lipscomb says. Of course, this raises the question: won’t this lead to innocent people being targeted on mere suspicion fed by a computer? Well, just look at stop-and-frisk. More than 85% of those searched on New York’s streets are either Latino or African-American. Even if you account for differences in crime rates between ethnic groups, stop-and-frisk is clearly biased. The alternative sounds a lot better since police might actually know who to target.

Hitachi’s crime prediction tool will soon be tested in six large US cities, which Hitachi has declined to name. The trials will be double-blind, meaning police will go about business as usual while the machine runs in the background. Then Hitachi will compare the crimes police report with the crimes the machine predicted. If the two overlap beyond a statistical threshold, then you have a winner.
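
To make the idea of comparing predictions against police reports concrete, here is a minimal Python sketch. Hitachi has not published how it will score the trials, so everything here is an assumption for illustration: the function names, the (area, day) slots, the sample data and the threshold value are all hypothetical.

```python
def overlap_rate(predicted, reported):
    """Share of reported incidents that the system had flagged in advance.

    Both arguments are collections of (area, day) pairs. This pairing is an
    assumed representation, not Hitachi's actual data format.
    """
    predicted = set(predicted)
    reported = set(reported)
    if not reported:
        return 0.0
    return len(predicted & reported) / len(reported)


def trial_passes(predicted, reported, threshold):
    """True if the overlap meets a chosen threshold (hypothetical cutoff)."""
    return overlap_rate(predicted, reported) >= threshold


# Made-up sample data: areas are grid cells, days are ISO date strings.
predicted = {
    ("cell_12", "2015-10-01"),
    ("cell_07", "2015-10-01"),
    ("cell_03", "2015-10-02"),
}
reported = {
    ("cell_12", "2015-10-01"),
    ("cell_03", "2015-10-02"),
    ("cell_20", "2015-10-02"),
    ("cell_09", "2015-10-03"),
}

rate = overlap_rate(predicted, reported)
print(f"overlap: {rate:.0%}")
```

A real evaluation would also need to check how much overlap you would get by chance, for example by comparing against predictions that simply flag the areas with the most past crime.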