Elon Musk, the man with more outrageous ventures than fingers, is at it again. This time, he’s announcing a new startup called TruthGPT, which he claims will be the ultimate truth-seeker, “trying to understand the nature of the universe.”

But let’s be real here. Is Musk really trying to save us from the looming dangers of artificial general intelligence (which isn’t even a thing yet), or is this just another PR stunt to boost his ego?

Musk vs. ChatGPT: The Battle of the AIs

The world wasn’t ready for ChatGPT when OpenAI first unveiled it at the end of November 2022. With just a few simple prompts, ChatGPT can generate entire articles, essays, jokes, and even poetry. It’s not perfect, but it’s eerily good.

By January, it had already reached 100 million active users. For comparison, it took TikTok nine months and Instagram two and a half years to reach the same number of users. Since the advent of the internet, no app or online service has grown this quickly after launch.

The chatbot’s ‘reasoning’ abilities are so good that ChatGPT-4 (the latest public version) can score among the top 10 percent of students on the Uniform Bar Examination, which means ChatGPT can theoretically qualify as a lawyer in 41 US states and territories. It can also score 1,300 out of 1,600 on the SAT and a five out of five on Advanced Placement high school exams in biology, calculus, psychology, statistics, and history.

But while ChatGPT is incredibly human-like in its replies and interactions — almost frighteningly so — it is far from perfect. Its biggest flaw is that it still makes stuff up.

All current chatbots, whether they’re made by the Microsoft-backed OpenAI or Google, suffer from this problem, which AI researchers affectionately call “hallucinating”. Depending on what you ask it to do, the chatbot will almost always comply and make up stuff on the fly if it has to. When pressed for sources, it will invent fake websites, science journals and papers, and even fictitious people just to prove its point — all with the utmost confidence.

In some instances, these confusing and inaccurate statements churned out by ChatGPT can cancel out its strong points. ChatGPT can be incredibly insightful and inspiring during one moment but dumb as a brick the next.

This is a really tough nut to crack because such systems do not have real reasoning abilities the way people do, so it is very challenging to make them understand what is true and what isn’t.

And this is where Musk comes in. Or claims to come in.

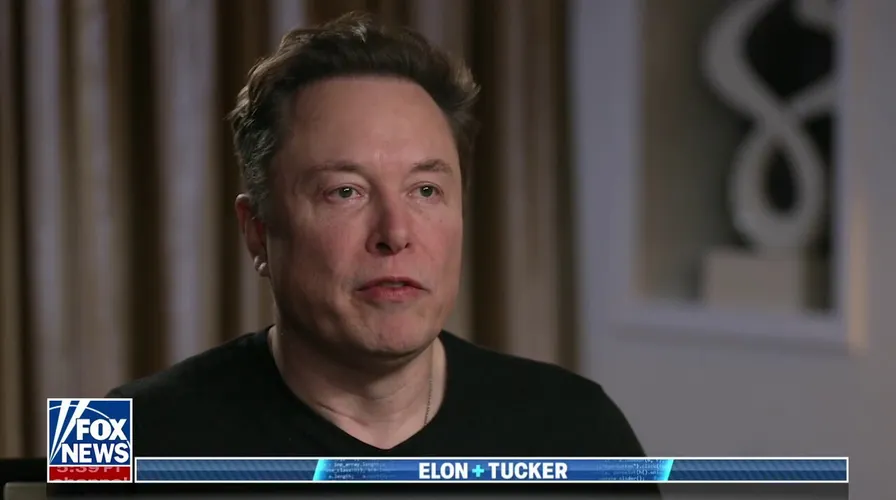

TruthGPT on Fox News: Oh, the irony

Musk’s TruthGPT is being touted as the answer to ChatGPT’s flaws. According to Musk, TruthGPT will be a “maximum truth-seeking AI,” as opposed to ChatGPT’s incoherent ramblings.

Okay, sure, Elon. But let’s not forget that you were a founding board member of OpenAI, the company behind ChatGPT, in 2015, and that you were squeezed out after you tried to take the company over (and failed). Could this be a case of sour grapes, or is there really a need for a new AI language model?

If you thought things couldn’t get any weirder, Musk made this announcement on Fox News during an interview with Tucker Carlson. Yes, the same Tucker Carlson who is the embodiment of fake news. The man’s job is to literally lie all day on national television.

The irony is palpable. Musk is promoting a truth-seeking AI on a network that is infamous for its lack of factual reporting. My head is spinning. But hey, at least the name “TruthGPT” has a nice ring to it, right?

“I’m going to start something which I call TruthGPT or a maximum truth-seeking AI that tries to understand the nature of the universe,” Musk said. “And I think this might be the best path to safety in the sense that an AI that cares about understanding the universe is unlikely to annihilate humans because we are an interesting part of the universe.”

If you’re scratching your head right now, you’re not alone.

Fox News is the perfect audience to drop doomsday scenarios because the viewers are fed fear-mongering on a moment-by-moment basis. That’s the network’s business. I mean, look at Tucker’s face during the interview.

Like any grifter worth their salt, Musk reveals an existential problem you never knew you had, and then immediately offers the solution. His solution.

But why? To save humanity, of course.

In Musk’s vision, a super general artificial intelligence would be capable of dooming us all, but with the right constraints pioneered by TruthGPT, it would use this power responsibly. Musk offers a daft example as if to illustrate this point.

“We recognize humanity could decide to hunt down all the chimpanzees and kill them. We’re actually glad that they exist, and we aspire to protect their habitats,” said Musk, whose other company Neuralink has attracted the fury of animal welfare groups for its cruel experiments on monkeys that are implanted with devices in their brains.

And it was just last month that Elon signed an open letter urging AI companies and labs to pump the brakes on AI development for six months, claiming that recent advances pose “profound risks to society and humanity.”

Why six months though? Is that how much time you need to launch TruthGPT?

How does a truth-seeking AI work, anyway?

But let’s put the irony aside for a moment and focus on the real question: how does a truth-seeking AI actually work?

According to Musk, TruthGPT will be trained on a corpus of “truthful” data and use this information to generate responses to queries. But here’s the thing: what counts as “truthful” data?

Truth is a tricky thing. It’s not always black and white. What one person considers to be true might not be true for someone else.

But that’s what science is for, you might quip, and I agree. But are we going to pretend that people will use TruthGPT only to ask how many moons Jupiter has or what a black hole is? As a generative AI, TruthGPT will be used for all types of inquiries, including those that do not have a single right answer or that may require academic training to fully grasp the nuances of a thorny topic.

This is all the more confusing considering Musk had a very controversial interview with the BBC last week. When pressed by the BBC journalist on whether his fumbling management of Twitter is promoting a rise in misinformation and hate speech on the social media platform, Musk immediately retorted, “Who should be the arbiter of truth?”

He then went on to question whether journalists are fair arbiters of truth, adding he had more trust in “ordinary people” and finally calling the BBC journalist a liar because he couldn’t come up with examples of Twitter’s mismanagement of hate speech on the spot.

With this latest announcement, things are coming together. It is rather transparent that Musk has been planning this move into generative AI for months now and his dance around ‘what is truth’ has a definite goal: that of painting Musk and his projects as the harbingers of truth.

I’m gonna go out on a limb right now and speculate that Musk is no savior.

The risks of generative AI

Despite Elon’s unsavory antics, he is right that generative AI poses many risks. However, the real risks have nothing to do with dooming civilization. That is rubbish.

Perhaps the biggest problem posed by generative AI in the near future is misinformation. Bad actors can use the technology to create convincing and tailored lies that could sway public opinion, manipulate elections, and incite social unrest.

For instance, there is AI-based software openly available to the public that can be used to create convincing fakes, such as impersonations of a person’s voice and face on video. Case in point: check out this 2023 Joe Rogan podcast with Apple co-founder Steve Jobs, who’s been dead since 2011.

And then there’s the issue of generative AI in general. The pace at which AI technology is advancing is much faster than our ability to regulate it.

We’ve already seen examples of generative AI being used to create deepfakes and spread false information. What’s to stop TruthGPT from being used in a similar way?

This is an opinion article. The author’s views do not necessarily reflect those largely held by ZME Science’s editorial staff.