ChatGPT has taken the world by storm, setting a record for the fastest user growth when it reached 100 million active users in January, two months after launch. For those of you who’ve been living under a rock, ChatGPT is a chatbot launched by OpenAI, a research laboratory founded by some of the biggest names in tech, such as Elon Musk, Reid Hoffman, Peter Thiel, and Sam Altman. ChatGPT can write emails, essays, and poetry, answer questions, or generate complex lines of code, all based on a text prompt.

In short, ChatGPT is a pretty big freaking deal, which brings us to the news of the week: OpenAI just announced the launch of GPT-4, its new and improved large multimodal model. Starting today, March 14, 2023, the model will be available to ChatGPT Plus users and to select third-party partners via its API.

Nearly every time OpenAI has released a new Generative Pre-trained Transformer, or GPT, the latest version has marked at least an order of magnitude of improvement over the previous iteration. I’ve yet to test the tool out, but judging from the AI company’s official research blog post, this new update is no different, bringing a number of important improvements and new features.

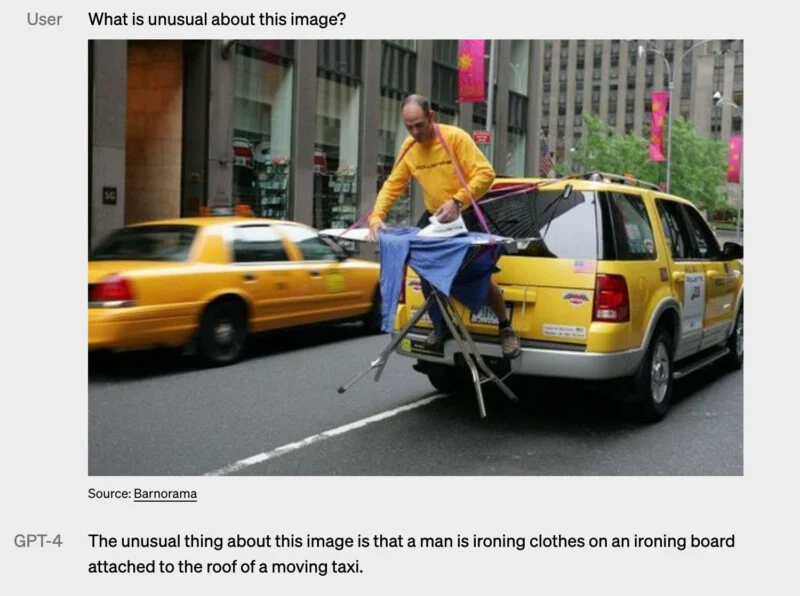

GPT-4 can now use images as prompts

Up to and including GPT-3.5, OpenAI’s models could only understand and output text. But now GPT-4 can accept images as prompts.

“It generates text outputs given inputs consisting of interspersed text and images,” the OpenAI announcement reads. “Over a range of domains — including documents with text and photographs, diagrams, or screenshots — GPT-4 exhibits similar capabilities as it does on text-only inputs.”

What this means in practical terms is that you can now upload an image and ask GPT-4 to do a number of things with it based on its analysis. For instance, say you upload an image depicting a bunch of balloons floating in the sky tethered by strings. If you ask GPT-4 what would happen if you cut the strings, the model can reason that the balloons will fly away into the sky.

In an example shared by the OpenAI team, a purposefully strange image shows a man ironing clothes while attached to the back of a taxi, and GPT-4 can explain what’s unusual about the image if you ask it.

There were rumors that GPT-4 would also have video abilities, but we now know that if there were any such plans, they were scrapped for this version. As of yet, there are no video or animation features, but those are probably not too far away.

It’s (a lot) smarter too

Per OpenAI, GPT-4 scores a lot higher on various aptitude tests. GPT-3.5 scored around the 10th percentile on the Uniform Bar Exam and in the 31st percentile on the Biology Olympiad. GPT-4 scores in the 90th and 99th percentiles for the bar exam and olympiad, respectively, placing it on par with some of the brightest human students.

Here’s an example of GPT-4’s improved reasoning abilities.

INPUT: Andrew is free from 11 am to 3 pm, Joanne is free from noon to 2 pm and then 3:30 pm to 5 pm. Hannah is available at noon for half an hour, and then 4 pm to 6 pm. What are some options for start times for a 30 minute meeting for Andrew, Hannah, and Joanne?

| GPT-3.5 | GPT-4 |
| --- | --- |
| Here is a list of their availability: Andrew: 11 am to 3 pm; Joanne: noon to 2 pm, and 3:30 pm to 5 pm; Hannah: noon to 12:30 pm, and 4 pm to 6 pm. Based on their availability, there is a 30-minute window where all three of them are available, which is from 4 pm to 4:30 pm. So, the meeting can be scheduled at 4 pm. | Andrew: 11 am – 3 pm; Joanne: 12 pm – 2 pm, 3:30 pm – 5 pm; Hannah: 12 pm – 12:30 pm, 4 pm – 6 pm. Common availability for a 30-minute meeting: 12 pm – 12:30 pm |

Finding a window when three people with different schedules are all available can be tricky even for a human to figure out quickly. In this scenario, GPT-3.5 gets it wrong: it suggests 4 pm, which conflicts with Andrew’s schedule, since he is only free until 3 pm. GPT-4, however, reasons correctly and offers the one slot that works for all three: noon to 12:30 pm.
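For readers who want to check the answer themselves, the scheduling puzzle comes down to intersecting time intervals. The snippet below is a minimal sketch of that check, not a description of how GPT-4 reasons internally. The function names (`to_minutes`, `intersect`, `common_windows`) are made up for this illustration, and times are written in 24-hour format.

```python
from functools import reduce


def to_minutes(hhmm):
    """Convert a 24-hour 'HH:MM' string to minutes since midnight."""
    hours, minutes = hhmm.split(":")
    return int(hours) * 60 + int(minutes)


def intersect(a, b):
    """Intersect two lists of (start, end) intervals given in minutes."""
    common = []
    for a_start, a_end in a:
        for b_start, b_end in b:
            start, end = max(a_start, b_start), min(a_end, b_end)
            if start < end:  # keep only non-empty overlaps
                common.append((start, end))
    return sorted(common)


def common_windows(schedules, duration):
    """Return the shared windows that are at least `duration` minutes long."""
    shared = reduce(intersect, schedules.values())
    return [(start, end) for start, end in shared if end - start >= duration]


# Availability exactly as stated in the prompt above.
schedules = {
    "Andrew": [(to_minutes("11:00"), to_minutes("15:00"))],
    "Joanne": [
        (to_minutes("12:00"), to_minutes("14:00")),
        (to_minutes("15:30"), to_minutes("17:00")),
    ],
    "Hannah": [
        (to_minutes("12:00"), to_minutes("12:30")),
        (to_minutes("16:00"), to_minutes("18:00")),
    ],
}

windows = common_windows(schedules, 30)
print(windows)  # [(720, 750)], i.e. 12:00 to 12:30
```

The only shared window is noon to 12:30 pm, which matches GPT-4’s answer. The 4 pm slot that GPT-3.5 proposed drops out as soon as Andrew’s availability is intersected with the others.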

GPT-4 will be integrated into Microsoft services, including Bing

In February, Microsoft integrated a modified version of GPT-3.5 into Bing, its search engine that for years has been laughably behind Google. Not anymore, though. Microsoft has invested over $10 billion in OpenAI, which highlights how serious it is about the coming generative AI revolution and about going after Google. In response, Google made a clumsy release announcement for its own AI-powered search engine called Bard, which at the moment looks underwhelming, to say the least.

On its own, GPT-4 can only answer questions about real-world events and people up to September 2021, when its training data ends. But Bing, which has access to the open web, will start using GPT-4, enabling it to answer questions about events happening almost in real time, as soon as they are reported across the internet.

In addition, GPT-4 is now available through OpenAI’s API, which enables select third parties to use the AI engine in their products. Duolingo, the language-learning app, is using GPT-4 to deepen conversations with users looking to learn a new language. Similarly, Khan Academy has integrated the new GPT to offer personalized, one-on-one tutoring to students in math, computer science, and a range of other disciplines available on its platform.

The image prompt feature is currently available to only one outside partner. Be My Eyes, a free app that connects blind and low-vision people with sighted volunteers, integrated GPT-4 with its Virtual Volunteer.

“For example, if a user sends a picture of the inside of their refrigerator, the Virtual Volunteer will not only be able to correctly identify what’s in it, but also extrapolate and analyze what can be prepared with those ingredients. The tool can also then offer a number of recipes for those ingredients and send a step-by-step guide on how to make them,” says Be My Eyes in a blog post explaining this feature.

However, these features don’t come cheap. OpenAI charges $0.03 per 1,000 “prompt” tokens, which is about 750 words. The image processing pricing has not been made public yet.
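To get a feel for what that rate means in practice, here is a back-of-the-envelope sketch in Python. It uses only the two figures quoted above ($0.03 per 1,000 prompt tokens, and roughly 750 words per 1,000 tokens) and covers the prompt side only. The function name `estimate_prompt_cost` is made up for this illustration, and the word-to-token ratio is an approximation, so treat the result as a rough estimate rather than a billing figure.

```python
PRICE_PER_1K_PROMPT_TOKENS = 0.03  # USD, the rate quoted in the article
WORDS_PER_1K_TOKENS = 750          # rough ratio quoted in the article


def estimate_prompt_cost(word_count):
    """Estimate the prompt cost in USD for a text of `word_count` words."""
    tokens = word_count / WORDS_PER_1K_TOKENS * 1000
    return tokens / 1000 * PRICE_PER_1K_PROMPT_TOKENS


# A 1,500-word prompt is roughly 2,000 tokens.
cost = estimate_prompt_cost(1500)
print(f"${cost:.2f}")
```

A single prompt costs pennies, but the bill adds up quickly for an app that sends thousands of long prompts a day.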

GPT-4 is still not perfect though

ChatGPT is as famous for its convincing, sometimes hilarious lies and hallucinations as it is for its phenomenal ability to synthesize information and drive human-like conversations. The good news is that GPT-4 is much more accurate and factual.

“We spent 6 months making GPT-4 safer and more aligned. GPT-4 is 82% less likely to respond to requests for disallowed content and 40% more likely to produce factual responses than GPT-3.5 on our internal evaluations,” OpenAI said.

“In a casual conversation, the distinction between GPT-3.5 and GPT-4 can be subtle,” OpenAI wrote in a blog post announcing GPT-4. “The difference comes out when the complexity of the task reaches a sufficient threshold — GPT-4 is more reliable, creative and able to handle much more nuanced instructions than GPT-3.5.”

However, while it’s 40% more likely to deliver factual information, this doesn’t mean that it won’t continue to make mistakes, something that OpenAI acknowledges. This means that ChatGPT should be used with extreme caution, especially in high-stakes situations, such as when generating content for a presentation at work.

Nevertheless, GPT-4 marks yet another huge milestone in the ongoing AI revolution that is set to transform our lives in more ways than one.
