Adobe, the company known for giving us Photoshop, is trying to help you recognize which photos have been tampered with.

As we previously wrote, it’s becoming harder and harder to tell tampered images and videos from the real thing, and AI tools continue to make it more difficult. When you consider the ability of social media to spread these images like wildfire without even the slightest fact-checking, this becomes more than a nuisance: it becomes a real problem for society.

An early arms race

Many companies (including Adobe) are developing tools that make it easier and easier to manipulate visual content, but there’s also the other side: detecting what’s been manipulated. At the CVPR computer vision conference, Adobe demonstrated how this field, called digital forensics, can be automated quickly and efficiently. This type of approach could ultimately be incorporated into our daily lives to establish the authenticity of social media photos.

Although the research paper does not represent a breakthrough per se, it’s intriguing to see Adobe plunging into this field.

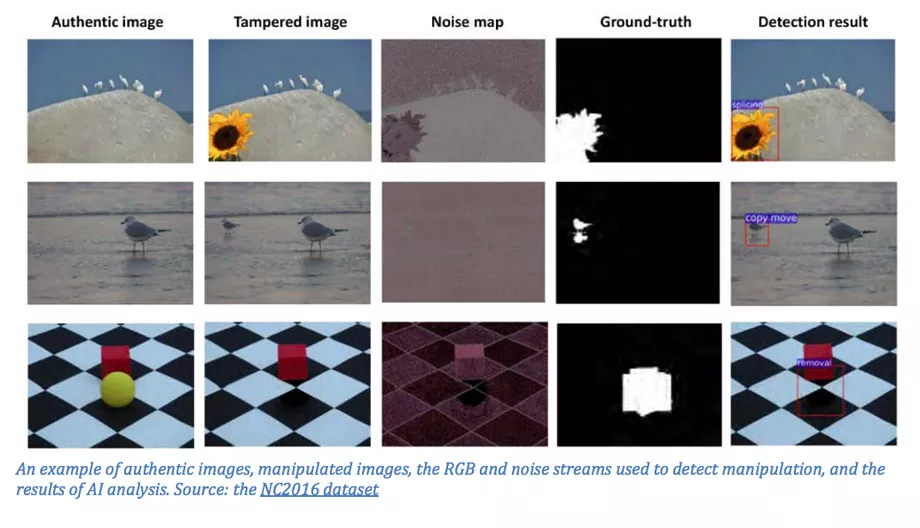

The researchers focused on three types of manipulation:

- splicing, where two different images are combined

- cloning, where objects are copied and pasted

- removal, where an object is edited out altogether

When researchers or digital workers try to assess the authenticity of images, they look for artifacts left behind by editing. For instance, when an object is copied from one image and pasted onto another, the background noise levels of the two are often inconsistent. To detect tampering, Adobe used an already established approach: taking a large dataset of images and “training” an algorithm on it.
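To make the noise-inconsistency idea concrete, here is a minimal Python sketch. It is not Adobe's method (the paper trains an algorithm on a large dataset); it is a simple hand-written heuristic that estimates the noise level in each tile of an image and flags tiles that differ sharply from the rest. The function names `block_noise_map` and `suspicious_blocks`, the tile size, and the threshold are all assumptions made for illustration, and the "photo" is synthetic.

```python
import numpy as np

def block_noise_map(gray, block=16):
    """Estimate a noise level for each block x block tile of a grayscale image."""
    gray = np.asarray(gray, dtype=np.float64)
    # High-pass residual: each pixel minus the mean of its 4 neighbours.
    # Smooth image content mostly cancels out, leaving the noise.
    padded = np.pad(gray, 1, mode="edge")
    neighbours = (padded[:-2, 1:-1] + padded[2:, 1:-1]
                  + padded[1:-1, :-2] + padded[1:-1, 2:]) / 4.0
    residual = gray - neighbours
    rows, cols = gray.shape[0] // block, gray.shape[1] // block
    tiles = residual[:rows * block, :cols * block]
    tiles = tiles.reshape(rows, block, cols, block).transpose(0, 2, 1, 3)
    tiles = tiles.reshape(rows, cols, -1)
    # Robust spread per tile: median absolute deviation, scaled so that it
    # approximates a standard deviation for Gaussian noise.
    med = np.median(tiles, axis=2, keepdims=True)
    return 1.4826 * np.median(np.abs(tiles - med), axis=2)

def suspicious_blocks(noise_map, threshold=6.0):
    """Flag tiles whose noise level is far from the image-wide median."""
    center = np.median(noise_map)
    spread = 1.4826 * np.median(np.abs(noise_map - center)) + 1e-9
    return np.abs(noise_map - center) / spread > threshold

# Synthetic example (an assumption, not real data): a flat gray image with
# mild sensor noise, plus a "pasted" 32x32 patch carrying much stronger noise.
rng = np.random.default_rng(0)
image = 128 + rng.normal(0, 2, (128, 128))
image[32:64, 64:96] = 128 + rng.normal(0, 10, (32, 32))

noise = block_noise_map(image)
flags = suspicious_blocks(noise)
print(np.argwhere(flags))  # (row, col) indices of the flagged tiles
```

A heuristic like this only works on images whose original noise is fairly uniform, and it is easily fooled by textured regions or heavy compression, which is part of why researchers turn to trained models instead.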

The new algorithm scored higher than other existing tools, but only marginally so. Furthermore, the tool does not apply to so-called “deep fakes”, images and videos created entirely by AI. The algorithm is also only as good as the dataset it’s trained on. For now, it’s still an early-stage program.

It’s not hard to see this turning into an arms race of sorts. As detection algorithms improve, we might see more tools that better hide these manipulations. For now, we should all keep in mind just how easy it is to manipulate an image before we share it on Facebook. It’s becoming clearer that in much of today’s media, we’re already in an arms race between truth and lies. There’s a good chance this type of algorithm could play an important role in the future.