Climate — which should not be confused with weather — represents a long-term meteorological state, the statistical average of weather taken over a meaningful period of time.

Talking climate

Whether it’s on the news, at our workplace, or even while having a coffee with friends, we often hear about climate — it’s a hot topic. But climate is often misunderstood and misrepresented, or simply mistaken for weather.

If we’re talking about climate, we first need to consider longer periods of time. Simply put, climate is the long-term average of weather. The standard averaging period is 30 years, although climate is sometimes also discussed in terms of year-to-year variations. However, speaking of daily or hourly climate variations doesn’t really make sense.

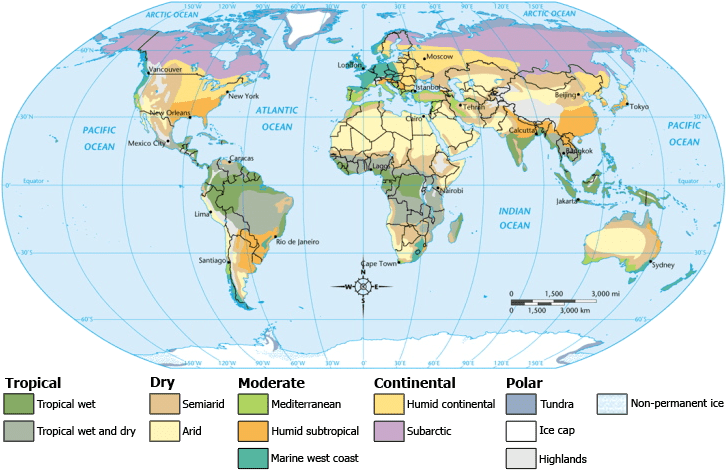

Loosely speaking, we can split climate into microclimate, local or regional climate, and planetary climate. Microclimate refers to the climate of a very small or restricted area, especially one that differs from the surrounding area. We won’t focus on it here. Regional climates, on the other hand, are more interesting.

The climate of a place is affected by several factors, most importantly its latitude and altitude. Other elements, such as terrain and nearby bodies of water and their currents, can also be important. Still, defining a local climate is no easy feat: it means considering several different variables, the most important being temperature and precipitation.

With local climates being so complex, there are several widely accepted classifications, each focusing on slightly different environmental aspects. Climates are usually defined either by geographic area (e.g. Mediterranean) or by a defining feature (e.g. arid). Climates can also be defined by a biome, the community of plants and animals that share similar environmental conditions (e.g. montane forests).

Moving on, we can also talk about a global climate. The global climate takes the whole planet into consideration. The Intergovernmental Panel on Climate Change (IPCC), a scientific and intergovernmental body established to provide the world with a clear scientific view of the planet’s climate, defines climate as follows:

Climate in a narrow sense is usually defined as the “average weather,” or more rigorously, as the statistical description in terms of the mean and variability of relevant quantities over a period ranging from months to thousands or millions of years. The classical period is 30 years, as defined by the World Meteorological Organization (WMO). These quantities are most often surface variables such as temperature, precipitation, and wind. Climate in a wider sense is the state, including a statistical description, of the climate system.
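To make “the mean and variability over a 30-year period” concrete, here is a minimal Python sketch. The function name `climate_normal`, its input format (a mapping from each year to that year’s daily mean temperatures) and the data are hypothetical, invented for illustration; real climate normals are computed following WMO guidance, with careful handling of missing observations.

```python
from statistics import mean, stdev


def climate_normal(daily_temps: dict[int, list[float]], start: int, end: int) -> tuple[float, float]:
    """Summarize the weather of years start..end (inclusive) as (mean, variability).

    daily_temps maps each year to that year's daily mean temperatures in °C.
    This input format is a hypothetical simplification, not a WMO standard.
    """
    years = range(start, end + 1)
    missing = [y for y in years if not daily_temps.get(y)]
    if missing:
        raise ValueError(f"no observations for years: {missing}")
    # Step 1: average each year's daily weather into a single annual mean.
    annual_means = [mean(daily_temps[y]) for y in years]
    # Step 2: describe the period by the mean of those annual values and
    # their year-to-year spread (sample standard deviation).
    return mean(annual_means), stdev(annual_means)


# Hypothetical data: each year has one cold day (5 °C) and one warm day (25 °C);
# odd-numbered years are 1 °C warmer than even-numbered ones.
data = {y: [5.0 + (y % 2), 25.0 + (y % 2)] for y in range(1991, 2021)}
normal, variability = climate_normal(data, 1991, 2020)
print(f"1991-2020 normal: {normal:.2f} °C, year-to-year spread: {variability:.2f} °C")
```

The 20 °C swing between the cold and the warm day vanishes from the result: the day-to-day weather is averaged away, leaving only the long-term mean (15.5 °C) and a much smaller year-to-year variability.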

Climate vs Weather

Although this has been briefly mentioned above, it’s important to emphasize the difference between climate and weather. It is often summed up as “Climate is what you expect, weather is what you get.”

To put it differently, weather represents the state of the atmosphere at a particular place over a short period of time, whereas climate describes meaningful averages: the weather’s long-term patterns. The cycle of day and night shapes the weather, but it averages out over the long periods that define climate.

So if the weatherman fails to forecast tomorrow’s rain, that doesn’t mean we’re getting climate models wrong; they’re two different things. Weather forecasts are also based on models, but these models incorporate observations of air pressure, temperature, humidity, and winds to produce the best estimate of current and future conditions. The forecaster then looks at the most plausible scenarios and decides which outcome is most likely. The forecast depends both on the model and on the forecaster’s skill, and it only covers the short term. Climate models, on the other hand, simulate the large-scale physics of the atmosphere, oceans, land, and ice to project long-term trends rather than the weather on any particular day. That’s why it can sometimes be easier to predict how the climate will develop over decades than what the weather will do a few weeks from now.

Similarly, colder weather doesn’t necessarily mean the climate is getting colder. For instance, some parts of the US were extremely cold during the 2017-2018 winter, and yet 2017 was one of the hottest years in recorded history. Weather is experienced locally, whereas climate refers to a wider area, so it’s impossible to gauge what’s happening on a planetary scale from your local experiences alone. Weather also changes quickly, while climate changes slowly.

Another way to go about it is to think of weather as what you see out the window. Even if you looked out the window every day, wrote down the weather, and averaged it all after 30 years, that still wouldn’t be the climate of the planet, only a record of the place where you live. Even if all the people in the world did the same thing, it still wouldn’t be the climate, because people don’t inhabit all areas of the world. We’d need people spread all around the planet, looking out the window every day, taking notes, and then averaging them over years or decades, to get an accurate view of what’s happening. This is why understanding climate can be so complex and confusing at times.

Thankfully, Earth-orbiting satellites and other technological advances have allowed scientists to have a good look at the broader picture, revealing clear signs of a changing climate.

Climate change

Earth’s climate is always changing; it always has, and it always will. But this doesn’t mean that all climate change is natural. Even though the climate is constantly changing, it usually does so over timescales much longer than a human lifespan. Right now, Earth’s climate is shifting at an unnaturally fast pace, and there is a mountain of scientific evidence tying that shift to human activity. But let’s take it step by step.

If we want to talk about climate change, we first need to consider what it changed from: the initial state of the climate, the one we use as a baseline for the current climate. The World Meteorological Organization describes climate “normals” as “reference points used by climatologists to compare current climatological trends to that of the past or what is considered ‘normal’.” It doesn’t make much sense to compare today’s climate with, say, the Jurassic, 200 million years ago. Most climate scientists agree that it makes sense to consider climate change since the end of the last glacial period, which, coincidentally or not, largely overlaps with the start of human civilization.

Even more commonly, researchers use the pre-industrial climate, before the industrial revolution took off, as the “normal” reference point; the IPCC, for instance, approximates pre-industrial conditions with the 1850–1900 average. That’s because the climate had changed relatively little over the previous few thousand years, and the industrial revolution is when mankind started processes that can significantly affect the climate. Basically, the industrial revolution kickstarted man-made climate change.

So what’s causing climate change? For starters, there are the natural causes, such as shifts in Earth’s orbit around the Sun or in the solar energy that reaches our planet. Oceanic changes or volcanic eruptions can also play a big role. But one aspect, in particular, has proven to strongly affect climate: greenhouse gases.

Greenhouse gases absorb and emit energy within the thermal infrared range. In other words, they are a group of compounds that trap heat, making our planet much hotter than it would be otherwise.
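A textbook back-of-the-envelope estimate gives a sense of how large this effect is. It is a simplification that is not part of the original article: it assumes a planet with no greenhouse gases, a solar constant S of about 1361 W/m², a planetary albedo α (the fraction of sunlight reflected back to space) of about 0.3, and the Stefan–Boltzmann constant σ ≈ 5.67 × 10⁻⁸ W m⁻² K⁻⁴. The factor of 4 appears because Earth intercepts sunlight on a disc but radiates heat from its whole spherical surface.

```latex
\frac{S\,(1-\alpha)}{4} = \sigma T_e^{4}
\quad\Longrightarrow\quad
T_e = \left(\frac{S\,(1-\alpha)}{4\sigma}\right)^{1/4}
    \approx \left(\frac{1361 \times 0.7}{4 \times 5.67\times10^{-8}}\right)^{1/4}
    \approx 255\ \mathrm{K} \;(\approx -18\,^{\circ}\mathrm{C})
```

Earth’s actual average surface temperature is about 288 K (roughly 15 °C), so in this simplified picture greenhouse gases keep the surface some 33 °C warmer than it would otherwise be.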

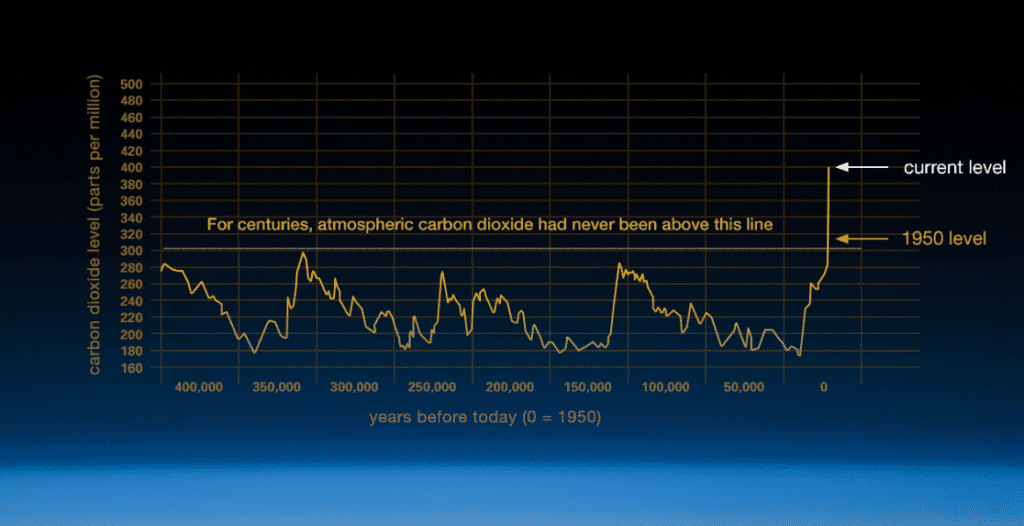

Our atmosphere consists of several gases. Excluding water vapor, the air we breathe is roughly 78.09% nitrogen, 20.95% oxygen, 0.93% argon, 0.04% carbon dioxide, and small amounts of other gases. Although it makes up only a small percentage, carbon dioxide is a potent greenhouse gas. The effect of atmospheric CO2 on ground temperature was first quantified in 1896 by Svante Arrhenius, a Swedish scientist who later won the Nobel Prize. Since then, the phenomenon has been widely studied, understood, and accepted. Despite what some snake oil politicians might say, we’ve known that carbon dioxide can warm the planet since before cars were a common sight.

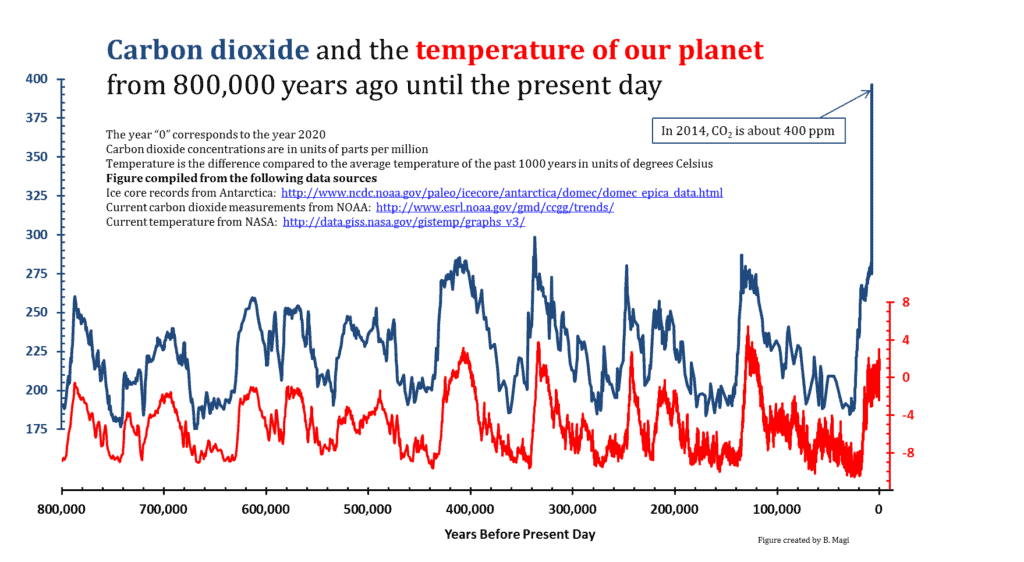

This is also confirmed in the long-term trends, as can be seen below.

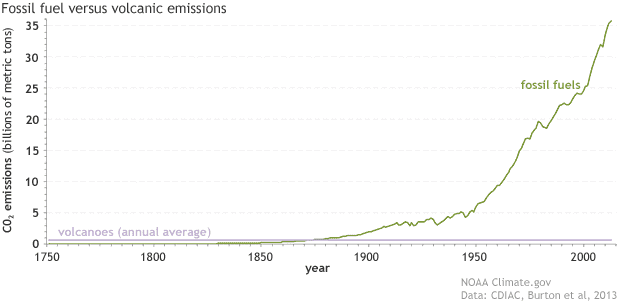

Temperature soon follows suit after CO2. Credits: UNC Charlotte.

Global temperatures are tightly related to the amount of CO2 in the atmosphere. There are other greenhouse gases in the atmosphere, and other factors impacting global temperatures (such as volcanic eruptions, which can cause a massive yet temporary temperature drop), but in the long term, CO2 and temperature go hand in hand. This is particularly important as in the past century, a new source of CO2 has emerged: us.

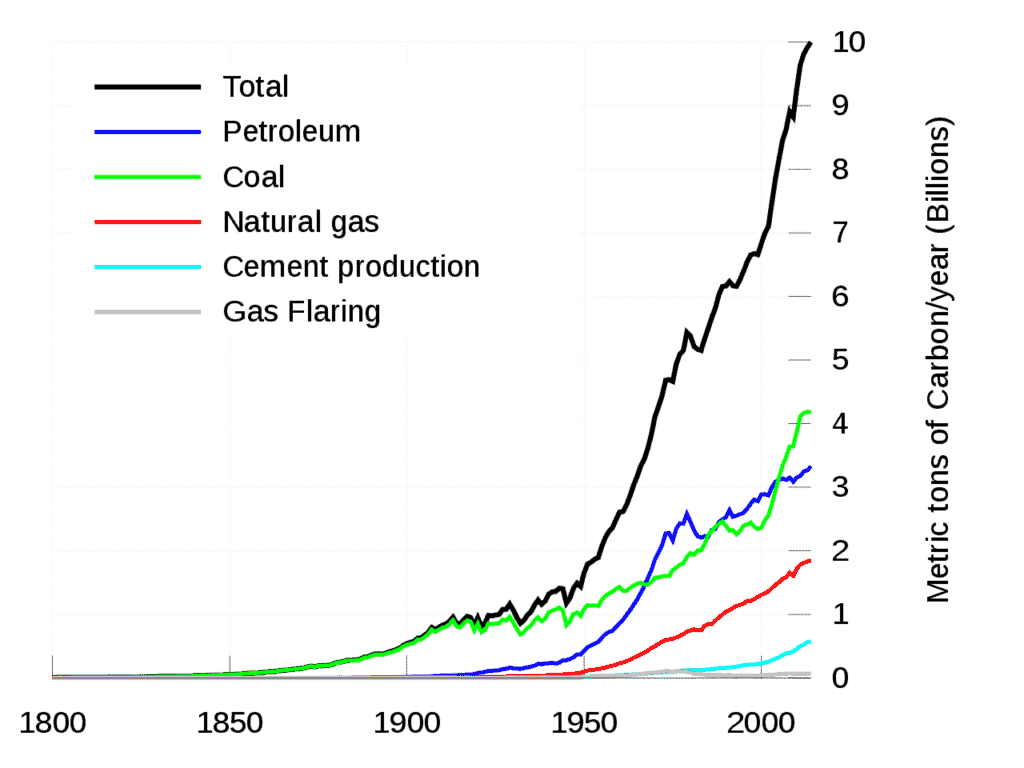

The industrial revolution was powered by burning coal. The process not only generated the power to support emerging industries, but it also generated carbon dioxide. Burning coal (and later oil and other fossil fuels) is a chemical reaction that creates carbon dioxide, water, and heat. Mankind needs the heat, but the carbon dioxide comes as an unavoidable by-product.
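Why is the carbon dioxide unavoidable? A simplified sketch, assuming coal is pure carbon and using methane (the main component of natural gas) as an example hydrocarbon, shows that every carbon atom in the fuel ends up in a CO2 molecule:

```latex
\begin{aligned}
\mathrm{C} + \mathrm{O_2} &\longrightarrow \mathrm{CO_2} + \text{heat} \\
\mathrm{CH_4} + 2\,\mathrm{O_2} &\longrightarrow \mathrm{CO_2} + 2\,\mathrm{H_2O} + \text{heat} \\
\frac{m_{\mathrm{CO_2}}}{m_{\mathrm{C}}} &= \frac{12 + 2 \times 16}{12} = \frac{44}{12} \approx 3.67
\end{aligned}
```

Using approximate atomic masses (carbon 12, oxygen 16), burning 1 kg of carbon releases about 3.67 kg of CO2. The gas weighs more than the fuel because it also contains oxygen taken from the air.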

Initially, mankind didn’t think much of this. After all, the idea that something as small as burning coal could affect the entire planet seemed hardly believable, even laughable. But the scale of mankind’s emissions grew year after year, becoming a force to be reckoned with.

After a while, humans learned they could also burn a much more valuable hydrocarbon: petroleum. As humans diversified their hydrocarbon portfolio, emissions continued to grow.

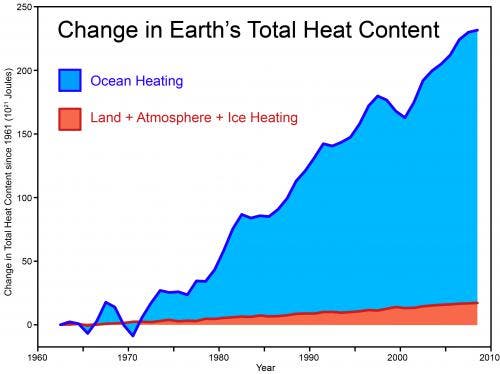

Towards the latter part of the 20th century, evidence started to pile up that we were, in fact, causing temperatures to rise. Here is just some of the compelling evidence for climate change:

- Global temperatures are rising. Both land-based and satellite sensors indicate that surface temperatures have increased by about 2.0 degrees Fahrenheit (1.1 degrees Celsius) since the late 19th century. To make things crystal clear, 16 of the 17 warmest years on record have occurred since 2001.

- Ice sheets are shrinking overall. As expected after a temperature rise, polar ice is melting. Data from NASA’s Gravity Recovery and Climate Experiment show Greenland lost 150 to 250 cubic kilometers (36 to 60 cubic miles) of ice per year between 2002 and 2006, while Antarctica lost about 152 cubic kilometers (36 cubic miles) of ice between 2002 and 2005.

- Sea levels are rising. Another logical consequence of rising temperatures and melting ice: global sea levels rose about 8 inches (20 centimeters) in the last century. The rate has increased dramatically in the past few decades.

- Extreme events are becoming more and more common. While a causal link between climate change and specific extreme weather events is difficult to establish, it seems quite probable. In recent years, for instance, the US has witnessed far more extreme droughts and hurricanes than average.

- Flowers are blooming earlier and birds have shifted their migratory seasons.

- Ocean acidification is increasing. Often the most overlooked consequence of our carbon emissions, ocean acidification can be devastating. Part of the carbon dioxide we emit into the atmosphere is absorbed by the oceans, where it forms carbonic acid and makes seawater more acidic, with potentially severe consequences for marine life.
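The figures in the list above mix metric and US units. A small Python sketch (the helper names are hypothetical, chosen for illustration) checks the conversions and shows why a temperature *change* of 1.1 °C corresponds to about 2.0 °F rather than the 34 °F you would get by converting a thermometer reading:

```python
# 1 mile is defined as exactly 1.609344 km, so 1 cubic mile is about 4.168 km³.
KM3_PER_MI3 = 1.609344 ** 3


def km3_to_mi3(km3: float) -> float:
    """Convert a volume from cubic kilometers to cubic miles."""
    return km3 / KM3_PER_MI3


def celsius_change_to_fahrenheit(delta_c: float) -> float:
    """Convert a temperature *change* (not a reading) from °C to °F.

    Readings convert as F = C * 9/5 + 32, but the +32 offset cancels when
    one reading is subtracted from another, leaving only the 9/5 factor.
    """
    return delta_c * 9 / 5


print(f"1.1 °C of warming ≈ {celsius_change_to_fahrenheit(1.1):.1f} °F")
for km3 in (150, 250, 152):
    print(f"{km3} km³ ≈ {km3_to_mi3(km3):.0f} mi³")
```

Rounded, the printed values match the list: about 2.0 °F of warming, 36 to 60 cubic miles of Greenland ice per year, and about 36 cubic miles for Antarctica.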

This is just a part of the overwhelming evidence pointing to global warming. Ice cores drawn from Greenland, Antarctica, and tropical areas, data from tree rings, geologic records, chemistry models: everything points to the same thing. Greenhouse gases are causing climate change, and we are emitting the extra greenhouse gases.

There are literally thousands of papers concluding that climate change is happening now. A 2012 analysis of 13,950 peer-reviewed papers found that only 24 of them rejected global warming. To make things even better, most of those were funded by fossil fuel companies.
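The arithmetic makes the imbalance plain. Treating 13,950 as the total number of papers analyzed, the 24 dissenting papers are a tiny fraction:

```latex
\frac{24}{13\,950} \approx 0.0017 = 0.17\%
\qquad\Longrightarrow\qquad
1 - \frac{24}{13\,950} \approx 99.8\%
```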

Are we certain we’re causing climate change?

Yes, about as certain as we rationally can be. About as certain as we are that smoking cigarettes is bad for your health, which, coincidentally or not, was also strongly denied by producers and lobby groups.

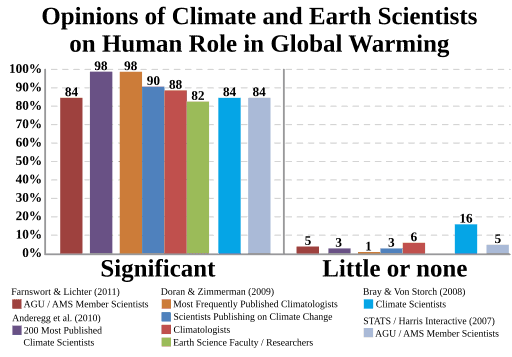

The vast (really vast) majority of climate scientists agree that we are causing climate change. We often talk about a consensus among scientists, but to some, that sounds like something people just dreamed up last night. In reality, it rests on a massive quantity of work carried out by some of the planet’s brightest minds.

For instance, the IPCC Fifth Assessment Report analyzed 9,200 peer-reviewed studies. If you’ve ever read through a peer-reviewed study, you’ll know just what a drag a single study can be, let alone thousands of them. The resulting report was over 2,000 pages long, its main conclusions being that warming of the atmosphere and ocean system is unequivocal and that it is extremely likely that human influence has been the dominant cause of observed warming since 1950. Some have demonized the IPCC as a partisan group, but its conclusions fall right in line with those of researchers all around the world.

Several studies have also measured the scientific consensus itself. In 2009, Doran and Zimmerman found that 97% of the climate scientists they surveyed agreed that human activity is significantly changing global temperatures. Similar figures were reported by Anderegg et al. (2010) and Cook et al. (2013). Oreskes (2004) found 100% agreement among the papers she analyzed.

A group of 18 leading American scientific organizations issued a joint statement endorsing this position. Among them were NASA, the American Chemical Society, the American Physical Society, the Geological Society of America, the American Association for the Advancement of Science, and the U.S. National Academy of Sciences. They wrote:

“Observations throughout the world make it clear that climate change is occurring, and rigorous scientific research demonstrates that the greenhouse gases emitted by human activities are the primary driver.”

Internationally, similar positions have been echoed across the scientific community, and many other international bodies and agencies have taken the same stance. Sure, you can cherry-pick a study or an organization to support your views, but it’s just like weather versus climate: no matter how much the climate heats up, you’ll still have the occasional cold day.

Climate change is happening whether we like it or not, and it’s happening due to us — whether we like it or not.