David Dao, a PhD candidate at ETH Zurich, wears several hats. In addition to working on his doctorate, he’s the founder of Gain Forest Now (a decentralized fund that uses artificial intelligence to measure and reward sustainable nature stewardship). He also maintains a rather interesting GitHub page on which he tracks some of the most concerning uses of artificial intelligence (AI).

While Dao seems busy with his other ventures and hasn’t updated his page in a few months, it’s still a fascinating collection. We keep hearing about the accomplishments and potential of artificial intelligence, but AI comes with plenty of risks and threats as well. We’re not talking about a robot revolution or anything else in the distant future. We’re talking about real problems happening now.

Here’s an example: a “gaydar.” That’s right, there’s an AI that claims over 90% accuracy at identifying whether someone is gay just by looking at five photos of their face. That is much better than human accuracy.

While this raises some interesting questions about the nature of homosexuality, it raises even more concerns about the potential for discrimination. If this type of AI becomes widespread, it would be like wearing a homosexuality badge on your chest, something that authoritarian regimes and discriminatory groups would be bound to exploit.

Dao also documented several hiring AIs that were meant to analyze CVs and applications and find the best potential employees, but ended up discriminating against women instead. Amazon famously used this type of AI and scrapped it after discovering that it was systematically biased against women.

“In the case of Amazon, the algorithm quickly taught itself to prefer male candidates over female ones, penalizing CVs that included the word ‘women’s,’ such as ‘women’s chess club captain.’ It also reportedly downgraded graduates of two women’s colleges,” Dao’s page reads. Other hiring AIs, such as HireVue and PredictiveHire, have run into similar problems.

The potential for discrimination abounds. Among other examples, Dao mentions a project that tries to determine whether you’re a criminal by looking at your face. He also lists an algorithm used in the UK to predict students’ exam grades from historical data, which ended up biased against students from poorer backgrounds. Another entry is a chatbot that started spouting antisemitic messages after a single day of “learning” from Twitter.

It’s only natural for AIs to be faulty or imperfect at first. The problems emerge when developers aren’t aware that the data they’re feeding the AI is biased, and when these algorithms are deployed in the real world with those problems still built in.

These biases are often racially charged. For instance, a Google image recognition program labeled several black people as gorillas. Meanwhile, Amazon’s Rekognition misclassified darker-skinned women as men 31% of the time, compared with only 7% of the time for lighter-skinned women. Amazon has continued to push the algorithm despite these problems and dismisses the findings as “misleading.” Most identification algorithms seem to struggle with darker faces.

Surveillance

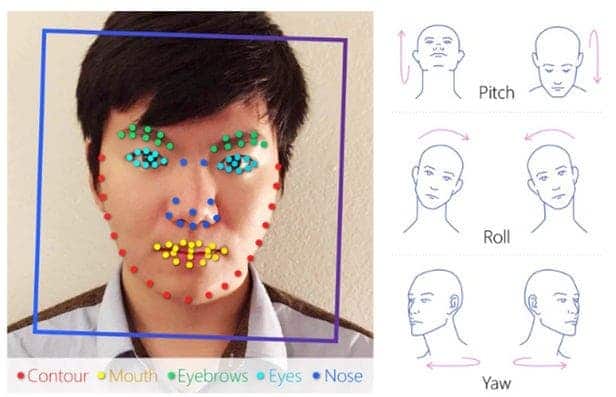

Surveillance is another frightening area where AI could be (and already is) used. Clearview.ai, a little-known start-up, could end privacy as we know it. The company built a facial recognition database that matches photos of people to their names and to images from social media. Some law enforcement agencies already use the application to get the names and addresses of potential suspects. It has even become a plaything that lets the rich spy on customers and dates.

Another facial recognition system, made by Anyvision, is being used by the Israeli military to surveil Palestinians living across the West Bank. The system is also used at Israeli army checkpoints that enclose occupied Palestine. AI can also sometimes identify people by their gait, and in China this type of technology is already widely deployed. Some companies are even interested in using gait analysis to track when and where their employees travel.

If that still doesn’t spook you, AI can also be used for censorship. In China, at least, it is already deployed on a large scale. WeChat, a messaging app used by almost one billion people (mostly in China), automatically analyzes all text and images sent on the platform, and it does so in real time. Content deemed harmful (which includes images of Winnie the Pooh) is removed immediately, and users may be banned or investigated. The system becomes more powerful with each image sent through the platform, and censorship in China is already inescapable.

Perhaps even more concerning is that all this surveillance could one day feed a social credit system. In such a system, an algorithm determines how worthy you are of, say, getting a loan, or how severe your punishment should be if you commit a crime. Yet again, China is at the forefront and already has such a system in place. Among other things, it bans millions of people from traveling because their credit scores are too low and penalizes people who share “harmful” images on social media. It also judges people based on the purchases they make and on their “interpersonal relationships.”

Military AIs

Naturally, it wouldn’t be a complete list without some scary AI military applications.

Perhaps the most notable incident involving AI-assisted weapons was the ‘machine gun with AI’ used to kill an Iranian scientist who was pivotal to the country’s nuclear program. Essentially, a machine gun equipped with an AI satellite system was mounted on the back of a regular truck. The system tried to identify the scientist, and the automatic gun, “controlled by satellite,” struck him thirteen times from a distance of 150 metres (490 ft). His wife, who was sitting just 25 cm away from him, was left physically unharmed. The entire system then self-destructed, leaving no significant traces behind. The incident sent ripples around the world, showing that autonomous or semi-autonomous AI weapons are already capable enough to be used.

Armed autonomous drones were also used in the Armenia-Azerbaijan conflict. They helped turn the tide of battle by flying autonomously to destroy key targets and kill people. Autonomous tanks such as the Russian Uran-9 were also tested in the Syrian Civil War.

There is no shortage of scary AI uses.

From surveillance and discrimination to outright military offensives, the technology seems mature enough to cause real, substantial, and potentially long-lasting damage to society. Authoritarian governments seem eager to use it, as do various interest groups. It’s important to keep in mind that while AI has huge potential to do good in the world, its potential for nefarious uses is just as great.
