Instinctively, we learn a lot about the world around us by touching it, poking it and getting a feel for it. That kind of information is extremely difficult to convey to computers, which is why augmented reality is still in its infancy. A team of researchers from MIT believes it has a solution, and this means, among many other applications, that everyone’s favorite game could get a beautiful revamp.

It’s hard to believe that Pokemon Go came out only a few weeks ago; it seems that everyone has been chasing Pokemon for ages. Of course, nostalgia and our love for the franchise were decisive factors in the game’s success, but players were also delighted by the augmented reality. Augmented reality (AR) is a live direct or indirect view of a physical environment that includes computer-generated elements, such as Pokemon.

However, the Pokemon don’t interact with the environment around them, and this is where the new technology kicks in. Researchers from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) have recently developed an imaging technique called Interactive Dynamic Video (IDV) that lets you reach in and “touch” objects in videos.

“This technique lets us capture the physical behavior of objects, which gives us a way to play with them in virtual space,” says CSAIL PhD student Abe Davis, who will be publishing the work this month for his final dissertation. “By making videos interactive, we can predict how objects will respond to unknown forces and explore new ways to engage with videos.”

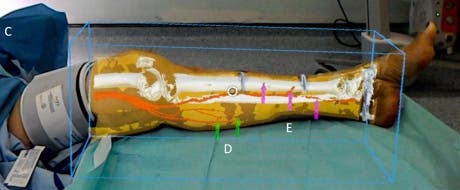

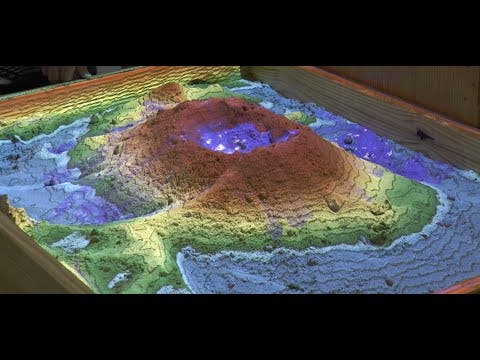

Of course, IDV has many more, and far less trivial, practical applications. For instance, it could produce visual cues to help architects and engineers assess the structural stability and condition of a building, and it might even help with practice for invasive surgeries.

“The ability to put real-world objects into virtual models is valuable for not just the obvious entertainment applications, but also for being able to test the stress in a safe virtual environment, in a way that doesn’t harm the real-world counterpart,” says Davis.

Or of course, we could use it for games.

How it works

Typically, if you want to simulate something from the real world, you must first build a 3D model of it. That’s time- and resource-intensive, and can be borderline impossible for many objects. With Davis’ work, even five seconds of video can hold enough information to create realistic simulations, at least in simple environments.

To create the simulations, Davis analyzed video clips to find “vibration modes” at different frequencies. These modes represent the ways in which an object can move, and by understanding them, researchers can predict how the object will move in a new environment.
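To make the idea of finding vibration modes at different frequencies more concrete, here is a minimal Python sketch. It is not the researchers' pipeline, which the article does not detail. It assumes we already have a one-dimensional displacement signal for a single point in the video (here a synthetic one) and simply looks for the strongest peaks in its frequency spectrum. The function name `dominant_frequencies`, the 60 fps frame rate and the 3 Hz and 11 Hz test components are all made up for illustration.

```python
import numpy as np

def dominant_frequencies(signal, fps, n_modes=2):
    """Return the n_modes strongest frequencies (in Hz) of a 1-D motion signal.

    Hypothetical helper for illustration only, not part of IDV.
    """
    signal = np.asarray(signal, dtype=float)
    signal = signal - signal.mean()                  # drop the static offset
    power = np.abs(np.fft.rfft(signal)) ** 2         # power at each frequency bin
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fps)

    # A bin counts as a peak if it is larger than both of its neighbours.
    is_peak = (power[1:-1] > power[:-2]) & (power[1:-1] > power[2:])
    peak_idx = np.flatnonzero(is_peak) + 1

    # Keep the n_modes most energetic peaks, reported in ascending frequency.
    strongest = peak_idx[np.argsort(power[peak_idx])[::-1][:n_modes]]
    return np.sort(freqs[strongest])

# Synthetic stand-in for five seconds of tracked motion at an assumed 60 fps.
fps = 60
t = np.arange(5 * fps) / fps
displacement = 0.8 * np.sin(2 * np.pi * 3 * t) + 0.3 * np.sin(2 * np.pi * 11 * t)

print(dominant_frequencies(displacement, fps))
```

On this synthetic signal the function should recover the two components that were mixed in, at 3 Hz and 11 Hz. Real footage would first need a motion-tracking step to produce the displacement signal, which this sketch skips entirely.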

“Computer graphics allows us to use 3-D models to build interactive simulations, but the techniques can be complicated,” says Doug James, a professor of computer science at Stanford University who was not involved in the research. “Davis and his colleagues have provided a simple and clever way to extract a useful dynamics model from very tiny vibrations in video, and shown how to use it to animate an image.”

To test the technology, Davis used IDV on things such as a bridge, a jungle gym, and a ukulele. With just a few mouse clicks, he was able to make the image bend and stretch in different directions, and even to make his own hand appear to telekinetically control the leaves of a bush.

“If you want to model how an object behaves and responds to different forces, we show that you can observe the object respond to existing forces and assume that it will respond in a consistent way to new ones,” says Davis, who also found that the technique even works on some existing videos on YouTube.
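The assumption Davis describes, that an object responds to new forces in the same way it responded to observed ones, can be illustrated with a toy model. The sketch below treats a single vibration mode as a damped oscillator and "pokes" it with a short force that was never in any video. The damped-oscillator model, the function name `simulate_mode` and all the numbers (3 Hz, damping ratio 0.05, the size of the poke) are assumptions chosen for illustration, not values or code from the research.

```python
import numpy as np

def simulate_mode(freq_hz, damping_ratio, force, dt):
    """Simulate one vibration mode as a damped oscillator driven by `force`.

    Hypothetical toy model: returns the mode's displacement at every time step.
    """
    omega = 2 * np.pi * freq_hz          # angular frequency of the mode
    position, velocity = 0.0, 0.0
    response = np.empty(len(force))
    for i, f in enumerate(force):
        # Acceleration = applied force - damping - spring-like restoring term.
        accel = f - 2 * damping_ratio * omega * velocity - omega ** 2 * position
        velocity += accel * dt           # semi-implicit Euler: velocity first,
        position += velocity * dt        # then position with the new velocity
        response[i] = position
    return response

# Two seconds of simulation with a brief "poke" at the very start.
dt = 1.0 / 600
force = np.zeros(1200)
force[:6] = 50.0

response = simulate_mode(freq_hz=3.0, damping_ratio=0.05, force=force, dt=dt)
print(np.abs(response[:300]).max(), np.abs(response[-300:]).max())
```

The two printed numbers are the largest displacement in the first and last half second. The second should be smaller, showing the mode swinging back and forth and gradually settling after the poke, much as a real object would.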

Until this technology starts affecting a Pokemon near us, we can just feast on these videos. I, for one, am looking forward to seeing the technology live.