Groundbreaking research out of the Salk Institute suggests that synapses in the hippocampus come in roughly 10 times more distinct sizes than previously thought. In turn, this means the brain's memory capacity may be 10 times larger than earlier estimates, given that synapse size is directly related to memory. Moreover, the team found that these synapses adjust in size constantly.

Every 2 to 20 minutes, synapses grow bigger or smaller, adjusting themselves for optimal neural connectivity. The findings could prove pivotal to developing artificial intelligence or computers that work more like the human brain, delivering phenomenal computing power with minimal energy input.

What are memories? Neuroscientists reckon memories are microscopic chemical changes at synapses, the connection points between neurons in the brain. These junctions connect the output ‘wire’ (an axon) from one neuron to an input ‘wire’ (a dendrite) of a second neuron. The signal travels across the synapse via neurotransmitters, chemicals such as glutamate, aspartate, D-serine, γ-aminobutyric acid (GABA) and glycine that carry information between neurons, and each neuron can have thousands of these synapses. There are roughly 100 billion neurons in the human brain, each of which connects to up to 10,000 other neurons. By some estimates, there are over 100 trillion synapses in the human brain.
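As a rough sanity check on the figures above, the short sketch below multiplies them out. It is only back-of-the-envelope arithmetic, not anything taken from the study, and it assumes for simplicity that each neuron-to-neuron connection counts as a single synapse. The variable names are illustrative.

```python
# Back-of-the-envelope check of the neuron and synapse counts quoted above.
# Simplifying assumption: one connection between two neurons = one synapse.

NEURONS = 100_000_000_000             # about 100 billion neurons
MAX_PARTNERS_PER_NEURON = 10_000      # "up to 10,000 other neurons"
SYNAPSE_ESTIMATE = 100_000_000_000_000  # "over 100 trillion synapses"

# Ceiling if every neuron reached the maximum number of partners.
upper_bound = NEURONS * MAX_PARTNERS_PER_NEURON

# Average synapses per neuron needed to reach the 100 trillion estimate.
average_needed = SYNAPSE_ESTIMATE // NEURONS

print(f"Upper bound on connections: {upper_bound:.0e}")
print(f"Average synapses per neuron for 100 trillion: {average_needed:,}")
```

An average of about a thousand synapses per neuron is enough to reach the 100 trillion estimate, which fits the statement that each neuron can have thousands of synapses.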

Though information is thought to travel through most of the neural circuits and networks of the brain, the consensus is that memories are coded and structured in the area of the brain called the hippocampus.

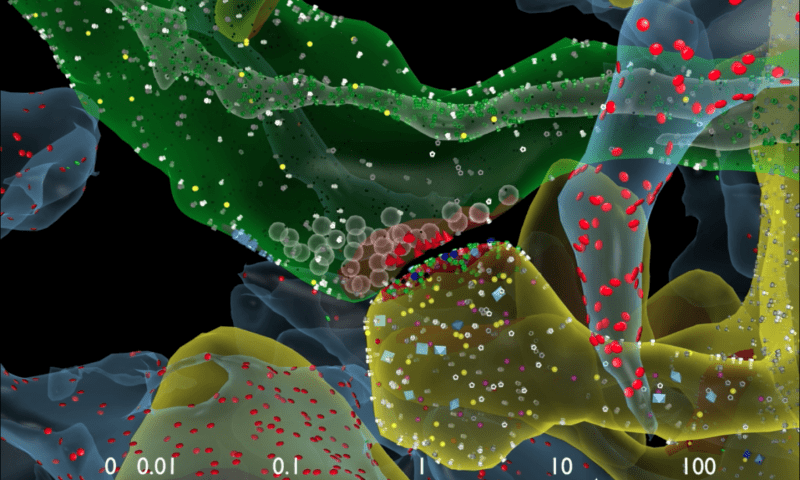

Terry Sejnowski, Salk professor, and Kristen Harris, co-senior author and professor of neuroscience at the University of Texas, Austin, reconstructed every dendrite, axon, glial process, and synapse from a volume of hippocampus the size of a single red blood cell. They were baffled by the complexity they discovered.

This 3D reconstruction of rat hippocampus tissue showed that in some cases a single axon from one neuron formed two synapses reaching out to a single dendrite of a second neuron. In other words, the first neuron was sending a duplicate signal. But was this really a duplicate? What about the connection strength? The researchers sought to answer these questions and more by measuring the size of the synapses.

Typically, neuroscientists classify synapse size as small, medium or large, with little room for anything in between.

Advanced microscopy and computational algorithms were deployed to reconstruct the rat’s brain in terms of connectivity, shapes, volumes and surface area down to a nanomolecular level.

“We were amazed to find that the difference in the sizes of the pairs of synapses were very small, on average, only about eight percent different in size. No one thought it would be such a small difference. This was a curveball from nature,” says Tom Bartol, a Salk staff scientist.

When the team plugged this seemingly negligible eight percent difference into their models, they found it mattered a lot. Synapse sizes span a factor of 60 from the smallest to the largest, and most synapses are small. Given that synapses of all sizes can differ in increments as small as eight percent, the researchers determined there could be as many as 26 different categories of synapse size, not just small, medium or large. “Our data suggests there are 10 times more discrete sizes of synapses than previously thought,” says Bartol. “This is roughly an order of magnitude of precision more than anyone has ever imagined,” says Sejnowski.

There’s more to it. Synapses don’t always work: they activate the receiving neuron only 10 to 20 percent of the time. “We had often wondered how the remarkable precision of the brain can come out of such unreliable synapses,” says Bartol.
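To get a feel for what 26 size categories means in computing terms, the sketch below converts a number of distinguishable sizes into bits, using the standard rule that N equally likely states carry log2(N) bits. This is an illustration of the arithmetic only, not the researchers' own model; the function name is hypothetical, and the assumption that every size is equally likely is a simplification.

```python
import math


def bits_per_synapse(n_sizes: int) -> float:
    """Information (in bits) one synapse could store if it can take on
    n_sizes distinguishable sizes, assuming each size is equally likely."""
    return math.log2(n_sizes)


OLD_CATEGORIES = 3   # small, medium, large
NEW_CATEGORIES = 26  # figure reported by the researchers

old_bits = bits_per_synapse(OLD_CATEGORIES)
new_bits = bits_per_synapse(NEW_CATEGORIES)
ratio = NEW_CATEGORIES / OLD_CATEGORIES

print(f"3 sizes:  {old_bits:.2f} bits per synapse")
print(f"26 sizes: {new_bits:.2f} bits per synapse")
print(f"26 is about {ratio:.1f} times as many sizes as 3")
```

Under these assumptions, 26 sizes works out to a little under 5 bits per synapse, compared with under 2 bits for three sizes, and 26 categories is close to nine times as many as three, in line with the "10 times more discrete sizes" in Bartol's quote.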

We may now have an answer. The team used their new data and a statistical model to find out how many signals it would take a pair of synapses to get to that eight percent difference. A small synapse needs about 1,500 events to change in size, which takes about 20 minutes. Bigger synapses need only a couple hundred events, or 1 to 2 minutes.
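The event counts and time windows above imply a signalling rate, which the sketch below works out. It is simple division on the article's figures, not the team's statistical model. "A couple hundred" is taken to mean 200, which is an assumption, and the function name is illustrative.

```python
def events_per_second(events: int, minutes: float) -> float:
    """Average event rate implied by a number of events over a time window."""
    return events / (minutes * 60)


# Small synapses: about 1,500 events over about 20 minutes.
small_rate = events_per_second(1500, 20)

# Bigger synapses: "a couple hundred" events (assumed to be 200)
# over 1 to 2 minutes.
large_rate_fast = events_per_second(200, 1)
large_rate_slow = events_per_second(200, 2)

print(f"Small synapses:  {small_rate:.2f} events per second")
print(f"Bigger synapses: {large_rate_slow:.2f} to {large_rate_fast:.2f} events per second")
```

On these numbers both cases come out at the order of one to a few events per second, so the much shorter time window for bigger synapses comes mainly from needing far fewer events rather than from a much faster signalling rate.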

“This means that every 2 or 20 minutes, your synapses are going up or down to the next size. The synapses are adjusting themselves according to the signals they receive,” says Bartol.

“Our prior work had hinted at the possibility that spines and axons that synapse together would be similar in size, but the reality of the precision is truly remarkable and lays the foundation for whole new ways to think about brains and computers,” says Harris. “The work resulting from this collaboration has opened a new chapter in the search for learning and memory mechanisms.” Harris adds that the findings suggest more questions to explore, for example, if similar rules apply for synapses in other regions of the brain and how those rules differ during development and as synapses change during the initial stages of learning.

“The implications of what we found are far-reaching,” adds Sejnowski. “Hidden under the apparent chaos and messiness of the brain is an underlying precision to the size and shapes of synapses that was hidden from us.”

“This is a real bombshell in the field of neuroscience,” says Terry Sejnowski, Salk professor and co-senior author of the paper, which was published in eLife. “We discovered the key to unlocking the design principle for how hippocampal neurons function with low energy but high computation power. Our new measurements of the brain’s memory capacity increase conservative estimates by a factor of 10 to at least a petabyte, in the same ballpark as the World Wide Web.”

The adult brain works on only 20 watts of continuous power. That’s less than a dim light bulb, but a conventional machine that could simulate the entire human brain would need to have an entire river’s course bent just to cool it! Now, computer scientists could exploit these findings to devise artificial neurons that are more energy-efficient. “This trick of the brain absolutely points to a way to design better computers,” says Sejnowski. “Using probabilistic transmission turns out to be as accurate and require much less energy for both computers and brains.”
