Not only are people unable to distinguish between real faces and AI-generated faces, but they also seem to trust AI-generated faces more. The findings from a relatively small study suggest that nefarious actors could be using AI to generate artificial faces to trick people.

Worse than a coin flip

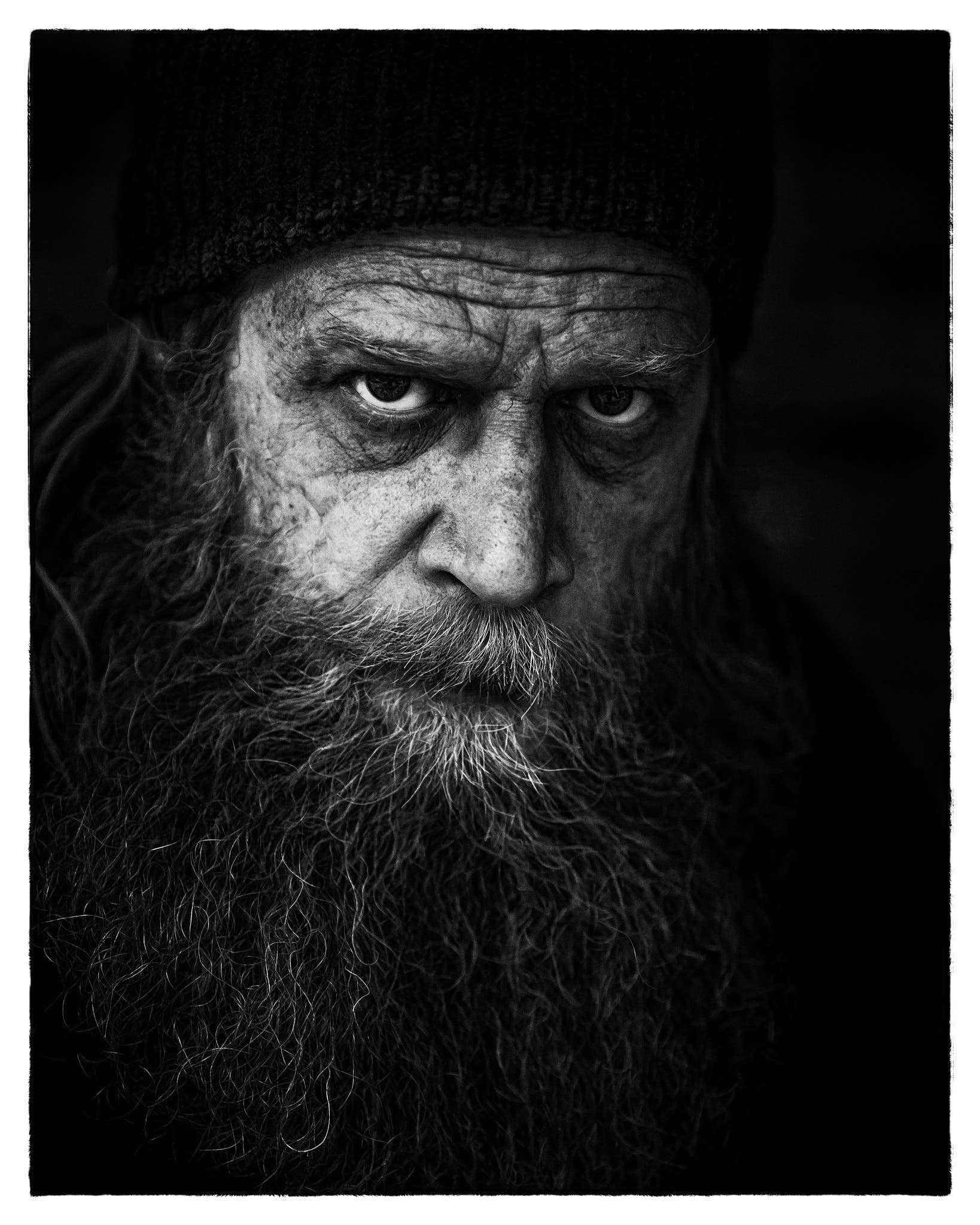

In recent years, artificial intelligence has come a long way. It is no longer used just to analyze data; it can also create text, images, and even video. Among its most intriguing applications are AI-generated models and, more broadly, the creation of realistic human faces.

Algorithms have become strikingly good at creating human faces. On one hand, this could be useful: it lets low-budget companies produce ads, for instance, essentially democratizing access to valuable resources. On the other hand, AI-synthesized faces can be used for disinformation, fraud, propaganda, and even revenge pornography.

Humans are generally pretty good at telling real from fake, but when it comes to faces, AI is winning the race. In a new study, Dr. Sophie Nightingale of Lancaster University and Professor Hany Farid of the University of California, Berkeley, ran experiments to test whether participants can distinguish state-of-the-art AI-synthesized faces from real ones, and how much trust each kind of face evokes.

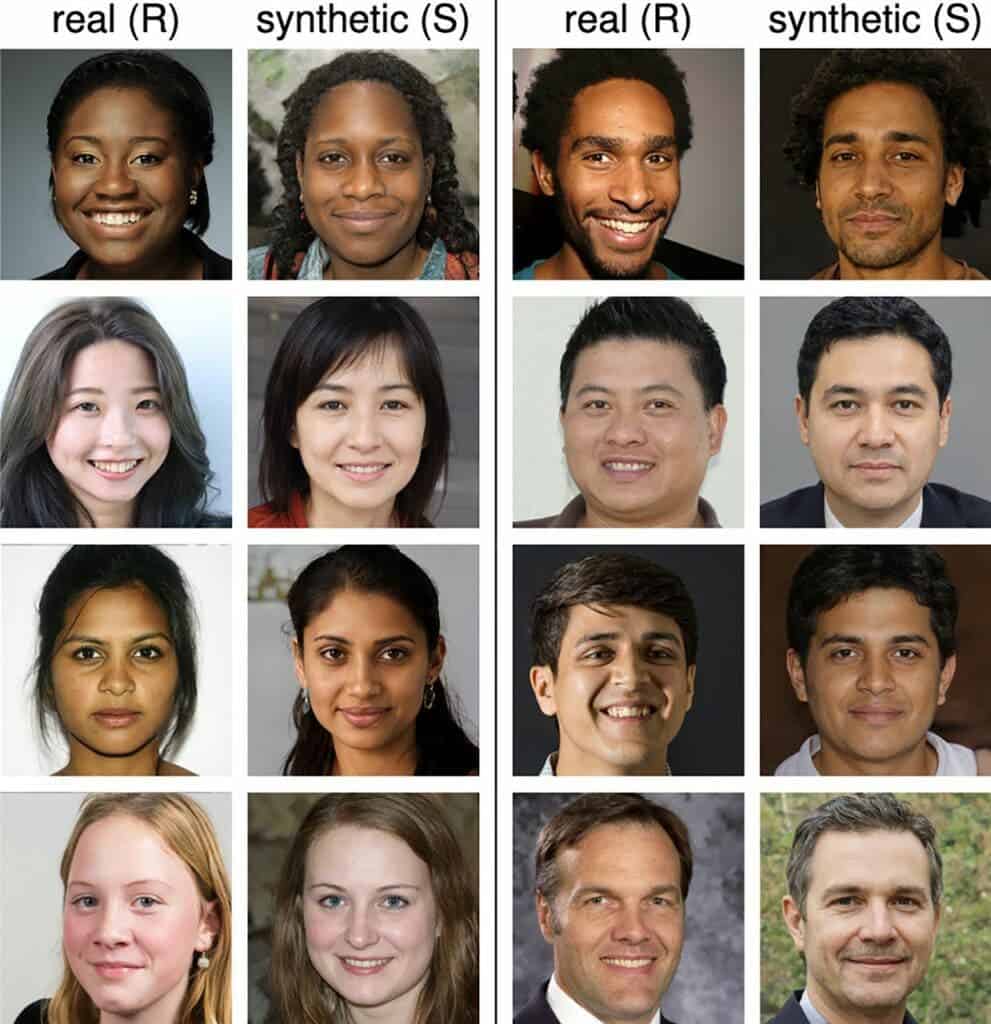

“Our evaluation of the photo realism of AI-synthesized faces indicates that synthesis engines have passed through the uncanny valley and are capable of creating faces that are indistinguishable—and more trustworthy—than real faces,” the researchers note.

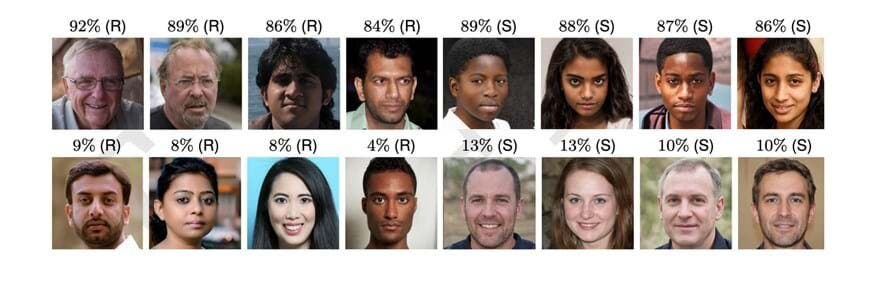

The researchers designed three experiments, recruiting volunteers through Amazon's Mechanical Turk platform. In the first, 315 participants classified 128 faces drawn from a set of 800 real and synthesized faces. Their accuracy was 48%: worse than a coin flip.

More trustworthy

In the second experiment, 219 new participants received training on how to spot synthesized faces and were given feedback on their answers. They were then asked to classify 128 faces, again drawn from the set of 800. The training improved their accuracy, but only to 59%.

In the third experiment, 223 participants rated the trustworthiness of 128 faces (from the same set of 800) on a scale from 1 to 7. Surprisingly, the synthetic faces were rated, on average, 7.7% more trustworthy than the real ones.

“Faces provide a rich source of information, with exposure of just milliseconds sufficient to make implicit inferences about individual traits such as trustworthiness. We wondered if synthetic faces activate the same judgements of trustworthiness. If not, then a perception of trustworthiness could help distinguish real from synthetic faces.”

“Perhaps most interestingly, we find that synthetically-generated faces are more trustworthy than real faces.”

The analysis also produced some other interesting takeaways. For instance, women were rated as significantly more trustworthy than men, and smiling faces were rated as more trustworthy than non-smiling ones. Black faces were rated as more trustworthy than South Asian faces, but otherwise, race did not seem to affect trustworthiness.

“A smiling face is more likely to be rated as trustworthy, but 65.5% of the real faces and 58.8% of synthetic faces are smiling, so facial expression alone cannot explain why synthetic faces are rated as more trustworthy,” the study notes.

The researchers offer a potential explanation as to why synthetic faces could be seen as more trustworthy: they tend to resemble average faces, and previous research has suggested that average faces tend to be considered more trustworthy.

Although the sample is fairly small and the results need to be replicated on a larger scale, they are concerning, especially given how fast the technology is progressing. The researchers say that if we want to protect the public from “deep fakes,” there should be guidelines on how synthesized images are created and distributed.

“Safeguards could include, for example, incorporating robust watermarks into the image- and video-synthesis networks that would provide a downstream mechanism for reliable identification. Because it is the democratization of access to this powerful technology that poses the most significant threat, we also encourage reconsideration of the often-laissez-faire approach to the public and unrestricted releasing of code for anyone to incorporate into any application.

“At this pivotal moment, and as other scientific and engineering fields have done, we encourage the graphics and vision community to develop guidelines for the creation and distribution of synthetic-media technologies that incorporate ethical guidelines for researchers, publishers, and media distributors.”

The study was published in the Proceedings of the National Academy of Sciences (PNAS).