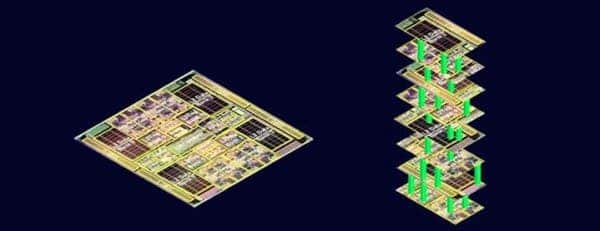

Computer chips today have billions of tiny transistors just a few nanometers wide (a human hair is roughly 100,000 nm thick), all crammed onto a small surface. This huge density allows multiple complex operations to run billions of times per second. This has been going on since the ’60s, when Gordon Moore first predicted that the number of transistors on a given silicon chip would roughly double every two years. So far, so good: Moore is still right! But for how long?

There’s only so much you can scale down a computer chip. At some point, once you cross a certain threshold, you pass from the macroworld into the spooky domain of quantum physics. Past this point, quantum fluctuations might render the chips useless. Moore might still be right, though. Or he could be wrong, but in a way that profits society: computer chips could increase in computing power at a far greater pace than Moore initially predicted (if you keep Moore’s law but replace transistor count with the equivalent computing power).

This doesn’t sound so crazy when you factor in quantum computers or, more practically, a 3D computer architecture demonstrated by a team at Stanford University, which crams both CPU and memory into the same chip. This vastly reduces the “commuting time” electrons typically face while traveling through conventional circuits, and makes the chips more efficient. Such a 3D design could make a chip 1,000 times faster than what we typically see today, according to the researchers.
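To put Moore’s doubling rule into numbers, here is a minimal Python sketch. The function name and the starting count of 1,000 transistors are made up for illustration; only the two-year doubling period comes from the prediction described above.

```python
def projected_transistors(initial_count: float, years: float,
                          doubling_period: float = 2.0) -> float:
    """Transistor count after `years`, assuming one doubling every `doubling_period` years."""
    return initial_count * 2 ** (years / doubling_period)


# Hypothetical starting point, chosen only to make the growth easy to read.
start = 1_000
for years in (2, 10, 20, 40):
    print(f"after {years:>2} years: {projected_transistors(start, years):,.0f} transistors")
```

Twenty years means ten doublings, so the count grows by a factor of 2^10 = 1,024. That is why a seemingly modest rule compounds into billions of transistors over a few decades.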

According to Max Shulaker, one of the designers of the chip, the breakthrough lies in interweaving memory (which stores data) and processors (which compute data) in the same space. That’s because, Shulaker says, the greatest barrier holding today’s processors back from higher computing speeds lies not with transistors but with memory. As a computer processes vast amounts of data, it constantly shuttles electrons back and forth between storage media (hard drives and RAM) and the processors over data highways (the wires). “You’re wasting an enormous amount of power,” said Shulaker at the “Wait, What?” technology forum hosted by DARPA. Basically, a computer sits idle 96% of the time, waiting for information to be retrieved. So, what you do is put the RAM and the processor together.
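A back-of-the-envelope sketch shows why the waiting matters so much. It uses a simple Amdahl-style model, which is an assumption of this example and not the researchers’ own calculation: a fraction of the run time is spent waiting on memory, and only that fraction gets shorter. The function and parameter names are hypothetical.

```python
def overall_speedup(wait_fraction: float, wait_speedup: float) -> float:
    """Overall speedup when only the waiting part of the run time gets faster.

    wait_fraction: share of run time spent waiting on memory (0.96 in the article).
    wait_speedup:  how many times shorter the waiting becomes (assumed value).
    """
    if not 0.0 <= wait_fraction <= 1.0:
        raise ValueError("wait_fraction must be between 0 and 1")
    if wait_speedup <= 0:
        raise ValueError("wait_speedup must be positive")
    busy_fraction = 1.0 - wait_fraction  # time spent actually computing
    return 1.0 / (busy_fraction + wait_fraction / wait_speedup)


for factor in (2, 10, 100):
    print(f"waiting cut {factor}x -> whole task about {overall_speedup(0.96, factor):.1f}x faster")
```

In this toy model, shrinking the wait is worth far more than speeding up the computation itself, because the computation is only 4% of the total. The model ignores everything else the stacked design changes, such as power use, so it is not meant to reproduce the 1,000-fold figure the researchers quote.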

Sounds simple enough, but in reality there are a number of challenges. One of them is that you can’t put the two on the same silicon wafer. During manufacturing, these wafers are heated to 1,000 degrees Celsius, far too hot for the metal parts that make up hard drives or solid state drives. The workaround was to use a novel material: carbon nanotubes (CNTs), tubular cylinders of carbon atoms that have extraordinary mechanical, electrical, thermal, optical and chemical properties. Because their electrical properties are similar to silicon’s, many believe they could someday replace silicon as the semiconductor building block of choice. Crucially for this design, carbon nanotubes can be processed at low temperatures, which sidesteps the heat problem.

The challenge with nanotubes is that they are very difficult to ‘grow’ in a predictable, regular pattern. They’re like spaghetti, and in computing even a couple of misaligned nanotubes spell disaster. Also, inherent manufacturing defects mean that while most CNTs will work as semiconductors (switching current on and off), some will work as conductors and fry your transistors. Luckily, the Stanford researchers found a nifty trick: switch off all the semiconducting CNTs, then run a powerful current through the circuit to burn out the defective conductive CNTs, just like fuses.
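The fuse trick can be illustrated with a toy simulation. This is a deliberately simplified model, not the team’s actual procedure: each nanotube is either semiconducting (conducts only when switched on) or metallic (always conducts), and anything still conducting when the large current is applied burns out. The class, the function names and the 30% defect rate are all assumptions made for the example.

```python
import random
from dataclasses import dataclass


@dataclass
class Nanotube:
    metallic: bool       # True: defective tube that always conducts
    intact: bool = True  # False once the tube has been burned out

    def conducts(self, gate_on: bool) -> bool:
        """A burned tube never conducts; a metallic one ignores the gate."""
        if not self.intact:
            return False
        return self.metallic or gate_on


def make_tubes(count: int, metallic_fraction: float, seed: int = 0) -> list[Nanotube]:
    """Build a random batch; metallic_fraction is an arbitrary illustrative value."""
    rng = random.Random(seed)
    return [Nanotube(metallic=rng.random() < metallic_fraction) for _ in range(count)]


def burn_off_metallic(tubes: list[Nanotube]) -> list[Nanotube]:
    """Switch every gate off, then apply a large current.

    Only tubes that still conduct with the gate off carry the current,
    so only those burn out, like blown fuses.
    """
    for tube in tubes:
        if tube.conducts(gate_on=False):
            tube.intact = False
    return tubes


tubes = burn_off_metallic(make_tubes(1_000, metallic_fraction=0.3))
survivors = [t for t in tubes if t.intact]
print(f"{len(survivors)} of {len(tubes)} tubes survive, all of them semiconducting")
```

The key design point is that the gate does the sorting: with the gate off, the good tubes carry no current and are untouched, so the destructive step only ever hits the defective ones.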

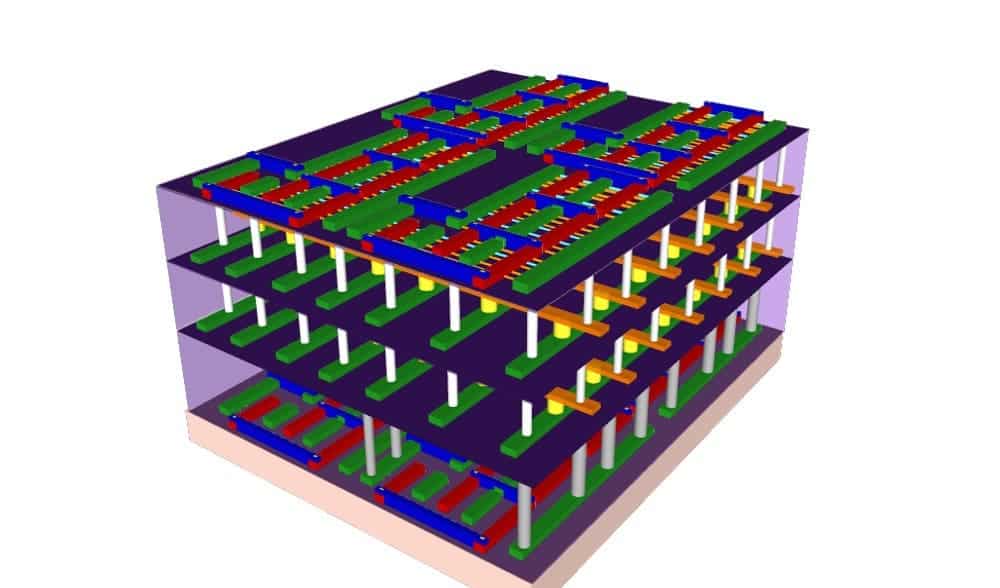

Ultimately, the Stanford team built a system that stacks memory and processing power in the same unit, with tiny wires connecting the two. According to the team, the architecture can deliver computing speeds up to 1,000 times faster than would otherwise be possible. To demonstrate, they used this architecture to devise sensors that detect anything from infrared light to various chemicals.

So, when will we see stacked designs like these in our computers or smartphones? Nobody knows for sure, largely because of one issue: cooling. We’ve yet to see a solution that works well for a 3D stacked CPU-memory unit.
