Eye contact plays an important role in human interaction. However, a recent study by psychologists at UC Santa Barbara found that the best place to look to get a feel for what a person is up to is just below the eyes. What's more, most of us appear to be already hard-wired to fix our initial gaze on this point, albeit unconsciously and for an extremely short period.

“It’s pretty fast, it’s effortless, we’re not really aware of what we’re doing,” said Miguel Eckstein, professor of psychology in the Department of Psychological & Brain Sciences.

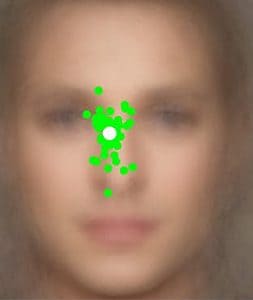

Eckstein and Matt Peterson used high-speed eye-tracking cameras, more than 100 photos of faces, and a sophisticated algorithm to pinpoint the first place participants looked when they fixed their gaze on a face in order to judge the person’s identity, gender, and emotional state.

“For the majority of people, the first place we look at is somewhere in the middle, just below the eyes,” Eckstein said.

The whole initial, involuntary glance lasts a mere 250 milliseconds. Yet despite its brevity and the relatively featureless point of focus, during these crucial first moments the brain performs remarkably complex computations: it plans the eye movement in advance to gather the most useful information possible, and it assesses whether it’s time to run, fight, or approach.

“When you look at a scene, or at a person’s face, you’re not just using information right in front of you,” said Peterson.

The eyes may be the windows to the soul, but what lies beneath them?

You might have noticed that whenever you look at something, anything, the center of your field of view appears sharper and clearer than its surroundings, which offer less spatial detail. This high-resolution central region is picked up by the fovea, a small depression in the retina.

When you sit next to someone at a conversational distance, you can take in the whole face and catch even the most subtle gestures, and detailed spatial information about features like the nose, mouth, and eyes is readily available. Despite this, when study participants were asked to judge a person’s identity, gender, and emotions while looking only at the forehead or the mouth, for instance, they did not perform as well as when they looked close to the eyes.

These empirical data were compared with the output of a sophisticated computer algorithm that mimics how spatial detail varies across the human visual field and integrates all the available information to make decisions, allowing the researchers to predict the best place on a face to look for each of these perceptual tasks. Both the computer model and the human data point to the same conclusion: according to the scientists, just below the eyes is the optimal place to look, because it allows one to read information from as many features of the face as possible.

“What the visual system is adept at doing is taking all those pieces of information from your face and combining them in a statistical manner to make a judgment about whatever task you’re doing,” said Eckstein.

This doesn’t seem to be a general rule for all humans, though. Previous research, say the scientists behind the paper, which was published in the journal PNAS, has found that East Asians, for instance, tend to look lower on the face when identifying a person. Next, Peterson and Eckstein plan to refine their algorithm to provide insight into conditions associated with unusual gaze patterns, such as schizophrenia and autism, as well as prosopagnosia, an inability to recognize people by their faces.

Source: UCSB