Researchers from the University of Washington have developed a software program that helps people who are blind or have low vision (and sighted users too) perform yoga poses accurately. The software watches the user’s movements and gives simple spoken feedback on what to change to complete a pose.

“My hope for this technology is for people who are blind or low-vision to be able to try it out, and help give a basic understanding of yoga in a more comfortable setting,” said project lead Kyle Rector, a UW doctoral student in computer science and engineering.

The project, called Eyes-Free Yoga, uses Microsoft Kinect software to track body movements and offer auditory feedback in real time for six of the most popular yoga poses. As development continues, more poses are expected to become available, and the program could also cover other relatively simple activities besides yoga. Kinect is a motion-sensing input device by Microsoft for the Xbox 360 video game console and Windows PCs.

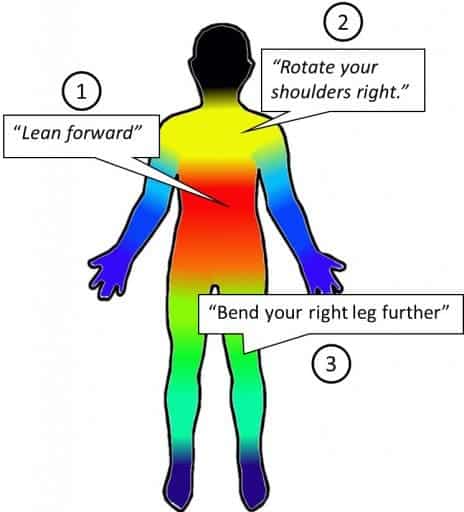

The software first uses Kinect to read the user’s body angles, then gives verbal feedback on how to adjust the arms, legs, neck or back to complete the pose. For example, the program might say: “Rotate your shoulders left,” or “Lean sideways toward your left.”
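To make the idea of angle-driven feedback concrete, here is a minimal Python sketch of how a measured body angle could be turned into a spoken hint. It is not the project's actual code: the `AngleRule` class, the `pick_feedback` function, the 90-degree target, the 10-degree tolerance and the "toward your right" phrase are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AngleRule:
    """Hypothetical rule: a target angle plus the hint for each direction of error."""
    target_deg: float      # assumed ideal angle for the pose
    tolerance_deg: float   # assumed acceptable deviation
    if_below: str          # hint when the measured angle is too small
    if_above: str          # hint when the measured angle is too large


def pick_feedback(rule: AngleRule, measured_deg: float) -> Optional[str]:
    """Return a hint to speak, or None if the angle is within tolerance."""
    error = measured_deg - rule.target_deg
    if abs(error) <= rule.tolerance_deg:
        return None
    return rule.if_below if error < 0 else rule.if_above


# Illustrative rule only; the numbers and the second phrase are assumptions.
lean_rule = AngleRule(
    target_deg=90.0,
    tolerance_deg=10.0,
    if_below="Lean sideways toward your left.",
    if_above="Lean sideways toward your right.",
)

hint = pick_feedback(lean_rule, 70.0)
if hint is not None:
    print(hint)
```

In a real system the returned string would presumably select a pre-recorded audio clip rather than be printed.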

The result is essentially a yoga “exergame” (a video game used for exercise) that lets people with visual impairments interact with a simulated yoga instructor and, ultimately, perform the poses by following its verbal instructions.

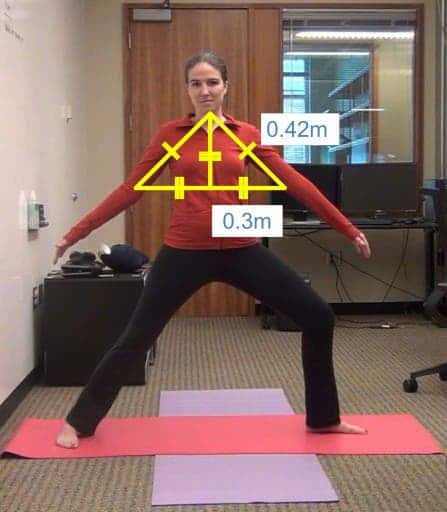

To work out what the user is doing wrong, the program uses simple geometry, relying heavily on the law of cosines to calculate the angles the body forms during a pose. It reads those angles using cameras and skeletal-tracking technology, then plays pre-recorded instructions to guide the user toward the desired position.
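As a rough illustration of the geometry involved, the law of cosines gives the angle at a joint from the three distances between it and its two neighbouring joints: for points A, B and C, cos(B) = (AB² + BC² − AC²) / (2 · AB · BC). The Python sketch below applies that formula to three 3D points. The function name `joint_angle` and the sample coordinates are assumptions for the example, not taken from the Eyes-Free Yoga source or the Kinect SDK.

```python
import math


def joint_angle(a, b, c):
    """Angle in degrees at point b, formed by segments b-a and b-c.

    Uses the law of cosines on the triangle a-b-c. Each point is an
    (x, y, z) tuple, such as a skeletal-tracking joint position.
    """
    ab = math.dist(a, b)
    bc = math.dist(b, c)
    ac = math.dist(a, c)
    if ab == 0 or bc == 0:
        raise ValueError("joint coincides with a neighbouring point")
    cos_b = (ab**2 + bc**2 - ac**2) / (2 * ab * bc)
    # Clamp to [-1, 1] to guard against floating-point rounding.
    cos_b = max(-1.0, min(1.0, cos_b))
    return math.degrees(math.acos(cos_b))


# Hypothetical shoulder, elbow and wrist positions (metres).
shoulder = (0.0, 1.4, 2.0)
elbow = (0.3, 1.4, 2.0)
wrist = (0.3, 1.7, 2.0)
print(round(joint_angle(shoulder, elbow, wrist), 1))
```

With these sample points the upper arm and forearm are perpendicular, so the elbow angle comes out at about 90 degrees; a fully extended arm would give about 180.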

Rector and collaborators want to make this technology freely available online, so that users can download the program, plug in their Kinect and start doing yoga.

Scientific reference (open access):

Kyle Rector, Cynthia L. Bennett, Julie A. Kientz, Eyes-Free Yoga: An Exergame Using Depth Cameras for Blind & Low Vision Exercise, Proceedings of the 15th International ACM SIGACCESS Conference on Computers and Accessibility, 2013, DOI: 10.1145/2513383.2513392