The latest in brain-computer interface technology was recently demonstrated when a woman with quadriplegia controlled a sophisticated robotic hand with ten degrees of freedom using only her thoughts. Through the interface, she instructed the robotic hand to move up, down or sideways, pick up small or big objects and even squeeze them. In just a couple of years, brain-computer interfaces have shown they have the potential to transform the world. Decades from now, "handicapped" might just be another word that describes a cybernetic organism and not a disability.

The body may be numb, but the mind isn’t

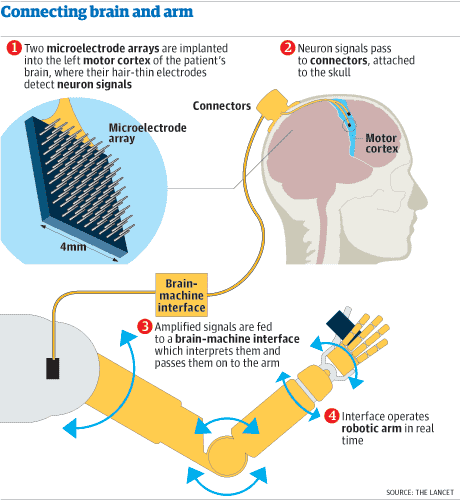

In 2012, ZME Science first reported the story of Jan Scheuermann, then 53, a woman who is paralyzed from the neck down, but who was involved in one of the most revolutionary technological achievements of the 21st century. A team of doctors and engineers at the University of Pittsburgh implanted an electrode grid with 96 contacts onto her motor cortex – the area of the brain responsible for planning and controlling movement – in specific regions involved in coordinating her right arm and hand movement.

Each electrode point picked up signals from an individual neuron, which were then relayed to a computer to identify the firing patterns associated with particular observed or imagined movements, such as raising or lowering the arm, or turning the wrist. By watching animations of a hand moving and imagining herself doing the same, Jan calibrated the interface. Within a week after surgery (for now, the electrodes have to be surgically implanted, though this might change in the future), Jan could reach in and out, left and right, and up and down with the arm to achieve 3D control. Three months later she could also flex the wrist back and forth, move it from side to side and rotate it clockwise and counter-clockwise, as well as grip objects. This was 7D control. Now, researchers have upgraded the interface for full 10D control.
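For readers curious how firing patterns can be turned into movement, here is a minimal Python sketch of one classic decoding idea, the population vector. It assumes each recorded neuron fires fastest for one "preferred" direction, and it sums those directions weighted by how far each neuron's firing rate sits above or below its resting rate. This is an illustration only and not necessarily the algorithm the Pittsburgh team used; the function name, the four toy neurons and all the numbers are made up for the example.

```python
import math


def population_vector(rates, baselines, preferred_dirs):
    """Decode a 3D movement direction from neuron firing rates.

    Hypothetical illustration: each neuron votes for its preferred
    direction, weighted by (firing rate - baseline rate).
    Returns a unit vector, or (0, 0, 0) if the votes cancel out.
    """
    decoded = [0.0, 0.0, 0.0]
    for rate, base, direction in zip(rates, baselines, preferred_dirs):
        weight = rate - base  # above baseline pushes toward the preferred direction
        for axis in range(3):
            decoded[axis] += weight * direction[axis]

    norm = math.sqrt(sum(c * c for c in decoded))
    if norm == 0.0:
        return (0.0, 0.0, 0.0)
    return tuple(c / norm for c in decoded)


# Toy data (invented): four neurons tuned to right, left, forward and up.
preferred_dirs = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, 0, 1)]
baselines = [10.0, 10.0, 10.0, 10.0]  # resting firing rates in spikes/s
rates = [20.0, 5.0, 10.0, 10.0]       # rates while imagining a move to the right

direction = population_vector(rates, baselines, preferred_dirs)
print(direction)
```

In this toy case the "right" neuron speeds up and the "left" neuron slows down, so both votes point the same way and the decoded direction is along the first axis. In practice, the preferred directions and baselines would come from a calibration session like the one described above, and there would be far more neurons and more than three controlled dimensions.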

“Our project has shown that we can interpret signals from neurons with a simple computer algorithm to generate sophisticated, fluid movements that allow the user to interact with the environment,” said senior investigator Jennifer Collinger, Ph.D., assistant professor, Department of Physical Medicine and Rehabilitation (PM&R), Pitt School of Medicine, and research scientist for the VA Pittsburgh Healthcare System.

The added arm and hand control came by replacing the initial pincer grip with four hand shape patterns: finger abduction, in which the fingers are spread out; scoop, in which the last fingers curl in; thumb opposition, in which the thumb moves outward from the palm; and a pinch of the thumb, index and middle fingers. The findings were reported in the Journal of Neural Engineering.

“Jan used the robot arm to grasp more easily when objects had been displayed during the preceding calibration, which was interesting,” said co-investigator Andrew Schwartz, Ph.D., professor of Neurobiology, Pitt School of Medicine. “Overall, our results indicate that highly coordinated, natural movement can be restored to people whose arms and hands are paralyzed.”

You have every right to be amazed at this point. What you’re seeing and learning about is no sci-fi dream – it has all become reality. The only thing that can surprise us now is whatever comes next.

Story via KurzweilAI