On display at one of the featured stands at this year’s Royal Society Summer Science Exhibition is a pair of special glasses developed by scientists at Oxford University. The glasses combine technology already developed by gaming and smartphone manufacturers, and help people with next to no vision orient themselves.

‘We want to be able to enhance vision in those who’ve lost it or who have little left or almost none,’ explains Dr Stephen Hicks of the Department of Clinical Neurology at Oxford University. ‘The glasses should allow people to be more independent – finding their own directions and signposts, and spotting warning signals,’ he says.

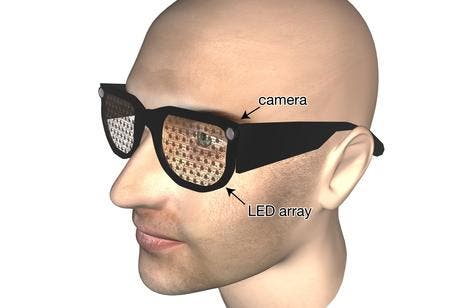

By using common components found in, say, a touch-screen phone or a portable gaming device, the Oxford researchers combined video cameras, position detectors, face recognition and tracking software, and depth sensors to make the glasses. Because the tech is widely available, the glasses are cheap too. Making them esthetically pleasing and unobtrusive was also one of the team’s design priorities, since so much social interaction relies on eye contact. Imagine a 65-year-old with a metal box on his head asking you for directions; it would be awkward. Scientists are trying to make lives easier, not more complicated.

These glasses won’t make people see more sharply; there are other types of glasses for that. They are intended for people with severe vision loss, who are blind or nearly so (able only to distinguish vague shapes and shadows around them).

‘The types of poor vision we are talking about are where you might be able to see your own hand moving in front of you, but you can’t define the fingers,’ explains Stephen.

How they work

A tiny camera placed in the corner of the glasses captures a live stream of the wearer’s surroundings, while a display of tiny lights embedded in the see-through lenses feeds back extra information about objects, people or obstacles in view. The feedback is driven by a smartphone-style computer in the wearer’s pocket, which interprets the data from the camera and outputs signals to the LED lights.

‘The glasses must look discreet, allow eye contact between people and present a simplified image to people with poor vision, to help them maintain independence in life,’ says Stephen.

Different colors and brightness levels would each mean something different to the wearer, such as how close a person is or how far away an object of interest is located. With character recognition, it’s possible to add a “text read” feature to the glasses, so reading the newspaper could once again become a possibility. The same goes for a bar code reader, so people would be able to shop and tell what kind of product is on the shelf and how much it costs. Many other ideas could be implemented just as easily. Of course, the learning curve might be a bit steep, considering the numerous signals the wearer needs to learn to interpret, but with practice and patience a person could taste independence once again.
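To make the idea of brightness carrying distance information more concrete, here is a minimal Python sketch. It is not the Oxford team’s software: the function names, the 0.5 m and 4 m thresholds, the 0 to 255 brightness scale and the “nearer means brighter” rule are all assumptions chosen for illustration. It shrinks a depth map (distances in metres) down to a small grid of LEDs and lights each one according to the nearest thing in its patch of the view.

```python
def brightness_for_distance(distance_m, near_m=0.5, far_m=4.0):
    """Map a distance in metres to an LED brightness from 0 to 255.

    Assumption for illustration: anything at or closer than near_m is
    fully bright, anything at or beyond far_m is dark, and brightness
    falls off linearly in between.
    """
    if distance_m <= near_m:
        return 255
    if distance_m >= far_m:
        return 0
    fraction = (far_m - distance_m) / (far_m - near_m)
    return int(255 * fraction)


def depth_to_led_grid(depth_map, rows, cols, near_m=0.5, far_m=4.0):
    """Reduce a depth map (list of rows, in metres) to a rows x cols LED grid.

    Each LED covers one rectangular patch of the depth map and is lit
    according to the nearest point inside that patch.
    """
    height = len(depth_map)
    width = len(depth_map[0])
    grid = []
    for r in range(rows):
        y0 = r * height // rows
        y1 = max((r + 1) * height // rows, y0 + 1)
        led_row = []
        for c in range(cols):
            x0 = c * width // cols
            x1 = max((c + 1) * width // cols, x0 + 1)
            nearest = min(
                depth_map[y][x] for y in range(y0, y1) for x in range(x0, x1)
            )
            led_row.append(brightness_for_distance(nearest, near_m, far_m))
        grid.append(led_row)
    return grid


if __name__ == "__main__":
    # A made-up 2 x 2 depth reading: something very close at bottom right.
    sample = [[1.0, 4.0],
              [4.0, 0.4]]
    print(depth_to_led_grid(sample, rows=1, cols=2))
```

Taking the nearest point in each patch, rather than the average, is a deliberate choice in this sketch: a thin obstacle such as a lamp post should still light up its LED even if most of the patch is empty space.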

I mentioned before that these would be cheap; well, considering the tech employed and their capabilities, an estimated cost of £500 sounds affordable, as opposed to a guide dog, which costs between £25,000 and £30,000 to train.

The project is still in its infancy, but viable prototypes are already on display at the Royal Society Summer Science Exhibition, where people are invited to try them on and see how well they can navigate with them. The group has also received funding from the National Institute for Health Research to do a year-long feasibility study, and plans to try out early systems with a few people in their own homes later this year.