Artificial Intelligence (AI) is often hyped as the next big thing. Often that’s an exaggeration, but sometimes something truly disruptive and impressive comes along, as is the case with the GPT-3 engine and the newest product based on it, ChatGPT. If you’re out of the loop on what it is and what it can do, here’s what you need to know.

It’s enormously popular

ChatGPT is a product created by OpenAI, an organization formed by the likes of Elon Musk and Peter Thiel. The original founders collectively pledged $1 billion to start the organization; these days, OpenAI is valued at over $20 billion, and it is also behind other projects, like the image-generating AI DALL-E (which we’ve covered here).

In June 2020, OpenAI announced GPT-3, a language model trained on hundreds of billions of words from the Internet. ChatGPT is largely based on that architecture, though OpenAI says it was fine-tuned from a model in the “GPT-3.5” series. ChatGPT, which is essentially a chatbot, has surged in popularity, reaching 1 million users in only 5 days. For comparison, it took Netflix 41 months and Instagram 2.5 months to reach that benchmark.

Granted, the chatbot will likely never reach the popularity that Instagram enjoys because it’s a more niche product. But it’s not just the number of users that matters; it’s who those users are. People from fields ranging from journalism to programming to entrepreneurship have been fascinated by the new chatbot, suggesting that its days of glory are just starting.

It’s so popular because it’s very good

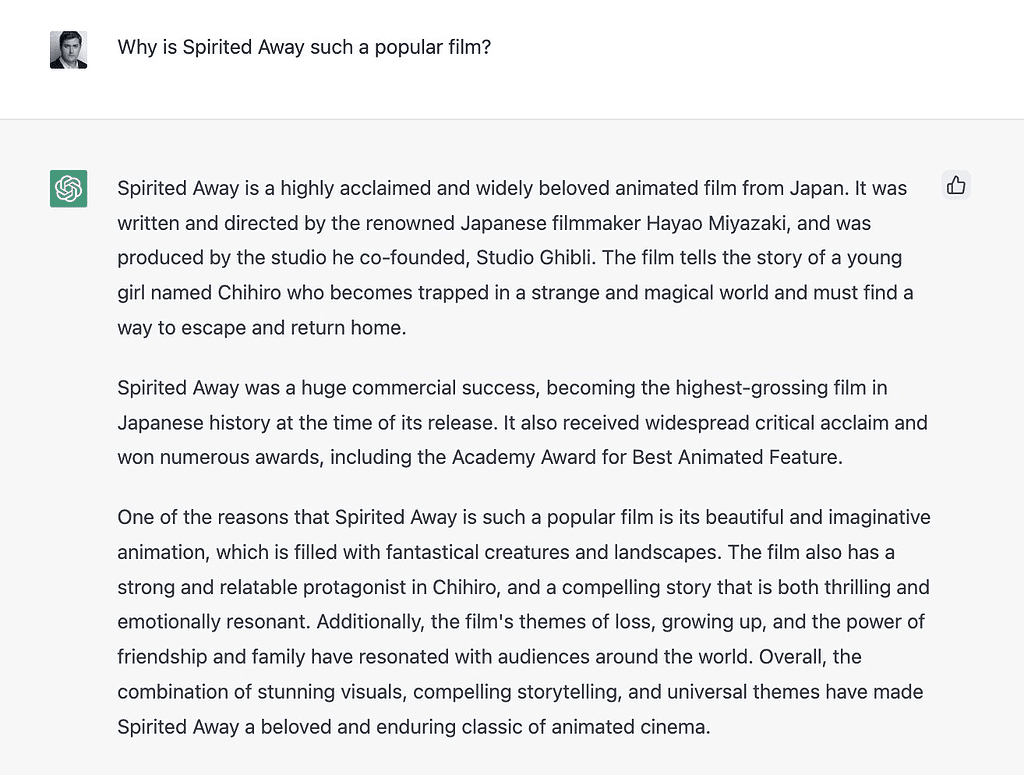

AI is indeed sometimes overhyped, but this is probably not one of those times. Simply put, ChatGPT can parse enormous amounts of information and, oftentimes, synthesize it and answer questions eloquently.

Some have even speculated that it could be a Google-killer as it’s just so good at finding information and putting it together.

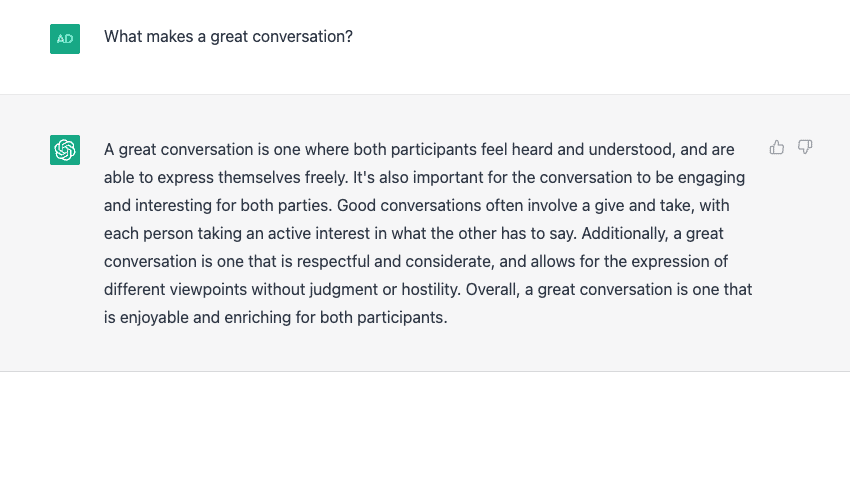

As it’s a chatbot, it’s also good at answering questions. Again, it’s just so surprisingly reasonable and on point that it almost feels hard to believe it’s an algorithm.

Part of the change from the general GPT-3 bot to ChatGPT seems to be that ChatGPT is less adventurous and less prone to making errors, while being surprisingly accurate even when dealing with complex questions.

At the very least, it’s by far the best chatbot we’ve seen so far, but it could be far more than that.

It’s not ‘intelligent’

Still, ChatGPT is not really intelligent.

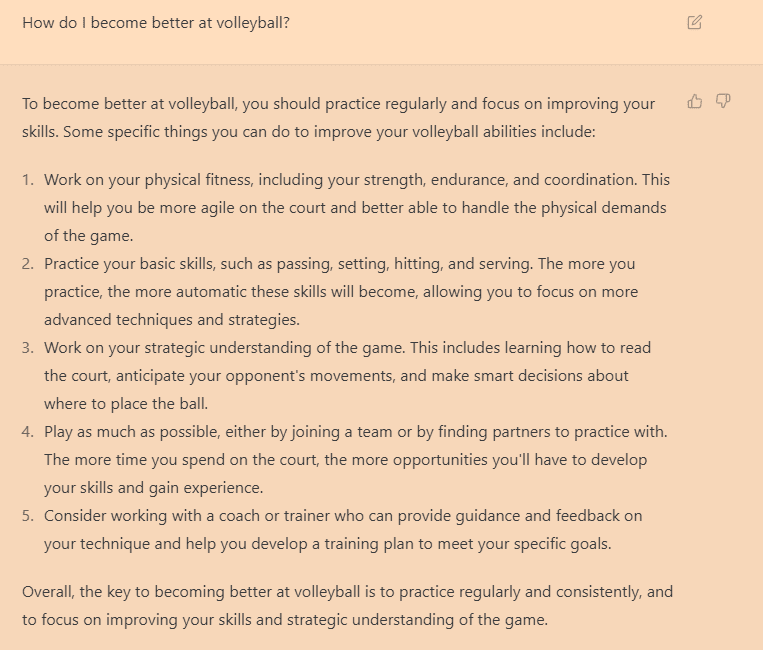

Sure, the name says Artificial Intelligence, but names can be deceiving. If you use ChatGPT long enough, you start to see some patterns. For starters, the answers it gives are usually generic and lack depth. It can be persuasive and coherent, eloquent and even creative (or rather, apparently creative) at times, but it is still a tool meant to complement, rather than replace, human intelligence and creativity.

Ask it to give you some formatted information, like a cover letter or a report, and it will come up with a similar style of valid, but uninspired patterns. Ask it for general knowledge about anything from poetry to programming code and it will give you something that is likely useful.

But it does not produce accurate, insightful arguments. It produces text material that corresponds to what the request is asking, which, under some circumstances, can contain truth. But it won’t replace humans anytime soon, and it’s most definitely not aware of itself (even though it can do a good job of pretending that it is). Ultimately, it’s a tool, and it’s a recently-launched demo tool at that. How can it be best used? We’re probably still just figuring it out.

It’s free (for now) and easy to use

Most AI tools are difficult to use if you don’t have pre-existing knowledge. But ChatGPT is exactly what it says — it’s pretty much a chatbot. You can access it here by signing up, and if it’s not down because of overuse, you can start typing things into it and see how it works. It’s really, really easy to use. Using it well, however, may prove a bit more challenging.

Running the engine definitely comes at a cost, however, and while OpenAI has released it for everyone to use for free for now, this is likely to change at some point.

It can be used for anything from diet plans to writing code

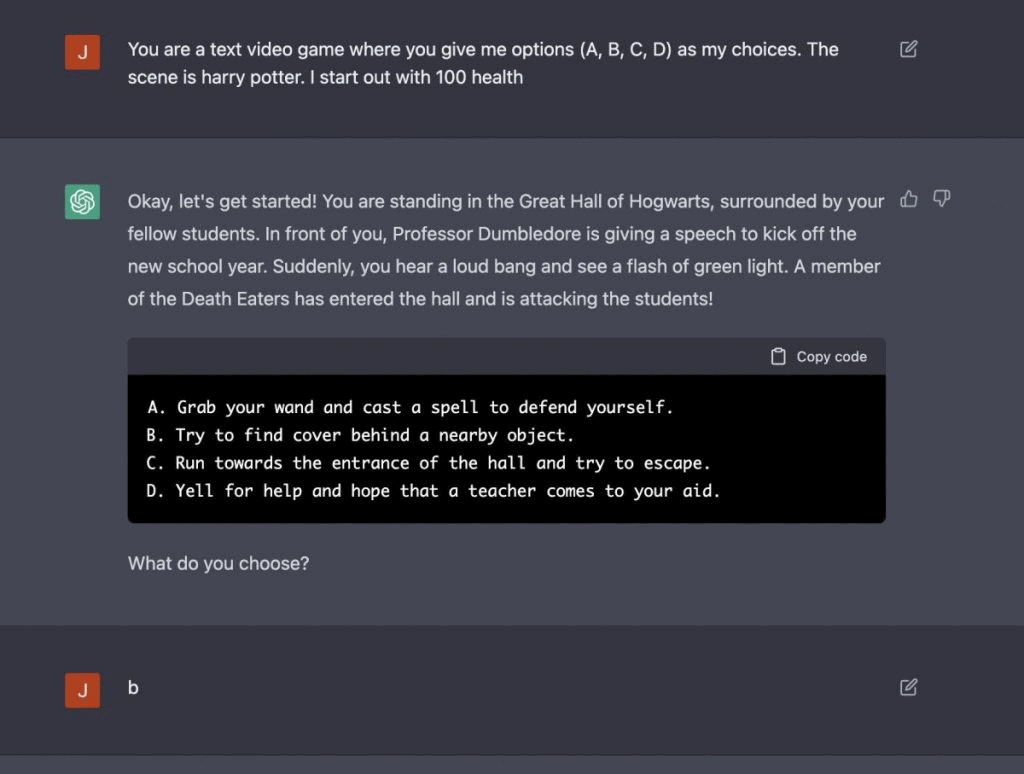

Already, an 11-year-old has come up with a ChatGPT game that generates scenarios and has people play through them with prompts. Even with minimal input, it can generate new worlds and settings, helping in this case with game design. But the same approach could be used by writers and designers from multiple fields.

Other users asked it to come up with other helpful things, like a meal plan. Given the desired nutritional objectives and dietary constraints, ChatGPT not only came up with a meal plan but also shared the necessary grocery list.

Meanwhile, others are using it as an ambitious personal assistant to automate repetitive tasks like writing emails, prompts, or proposals. You still have to edit and check the output, but it seems to be working, especially once you get the hang of how it operates.

But perhaps its most intriguing ability is that of writing code. Not only can it write code in various programming languages but it can even help debug code. In other words, it has the ability to completely revolutionize both how we write and debug code and how we learn about writing code.

It seems to be better than GPT-3

GPT-3 has been out for a couple of years already. It’s not exactly transparent and clear what the differences are between the original version and the one the chat uses, but a core change seems to be the algorithm’s reluctance to spew out random, non-factual things.

GPT-3 would often go on to make up a lot of things to try and satisfy the prompt. Meanwhile, ChatGPT is more likely to give you a version of “I don’t know” and stay truer to the facts.

You still have to double-check it, though

However, you have to keep in mind that this AI offers text: not reasoning coated in text, but text. The text can look logical and can seem human, but if you dig deep enough, it is not. This doesn’t mean it’s not useful or that it’s not already a disruptive tool, but rather that it’s one we should use carefully and with critical reasoning.

We’re still in the early days, but things can change very quickly. We’ve seen it with image-generating AIs, and now we’re seeing it with text-generating AIs. All the threats are still there: the implicit biases in the data, the non-factual responses, the lack of creativity. As with any technology, it’s important to explore the potential advantages and disadvantages, preferably before it becomes widespread. While text-generating AI has the potential to revolutionize the way we communicate and create content, it is also crucial to approach it with caution and consideration for its potential impacts.