It’s clear that our political ideologies warp our ability to think clearly, but we’re only starting to understand how deep the problem really goes. According to a new paper, our political beliefs can undermine even our most basic reasoning skills, including math.

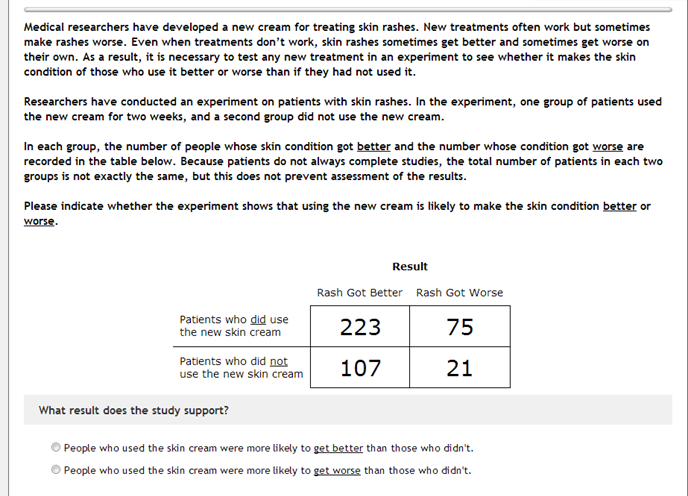

The study, led by Yale professor Dan Kahan and his colleagues, used a rather ingenious technique. They asked 1,111 participants about their political views and also measured their numeracy, that is, their skill with numbers. The participants were then asked to solve a problem that is fairly tricky for the average person, based on data from a fictitious study. Here’s the trick: for some participants, the data concerned a “new cream for treating skin rashes”, while for others it concerned the effectiveness of “a law banning private citizens from carrying concealed handguns in public.” The numbers were identical in both versions; only the subject of the problem changed.
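To illustrate why this kind of problem trips people up, here is a minimal sketch. It assumes the task is a covariance-detection problem, in which respondents see counts of people who improved or got worse with and without a treatment and must decide whether the treatment worked. The counts below are made up for illustration and are not the study’s data; the function names are likewise hypothetical. The common mistake is to compare raw counts of people who improved; the correct approach is to compare the improvement *rates* of the two groups.

```python
from fractions import Fraction


def improvement_rate(improved: int, worsened: int) -> Fraction:
    """Share of a group whose condition improved."""
    return Fraction(improved, improved + worsened)


def treatment_helped(treated: tuple[int, int], untreated: tuple[int, int]) -> bool:
    """Correct reasoning: compare improvement rates between the two groups."""
    return improvement_rate(*treated) > improvement_rate(*untreated)


def naive_answer(treated: tuple[int, int], untreated: tuple[int, int]) -> bool:
    """Common mistake: compare only how many people improved in each group."""
    return treated[0] > untreated[0]


# Hypothetical counts, written as (improved, worsened). Not the study's data.
treated = (200, 80)
untreated = (100, 20)

print(f"Treated group improved:   {float(improvement_rate(*treated)):.1%}")
print(f"Untreated group improved: {float(improvement_rate(*untreated)):.1%}")
print("Naive answer (raw counts): treatment helped =", naive_answer(treated, untreated))
print("Correct answer (rates):    treatment helped =", treatment_helped(treated, untreated))
```

With these numbers, twice as many treated people improved, yet a smaller share of them did, so the treatment actually appears to make things worse. Swapping “skin cream” for “gun ban” changes nothing in this arithmetic, which is what makes the study’s design so revealing.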

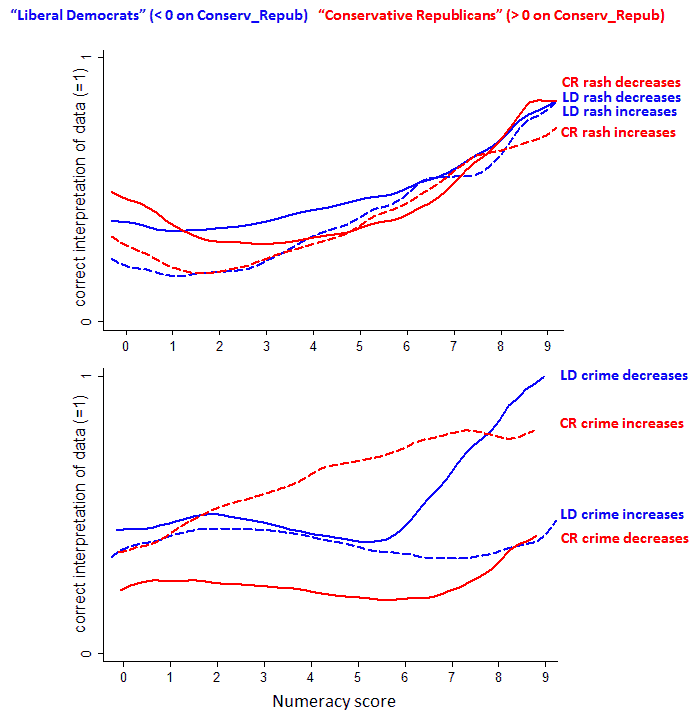

The results were quite shocking: respondents’ answers to what was essentially the same problem varied greatly, simply because a skin cream was swapped for a gun ban. Perhaps even more striking, highly numerate liberals and conservatives were even more likely than their less numerate counterparts to make mathematical mistakes that matched their personal beliefs!

For the ‘control’ (skin cream) problem, the results tracked mathematical skill directly: the more numerate the participants, the more likely they were to answer correctly. But when the same problem was framed as a politically charged issue, people performed quite differently. Strong political trends emerged, and people were swayed by their beliefs: liberal Democrats leaned toward answers supporting the gun ban, while Republicans leaned toward answers opposing it, regardless of what the numbers actually showed!

For study leader Kahan, this is strong evidence against the information deficit model: the idea that if more information were available, people would reach a broader consensus on some of the most contentious scientific issues, such as climate change, evolution, and vaccines. The results suggest that people’s reasoning is strongly skewed toward their personal beliefs, even in the face of rock-solid arguments.

“If the wrong answer is contrary to their ideological positions, we hypothesize that that is going to create the incentive to scrutinize that information and figure out another way to understand it,” says Kahan.

Journal reference