You may have heard about ‘deepfakes’ before. These are elaborate hoaxes generated by artificial intelligence, most often in video form. In these highly realistic forgeries, an actor’s facial expressions and lip movements are superimposed onto the face of the person being impersonated. This isn’t some comical Photoshop job. The voice is also imitated, producing lifelike apparitions that are both impressive and terrifying.

Obvious targets include public figures such as Mark Zuckerberg, Barack Obama, and Vladimir Putin, who have been turned into realistic puppets. Many other deepfakes are pornographic, mapping the faces of female celebrities onto porn stars. A staggering 96% of deepfakes posted online up to September 2019 were fake porn, showing how readily the technology can be weaponized against women.

Besides deepfake pictures, videos, and audio, scientists at the University of Washington now warn that maps can also be faked with this technology by manipulating satellite imagery.

Deepfakes: now a geography problem

Various agents, whether state-sponsored or not, have been forging satellite imagery for years, so this isn’t news. What’s more, mapmakers sometimes add inaccuracies on purpose as a way to expose copyright infringement. These include fake streets, churches, or towns: if someone copies the map, the owner can tell, because the copier couldn’t possibly have surveyed features that don’t exist.

Sometimes cartographers have fun with these spoofs and even challenge users to find them, turning the map into a sort of Easter egg hunt. For instance, an official Michigan Department of Transportation highway map from the 1970s included the fictional towns of “Beatosu” and “Goblu,” a play on “Beat OSU” and “Go Blue,” because the then-head of the department wanted to give a shoutout to his alma mater, the University of Michigan.

But deepfake maps are anything but funny. Bo Zhao, an assistant professor of geography at the University of Washington and lead author of a recent study exposing the dangers of AI-forged maps, says that misleading satellite imagery of this kind could be used to do harm in a number of ways. The concern grows if deepfakes are ever applied to WorldView-3 satellite imagery, whose resolution is high enough to make out individual people.

In fact, in 2019 the U.S. military warned about this very prospect through its National Geospatial-Intelligence Agency, the organization charged with supplying maps and analyzing satellite images for the U.S. Department of Defense. For instance, military planning software could be misled by fake data that places a tactically important structure, such as a bridge, in the wrong location.

“The techniques are already there. We’re just trying to expose the possibility of using the same techniques, and of the need to develop a coping strategy for it,” Zhao said.

For the new study, Zhao and colleagues fed maps and satellite images from three cities (Tacoma, Seattle, and Beijing) to a deep learning network not all that different from those used to create deepfakes of people. The technique is known as a generative adversarial network, or GAN.

Once the network was trained, it was instructed to generate new satellite images from scratch, depicting a fictitious version of one city that draws on the characteristics of the other two.
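For readers wondering what makes the network “adversarial,” the standard GAN objective introduced by Goodfellow and colleagues in 2014 is shown below as general background. It pits a generator G, which produces fake images, against a discriminator D, which tries to tell them from real ones. The study may well have used a variant (image-to-image GANs, for instance, condition the generator on an input such as a base map rather than on pure noise), so treat this as a sketch of the general idea, not the authors’ exact setup.

```latex
\min_G \max_D \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\!\left[\log D(x)\right]
  + \mathbb{E}_{z \sim p_z}\!\left[\log\left(1 - D(G(z))\right)\right]
```

Here x is a real image (in this context, a genuine satellite tile), z is the generator’s random input, and D(x) is the probability the discriminator assigns to x being real. The discriminator pushes V up by scoring real tiles near 1 and generated tiles near 0; the generator pushes V down by making D(G(z)) close to 1, that is, by producing tiles the discriminator mistakes for real ones.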

Fake videos, now fake buildings and satellite images

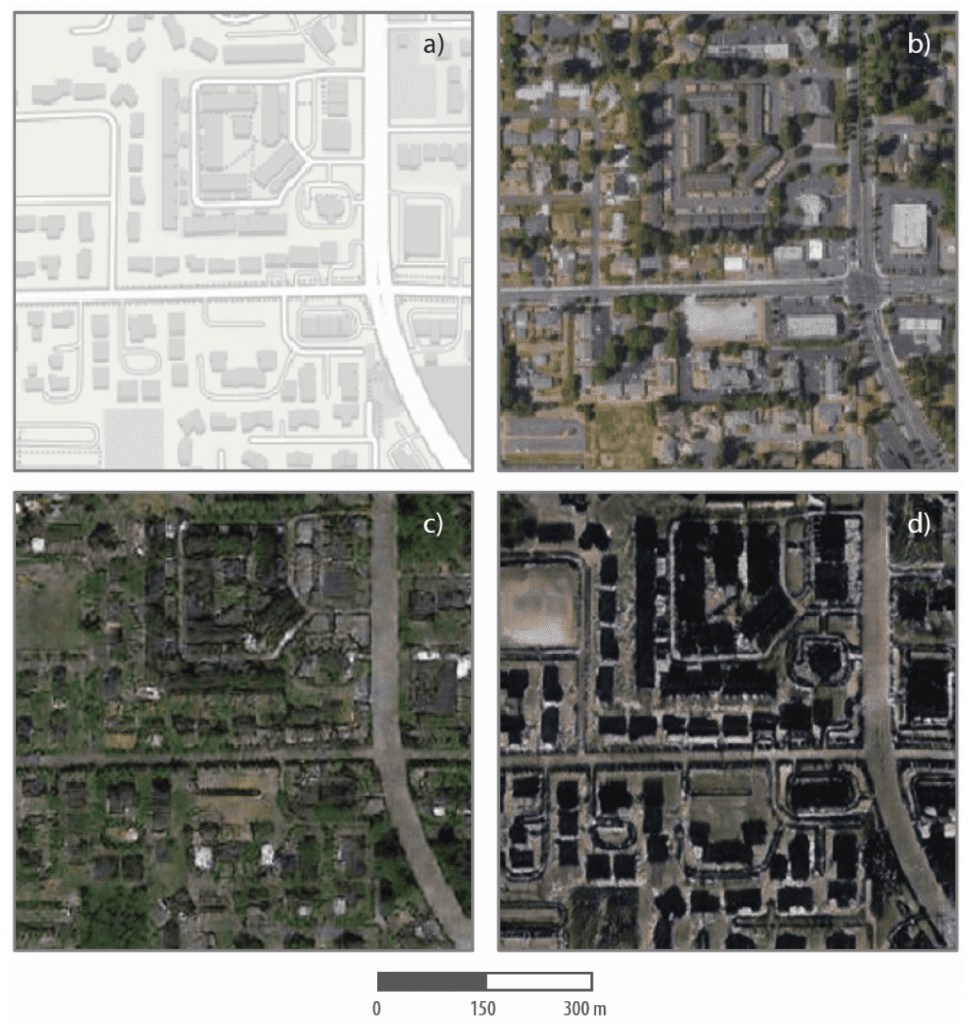

One such set of fake satellite images shows a supposed Tacoma neighborhood (the base map) rendered with visual patterns typical of Seattle and Beijing. In the image above, panels a) and b) show the neighborhood as it appears in mapping software and in an actual satellite image, respectively. The bottom panels show the same neighborhood with the low-rise buildings and greenery you’d expect to see in Seattle (panel c), and a Beijing version in which the AI rendered taller buildings whose shadows fall over the building structures of the Tacoma map. In both the genuine and fake maps, the road networks and building locations are similar but not identical, and it is these small but misleading details that can cause mayhem.

Telling real satellite images apart from fake ones can be challenging for the untrained eye. This is why Zhao and colleagues also performed image processing analyses that can identify fakes based on artifacts in color histograms, as well as in the frequency and spatial domains.
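The article does not detail the study’s detection pipeline, so what follows is a minimal, hypothetical sketch of what features from those three families might look like: a per-channel color histogram, a spatial-domain measure of how sharply neighboring pixels change, and a frequency-domain measure of how much spectral energy sits at high frequencies. The function names, the 8-bin histogram, the 0.25 cycles-per-pixel cutoff, and the image format (rows of integer RGB tuples from 0 to 255) are all assumptions for illustration, not the authors’ method. A real detector would feed such features into a classifier trained on labeled real and fake tiles, which is not shown here.

```python
import cmath
import math


def to_gray(image):
    """Convert rows of (r, g, b) integer tuples (0 to 255) to luminance values."""
    return [[0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row] for row in image]


def color_histogram(image, bins=8):
    """Color cue: normalized per-channel histogram, three lists of `bins` fractions."""
    hist = [[0] * bins for _ in range(3)]
    pixels = 0
    for row in image:
        for pixel in row:
            for channel, value in enumerate(pixel):
                hist[channel][min(value * bins // 256, bins - 1)] += 1
            pixels += 1
    return [[count / pixels for count in channel] for channel in hist]


def mean_neighbor_difference(image):
    """Spatial-domain cue: mean absolute luminance change between adjacent pixels.

    Hypothetical feature; assumes the image is at least 2x2 pixels.
    """
    gray = to_gray(image)
    h, w = len(gray), len(gray[0])
    diffs = [abs(gray[y][x] - gray[y][x + 1]) for y in range(h) for x in range(w - 1)]
    diffs += [abs(gray[y][x] - gray[y + 1][x]) for y in range(h - 1) for x in range(w)]
    return sum(diffs) / len(diffs)


def high_frequency_ratio(image, cutoff=0.25):
    """Frequency-domain cue: share of spectral energy above `cutoff` cycles per pixel.

    Uses a naive 2D discrete Fourier transform, so it is only practical for tiny patches.
    """
    gray = to_gray(image)
    h, w = len(gray), len(gray[0])
    mean = sum(map(sum, gray)) / (h * w)
    centered = [[value - mean for value in row] for row in gray]  # remove the DC offset
    high = total = 0.0
    for u in range(h):
        for v in range(w):
            coeff = sum(
                centered[y][x] * cmath.exp(-2j * math.pi * (u * y / h + v * x / w))
                for y in range(h)
                for x in range(w)
            )
            energy = abs(coeff) ** 2
            # Distance from zero frequency, folding indices above h/2 (or w/2) to negatives.
            radius = math.hypot(min(u, h - u) / h, min(v, w - v) / w)
            total += energy
            if radius > cutoff:
                high += energy
    # A flat image has (numerically) no non-DC energy; report 0 instead of dividing noise.
    return high / total if total > 1e-9 else 0.0


def extract_features(image):
    """Flatten all cues into one vector that a separately trained classifier could use."""
    hist = color_histogram(image)
    return [x for channel in hist for x in channel] + [
        mean_neighbor_difference(image),
        high_frequency_ratio(image),
    ]
```

For example, calling `extract_features` on an 8x8 tile returns 26 values: 24 histogram fractions plus the two scalar cues. The naive DFT keeps the sketch dependency-free but is far too slow for full-size imagery, where an FFT library would be the natural choice.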

In any event, the aim of this study wasn’t to show that satellite imagery can be falsified; that was already a foregone conclusion. Rather, the researchers wanted to learn whether they could reliably detect fake satellite images, so that geographers may one day develop tools that spot fake maps the way fact-checkers spot fake news today, for the good of the public. According to Zhao, this was the first study to address deepfakes in the context of geography.

“As technology continues to evolve, this study aims to encourage more holistic understanding of geographic data and information, so that we can demystify the question of absolute reliability of satellite images or other geospatial data,” Zhao said. “We also want to develop more future-oriented thinking in order to take countermeasures such as fact-checking when necessary.”

The findings appeared in the journal Cartography and Geographic Information Science.