Researchers at Stanford University have devised a novel algorithm which enables them to reproduce images of objects hidden from sight. An autonomous vehicle outfitted with a laser and high-precision photon detector using this technology could potentially see a child running after a bouncing ball on the street around the corner, for instance, and alert the vehicle that it should stop.

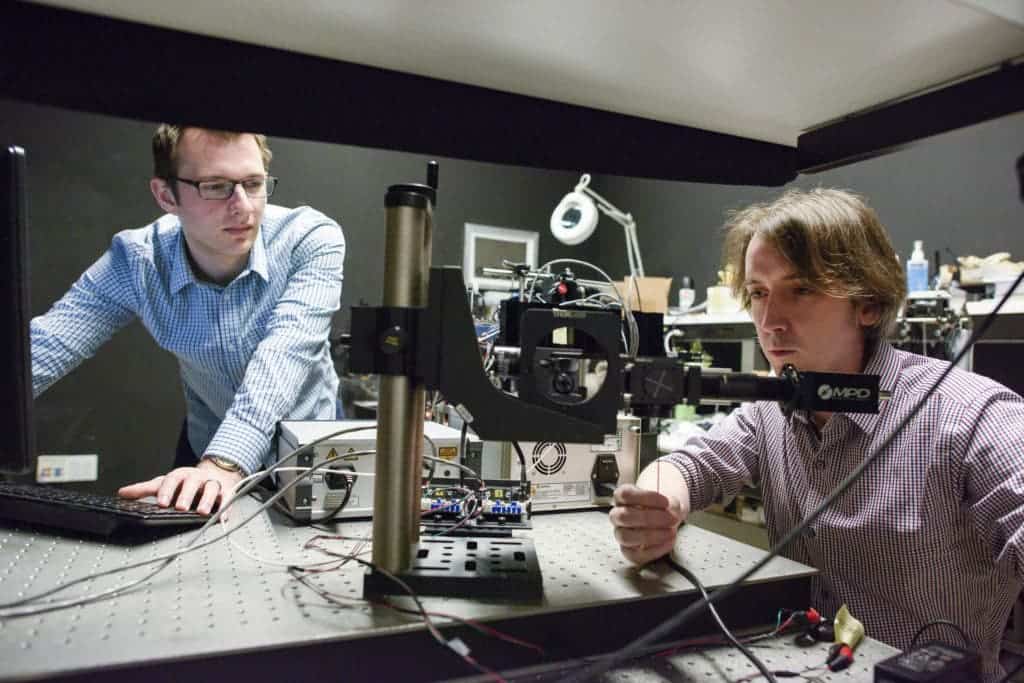

“It sounds like magic but the idea of non-line-of-sight imaging is actually feasible,” said Gordon Wetzstein, assistant professor of electrical engineering and senior author of the new paper.

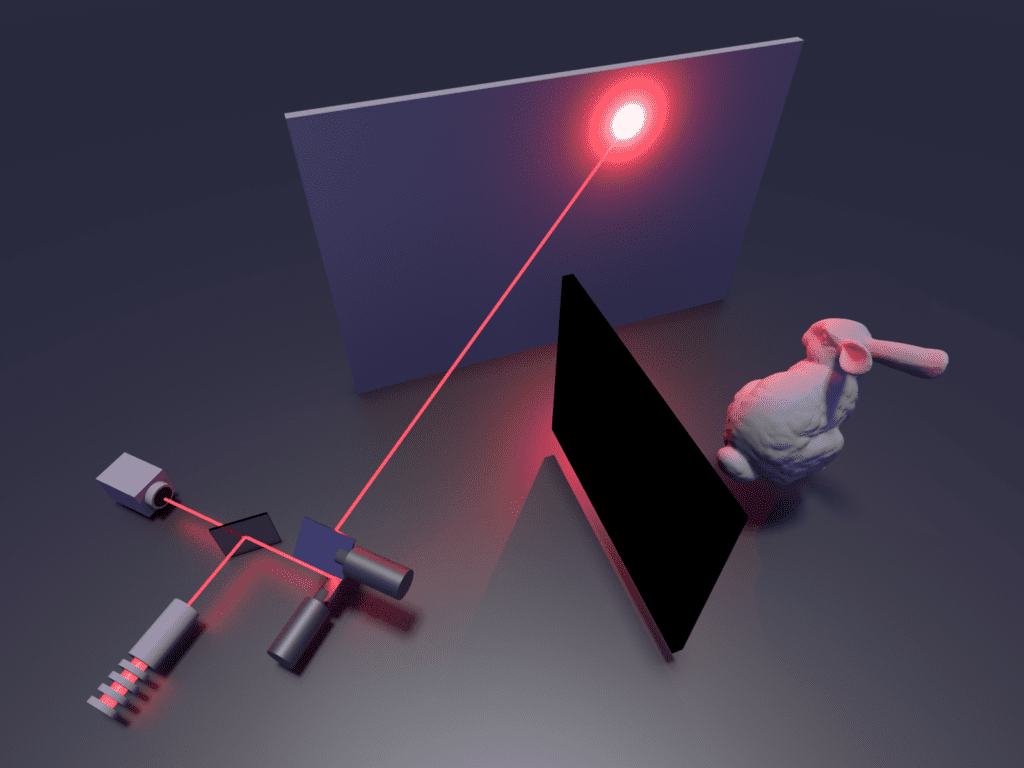

Organisms ‘see’ by absorbing the light that bounces off various surfaces. The reflected photons trigger a nerve signal that is processed in the brain, and it is this step that ultimately forms our sense of sight. The Stanford researchers paired a photon detector (which plays a role similar to a retina) with a laser that fired pulses at a wall. The pulses scattered off the wall, around the corner, and off the obstructed objects, eventually finding their way back to the detector. Each scan took anywhere from two minutes to an hour, depending on conditions such as lighting and the reflectivity of the hidden object.
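The timing of each returning photon is what carries the distance information. As a rough illustration (not the researchers' code), the sketch below converts a photon's arrival delay into the distance between the wall and a hidden object. It assumes the laser and detector are aimed at the same spot on the wall and that the distance to the wall is already known; the function names and parameters are hypothetical.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def total_path_length_m(arrival_delay_s: float) -> float:
    """Distance a photon travelled between leaving the laser and hitting the detector."""
    return SPEED_OF_LIGHT_M_PER_S * arrival_delay_s


def hidden_object_distance_m(arrival_delay_s: float, wall_distance_m: float) -> float:
    """Distance from the illuminated wall spot to the hidden object.

    Assumption: laser and detector look at the same wall spot, so the photon
    travels device -> wall -> object -> wall -> device, and the total path is
    2 * wall_distance + 2 * hidden_distance.
    """
    hidden = (total_path_length_m(arrival_delay_s) - 2.0 * wall_distance_m) / 2.0
    if hidden < 0:
        raise ValueError("photon arrived too early to have left the wall")
    return hidden


# Example: wall 1 m away, object 0.5 m from the wall spot -> 3 m total path.
delay_s = 3.0 / SPEED_OF_LIGHT_M_PER_S
print(hidden_object_distance_m(delay_s, wall_distance_m=1.0))
```

A single delay only says how far away the object is, not in which direction, which is why the wall has to be scanned at many points.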

Once the scan is complete, an algorithm reconstructs the paths the captured photons took, revealing an image that shows whatever objects lie behind the corner. The algorithm is so efficient it does its job in less than a second and can be run on a regular laptop. What’s more, Wetzstein is confident his team can enhance the algorithm to perform nearly instantaneously once a scan is complete.
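To give a feel for what "reconstructing the paths" involves, here is a minimal sketch of naive backprojection, a simple and slow textbook approach that is not the Stanford team's algorithm. It works in a 2-D slice, treats the wall as the line z = 0, and assumes each scan point records a histogram of photon counts binned by round-trip path length. All names, the grid, and the simulated single-point scene are hypothetical.

```python
import math


def simulate_histograms(scan_xs, target, bin_size_m, n_bins):
    """Simulated data: one photon per scan point, from a single hidden point target=(x, z)."""
    histograms = []
    for sx in scan_xs:
        hist = [0] * n_bins
        round_trip = 2.0 * math.hypot(target[0] - sx, target[1])
        b = int(round(round_trip / bin_size_m))
        if b < n_bins:
            hist[b] += 1
        histograms.append(hist)
    return histograms


def backproject(scan_xs, histograms, grid_xs, grid_zs, bin_size_m):
    """For every candidate location, add up the counts that are consistent with it."""
    image = [[0] * len(grid_xs) for _ in grid_zs]
    for sx, hist in zip(scan_xs, histograms):
        for iz, z in enumerate(grid_zs):
            for ix, x in enumerate(grid_xs):
                b = int(round(2.0 * math.hypot(x - sx, z) / bin_size_m))
                if b < len(hist):
                    image[iz][ix] += hist[b]
    return image


def brightest_location(image, grid_xs, grid_zs):
    """Return the (x, z) grid location with the highest accumulated count."""
    iz, ix = max(
        ((iz, ix) for iz in range(len(grid_zs)) for ix in range(len(grid_xs))),
        key=lambda idx: image[idx[0]][idx[1]],
    )
    return grid_xs[ix], grid_zs[iz]


scan_xs = [-0.5, -0.25, 0.0, 0.25, 0.5]   # scan points along the wall (metres)
grid_xs = [-0.4, -0.2, 0.0, 0.2, 0.4]     # candidate x positions behind the corner
grid_zs = [0.2, 0.4, 0.6, 0.8]            # candidate distances from the wall
hists = simulate_histograms(scan_xs, target=(0.2, 0.6), bin_size_m=0.01, n_bins=400)
image = backproject(scan_xs, hists, grid_xs, grid_zs, bin_size_m=0.01)
print(brightest_location(image, grid_xs, grid_zs))
```

The triple loop visits every candidate location for every scan point, so the cost grows quickly with resolution. That scaling is the kind of bottleneck that makes computational efficiency the central issue in this line of work.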

This isn’t the first “see-around-the-corner” system. However, where this research shines is in the algorithm’s unprecedented efficiency, without which such a system would be extremely limited, to the point of being useless.

“A substantial challenge in non-line-of-sight imaging is figuring out an efficient way to recover the 3-D structure of the hidden object from the noisy measurements,” said David Lindell, graduate student in the Stanford Computational Imaging Lab and co-author of the paper. “I think the big impact of this method is how computationally efficient it is.”

One prime application for non-line-of-sight imaging would be autonomous cars, which are already equipped with LIDAR-based guidance systems. These systems deliberately ignore scattered photons, the very signal the Stanford technique exploits to image objects around the corner. The technique could also prove useful for aerial vehicles trying to see through foliage or for rescue teams trying to find people hidden from view by walls and rubble.

Next, the team plans to refine the system so it can handle real-world variability and complete scans more quickly. Right now, it struggles when ambient light interferes with the measurement or when the imaged objects are distant. The technology is, however, already particularly adept at picking out retroreflective objects, such as safety apparel or traffic signs. This might prove useful for identifying things such as road signs, safety vests, and road markers that are obstructed from view.

“This is a big step forward for our field that will hopefully benefit all of us,” said Wetzstein. “In the future, we want to make it even more practical in the ‘wild.’”

The findings were reported in the journal Nature.