One of the amazing things about modern technology is that we can use devices such as cameras, remote sensors, and even Geiger counters to augment our five senses, effectively giving us many more. Imaging and tracking technology has advanced so far that it is now possible to see objects around corners or behind low-opacity obstructions. In a new study, engineers at Stanford’s Computational Imaging Lab have developed a technique that lets them see physical objects moving inside a room using a single laser beam.

Peephole vision

Imaging the shape or position of objects around corners is known as non-line-of-sight (NLOS) imaging. This emerging technology could, for instance, help autonomous cars see a pedestrian making a dangerous street crossing just around the corner and react in time.

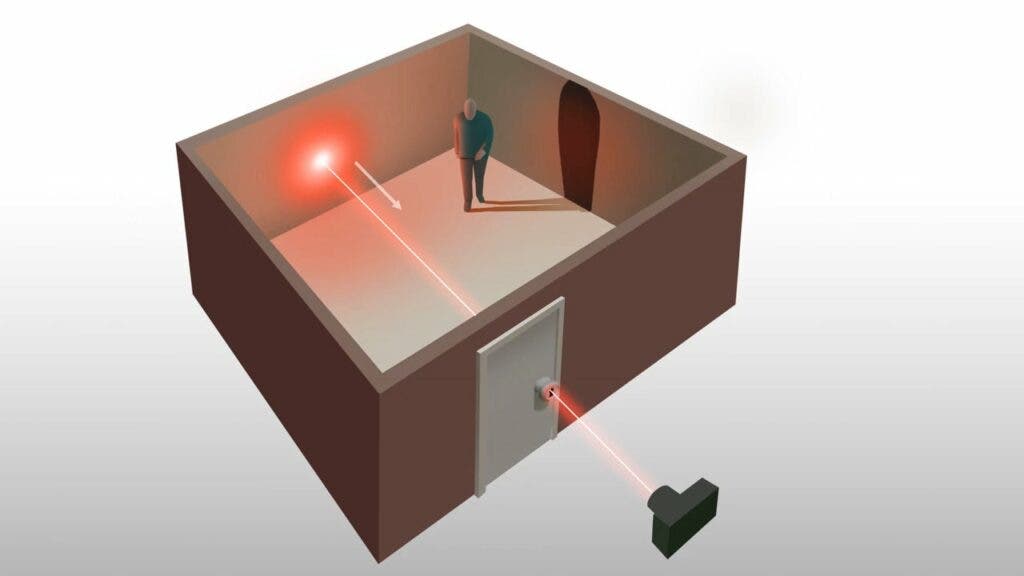

To gain ‘eyes’ around the corner, you only need a laser that fires short pulses and a detector. The pulses bounce off a visible wall, travel around the corner, and scatter off any objects in their way, with some photons eventually finding their way back to the source, where they hit the detector. Algorithms can then reconstruct the paths the captured photons took based on the time they needed to make their way back.
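To make the timing idea concrete, here is a minimal Python sketch of the arithmetic. It assumes a simplified geometry in which the laser and detector are aimed at the same spot on a wall, so a photon travels from the detector to the wall, on to the hidden object, and back the same way. The function names and the example distances are illustrative assumptions, not taken from the Stanford system.

```python
C = 299_792_458.0  # speed of light in vacuum, metres per second


def path_length(time_of_flight_s: float) -> float:
    """Total distance a photon covered, given its measured time of flight."""
    return C * time_of_flight_s


def hidden_distance(time_of_flight_s: float, detector_to_wall_m: float) -> float:
    """One-way distance from the wall spot to the hidden scatterer.

    Assumed path: detector -> wall -> object -> wall -> detector,
    with the outgoing and returning legs identical.
    """
    hidden_round_trip = path_length(time_of_flight_s) - 2.0 * detector_to_wall_m
    if hidden_round_trip < 0:
        raise ValueError("photon returned before it could have reached the wall")
    return hidden_round_trip / 2.0


if __name__ == "__main__":
    # Hypothetical numbers: wall 2 m from the detector, object 1.5 m behind the corner.
    t = (2 * 2.0 + 2 * 1.5) / C  # about 23 nanoseconds
    print(f"time of flight: {t * 1e9:.2f} ns")
    print(f"hidden distance: {hidden_distance(t, 2.0):.3f} m")
```

The takeaway is the scale involved: a few metres of travel corresponds to tens of nanoseconds, and resolving centimetres means timing photons to within tens of picoseconds.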

Researchers at the Stanford Computational Imaging Lab have been experimenting with this sort of “see-around-the-corner” system for the last decade, with great results to show. The problem is that NLOS imaging typically requires scanning a large area of the visible surface in order to reconstruct the indirect light paths.

In their latest work, the Stanford team outdid themselves and managed to make NLOS imaging work using a single optical path.

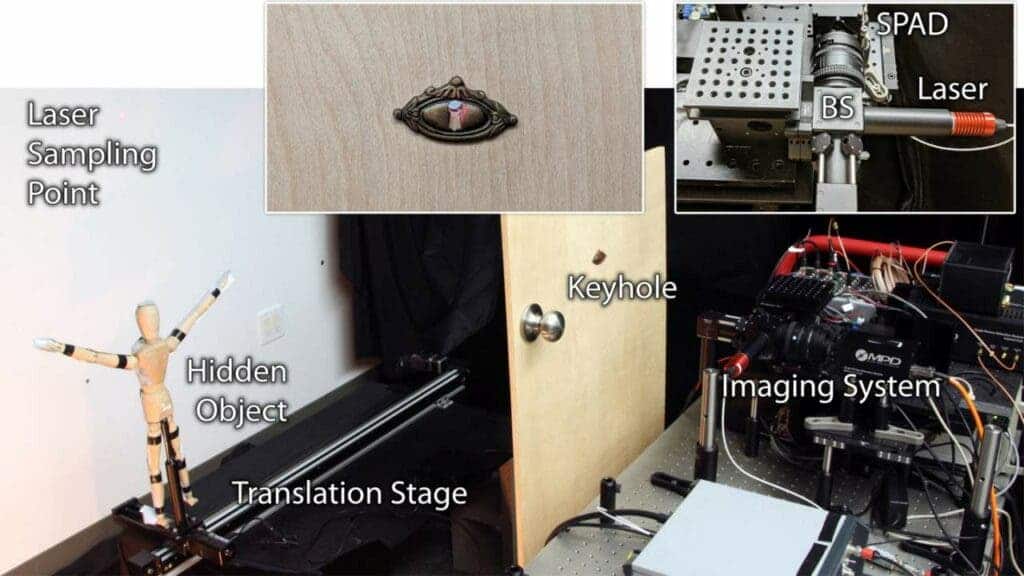

Aptly named ‘keyhole imaging’, the technique involves shining a laser beam through a small hole in a box or room. The photons from the laser beam bounce off the walls of the room and any object inside, eventually being reflected back through the hole.

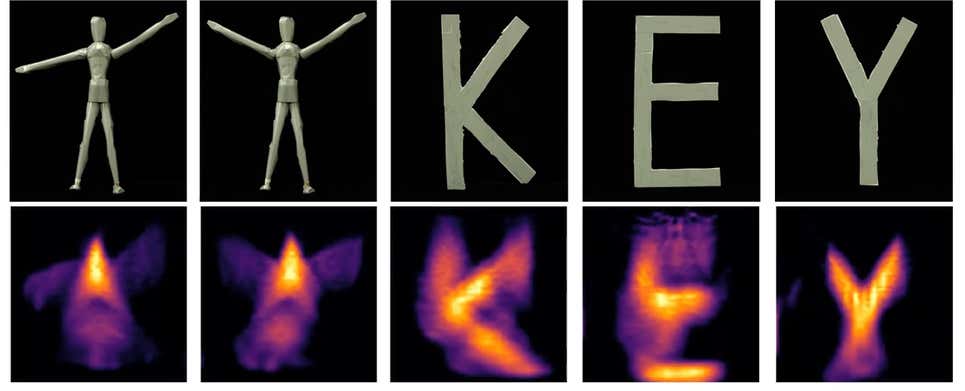

Because the field of view is so tiny, this method cannot image static objects inside the room. Nor is the resolution very high. However, the data is just enough to construct an image that is usable and makes sense, as the researchers’ wooden mannequin example shows. Sure, the mannequin doesn’t look too great, but the researchers believe they can refine their reconstruction algorithms to such an extent that they should be able to distinguish the shape of a human moving inside a room.

“Assuming that the hidden object of interest moves during the acquisition time, we effectively capture a series of time-resolved projections of the object’s shape from unknown viewpoints. We derive inverse methods based on expectation-maximization to recover the object’s shape and location using these measurements. Then, with the help of long exposure times and retroreflective tape, we demonstrate successful experimental results with a prototype keyhole imaging system,” the researchers wrote in their technical paper.
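A toy Python model can illustrate why motion matters here. This is a sketch under loud assumptions, not the researchers' method: the hidden object is a handful of point scatterers, the laser hits one fixed spot, every scatterer returns exactly one photon, and light falloff and noise are ignored. The function `transient_histogram` and all numbers are hypothetical.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, metres per second


def transient_histogram(points, spot, bin_width_s, n_bins):
    """Count photon returns per time bin for scatterers seen from one wall spot.

    Each point contributes a return at t = 2 * r / C, where r is its
    distance from the illuminated spot (assumed simplified model).
    """
    hist = [0] * n_bins
    for p in points:
        r = math.dist(p, spot)
        bin_index = int((2.0 * r / C) / bin_width_s)
        if bin_index < n_bins:
            hist[bin_index] += 1
    return hist


if __name__ == "__main__":
    spot = (0.0, 0.0, 0.0)
    bin_width = 2 * 0.1 / C  # each bin spans 10 cm of one-way distance
    obj = [(0.0, 0.0, 0.35), (0.0, 0.0, 0.65)]

    # The same object at two positions yields two different time profiles.
    for shift in (0.0, 0.2):
        moved = [(x, y, z + shift) for x, y, z in obj]
        print(shift, transient_histogram(moved, spot, bin_width, 10))
```

A single measurement only records how far the scatterers are from the spot, not their direction. Each new object position gives a differently shaped profile, and it is this sequence of profiles that the reconstruction has to work from.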

This technique could prove useful for law enforcement and military applications, where assessing threats before entering a room could be life-saving. It could also find uses in some scientific fields. Archaeologists, for instance, may find keyhole imaging helpful in mapping tombs or caves.