A team at Carnegie Mellon University (CMU) has shown how to decode brain activation patterns to identify complex thoughts, such as entire sentences. In other words, their technology can not only infer that a person is thinking about a ‘banana’, but also tell that they are thinking ‘I like to eat bananas in evening with my friends,’ according to Marcel Just, a professor of psychology at CMU.

The pioneering work is the culmination of years of research in machine learning and brain imaging. It suggests that complex thoughts are assembled from building blocks formed by the brain’s various sub-systems. That’s not all: conceptual representations in the brain also appear to be universal across people, irrespective of the language they speak.

“We have finally developed a way to see thoughts of that complexity in the fMRI signal. The discovery of this correspondence between thoughts and brain activation patterns tells us what the thoughts are built of,” said Just.

Previously, Just and colleagues showed that merely thinking about a familiar object, such as a chair or a hammer, activates distinct neural patterns in the systems we use to interact with that object. Thinking about a hammer, for instance, engages several neural systems, such as motor (how you hold it) and visual (what a hammer looks like).

The researchers tested their ‘mind reading’ technology on seven adult volunteers, whose brains were scanned while they read 240 sentences describing complex events, involving everything from individuals and settings to types of social interaction and physical actions. Each type of information is processed by a different neural system, and the algorithm developed at CMU used a backbone of 42 meaning components to make sense of it all.

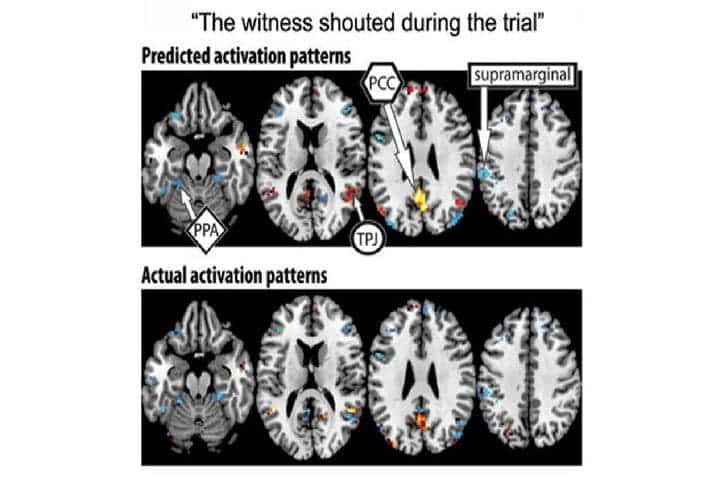

…with the four large-scale semantic factors: people (yellow), places (red), actions and their consequences (blue), and feelings (green). Credit: Human Brain Mapping.

This system enabled the researchers to infer which sentence a participant was thinking about. After training the algorithm on 239 of the 240 sentences and their corresponding brain scans, the researchers were able to predict the remaining, left-out sentence from the participants’ brain scans alone with 87 percent accuracy, even though the model had never been exposed to that sentence’s activation pattern. The algorithm worked in reverse too, predicting the neural activation pattern of a previously unseen sentence with only its semantic features as a clue, as reported in Human Brain Mapping.
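The train-on-239, test-on-1 procedure is a form of leave-one-out cross-validation, and a toy version can be sketched in a few lines. This is a minimal sketch under loudly stated assumptions, not the CMU team’s code: it assumes each sentence’s activation pattern is a noisy linear function of its 42 semantic features, fits that mapping with ridge regression, and scores each held-out sentence by rank accuracy (how its predicted pattern ranks against the predictions for all 240 candidates). The data are random numbers rather than fMRI scans, and the helper names (`fit_ridge`, `normalize_rows`, `leave_one_out_rank_accuracy`), the voxel count and the noise level are all hypothetical. Its score says nothing about the study’s 87 percent figure.

```python
import numpy as np

# Sizes: 240 sentences and 42 semantic features are taken from the article;
# the voxel count and noise level are arbitrary, hypothetical choices.
N_SENTENCES, N_FEATURES, N_VOXELS = 240, 42, 300
rng = np.random.default_rng(0)

# Synthetic stand-in data (NOT real fMRI): each sentence gets a random
# 42-dimensional semantic feature vector, and its "activation pattern" is
# assumed to be a noisy linear function of those features.
features = rng.normal(size=(N_SENTENCES, N_FEATURES))
true_weights = rng.normal(size=(N_FEATURES, N_VOXELS))
patterns = features @ true_weights + 2.0 * rng.normal(size=(N_SENTENCES, N_VOXELS))


def fit_ridge(X, Y, alpha=1.0):
    """Closed-form ridge regression: weights mapping features X to voxels Y."""
    return np.linalg.solve(X.T @ X + alpha * np.eye(X.shape[1]), X.T @ Y)


def normalize_rows(M):
    """Center each row and scale it to unit length, so the dot product of
    two normalized rows equals their Pearson correlation."""
    M = M - M.mean(axis=1, keepdims=True)
    return M / np.linalg.norm(M, axis=1, keepdims=True)


def leave_one_out_rank_accuracy(features, patterns, alpha=1.0):
    """For each sentence: fit the feature-to-voxel model on the other n-1
    sentences, predict a pattern for every sentence from its features, and
    score how the held-out sentence's own prediction ranks against its
    observed pattern (1.0 = best match among all n, 0.0 = worst)."""
    n = len(features)
    scores = []
    for held_out in range(n):
        train = np.arange(n) != held_out
        weights = fit_ridge(features[train], patterns[train], alpha)
        # Predicted activation pattern for every candidate sentence.
        candidates = normalize_rows(features @ weights)
        observed = normalize_rows(patterns[held_out:held_out + 1])[0]
        sims = candidates @ observed  # correlation with each candidate
        # Fraction of the other n-1 candidates the correct one beats.
        others = np.delete(sims, held_out)
        scores.append(np.sum(sims[held_out] > others) / (n - 1))
    return float(np.mean(scores))


if __name__ == "__main__":
    acc = leave_one_out_rank_accuracy(features, patterns)
    print(f"Mean leave-one-out rank accuracy: {acc:.3f}")
```

Because the synthetic patterns are generated directly from the features with modest noise, this toy setup should score close to the maximum of 1.0; the point is the shape of the procedure, in which the held-out sentence never influences the model that is asked to recognize it.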

“Our method overcomes the unfortunate property of fMRI to smear together the signals emanating from brain events that occur close together in time, like the reading of two successive words in a sentence,” Just said. “This advance makes it possible for the first time to decode thoughts containing several concepts. That’s what most human thoughts are composed of.”

He added, “A next step might be to decode the general type of topic a person is thinking about, such as geology or skateboarding. We are on the way to making a map of all the types of knowledge in the brain.”
