The future is here: scientists have unveiled a new decoder that synthesizes a person’s speech from brain signals associated with the movements of their jaw, larynx, lips, and tongue. This could be a game changer for people living with paralysis, speech impairments, or other neurological conditions.

Technology that can translate neural activity into speech would be a remarkable achievement in itself, but for people who are unable to communicate verbally, it would be absolutely transformative. Yet speaking, something most of us take for granted in our day-to-day lives, is actually a very complex process, and one that’s very hard to digitize.

“It requires precise, dynamic coordination of muscles in the articulator structures of the vocal tract: the lips, tongue, larynx and jaw,” explain Chethan Pandarinath and Yahia Ali in a commentary on the new study.

Breaking up speech into its constituent parts doesn’t really work. Spelling, if you think about it, is a sequential concatenation of discrete letters, whereas speech is a highly efficient form of communication involving a fluid stream of overlapping, complex, multi-articulator vocal tract movements, and the brain patterns associated with these movements are equally complex.

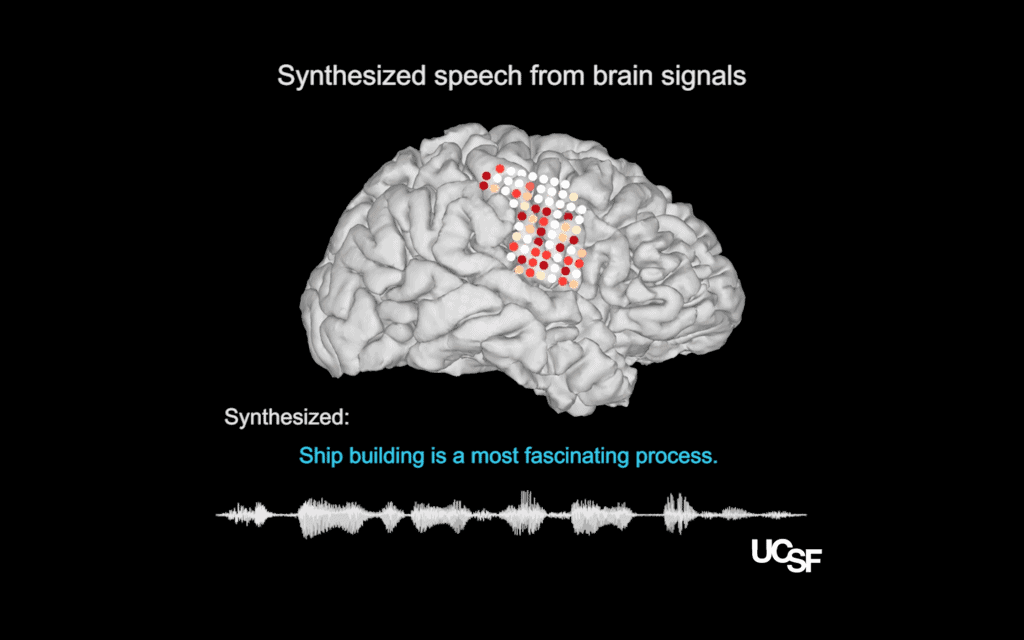

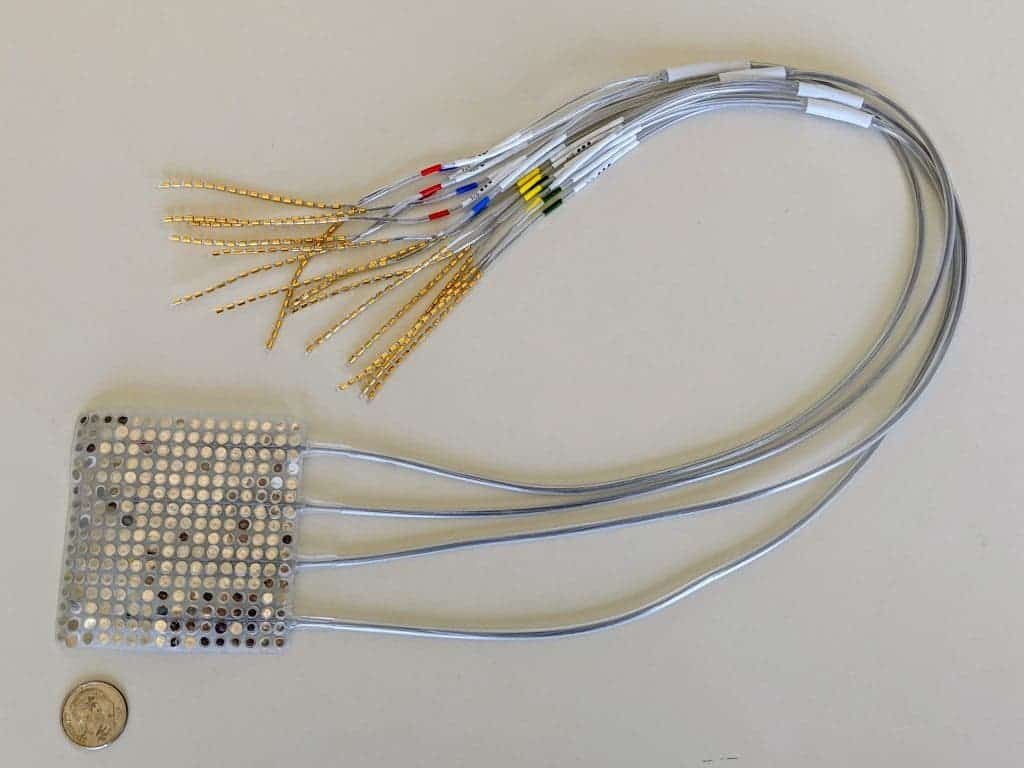

The first step was to record cortical activity from the brains of five participants as they spoke several hundred sentences aloud. The movements of the vocal tract were also followed. Then, scientists reverse-engineered the process, producing speech from brain activity. In trials of 101 sentences, listeners could readily identify and transcribe the synthesized speech.

Several studies have used deep-learning methods to reconstruct audio signals from brain signals, but in this study, a team led by postdoctoral researcher Gopala Anumanchipalli tried a different approach. They split the process into two stages: one that decodes the movements associated with speech, and another that synthesizes speech from those decoded movements. The synthesized speech was then played to another group of people, who had no trouble understanding it.
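To make the two-stage idea concrete, here is a minimal sketch in Python. It is not the researchers' decoder: simple linear least-squares maps stand in for whatever models the team actually trained, and the data, array sizes, and function names (`fit_linear`, `two_stage_decode`) are all hypothetical, chosen only for illustration.

```python
import numpy as np


def fit_linear(inputs, targets):
    """Return least-squares weights W so that inputs @ W approximates targets."""
    weights, *_ = np.linalg.lstsq(inputs, targets, rcond=None)
    return weights


def two_stage_decode(neural, w_neural_to_kin, w_kin_to_acoustic):
    """Stage 1: brain activity -> articulator movements.
    Stage 2: articulator movements -> acoustic features."""
    kinematics = neural @ w_neural_to_kin
    acoustics = kinematics @ w_kin_to_acoustic
    return kinematics, acoustics


# Synthetic stand-in data; every size here is an assumption, not a study value.
rng = np.random.default_rng(0)
n_frames, n_electrodes, n_articulators, n_acoustic = 500, 64, 12, 32
neural = rng.normal(size=(n_frames, n_electrodes))
true_kin = neural @ rng.normal(size=(n_electrodes, n_articulators))
true_acoustic = true_kin @ rng.normal(size=(n_articulators, n_acoustic))

# Fit each stage separately, then chain them at decoding time.
w1 = fit_linear(neural, true_kin)
w2 = fit_linear(true_kin, true_acoustic)
kin_hat, acoustic_hat = two_stage_decode(neural, w1, w2)
```

The point of the sketch is the shape of the pipeline: the intermediate `kin_hat` array plays the role of the decoded jaw, larynx, lip, and tongue movements, and only the second stage turns those movements into sound features.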

In separate tests, researchers asked one participant to speak sentences and then mime speech (making the same movements as speaking, just without the sound). This test was also successful, with the authors concluding that it is possible to decode features of speech that are never audibly spoken.

The rate at which speech was produced was also remarkable. Losing the ability to communicate due to a medical condition is devastating. Devices that use movements of the head and eyes to select letters one by one can help, but they produce a communication rate of about 10 words/minute, much slower than the roughly 150 words/minute of natural speech. The new decoder, by contrast, produces speech at a rate comparable to natural speech, marking a dramatic improvement.
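To put the two rates in perspective, here is a small back-of-the-envelope calculation in Python. The two rates are the figures quoted above; the 300-word message length and the helper name `minutes_to_communicate` are assumptions made up for illustration.

```python
LETTER_SELECTION_WPM = 10   # letter-by-letter devices, as quoted above
NATURAL_SPEECH_WPM = 150    # typical natural speech, as quoted above


def minutes_to_communicate(word_count, words_per_minute):
    """Time in minutes needed to convey a message at a given rate."""
    return word_count / words_per_minute


message_words = 300  # hypothetical message length
slow_minutes = minutes_to_communicate(message_words, LETTER_SELECTION_WPM)
fast_minutes = minutes_to_communicate(message_words, NATURAL_SPEECH_WPM)
speedup = NATURAL_SPEECH_WPM / LETTER_SELECTION_WPM

print(f"Letter selection: {slow_minutes:.0f} min")   # 30 min
print(f"Natural speech rate: {fast_minutes:.0f} min")  # 2 min
print(f"Speed-up: {speedup:.0f}x")                   # 15x
```

In other words, a message that takes half an hour to spell out letter by letter would take about two minutes at a natural speaking rate.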

It’s important to note that this device doesn’t attempt to understand what someone is thinking; it only aims to produce speech. Edward Chang, one of the study authors, explains:

“The lab has never investigated whether it is possible to decode what a person is thinking from their brain activity. The lab’s work is solely focused on allowing patients with speech loss to regain the ability to communicate.”

While this is still a proof of concept and needs much more work before it can be practically implemented, the results are compelling. With continued progress, we can finally hope to empower individuals with speech impairments to regain the ability to speak their minds and reconnect with the world around them.

The study was published in Nature. https://doi.org/10.1038/s41586-019-1119-1