Another week, another game that’s taken away from us. After the classics chess and Go succumbed to AIs, a current favorite among computer games, Dota2, was mastered by machines just a week ago. Now, computer scientists have reported that AIs are kicking butt at yet another popular computer game: Quake III.

If you like games where you go out and shoot stuff, then you’re probably familiar with the Quake series. If not, the premise is pretty simple: you roam around a map, you pick up guns, and you shoot your opponents before they shoot you. Rinse and repeat. It’s simplistic but highly addictive.

As in previous similar efforts, the algorithm (developed by DeepMind, a Google subsidiary) isn’t given much information on how to play the game; it’s left to its own devices to figure out how to win. But DeepMind’s engineers added a twist: they trained a total of 30 agents to introduce a “diversity” of play styles.

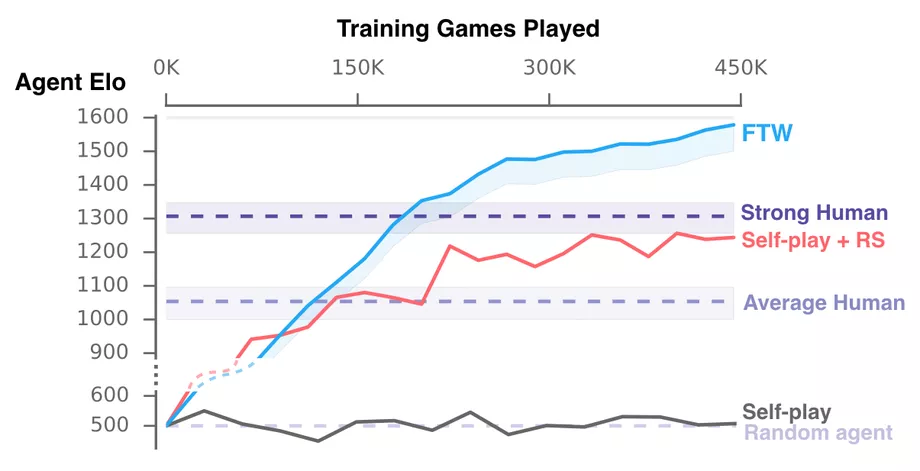

So how does the AI learn how to play? By playing a lot, and we mean a LOT. In this case, it took nearly half a million games, each lasting about 5 minutes, to get the AI to its current level. The bots not only learned how to play, but also devised the same strategies that human players typically use. A new map was generated procedurally for each game, to make sure that the AIs didn’t develop strategies that only work on a single map.
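To put "a LOT" into perspective, here is a quick back-of-the-envelope calculation. It is only a rough sketch: it rounds "nearly half a million" up to 500,000 and takes "about 5 minutes" as exactly 5, so the result is an upper-end estimate, and it says nothing about how many games were played in parallel.

```python
# Rough upper-end estimate of total in-game time, using the article's
# rounded figures (both constants are approximations, not exact values).
GAMES = 500_000          # "nearly half a million games"
MINUTES_PER_GAME = 5     # "about 5 minutes" each

total_minutes = GAMES * MINUTES_PER_GAME
total_hours = total_minutes / 60
total_years = total_hours / 24 / 365

print(f"{total_minutes:,} minutes")
print(f"about {total_hours:,.0f} hours")
print(f"about {total_years:.1f} years of non-stop play")
```

Under these assumptions, that works out to roughly four to five years of continuous play, far more than a human player could squeeze in.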

Also, the programmers didn’t give the bots any numerical information about the game. They had to learn to play just by “looking” at the screen, similar to how a human player would.

It’s worth noting that the stripped-down version of Quake III that the bots learned is much simpler than Dota2, for instance.

A graph showing the Elo (skill) rating of various players. The “FTW” agents are DeepMind’s. Credit: DeepMind
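For readers unfamiliar with Elo ratings, the sketch below shows the textbook Elo formulas, using the chess convention of a 400-point scale. The article does not describe exactly how DeepMind computed its ratings, so the function names, the K-factor of 32, and the sample ratings here are all illustrative assumptions.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Textbook Elo expected score (win probability) of A against B."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def updated_rating(rating: float, expected: float, actual: float,
                   k: float = 32.0) -> float:
    """Move a rating toward the actual result (1 = win, 0.5 = draw, 0 = loss).

    k controls how fast ratings change; 32 is a common choice, assumed here.
    """
    return rating + k * (actual - expected)


# Hypothetical ratings, not taken from DeepMind's graph.
bot, human = 1600.0, 1500.0
p_bot = expected_score(bot, human)
print(f"Expected score for the higher-rated player: {p_bot:.2f}")

# If the lower-rated player pulls off an upset, the ratings move closer.
print(f"Bot after a loss:   {updated_rating(bot, p_bot, 0.0):.1f}")
print(f"Human after a win:  {updated_rating(human, 1.0 - p_bot, 1.0):.1f}")
```

The takeaway is that only the gap between two ratings matters: in this formula, a 100-point lead translates into an expected score of about 0.64, whatever the absolute numbers are.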

To test the skill of the newly trained AIs, DeepMind hosted a contest: two-player teams of bots, humans, and a mixture of bots and humans squared off. The bot-only teams were the most successful, winning 74% of the time. However, the more bots researchers added to a team, the worse the team fared, indicating that the bots lack a specific understanding of team play.

Of course, the purpose of these AIs is not to beat us at our favorite games and take the fun out of everything (even though things sure seem that way sometimes). The goal is to design new ways to teach AIs different concepts more effectively, though in this case, teaching bots how to get better at shooting people might not be the most soothing of applications.