By combining a standard fMRI brain scanner with advanced computational models, scientists at the University of California, Berkeley have managed to achieve the unthinkable: reconstruct the visual experiences triggered inside the brain and play them back like a movie. This is forefront technology that might one day allow us to tap into the minds of coma patients, or to watch the dream you had last night and only vaguely remember, just like an ordinary movie. Quite possibly one of the most fascinating sci-fi ideas might become reality in the future.

“This is a major leap toward reconstructing internal imagery,” said Professor Jack Gallant, a UC Berkeley neuroscientist and coauthor of the study published online today (Sept. 22) in the journal Current Biology. “We are opening a window into the movies in our minds.”

This comes right on the heels of a recent, comparably amazing study from Princeton University, whose researchers managed to tell what study participants were thinking about using an fMRI scanner and a lexical algorithm. The UC Berkeley neuroscientists have taken this one big step further by visually representing what goes on inside the cortex.

A Sci-Fi dream come true that might show your dreams, in return

They first started out with a still-picture experiment, showing participants black and white photos. After a while, the researchers’ system allowed them to pick with high accuracy which picture the subject was looking at. For this latest study, however, the scientists had to surmount the difficult challenges that come with decoding brain signals generated by moving pictures.

“Our natural visual experience is like watching a movie,” said Shinji Nishimoto, lead author of the study and a post-doctoral researcher in Gallant’s lab. “In order for this technology to have wide applicability, we must understand how the brain processes these dynamic visual experiences.”

Nishimoto and two other research team members served as subjects for the experiment, since it required them to remain still inside the fMRI scanner for hours at a time. While inside the scanner, they were shown a few sets of Hollywood movie trailers, while blood flow through the visual cortex, the part of the brain that processes visual information, was measured. The brain activity recorded while subjects viewed the first set of clips was fed into a computer program that learned, second by second, to associate visual patterns in the movie with the corresponding brain activity.
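For readers curious what "learning to associate visual patterns with brain activity" might look like in practice, here is a minimal sketch in Python. It is not the researchers' actual model: it assumes a simple linear (ridge regression) mapping from per-second movie features to measured responses, and every name in it (`fit_encoding_model`, `predict_responses`, the synthetic arrays) is hypothetical and made up for illustration.

```python
import numpy as np

def fit_encoding_model(features, responses, alpha=1.0):
    """Fit a ridge regression from movie features to brain responses.

    features:  (n_seconds, n_features) array, one row per second of video
    responses: (n_seconds, n_voxels) array of activity measured by the scanner
    alpha:     regularization strength (an illustrative choice)
    Returns a weight matrix of shape (n_features, n_voxels).
    """
    n_features = features.shape[1]
    gram = features.T @ features + alpha * np.eye(n_features)
    return np.linalg.solve(gram, features.T @ responses)

def predict_responses(features, weights):
    """Predict the brain activity a clip would evoke under the fitted model."""
    return features @ weights

# Synthetic stand-in data (assumption: real features and scans are far richer).
rng = np.random.default_rng(0)
true_weights = rng.normal(size=(5, 3))
train_features = rng.normal(size=(200, 5))
noise = 0.1 * rng.normal(size=(200, 3))
train_responses = train_features @ true_weights + noise

weights = fit_encoding_model(train_features, train_responses, alpha=0.1)
predicted = predict_responses(train_features, weights)
```

Once such a model is fitted, it can be run "forward" on any new clip to estimate the activity that clip would produce, which is the ingredient the reconstruction step relies on.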

A movie of the movie inside your head. Limbo!

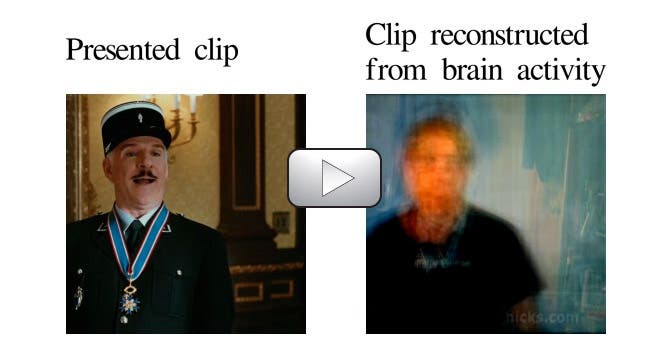

The second phase of the experiment is where it all becomes very interesting, as it involves the movie reconstruction algorithm. Scientists fed 18 million seconds of random YouTube videos into the computer program so that it could predict the brain activity that each film clip would most likely evoke in each subject. Then, based on the brain imaging delivered by the fMRI, the computer program would blend the clips it had already learned into what it believed best matched the observed brain pattern. The result was nothing short of amazing. Just watch the video below.
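The reconstruction step can be pictured with a short Python sketch. This is only an illustration under stated assumptions, not the study's implementation: it assumes each library clip already has a model-predicted activity pattern, scores clips by correlation with the observed scan, and averages the frames of the best matches. The function name `reconstruct_frame`, the `top_k` value and the random data are all hypothetical.

```python
import numpy as np

def reconstruct_frame(observed, predicted_library, library_frames, top_k=3):
    """Blend the library frames whose predicted activity best matches a scan.

    observed:          (n_voxels,) activity measured at one moment
    predicted_library: (n_clips, n_voxels) predicted activity for each clip
    library_frames:    (n_clips, height, width) one frame per library clip
    top_k:             how many best-matching clips to average (illustrative)
    """
    # Pearson correlation between the scan and each clip's predicted pattern.
    obs = observed - observed.mean()
    pred = predicted_library - predicted_library.mean(axis=1, keepdims=True)
    scores = (pred @ obs) / (np.linalg.norm(pred, axis=1) * np.linalg.norm(obs))
    # Indices of the top_k clips, best match first.
    best = np.argsort(scores)[::-1][:top_k]
    # Averaging the matched frames yields a blurry composite image.
    return library_frames[best].mean(axis=0), best

# Synthetic stand-in data (assumption: random numbers instead of real scans).
rng = np.random.default_rng(1)
n_clips, n_voxels = 50, 20
predicted_library = rng.normal(size=(n_clips, n_voxels))
library_frames = rng.random(size=(n_clips, 4, 4))
# Pretend the subject sees something that evokes activity close to clip 7.
observed = predicted_library[7] + 0.05 * rng.normal(size=n_voxels)

frame, best = reconstruct_frame(observed, predicted_library, library_frames)
```

Because the output is an average of several candidate frames rather than a single lookup, the result is a blurry blend, which fits the "morphing" of known clips described above.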

This doesn’t mean that the new technology developed by the UC scientists can read minds or play back one’s memories on a display. Such technology, according to the researchers, is decades away, but their studies will help pave the way for such future developments. For now, the technology can only reconstruct movie clips people have already viewed.

“We need to know how the brain works in naturalistic conditions,” Nishimoto said. “For that, we need to first understand how the brain works while we are watching movies.”
