The Sentinel-2 mission, two identical satellites operated by the European Space Agency, provides weekly coverage of the world. The twin satellites constantly monitor ecosystems, map natural disasters, and check water quality. However, Sentinel-2 has a relatively low resolution compared to commercial satellites: an offshore wind turbine, for example, occupies only ten pixels in an image, which is not enough to draw many conclusions.

This is where Satlas comes in. Satlas is a new online map created by the Allen Institute for AI, which was founded by the late Microsoft co-founder Paul Allen. It takes the blurry images from the Sentinel-2 satellites and sharpens them, using deep learning models to fill in the blanks and generate high-resolution images.

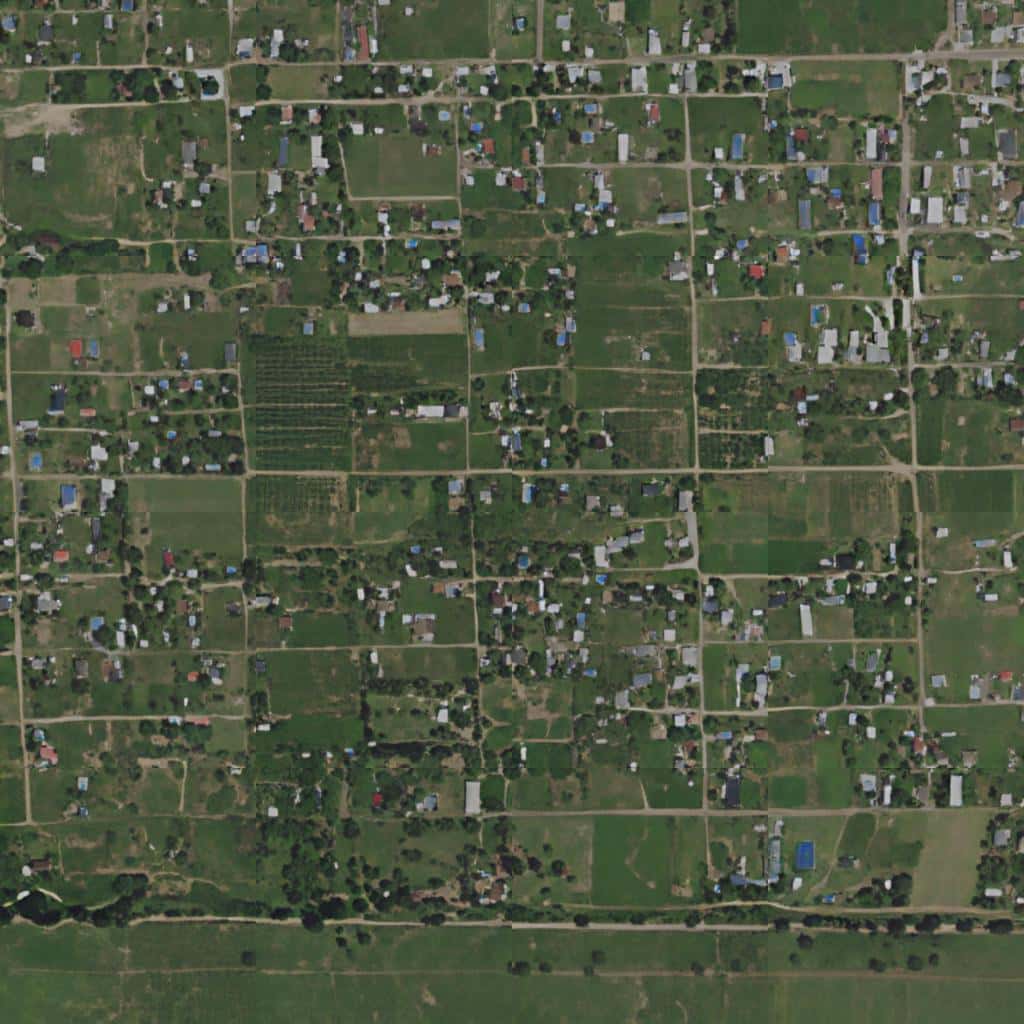

The results are quite striking. Below you can see how a blurry satellite image of a settlement was enhanced.

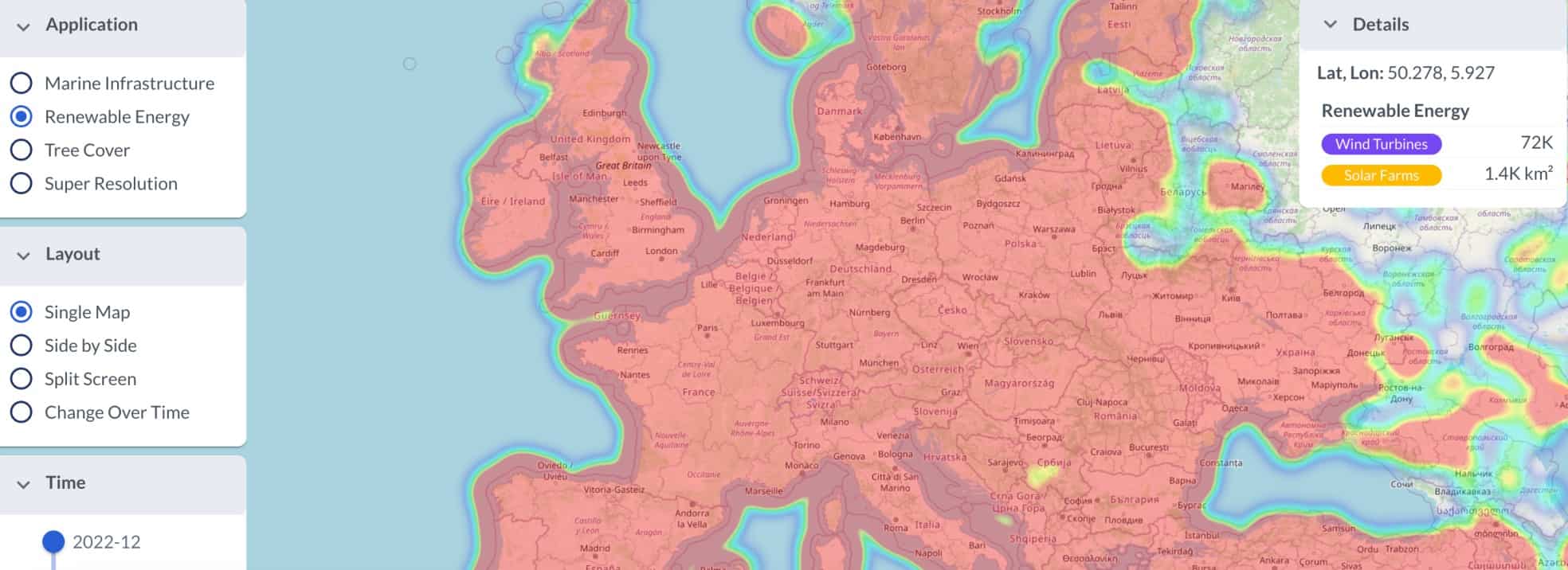

The enhanced map currently includes three types of data: marine infrastructure (offshore wind turbines and platforms), renewable energy infrastructure (onshore wind turbines and solar farms), and tree cover. The data is updated every month and covers the same parts of the world monitored by Sentinel-2 (most of the world excluding Antarctica).

The developers told The Verge that this is one of the first demonstrations of super-resolution imaging (techniques that increase the native resolution of an imaging system) in a global map. It’s free, and the data can be downloaded. You can check out solar farms and wind turbines anywhere you want and analyze the evolution of forest coverage.

It wasn’t an easy job to set it up. The developers had to manually go through images to label wind turbines, offshore platforms, solar farms, and tree cover canopies – training the deep learning models to recognize these features. Then, they fed the model low-res images so it could predict sub-pixel details in the high-res images it generated.
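To get a feel for what "predicting sub-pixel details" means, here is a minimal Python sketch. It is not Satlas's code or method: the tiny 4x4 tile, the function names, and the block-averaging and pixel-repeating steps are all assumptions made for illustration. It shrinks a "high-res" tile into a "low-res" one and then enlarges it again naively, showing that simple enlargement cannot bring back the lost detail. That gap is what a trained super-resolution model tries to fill in.

```python
def downsample(image, factor):
    """Average each factor-by-factor block of pixels into a single pixel.

    Assumes the image height and width are divisible by factor.
    """
    rows, cols = len(image), len(image[0])
    return [
        [
            sum(image[r + dr][c + dc]
                for dr in range(factor)
                for dc in range(factor)) / factor ** 2
            for c in range(0, cols, factor)
        ]
        for r in range(0, rows, factor)
    ]


def upsample_nearest(image, factor):
    """Repeat every pixel factor times in each direction; adds no new detail."""
    return [
        [pixel for pixel in row for _ in range(factor)]
        for row in image
        for _ in range(factor)
    ]


# Hypothetical 4x4 "high-res" tile: a small bright feature on a dark background.
high_res = [
    [0, 0, 0, 0],
    [0, 8, 0, 0],
    [0, 8, 0, 0],
    [0, 0, 0, 0],
]

low_res = downsample(high_res, 2)    # the feature is smeared into two dim pixels
naive = upsample_nearest(low_res, 2)  # bigger image, but the feature stays smeared

print(low_res)
print(naive)
```

In this toy case the bright feature ends up spread across a dim 4x2 block in the enlarged image. A learned model, trained on many pairs of low-res and high-res images, would instead try to guess where the original sharp feature most likely was.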

“Satlas combines the power of modern AI with the scale of public domain satellite imagery to provide monthly monitoring of the planet,” the Satlas website reads. “We leverage the latest advances in AI to robustly process images in this challenging domain and produce a variety of geospatial data products that we make freely available.”

The remaining challenges

While the results are impressive, some details still need to be improved. Satlas, like other AI models, is prone to hallucination, meaning it can generate details that aren’t actually there. Ani Kembhavi, computer vision director at the Allen Institute, told The Verge that the model was drawing buildings in funny ways, such as trapezoidal instead of rectangular.

Satlas is already being used by AI2’s Skylight to detect vessels involved in illegal fishing. The developers hope other earth and environmental scientists and organizations can also start using it soon. Up next, they plan to create other kinds of maps, such as one that can spot the different kinds of crops that are planted across the planet.

“Our goal was to sort of create a foundation model for monitoring our planet,” Kembhavi said. “And then once we build this foundation model, fine-tune it for specific tasks and then make these AI predictions available to other scientists so that they can study the effects of climate change and other phenomena that are happening on the Earth.”
