What you describe in a research grant proposal is important, but how you describe it also matters a lot, new research shows.

The study looked at health research proposals submitted to the Bill & Melinda Gates Foundation and, in particular, at the wording they used. It found that men and women tend to use different types of words in this context, and each type carries its own downsides. Female authors tend to use ‘narrow’ words, meaning more topic-specific language, while men tend to go for ‘broad’ words, the team reports. The findings also point to some of the biases proposal reviewers can fall prey to, and could help in designing more effective automated review software in the future.

The words in our grants

“Broad words are something that reviewers and evaluators may be swayed by, but they’re not really reflecting a truly valuable underlying idea,” says Julian Kolev, an assistant professor of strategy and entrepreneurship at Southern Methodist University’s Cox School of Business in Dallas, Texas, and the lead author of the study.

It’s “more about style and presentation than the underlying substance.”

The narrower language used by female authors seems to result in lower review scores overall, the team notes. However, broad language, which male authors tended to use more, let them down later in the research process: proposals that used more broad words led to fewer publications in top-tier journals after receiving funding. They also weren’t more likely to generate follow-up funding than proposals with narrower language.

The researchers classified words as “narrow” if they appeared more often in proposals on one particular topic than in proposals on other topics. Words that were common across topics were classified as “broad”. In effect, this let the team determine whether a term was specialized for a particular field or more versatile. This data-driven approach produced classifications that might not have been obvious at the outset: “community” and “health” were deemed narrow words, for example, whereas “bacteria” and “detection” were deemed broad.
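To make the idea of a frequency-based narrow/broad split more concrete, here is a toy sketch in Python. It is not the study’s actual procedure: the `classify_words` function, the simple whitespace tokenization, and the 0.5 cutoff are all hypothetical choices made for illustration. Under this rule, a word counts as “narrow” if more than half of its occurrences fall within a single topic, and as “broad” otherwise.

```python
from collections import Counter, defaultdict


def classify_words(proposals, threshold=0.5):
    """Toy narrow/broad labelling (hypothetical rule, not the study's method).

    proposals: list of (topic, text) pairs.
    A word is 'narrow' if the share of its occurrences found in its
    most frequent topic exceeds `threshold`; otherwise it is 'broad'.
    """
    # Count word occurrences separately for each topic.
    counts_by_topic = defaultdict(Counter)
    for topic, text in proposals:
        counts_by_topic[topic].update(text.lower().split())

    # Total occurrences of each word across all topics.
    totals = Counter()
    for counts in counts_by_topic.values():
        totals.update(counts)

    labels = {}
    for word, total in totals.items():
        # Share of the word's occurrences in the topic where it is most common.
        top_share = max(counts[word] for counts in counts_by_topic.values()) / total
        labels[word] = "narrow" if top_share > threshold else "broad"
    return labels


# Made-up example data: three single-sentence "proposals" on different topics.
proposals = [
    ("vaccines", "vaccine trial data"),
    ("sanitation", "latrine survey data"),
    ("nutrition", "diet survey data"),
]
labels = classify_words(proposals)
print(labels["vaccine"], labels["data"])  # narrow broad
```

Here “vaccine” appears only in the vaccines topic, so it is labelled narrow, while “data” is spread evenly across all three topics and is labelled broad. A real analysis would work with thousands of proposals and a more careful definition of topics and word frequencies.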

Reviewers favored proposals with broader words, and those words were used more often by men. So, should we just teach women to write like men? The team “would be hesitant to recommend” it, which is basically science-speak for ‘no’. Kolev says we should instead look at the potential biases reviewers can have, especially in cases where they favor language that doesn’t necessarily result in better research.

“The narrower and more technical language is probably the right way to think about and evaluate science,” he says.

Kolev’s team analyzed 6,794 proposals submitted to the Gates Foundation by US-based researchers between 2008 and 2017, along with the scores reviewers gave them. Overall, they report, reviewers tended to give female applicants lower scores, even though applicants’ identities were hidden from reviewers. This gap held even after the team controlled for a host of factors, such as applicants’ career stage and publication record. The only factor associated with the gap was the language applicants used in their proposal titles and descriptions, the team reports.

The team isn’t sure whether their findings apply to all scientific grant review processes. Other research on the subject, which looked at the peer-review process at the US National Institutes of Health (NIH), didn’t find the same pattern. The effect might be a peculiarity of the Bill & Melinda Gates Foundation.

One explanation may lie in how differently the two organizations approach review. The Gates Foundation draws on reviewers from several disciplines and uses a “champion-based” approach, in which a grant is much more likely to be funded if a single reviewer rates it highly. This less specialized pool of reviewers may be more susceptible to claims that look good on paper (“I’m going to cure cancer!”) than to those that actually make for good science (such as “I’m going to study how this molecule interacts with cancerous cells”). This may unwittingly put women at a disadvantage.

The Gates Foundation hasn’t ignored these findings; in fact, it requested the study and gave the team access to its peer-review data and proposals. According to a written statement, the organization is “committed to ensuring gender equality” and is “carefully reviewing the results of this study”, along with its own internal data, “as part of our ongoing commitment to learning and evolving as an organization.”

The findings also have interesting implications for automated text-analysis software, which will increasingly take on tasks like this in the future. On the one hand, they show how changing a proposal’s wording can trick even human reviewers, never mind a bit of code, into considering it more valuable when it isn’t. On the other hand, the findings can help us iron out these kinks.

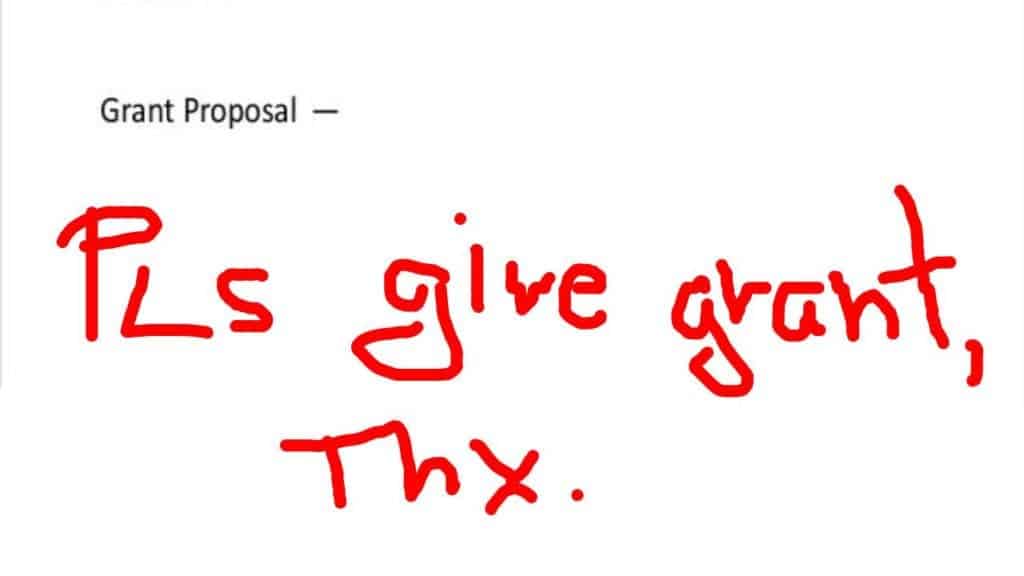

But that’s the larger picture. If you happen to be involved in academia and are working hard on a grant proposal, the study shows how important it is to tailor your proposal to the peer-review process. The contrasting Gates and NIH results show there isn’t a one-size-fits-all approach here, but you don’t need to be an expert to get it right: there are online services that can help you with the style and terminology of a proposal.

The paper “Is Blinded Review Enough? How Gendered Outcomes Arise Even Under Anonymous Evaluation” has been published as a National Bureau of Economic Research (NBER) working paper.
