The 3-D printing industry is growing, and it’s growing fast. It’s no wonder, either. We’re on the brink of a small-manufacturing revolution, similar to how inkjet printers revolutionized home offices, only on a totally different scale. So, your kid’s toy broke? No need to buy a new one: just print the broken part and fix it yourself. The same could work for virtually any kind of commercially available product, from microwave components to plumbing fittings.

One obstacle in the way of the 3-D printing revolution, however, is the actual part design. To print a 3-D object you first need a 3-D model of the object in question. Sure, libraries are popping up all over the internet, some of which already offer tens of thousands of 3-D models, from sprockets to speaker housings. All of this is useless, however, if you can’t find exactly what you need, and that’s a pity considering your 3-D printer can render just about anything.

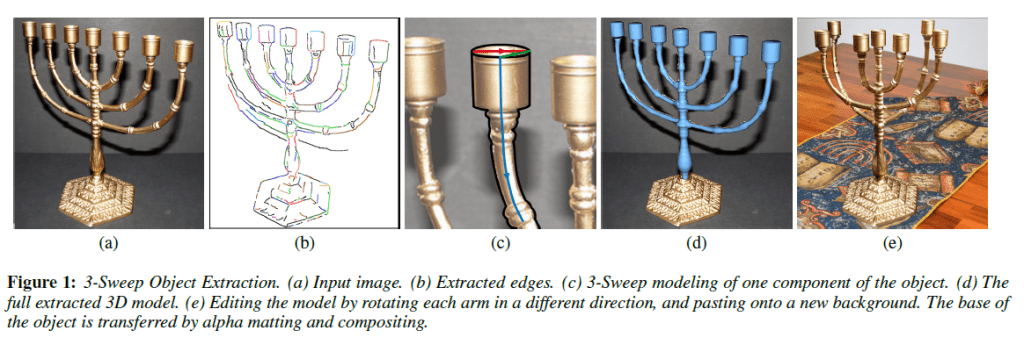

Ariel Shamir, of the Interdisciplinary Center at Herzliya, and Daniel Cohen-Or and Tao Chen of Tel Aviv University hope to solve this problem with their 3-Sweep software, which can instantly create 3-D models of objects from 2-D photographs. Using this simple idea, anyone could make their own models just by taking clear photos of the desired object.

Some CAD software companies have been offering this option for years, but most of the time these functions are pretty sloppy. You see, it’s very difficult for software to understand where an object begins and where it ends in a static photograph. Humans, however, have an innate ability to recognize unique object features instantly. It’s by exploiting this idea that 3-Sweep shines. Basically, the software works in collaboration with the human user, who identifies simple three-dimensional objects for the computer by drawing a line across each of the object’s three basic axes.
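To get a feel for why three strokes can be enough for a simple shape, here is a minimal sketch of the general "sweep" idea. It is not the researchers' algorithm, just an illustration under a loud assumption: two of the user's strokes give the width and depth of a cross-section, and the third gives the length of the axis that cross-section is swept along. The function name `sweep_cylinder` and all of its parameters are made up for this example.

```python
import math


def sweep_cylinder(width, depth, axis_length, segments=16, slices=4):
    """Sweep an elliptical cross-section along the z axis.

    Hypothetical illustration only: `width` and `depth` stand in for two
    user strokes across the object's profile, and `axis_length` stands in
    for the third stroke along its main axis.
    Returns a list of (x, y, z) vertices, one ring per slice.
    """
    vertices = []
    for i in range(slices + 1):
        # Position of this ring along the sweep axis.
        z = axis_length * i / slices
        for j in range(segments):
            # Walk around the elliptical profile.
            angle = 2 * math.pi * j / segments
            x = 0.5 * width * math.cos(angle)
            y = 0.5 * depth * math.sin(angle)
            vertices.append((x, y, z))
    return vertices


# A can-like shape: 2 units wide and deep, 5 units tall.
can = sweep_cylinder(2.0, 2.0, 5.0, segments=8, slices=2)
print(len(can), "vertices")
```

A real tool would also have to recover these measurements from the photo and account for the camera's perspective, which is the hard part this sketch skips entirely.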

From there on, the software highlights the object and the user can manipulate it at will: rotate it, skew it, resize it, translate it, whatever. It can smooth edits by replicating the lighting and texture of one part of an object and applying them to another when the user signals that a part brought in from a different image forms part of a single whole. Really, the whole thing is amazing! The video presentation below illustrates the software’s capabilities better than I could ever describe. Have a look.

It doesn’t work for every type of object, though. First of all, the photos need to be very clear and high resolution, which is not much of a problem considering how capable today’s digital cameras are. Then the object needs to be symmetric and rather simple, so an engine or anything with a lot of small, intricate parts won’t work, at least not yet.

“We show that with this intelligent interactive modeling tool, the daunting task of object extraction is made simple. Once the 3D object has been extracted, it can be quickly edited and placed back into photos or 3D scenes, permitting object-driven photo editing tasks which are impossible to perform in image-space. We show several examples and present a user study illustrating the usefulness of our technique.”

No word yet on when this software might be available to consumers or whether it will cost any money. Most likely, more info will follow once the researchers officially present their paper at SIGGRAPH Asia in December.

via Singularity Hub
