- Researchers have developed a camera that can capture translucent objects in 3D

- The camera costs just $500

- The camera could be used in medical imaging and collision-avoidance detectors, among other applications

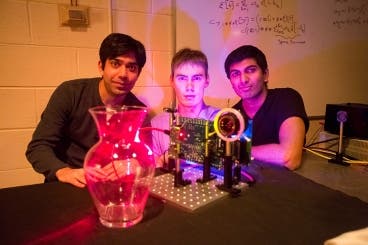

A $500 “nano-camera” that can operate at the speed of light and take 3D pictures has been developed by researchers in the MIT Media Lab.

The camera, which was presented last week at Siggraph Asia in Hong Kong, could be very useful in medical imaging, in collision-avoidance detectors for cars, and in motion tracking and gesture recognition technology. The camera is based on “Time of Flight” technology, like that used by Microsoft in its second-generation Kinect device, in which the location of objects is calculated from how long it takes a light signal to reflect off a surface and return to the sensor. However, unlike existing devices based on this technology, the new camera is not fooled by rain, fog, or even translucent objects.

“Using the current state of the art, such as the new Kinect, you cannot capture translucent objects in 3-D,” says Achuta Kadambi, a graduate student at MIT and co-author of the work. “That is because the light that bounces off the transparent object and the background smear into one pixel on the camera. Using our technique you can generate 3-D models of translucent or near-transparent objects.”

In a conventional Time of Flight camera, a light signal is fired at a scene, where it bounces off an object and returns to strike a pixel on the sensor. Because the speed of light is known, it is straightforward to calculate the distance the light has traveled and therefore the depth of the object it was reflected from. However, changing weather conditions such as fog create additional reflections that mix with the original signal, introducing noise and making it hard to tell which measurement is correct.
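As a minimal sketch of the round-trip calculation described above (the function name and the 10-nanosecond example value are illustrative assumptions, not details from the MIT work), the depth is half the distance light covers during the measured round trip:

```python
# Speed of light in vacuum, in metres per second (exact by definition).
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def depth_from_round_trip(round_trip_seconds: float) -> float:
    """Return the depth in metres of a surface, given the light's round-trip time.

    The light travels to the object and back, so the one-way
    distance is half of the total path length.
    """
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


# Hypothetical measurement: the reflection arrives 10 nanoseconds after emission.
example_depth_m = depth_from_round_trip(10e-9)
print(f"{example_depth_m:.3f} m")  # about 1.499 m
```

The sketch assumes a single clean reflection per pixel. When several reflections arrive at the same pixel, one round-trip time no longer describes the scene, which is the problem the rest of the article addresses.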

Instead, the new device uses an encoding technique commonly used in the telecommunications industry to calculate the distance a signal has traveled.

“We use a new method that allows us to encode information in time,” says Ramesh Raskar, an associate professor of media arts and sciences at MIT. “So when the data comes back, we can do calculations that are very common in the telecommunications world, to estimate different distances from the single signal.”
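To illustrate how a time-coded signal can reveal more than one distance in a single return, here is a simplified sketch. It is not the MIT team's algorithm: it uses a generic telecommunications-style technique (correlating the received signal against a known pseudo-random code), and the code length, the one-nanosecond sample period, and the two simulated paths are all assumptions chosen for illustration.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0
SAMPLE_PERIOD_S = 1e-9  # assumption: one code sample per nanosecond


def m_sequence() -> list[int]:
    """Length-127 maximal-length sequence, mapped from bits to +1/-1.

    Built from the recurrence s[n] = s[n-7] XOR s[n-6]. Its circular
    autocorrelation is sharply peaked, which makes delayed copies easy to separate.
    """
    bits = [1] * 7  # any non-zero seed works
    while len(bits) < 127:
        bits.append(bits[-7] ^ bits[-6])
    return [1 if b else -1 for b in bits]


def simulate_return(code: list[int], paths: list[tuple[int, float]]) -> list[float]:
    """Sum delayed, attenuated copies of the code: one copy per light path."""
    n = len(code)
    received = [0.0] * n
    for delay, amplitude in paths:
        for i in range(n):
            received[i] += amplitude * code[(i - delay) % n]
    return received


def correlate(received: list[float], code: list[int]) -> list[float]:
    """Circular cross-correlation of the received signal with the known code."""
    n = len(code)
    return [
        sum(received[i] * code[(i - k) % n] for i in range(n)) / n
        for k in range(n)
    ]


def strongest_delays(correlation: list[float], count: int) -> list[int]:
    """Return the `count` delays with the largest correlation, in ascending order."""
    ranked = sorted(range(len(correlation)), key=lambda k: correlation[k], reverse=True)
    return sorted(ranked[:count])


code = m_sequence()
# Hypothetical scene: a weak reflection from a translucent sheet (delay 12)
# and a stronger reflection from the background behind it (delay 30).
received = simulate_return(code, [(12, 0.4), (30, 1.0)])
correlation = correlate(received, code)
delays = strongest_delays(correlation, 2)

for delay in delays:
    depth_m = SPEED_OF_LIGHT_M_PER_S * delay * SAMPLE_PERIOD_S / 2.0
    print(f"delay {delay} samples -> depth {depth_m:.2f} m")
```

Both reflections land in the same simulated pixel, yet the correlation shows two distinct peaks, one per path. A real sensor also has to handle noise, blur, and delays that fall between samples, which this sketch ignores.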

The new camera probes the scene with a continuous-wave signal that oscillates at nanosecond periods. This allows the team to use inexpensive hardware: off-the-shelf light-emitting diodes (LEDs) can strobe at nanosecond periods.
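For context, continuous-wave Time of Flight sensors in general often estimate depth from the phase shift between the emitted and returned wave. The sketch below shows that standard relation. The article does not say the MIT camera computes depth this way, and the 50 MHz modulation frequency (a 20-nanosecond period) is an assumed value for illustration only.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def depth_from_phase(phase_shift_rad: float, modulation_hz: float) -> float:
    """Depth in metres implied by the phase shift of a continuous-wave signal.

    A full 2*pi shift corresponds to one modulation period of round-trip
    travel, hence the factor of 4*pi (2*pi for the cycle, 2 for the round trip).
    """
    return SPEED_OF_LIGHT_M_PER_S * phase_shift_rad / (4.0 * math.pi * modulation_hz)


def unambiguous_range(modulation_hz: float) -> float:
    """Largest depth in metres measurable before the phase wraps around."""
    return SPEED_OF_LIGHT_M_PER_S / (2.0 * modulation_hz)


MODULATION_HZ = 50e6  # assumed value: a 20-nanosecond modulation period

print(f"{unambiguous_range(MODULATION_HZ):.3f} m")          # about 2.998 m
print(f"{depth_from_phase(math.pi, MODULATION_HZ):.3f} m")  # about 1.499 m
```

A shorter modulation period gives finer depth resolution but a shorter unambiguous range, which is one reason nanosecond-scale modulation suits room-sized scenes.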

“By solving the multipath problem, essentially just by changing the code, we are able to unmix the light paths and therefore visualize light moving across the scene,” Kadambi says. “So we are able to get similar results to the $500,000 camera, albeit of slightly lower quality, for just $500.”

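What makes the team’s approach even more interesting is that it is very similar to the technology already shipping in devices such as the new version of Kinect, says James Davis, an associate professor of computer science at the University of California at Santa Cruz.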

“So it’s going to go from expensive to cheap thanks to video games, and that should shorten the time before people start wondering what it can be used for,” he says. “And by the time that happens, the MIT group will have a whole toolbox of methods available for people to use to realize those dreams.”
