Can you imagine an imaginary menagerie manager imagining managing an imaginary menagerie?

Sorry about that, folks. That was a bit twisted, right? If you just said it out loud, you used your lips, tongue, jaw and larynx in a highly complex, coordinated way to produce those sounds. Still, very little is known about how the brain actually manages this complex tongue-twisting dance. A recent study from scientists at the University of California, San Francisco aims to shed light on the neural codes that control the production of smooth speech and, in the process, to improve our understanding of how we speak.

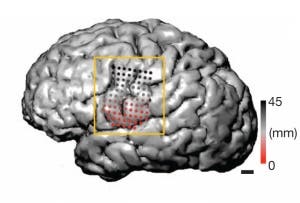

Until now, data on how the brain controls the vocal tract have been scarce. Recently, however, a team of US researchers made the most detailed recording of its kind, at millimeter and millisecond resolution. They recorded brain activity in three people with epilepsy using electrodes that had been implanted in the patients' cortices as part of routine presurgical electrophysiological monitoring.

As you might imagine, this produced huge amounts of data. To make sense of it, the researchers developed a complex multidimensional statistical algorithm that filtered the recordings down to the information describing how neural building blocks combine to form the speech sounds of American English.

First, the researchers found that neurons fired differently when a person uttered a consonant than when they uttered a vowel, even though both kinds of sound "use the exact same parts of the vocal tract," says study author Edward Chang, a neuroscientist at the University of California, San Francisco.

The team also found that the brain seems to coordinate articulation not by what the resulting phonemes sound like, as had been hypothesized, but by how the muscles need to move. The data revealed three categories of consonants: front-of-the-tongue sounds (such as 'sa'), back-of-the-tongue sounds ('ga') and lip sounds ('ma'). Vowels split into two groups: those that require rounded lips and those that do not ('oo' versus 'aa').

“This implies that tongue twisters are hard because the representations in the brain greatly overlap,” Chang says.

Although the study had a very small sample, made up entirely of patients with a neurological condition, its findings nevertheless provide invaluable information on a subject that has been all too poorly studied. Many people suffer from speech impairments, whether from brain damage caused by accidents or from all-too-common strokes.

“If we can crack the neural code for speech motor control, it could open the door to neural prostheses,” Hickok says. “There are already neural implants that allow individuals with spinal-cord injuries to control a robotic arm. Maybe we could do something similar for speech?”

The findings were published in the journal Nature.
