This AI was designed to work with text. Now, researchers have tweaked it to work with images, predicting pixels and filling in incomplete images.

GPT-2 is a text-generating algorithm. Trained on about 8 million web pages (roughly 40 GB of text), it absorbs the structure of that text and then writes texts of its own, starting from simple prompts. The algorithm also uses unsupervised learning: the training text doesn’t need to be labeled by hand, which saves researchers a great deal of time. The AI system was presented in February 2019 and proved capable of writing convincing passages of English.

Now, researchers have set GPT-2 to a different task: working with images.

The algorithm itself is not well-suited to working with images, at least not in a conventional sense. It was designed to work with one-dimensional data (strings of letters), not 2D images.

To get around this limitation, researchers unfurled images into a single string of pixels, essentially treating pixels as if they were letters. They called the version of the algorithm trained this way iGPT.
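To make the "unfurling" step concrete, here is a minimal Python sketch. It assumes the pixels are read row by row, left to right, which the description above does not spell out, and it uses a tiny made-up grayscale grid. The function names `unfurl` and `refold` are hypothetical, chosen only for illustration; this is not the researchers' code.

```python
def unfurl(image):
    """Flatten a 2D grid of pixel values into a 1D sequence, row by row.

    Assumption: row-by-row order; other orderings are possible.
    """
    return [pixel for row in image for pixel in row]


def refold(sequence, width):
    """Rebuild the 2D grid from the 1D sequence, given the row width."""
    if width <= 0 or len(sequence) % width != 0:
        raise ValueError("sequence length must be a multiple of width")
    return [sequence[i:i + width] for i in range(0, len(sequence), width)]


# A tiny 3x4 grayscale "image" (illustrative values: 0 = black, 255 = white).
image = [
    [0, 64, 128, 255],
    [10, 74, 138, 245],
    [20, 84, 148, 235],
]

sequence = unfurl(image)
print(sequence)                            # one long string of 12 pixel values
print(refold(sequence, width=4) == image)  # folding it back recovers the grid
```

Once an image is a flat sequence like this, a model built for strings of letters can treat each pixel value the way it would treat a character.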

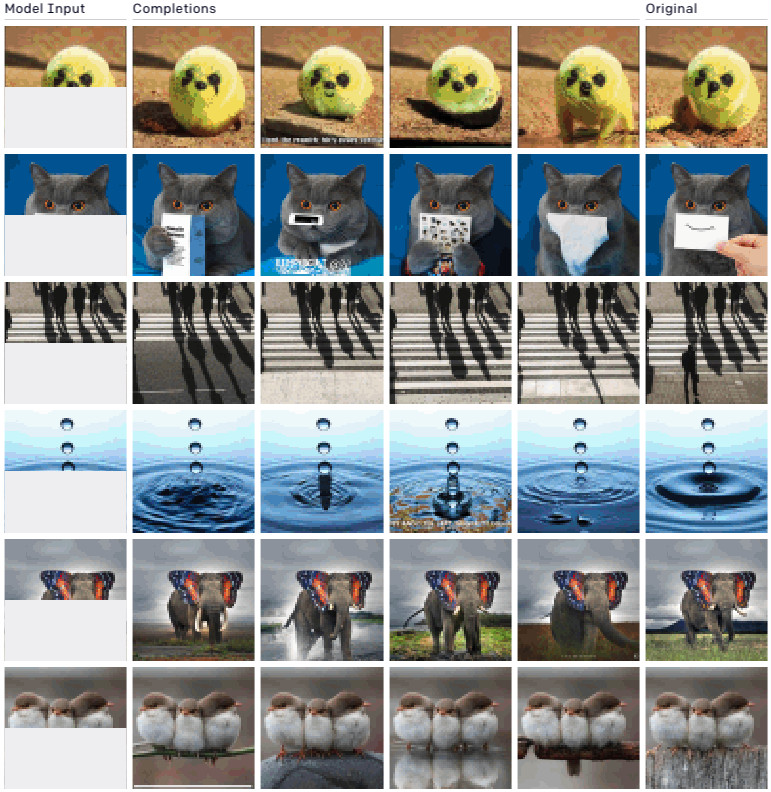

They then fed the AI one half of an image and asked it to complete the picture. Here are some examples:

The results are already impressive. The lower halves of the photos above were all generated by the AI, pixel by pixel, and they look eerily realistic. The three birds, for instance, are shown standing on different surfaces, all of them believable. The water droplets, too, show different plausible outcomes. All in all, it’s an impressive accomplishment for iGPT.
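The "pixel by pixel" part can be sketched in a few lines of Python as well. The loop below starts from the known top rows, flattens them into a sequence, and keeps asking a predictor for the next pixel until the image is full. The predictor here, `copy_pixel_above`, is a deliberately trivial stand-in invented for this example (it just repeats the pixel one row up); a trained model like iGPT would take its place. All names are hypothetical.

```python
def complete_image(top_rows, total_rows, predict_next):
    """Extend a partial image one pixel at a time until it has total_rows rows."""
    width = len(top_rows[0])
    # Flatten the known rows into a single sequence (row-by-row order assumed).
    sequence = [pixel for row in top_rows for pixel in row]
    while len(sequence) < total_rows * width:
        # Each new pixel is predicted from everything generated so far.
        sequence.append(predict_next(sequence, width))
    # Fold the finished sequence back into rows.
    return [sequence[i:i + width] for i in range(0, len(sequence), width)]


def copy_pixel_above(sequence, width):
    """Toy stand-in for a trained model: repeat the pixel directly above."""
    return sequence[-width]


top_half = [
    [1, 2, 3],
    [4, 5, 6],
]

for row in complete_image(top_half, total_rows=4, predict_next=copy_pixel_above):
    print(row)
```

With this toy predictor the bottom half is simply a copy of the last known row. The interesting behavior in the real system comes entirely from what replaces `copy_pixel_above`.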

This also hints at one of the holy grails of machine learning: generalizable algorithms. Today, an AI can be very good at a single task (whether it’s chess, text, or images), but that is still only one task. Using one algorithm for multiple tasks is an encouraging sign for generalizable approaches.

The results are even more exciting when you consider that GPT-2 is already last year’s AI. Researchers recently presented the next generation, GPT-3, and it’s already putting its predecessor to shame by generating some stunningly realistic texts.

There’s no telling what GPT-3 will be capable of, both in terms of text generation and image generation. It’s exciting — and a little bit scary — to imagine the results.

The original paper can be read here.
