The programmer’s wife told her husband: “Go to the store and buy a loaf of bread. If they have eggs, buy a dozen.”

The husband returns with 12 loaves of bread. Why? Because they had eggs.

The joke hinges on our intuitive understanding of how silly the situation is. It’s obviously not what the programmer should have done, but he was following an algorithm. Let’s take a dive into the surprisingly down-to-earth world of algorithms.

Cooking is an algorithm

The mere word is enough to evoke images of smartphones, apps, computers, and math. But we all employ algorithms, all the time, even when our favorite device isn’t in reach and we don’t call them ‘algorithms’. Every single day, humans use algorithms. You can make an argument that animals and maybe even plants, to some extent, do as well. We often feel that algorithms are weird and computer-like, but they’re really not.

So what are algorithms?

In essence, an algorithm is a set of instructions. Want to multiply two numbers, there’s an algorithm for how to do it. Want to make an omelet? There’s an algorithm for it: you pour oil into a pan, then heat it up, then break eggs, then put the eggs into the pan, then take them out when they’re done. It’s a sequence of instructions we follow so in essence, an algorithm. In fact, every cooking recipe is essentially an algorithm.

Here’s an even simpler example. You look out the window before heading out. If it’s raining, you take your umbrella. If not, you don’t. Think of algorithms as step-by-step guide to solving a problem.

So whenever you follow a set of instructions, you’re essentially going through an algorithm. It has to be unambiguous and it has to be precise. Remember the programmer joke from above? That algorithm wasn’t very clear, that’s why the two parties understood different things from it. An informal definition could be “a set of rules that precisely defines a sequence of operations.”

Of course, while cooking can loosely be considered an algorithm, the word is typically used in a different context — not necessarily technological, but just math. You can think of algorithms, especially those that underpin software, as math applied to certain tasks. But algorithms aren’t a new thing.

The origin of algorithms

The word ‘algorithm’ itself comes from the name of Persian mathematician Muhammad ibn Musa al-Khwarizmi. He was an important scholar, the most widely read mathematician in Europe in the late Middle Ages, primarily through one of his books, The Algebra. In the year 825, al-Khwarizmi wrote an Arabic language treatise on the Hindu-Arabic numeral system, a book which was translated into Latin in the 1100s. The manuscript starts with the phrase ‘Dixit Algorizmi’ (‘thus spake Al-Khwarizmi’). It came out as a funny word game to many: ‘Algorizmi’ was the translators’ Latinization of his name, but ‘algorism’ also meant the decimal number system.

The word was first used in English in the year 1230 and then by Chaucer in 1391, and was used several times in late medieval Latin. But it wasn’t until the late 19th century that “algorithm” took on the meaning that it has today. But it was this medieval mathematician’s Latinized surname that would become synonymous with the ordered instructions we now call algorithms.

Although the word wasn’t routinely used, the concept was used for a long time. Several notable algorithms date from Ancient times. The sieve of Eratosthenes, used to detect prime numbers, is a good example. It works like this:

- Create a list of consecutive integers from 2 through to the number you want to analyze — let’s call this n: (2, 3, 4, …, n).

- Start from 2 the smallest prime number.

- The number 2 is a prime. Chalk that off as a prime number and then eliminate all the multiples of 2 from your list (2, 4, 6, 8, etc).

- Move on to the next number on your list: 3. Chalk it off as a prime and then eliminate all its multiples from your list (3, 6, 9, 12, etc — though 6 and 12 should already be eliminated as they are multiples of two).

- Do this until you reach n. You now have a list of all the prime numbers to n.

You can be a bit clever and refine the algorithm even more. You don’t need to go all the way to n with the numbers, you just need to go to √n. You can also skip a few numbers in step 3 and start from the square of the number you’re sieving with (for more detailed explanations on why this works, check the Wikipedia page).

The Sieve of Eratosthene is still taught today as a simple but effective way to manually find prime numbers. Another ancient algorithm still in use is Euclid’s algorithm, for computing the greatest common divisor of two integers, described in 300 BC.

So although algorithms hadn’t been thoroughly formalized, they were still in use for a very long time. In modern times, however, algorithms have tended to become synonymous with computation.

Computer algorithms

Computers are a relatively recent invention, believe it or not. But they’ve changed the world in many ways, and they’ve changed how we view certain words too — like algorithms. Nowadays, when people say algorithm, they almost always mean something for a computer to execute. Strictly speaking, this doesn’t need to be the case, but it’s how most people use the word.

Algorithms can range from simple to very complex. Take the famous Google PageRank algorithm for instance. Every time you Google something, a swarm of parameters determine which results pop up first. It’s not just how informative the page itself is, but how much authority the website has, how knowledgeable the page author is, your browsing habits, etc — it’s the “secret sauce” that makes Google, well, Google.

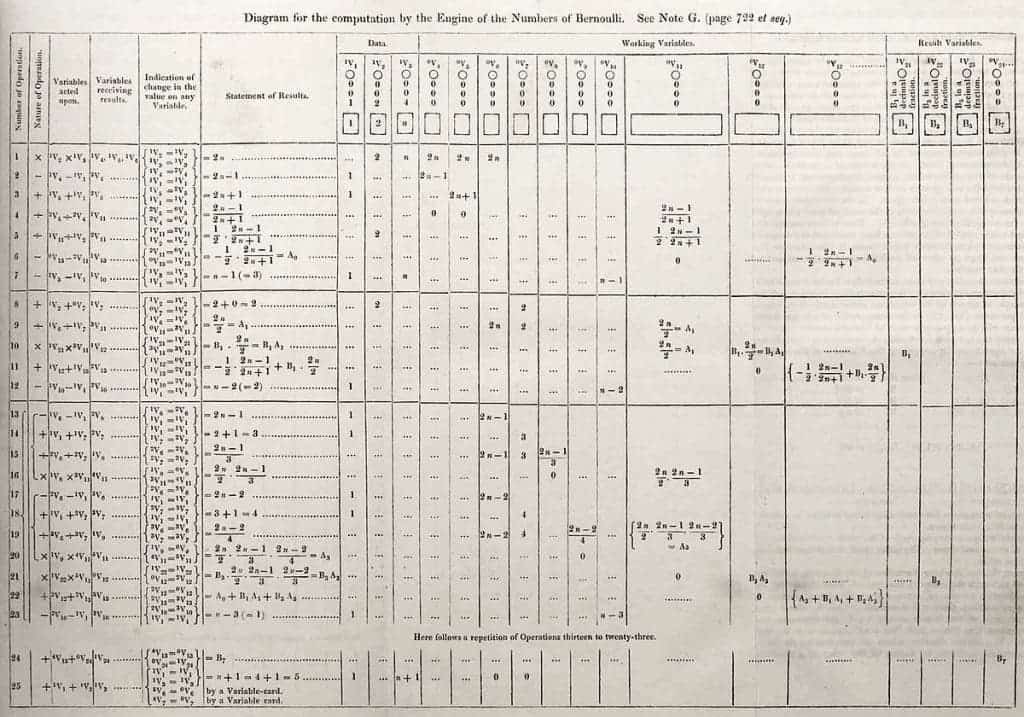

But let’s take a simpler algorithm, and one we’re already familiar with: the Sieve of Eratosthenes. We’ve seen how a human would go through it, but how would a computer do it?

For a human to go through numbers from, say 1 to 100 with the sieve could take a few minutes. For a human to go through 10,000 could take all day, and there’s a lot of room for error. But a computer could do it in a few seconds, or even less. Let’s look at this algorithm through something called pseudocode. Pseudocode is an artificial and informal language that helps programmers develop algorithms. It’s essentially”text-based” algorithmic design tool.

In pseudocode, the algorithm would look like this:

algorithm Sieve of Eratosthenes is

input: an integer n > 1.

output: all prime numbers from 2 through n.

let A be an array of Boolean values, indexed by integers 2 to n,

initially all set to true.

for i = 2, 3, 4, ..., not exceeding √n do

if A[i] is true

for j = i2, i2+i, i2+2i, i2+3i, ..., not exceeding n do

A[j] := false

return all i such that A[i] is true.

Of course, pseudocode is a simplified version of thinking about algorithms. To communicate it to a computer, you’d need something called a programming language. Since there are multiple types of programming languages, this could end up looking somewhat different since programming languages are different, but the essence is the same — the algorithm is the same.

In order to get the algorithm to be executed though, you have to be precise about how you write it. As the old saying goes, a computer does what you tell it to do, not what you want it to do.

Building an algorithm

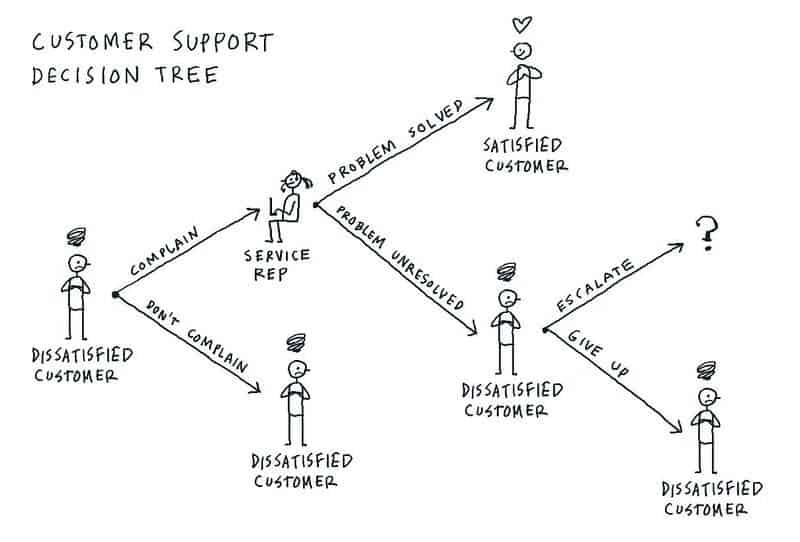

Algorithms often work as decision trees so you need three basic conditional elements to build an algorithm: if, and, or.

The ‘if‘ is one of the fundamental building blocks of all algorithms. If a condition A is met, then the algorithm does B. To add a bit more flexibility, you have ‘and’ and ‘or’ to satisfy multiple conditions or one of multiple conditions. An ‘else‘ is often used to tell the algorithm what to do if the condition isn’t satisfied.

Some algorithms are ‘recurring’, which means they do something until a condition is satisfied (or not satisfied). For instance, the Sieve of Eratosthenes continues while you are at a number from 2 to n.

There are many types of algorithms, and each field has its own type of algorithms with its own peculiarities. The more complex a field becomes, the more complex the algorithms often become. You can have sorting algorithms, optimizing algorithms, graph algorithms, and of course, machine learning.

Machine learning

Needless to say, mankind has developed some tremendously complex algorithms, which are used for multiple things ranging from product recommendations to air traffic control. Nowadays, the most complex ones use something called machine learning.

We’ve mentioned before that when a human writes an algorithm, it needs to be very precise. Everything needs to be laid out in excruciating detail. This works in many situations, but there are alternatives. In machine learning, a human feeds a program raw data as a starting point and an end point where the results are organized in the desired fashion. The program is then asked to figure out the algorithm to get from A to B. Machine learning algorithms build a model based on sample data. Then, after this training, it can make predictions and decisions about other types of data. In a traditional algorithm, the programmer would tell the computer how to make an omelet from eggs. In machine learning, you’d feed a computer eggs and an omelet and ask it to figure out how the omelet was made, and then it could figure out how to make other foods.

Machine learning is often regarded as a part of Artificial Intelligence, although the capabilities and limitations of the approach are often misunderstood. In terms of approach, machine learning is very similar to statistics, although their goal is often distinct.

Machine learning can even be unsupervised, without programmers that supervise the model and the algorithm “learning” by itself. A good example of this is the chess program MuZero that wasn’t even taught the rules of chess — it was simply left to play with a highly performant machine. After a while, it matched and even overcame the ‘trained’ engine.

Of course, whenever we think about smarter algorithms, our thoughts jump to the next bit leap: true artificial intelligence. The truth is, we’re still very, very far away from any form of ‘real’ artificial intelligence. It’s not clear if it can ever be done. Programs like MuZero are typically very good at one single task, but they’re not good at adapting to real-life situations. Still, you could argue that our entire personality is essentially one big, complex algorithm, and bio-inspired algorithms are a thing, but we’re still very far from something like that. No doubt though, as time passes and our society becomes more and more technology-based, algorithms will also play a more important role.

From making an omelet to building artificial life, it’s all about algorithms. We’d best get used to them.

Was this helpful?