Researchers have developed a method to identify impoverished areas using freely available satellite imagery.

We know a lot about poverty in a general sense, but local information is often scarce and difficult to obtain. Especially in Africa, impoverished and heavily impoverished areas first have to be identified and delineated before help can reach them. That identification is not easy, and it can be time-consuming and expensive. This is where the new study steps in.

“We have a limited number of surveys conducted in scattered villages across the African continent, but otherwise we have very little local-level information on poverty,” said study coauthor Marshall Burke, an assistant professor of Earth system science at Stanford and a fellow at the Center on Food Security and the Environment. “At the same time, we collect all sorts of other data in these areas – like satellite imagery – constantly.”

That got the team thinking: how could readily available information from satellites be used to detect poverty? A good starting point is night lights. You can tell a lot about an area from how brightly it is lit at night. Take the clear example of North vs. South Korea: North Korea is a sea of darkness bordered by the bright lights of South Korea to the south and China to the north.

But night lights don’t tell the whole story. Their absence is an indicator of poverty, but it says nothing about the degree of poverty and can be misleading in certain conditions. So the team tried a more complex approach: they combined nighttime images with images taken during the day, looking for the characteristics that impoverished areas share.

At first, this was difficult because reliable data on which areas are actually rich or poor was quite scarce.

“There are few places in the world where we can tell the computer with certainty whether the people living there are rich or poor,” said study lead author Neal Jean, a doctoral student in computer science at Stanford’s School of Engineering. “This makes it hard to extract useful information from the huge amount of daytime satellite imagery that’s available.”

However, as the algorithm was refined, it became more and more accurate. In essence, the researchers correlated nighttime brightness with high-resolution daytime imagery to identify areas lacking economic development. They also drew on finer-grained cues, such as the material roofs are made of and the distance of settlements from urban areas.

“Without being told what to look for, our machine learning algorithm learned to pick out of the imagery many things that are easily recognizable to humans – things like roads, urban areas and farmland,” said Jean.
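To make the two-step idea above more concrete, here is a minimal sketch in Python. It is not the researchers’ code: the data is entirely synthetic, plain ridge regression stands in for whatever image model the study used, and every name in it (`daytime`, `night_lights`, `wealth`, `fit_ridge`, `light_proxy`) is a hypothetical chosen for illustration. The first step learns to predict night-light brightness from daytime features, which are available for many locations. The second step uses that learned signal to estimate wealth from only a handful of surveyed locations.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-in data; every number here is made up for illustration.
n_locations, n_features = 2000, 20
development = rng.normal(size=n_locations)   # hidden level of economic development
loadings = rng.normal(size=n_features)       # how development shows up in each daytime feature
daytime = np.outer(development, loadings) + rng.normal(size=(n_locations, n_features))
night_lights = development + 0.3 * rng.normal(size=n_locations)
wealth = 2.0 * development + 0.5 * rng.normal(size=n_locations)


def fit_ridge(X, y, alpha=1.0):
    """Closed-form ridge regression; the last weight is an unpenalised bias."""
    Xb = np.hstack([X, np.ones((len(X), 1))])
    penalty = alpha * np.eye(Xb.shape[1])
    penalty[-1, -1] = 0.0
    return np.linalg.solve(Xb.T @ Xb + penalty, Xb.T @ y)


def predict(X, weights):
    """Apply weights produced by fit_ridge to new rows."""
    Xb = np.hstack([X, np.ones((len(X), 1))])
    return Xb @ weights


holdout = slice(0, 500)       # locations kept aside to check the result
surveyed = slice(500, 530)    # only 30 locations have survey-based wealth data
with_lights = slice(500, 2000)  # night lights are observed for many locations

# Step 1: learn which daytime features go along with bright night lights.
w_lights = fit_ridge(daytime[with_lights], night_lights[with_lights])


def light_proxy(X):
    """Predicted night-light brightness, used as a single learned feature."""
    return predict(X, w_lights).reshape(-1, 1)


# Step 2: map the learned feature to wealth using the few surveyed locations.
w_wealth = fit_ridge(light_proxy(daytime[surveyed]), wealth[surveyed])

# Check: how well do the estimates track wealth at locations never surveyed?
holdout_pred = predict(light_proxy(daytime[holdout]), w_wealth)
r = np.corrcoef(holdout_pred, wealth[holdout])[0, 1]
print(f"correlation on held-out locations: {r:.2f}")
```

The point of the design is that the plentiful signal (night lights) does most of the teaching, so the scarce signal (survey data) only has to calibrate the final estimate.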

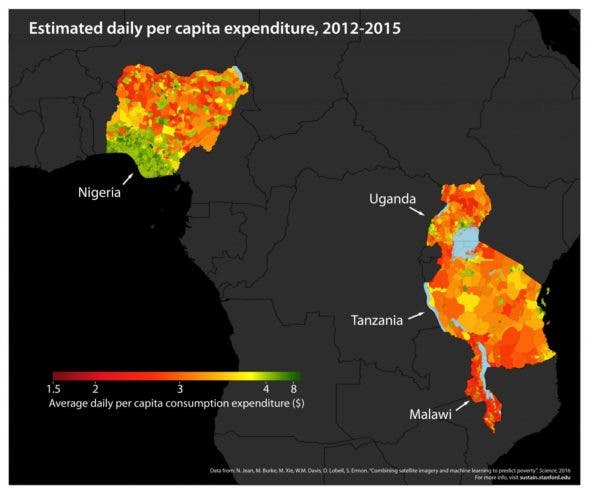

After the calibration phase, they tested the model on real data, initially from five African countries: Nigeria, Tanzania, Uganda, Malawi, and Rwanda. The model isn’t perfect, but it proved “strongly predictive.” In other words, it mapped poverty and extreme poverty more accurately than any existing method short of door-to-door surveys.

“Our paper demonstrates the power of machine learning in this context,” said study co-author Stefano Ermon, assistant professor of computer science and a fellow by courtesy at the Stanford Woods Institute for the Environment. “And since it’s cheap and scalable – requiring only satellite images – it could be used to map poverty around the world in a very low-cost way.”

This could help policymakers design better measures to alleviate poverty, saving money in the process and ensuring that aid reaches the areas that need it most.

Journal Reference: “Combining satellite imagery and machine learning to predict poverty”. For more information, visit the research group’s website at: http://sustain.stanford.edu/
