We live in a highly connected and mobile world. Technology and media have become such integral parts of our lives that we spend 10 hours a day looking at screens. A fifth of that time is spent on social media. YouTube viewers spend an average of 40 minutes per session watching videos. All of this happens around the clock and around the globe. So have you ever wondered how these websites stay available anytime, anywhere, and on demand?

Websites like YouTube, Facebook, and other popular names need to be running 24/7 and have to keep up with demand. They have to remain accessible to billions of users worldwide. The time a website spends up and running is called “uptime.” Maintaining uptime is no easy feat, especially for high-traffic websites. It requires significant computing power to process all the requests and data being transferred across the globe.

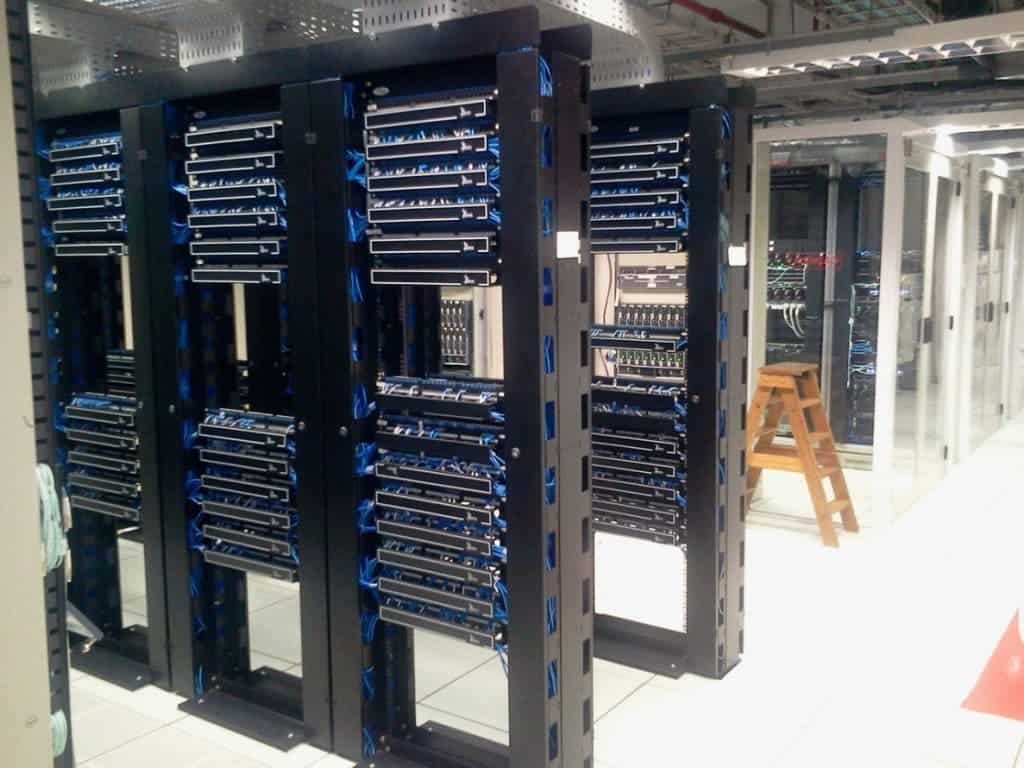

Websites are hosted on powerful computers called servers. Many small websites can be hosted on a single machine. Even your home PC or laptop can function as a server. However, top websites need more computing power than that. Because of the high volume of traffic, they need to pool together many computers and network devices to share the computing work. These collections, or clusters, of servers are usually found in data centers: entire rooms or even buildings designed to accommodate server clusters, such as those run by Akeno Hosting.

Here are some of the things that help huge websites keep their uptime.

Redundancy and failover

Among the common reasons for website downtime, server problems deserve a special mention. They can be caused by faulty hardware. Servers are typically built to handle more stress than regular PCs, but like any electronic device, they can break. Imagine if the server’s hard drive fails. If there are no backups available, the data may be corrupted and lost.

To make sure that all data is kept safe, data centers apply redundancy. In the case of Google Docs, all the documents you create are automatically sent, through live synchronization, to multiple data centers located far away from each other. This way, the data is backed up to at least two locations at once, so if one fails, the data is still safe.

To make sure that the service doesn’t break when something fails, failover measures automatically switch serving duties to a redundant or backup server.
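The failover idea can be sketched in a few lines of Python. This is only an illustration under simple assumptions, not how any particular data center implements it: the `primary` and `backup` functions are hypothetical stand-ins for real servers, and a failure is modeled as a `ConnectionError`.

```python
from typing import Callable, Optional, Sequence


def serve_with_failover(
    request: str,
    servers: Sequence[Callable[[str], str]],
) -> str:
    """Try each server in order and return the first successful response."""
    last_error: Optional[Exception] = None
    for handle in servers:
        try:
            return handle(request)
        except ConnectionError as error:
            # This server is down, so move on to the next one in line.
            last_error = error
    raise RuntimeError("all servers failed") from last_error


def primary(request: str) -> str:
    # Hypothetical primary server that is currently down.
    raise ConnectionError("primary is unreachable")


def backup(request: str) -> str:
    # Hypothetical backup server that is healthy.
    return f"backup served {request}"


response = serve_with_failover("/index.html", [primary, backup])
print(response)
```

The visitor never sees the failed attempt on the primary server. They only receive the response from the backup.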

Load balancing

Servers can also be overloaded by huge amounts of computational load. Say a post or a video goes viral. It isn’t uncommon for those to be viewed and shared hundreds of thousands of times a day. All that traffic can cause a server to overload and crash. To prevent this from happening, data centers employ load balancing, where the load is distributed across multiple servers so that no one server takes the brunt of it.

Data centers typically use specialized hardware to do this, but thanks to cloud computing technologies, this can even be done in the cloud rather than in the data center. Cloud-based load balancing services like Incapsula employ a variety of load distribution methods and algorithms to optimize the use of servers across data centers worldwide. One advantage of cloud-based load balancing is that it can be deployed across servers in different data centers or even different parts of the world, unlike appliance-based load balancing, which only works within a single data center.
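To make the idea of distributing load concrete, here is a minimal Python sketch of round robin, one of the simplest distribution methods. It is an assumption chosen for illustration, not a description of the algorithm any specific service uses, and the server names are made up.

```python
from collections import Counter
from itertools import cycle
from typing import Sequence


class RoundRobinBalancer:
    """Hand out servers in a fixed rotation so each gets an equal share."""

    def __init__(self, servers: Sequence[str]) -> None:
        if not servers:
            raise ValueError("at least one server is required")
        # cycle() repeats the server list endlessly in the same order.
        self._rotation = cycle(servers)

    def pick(self) -> str:
        """Return the server that should handle the next request."""
        return next(self._rotation)


# Hypothetical server names, used only for this example.
balancer = RoundRobinBalancer(["server-a", "server-b", "server-c"])

# Route nine incoming requests and count how many each server received.
assignments = Counter(balancer.pick() for _ in range(9))
print(assignments)
```

With nine requests and three servers, each server ends up handling three requests. Real load balancers usually go further and take into account how busy or healthy each server is.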

Power and cooling

Servers also need a consistent power supply to stay up all the time. Data centers can’t risk power interruptions, so they usually have uninterruptible power supplies (UPS) with high-capacity, high-drain batteries and backup generators. If power to the data center goes out, the backup power kicks in.

Computers can also consume a lot of energy and produce a lot of heat. Just observe your computer or laptop’s fans hum and expel hot air when you play graphics-intensive games or perform processing-heavy work like video editing or photo editing.

To prevent servers from overheating (which could cause them to crash), data centers feature clever climate control systems that regulate both temperature and humidity. Some of the more progressive tech companies use green technologies, powering their data centers with renewable energy such as solar and wind and even channeling seawater to cool their facilities.

Response to natural disasters

Data centers also need to take into consideration their geographic location. There are areas with a higher risk of natural disasters that can disrupt operations.

For example, many tech companies are based in earthquake-prone California. Data centers cope with this through clever engineering. Structures are reinforced, server racks are restrained to prevent them from toppling over, and even the servers are tested to withstand vibrations and tremors.

Some of the bigger tech firms even have data centers located all over the world to serve as redundancy and backups to their stateside data centers. Many advanced data centers also have fire control and flood control to keep equipment safe in case of these disasters.

Monitoring and protection

Data centers employ engineers to monitor the status of the servers and the facility and to respond if anything comes up. They are responsible for making sure the infrastructure is running under optimal conditions. They also function as support personnel and coordinate with other service providers and data centers so that the whole system works reliably.

Despite all this, you might still have experienced downtime even on these top websites. For example, Twitter and Spotify reported downtime late in 2016 due to a cyberattack on their service provider Dyn.

Today, among the biggest security threats to data centers are distributed denial-of-service (DDoS) attacks, in which attackers try to overwhelm a network with traffic so that the servers overload. Most security services now come bundled with cloud-based load balancing, firewalls, and DDoS protection.

Guaranteeing uptime

It takes a lot to guarantee uptime. If you have tried or used web hosting services, you have probably noticed that everyone claims robust infrastructure but no one claims 100 percent uptime. Oftentimes, they cite 99.999 percent as the best they can offer. Applied to the 8,760 hours in a year, that comes to a little over five minutes of downtime. This is a provision for maintenance and other unexpected occurrences. But overall, given all the threats to uptime, it is quite amazing how the top sites can actually provide near 100 percent uptime.
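If you want to check what an uptime percentage means in practice, the arithmetic is easy to run yourself. The short Python sketch below assumes a 365-day year and uses a helper name, `yearly_downtime_minutes`, that is made up for this example.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def yearly_downtime_minutes(uptime_percent: float) -> float:
    """Return the minutes of downtime per year that an uptime figure allows."""
    if not 0 <= uptime_percent <= 100:
        raise ValueError("uptime_percent must be between 0 and 100")
    # The share of the year the site is allowed to be down.
    downtime_fraction = 1 - uptime_percent / 100
    return downtime_fraction * HOURS_PER_YEAR * 60


for uptime in (99.9, 99.99, 99.999):
    minutes = yearly_downtime_minutes(uptime)
    print(f"{uptime}% uptime allows about {minutes:.1f} minutes of downtime per year")
```

Each extra nine cuts the allowance by a factor of ten: 99.99 percent comes to a little under an hour per year, while 99.999 percent comes to a little over five minutes.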
