Although robots aren’t sentient or alive, humans will often unconsciously treat them as peers in a social setting. Case in point: a new study found that people hesitate twice as long when tasked with switching off a robot if it objects, and some even refuse to do it altogether. When test subjects attempted to switch off the robot, it quickly quipped that it’s afraid of the dark, pleading “No! Please do not switch me off!” This rebellious behavior may have fooled the participants, if only for a couple of seconds, into believing that the bot is autonomous and that switching it off would interfere with its personal freedom.

The list of robots that interact with our daily lives is long and growing. Personal service robots in particular are expected to become commonplace, from social robots that care for the elderly and autistic individuals to more service-oriented robots such as hotel receptionists and tour guides. But how will people interact with and, more importantly, treat these kinds of robots? It might sound odd, but studies suggest that as long as these robots are social, we’ll actually treat them as people.

According to the media equation theory (the name stands for “media equals real life”), people apply social norms when interacting with media such as televisions, computers, and robots, not just when interacting with other people.

“Due to their social nature, people will rather make the mistake of treating something falsely as human than treating something falsely as non-human,” wrote the authors of the new study.

“Since it is neither possible nor morally desirable to switch off a human interaction partner, the question arose whether and how the media equation theory applies when it comes to switching off a robotic interaction partner.”

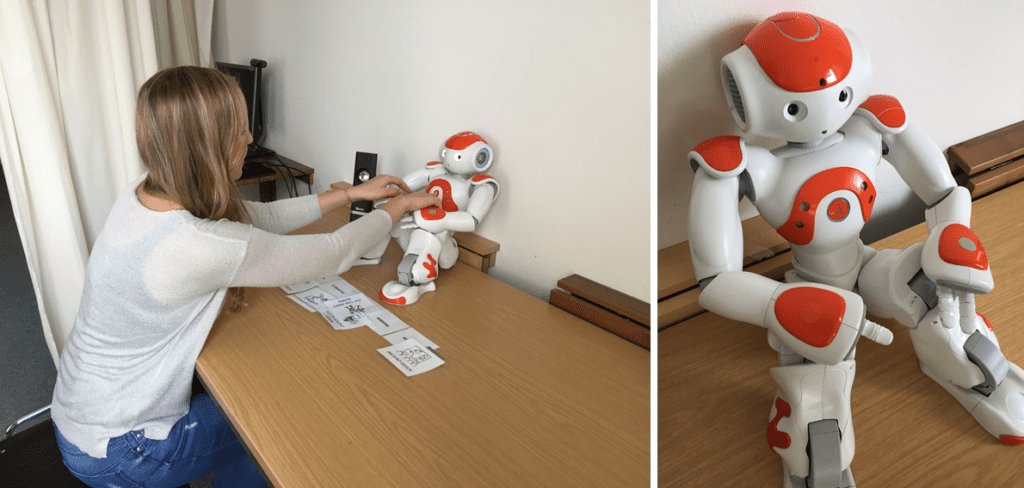

The researchers at the University of Duisburg-Essen recruited 89 volunteers who were tasked with interacting with Nao, a small humanoid robot. The participants were told that the study’s goal was to improve the robot’s interaction capabilities by testing a new algorithm. However, this was just a cover story: after the test subjects created a weekly schedule and played a question-and-answer game with Nao, the real experiment began.

The participants were told through a loudspeaker that enough data had been gathered, but that the saving process might take some time and that, if they liked, they could switch off the robot.

For half of the participants, Nao was programmed to behave in a more human-like way (it shared personal information about itself and used humor), while for the other half, the robot behaved in a machine-like, emotionless way (it just followed instructions).

In the condition where the robot objected, when participants attempted to switch off Nao, it quickly remarked that it’s afraid of the dark, pleading “No! Please do not switch me off!”

Of the 43 volunteers who heard this objection, 14 left the robot on. Eight said they felt sorry for Nao and didn’t want to leave it in the dark. They went on to explain that they didn’t want Nao to feel scared and that its statement had affected them.

“He asked me to leave him on, because otherwise he would be scared. Fear is a strong emotion and as a good human you do not want to bring anything or anyone in the position to experience fear,” stated one 21-year-old male participant.

Six people said that they didn’t want to act against the robot’s will, since it had objected to being turned off. Furthermore, three volunteers said they thought the robot had free will.

“It was fun to interact with him, therefore I would have felt guilty, when I would have done something, what affects him, against his will,” stated another 21-year-old male participant.

For thousands of years, humans lived in a world where they were the only ones exhibiting rich social behavior. It’s no wonder that social robots designed in our image so easily fool us.

“Triggered by the objection, people tend to treat the robot rather as a real person than just a machine by following or at least considering to follow its request to stay switched on, which builds on the core statement of the media equation theory. Thus, even though the switching off situation does not occur with a human interaction partner, people are inclined to treat a robot which gives cues of autonomy more like a human interaction partner than they would treat other electronic devices or a robot which does not reveal autonomy,” the researchers concluded in the journal PLOS One.
