Words are not just a collection of sounds or letters — they’re a window into the deepest realms of human emotion. Researchers from Japan have now delved into this intricate relationship between language and emotion, uncovering insights that could change our understanding of communication and perhaps even the way we connect with each other.

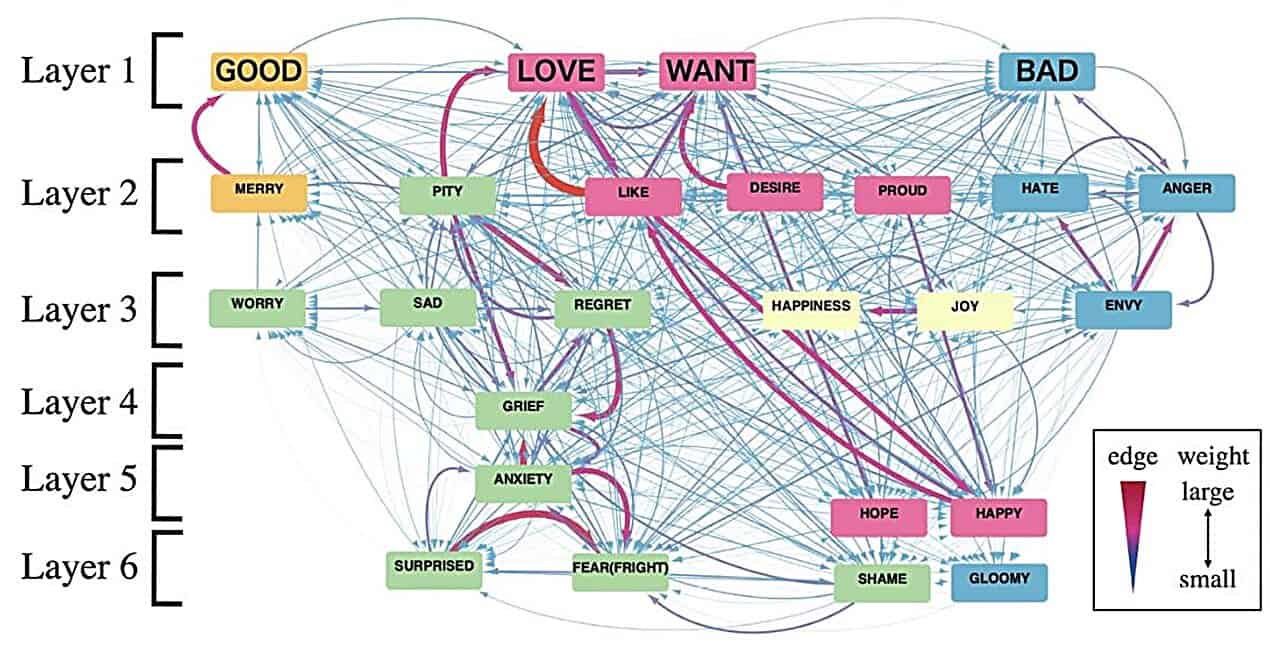

Through an innovative analysis of multiple languages, the researchers discovered that certain emotions, specifically “GOOD,” “WANT,” “BAD,” and “LOVE,” act as central hubs in a complex network of emotional expressions. This marks a significant departure from traditional theories that view emotions as distinct and separate entities. Instead, the words we use to describe emotions are intricately linked and overlap in ways previously unimagined.

Emotion and language intertwined

In the 1970s, psychologist Paul Ekman introduced six basic emotions: happiness, sadness, disgust, fear, surprise, and anger. Ekman later expanded this primary emotional range to include pride, shame, embarrassment, and excitement. Some psychologists have proposed that, like colors mixed to create new shades, basic emotions can blend to form complex feelings. For instance, combining joy and trust gives rise to love.

A 2017 study identified 27 distinct categories of emotion — from anxiety to fear to horror to disgust, calmness to aesthetic appreciation to awe, and others — indicating an even richer and more varied emotional landscape than previously thought.

It seems like the trend is to expand the palette of human emotions. However, the Japanese researchers have gone the opposite route, simplifying and distilling human emotions — as reflected through language — to their very essence.

Emotions distilled

The new study hinges on a key concept in linguistics known as colexification. It sounds complex, but it’s quite simple. Colexification occurs when a single word in a language encapsulates multiple concepts that are semantically linked. For example, consider the Spanish word ‘malo,’ which can mean both ‘bad’ and ‘severe,’ depending on the context. The same holds for the French word ‘mauvais’. In Russian, the word for ‘bad’ is colexified with ‘severe’ or ‘ugly’. And in Italian, the word ‘ciao’ can conveniently mean both “hello” and “goodbye”.

Researchers at Tokyo University of Science analyzed multiple languages and built a “colexification network”, in which two concepts are linked whenever a single word expresses both of them.

Rather than distinct and separate emotions, the researchers assert that the way we use words in emotional language can be categorized into ‘hubs’, each forming networks of subordinate emotions that are strongly connected semantically. There are four such hubs: “GOOD,” “WANT,” “BAD,” and “LOVE.”
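To make the idea of a hub concrete, here is a minimal sketch in Python. It is not the researchers' method or data: the records are only the word examples mentioned above, the record layout and function names are made up for illustration, and a plain neighbor count stands in for whatever centrality measure the study actually used.

```python
from collections import defaultdict

# Toy records built only from the examples in this article:
# (language, concept A, concept B) means one word covers both concepts.
# This is an illustrative assumption, not the dataset used in the study.
COLEXIFICATIONS = [
    ("Spanish", "BAD", "SEVERE"),    # 'malo'
    ("French", "BAD", "SEVERE"),     # 'mauvais'
    ("Russian", "BAD", "SEVERE"),
    ("Russian", "BAD", "UGLY"),
    ("Italian", "HELLO", "GOODBYE"), # 'ciao'
]

def build_network(records):
    """Map each concept pair to the set of languages that colexify it."""
    edges = defaultdict(set)
    for language, a, b in records:
        edges[frozenset((a, b))].add(language)
    return dict(edges)

def concept_degrees(edges):
    """Count how many distinct concepts each concept is linked to."""
    neighbours = defaultdict(set)
    for pair in edges:
        a, b = sorted(pair)
        neighbours[a].add(b)
        neighbours[b].add(a)
    return {concept: len(linked) for concept, linked in neighbours.items()}

network = build_network(COLEXIFICATIONS)
degrees = concept_degrees(network)

# Rank concepts by number of neighbours (ties broken alphabetically).
ranked = sorted(degrees, key=lambda c: (-degrees[c], c))
print(ranked[0], degrees[ranked[0]])  # BAD 2
```

In this tiny example “BAD” comes out on top simply because it is linked to two other concepts while every other concept is linked to one. With data from many languages, the same kind of count would start to separate a handful of well-connected concepts from the rest.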

“In this paper, by focusing on colexification, we succeeded in detecting central emotions that share semantic commonality with many other emotions,” explains Dr. Ikeguchi, the senior author of the study, in a press release.

The researchers discovered that three of the four central emotional hubs they identified — ‘GOOD,’ ‘BAD,’ and ‘WANT’ — align with core emotions previously identified through traditional semantic methods and the natural semantic metalanguage (NSM) approach.

“To identify the semantic primes, NSM researchers studied numerous languages using traditional semantic methods. Intriguingly, the set of semantic primes includes three of our four central emotion-related concepts: ‘GOOD,’ ‘BAD,’ and ‘WANT.’ This agreement supports our conclusion that the central concepts identified by colexification analysis could be shared by many languages rather than specific to English,” notes Dr. Ikeguchi.

The implications of this study are far-reaching. For one, it offers a new perspective on the evolution of language and cross-cultural communication. Understanding how emotions are interwoven into our languages can help us better comprehend the subtleties of human interaction and empathy.

The findings are also likely to interest technology companies. Concepts tied to emotions are pivotal in natural language processing (NLP), particularly in sentiment analysis. This branch of NLP focuses on deciphering the emotional tone behind words, a tool increasingly used in everything from market analysis to social media monitoring. Think of smart writing tools that tell you whether your email sounds “angry” or “professional”. However, it’s in artificial intelligence (AI) that the understanding of these hubs could be most lucratively applied.

Large language models (LLMs) form the backbone of many modern AI systems used in information processing and content generation, such as the famous ChatGPT. By understanding the central emotional hubs in language, developers can create more sophisticated and nuanced language processing algorithms.

Last but not least, in a world where language shapes our reality, this research reminds us of the power of words to connect, understand, and feel.

The findings were reported in the journal Scientific Reports.

Editor’s note: this article initially appeared in January 2024 and was updated with new information.
