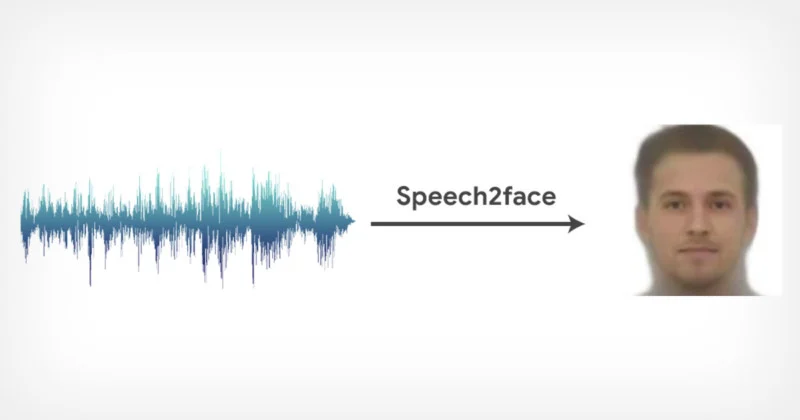

Some law enforcement and intelligence agencies across the world are experimenting with artificial intelligence to identify people using voice recordings and big data. But what if there’s no match? AI systems can still provide valuable input. For instance, researchers at MIT have demonstrated a novel machine-learning algorithm that can synthesize a person’s portrait based solely on a short audio recording of their voice.

Researchers at MIT’S Computer Science and Artificial Intelligence Laboratory (CSAIL) first trained their deep neural network by feeding it millions of videos scraped from YouTube showing people speaking in front of the camera. This huge swath of data trained the AI to correlate certain sound characteristics with facial features corresponding to age, gender, or ethnicity.

Throughout this process, there was no human intervention apart from the initial task of correlating voice and facial features. The AI learned all of this by itself with no external supervision, not even the labeling of subsets of data.

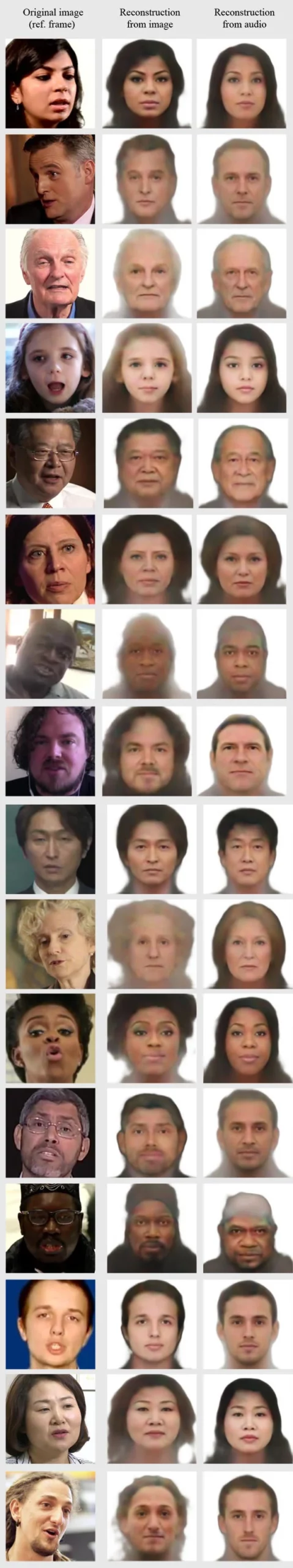

To test the system, the researchers designed a face decoder that reconstructs a speaker’s face from a still frame, regardless of its lighting or pose. This digital reconstruction was then compared to synthesized portraits solely from a speaker’s voice, with striking results that you can see in these images.

The synthesized faces are generic, meaning the produced images are not of specific individuals as those produced by the decoder. Nevertheless, they still manage to capture the basic facial features of a speaker such as skin color, gender, and age. The longer the voice recordings were, the more accurate the synthesized portrait proved to be.

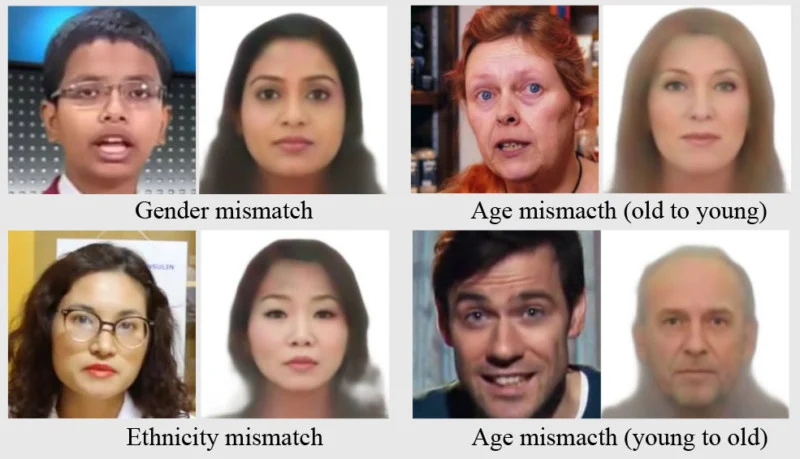

But there were also plenty of mismatches. High-pitched voices were often identified as female, even in cases when they came from males, such as young boys. Asian men who spoke in American English had portraits resembling white males, but this did not happen when the Asian voice spoke in Chinese.

The AI may remind some of their racist uncle, and the researchers are aware of these biases and are looking to overcome these limitations. Improving the system’s accuracy is a matter of providing more training data that is representative of the general population.

Until these limitations are addressed, real-world applications of this AI system should be treated with care. One possible use could be to make interactions between humans and machines more appealing. Machine-generated voices used by home devices and virtual assistants could now be given an appropriate face. Law enforcement could use this neural network to generate a portrait of a suspect when the only evidence is a voice recording. However, any government use will be sure to be met with criticism surrounding privacy and ethics.

“We believe that generating faces, as opposed to predicting specific attributes, may provide a more comprehensive view of voice face correlations and can open up new research opportunities and applications,” the MIT authors wrote in the description of their project called Speech2Face.

[via PetaPixel]

Was this helpful?