ZME Science reported the other day how the University of Missouri found the largest prime yet: 274,207,281-1. That’s 22,338,618 digits, 21.7 MB of storage if you were to put all the digits inside a .txt file. You’d need more than 100 days without sleep to pronounce it.

In 1951, Aimé Ferrier discovered the largest prime at the time (20,988,936,657,440,586,486,151,264,256,610,222,593,863,921) by hand. Since then, all other record setting primes were found by computers, for obvious reasons. It takes a lot of number crunching with minimal human oversight, but also nifty tricks to speed things up.

It would take your computer months to reach the University of Missouri discovery. It initially took 31 days of non-stop computing by the Great Internet Mersenne Prime Search at the University’s network of desktop computers. Squirrels — a software-development company specializing in wireless audio and video transmission — verified the operation in 2.3 days on an NVIDIA GPU and the other took 3.5 days using an AMD GPU. ZME Science caught up with Squirrels President David Stanfill who shared the company’s process, why this is really important and what’s next.

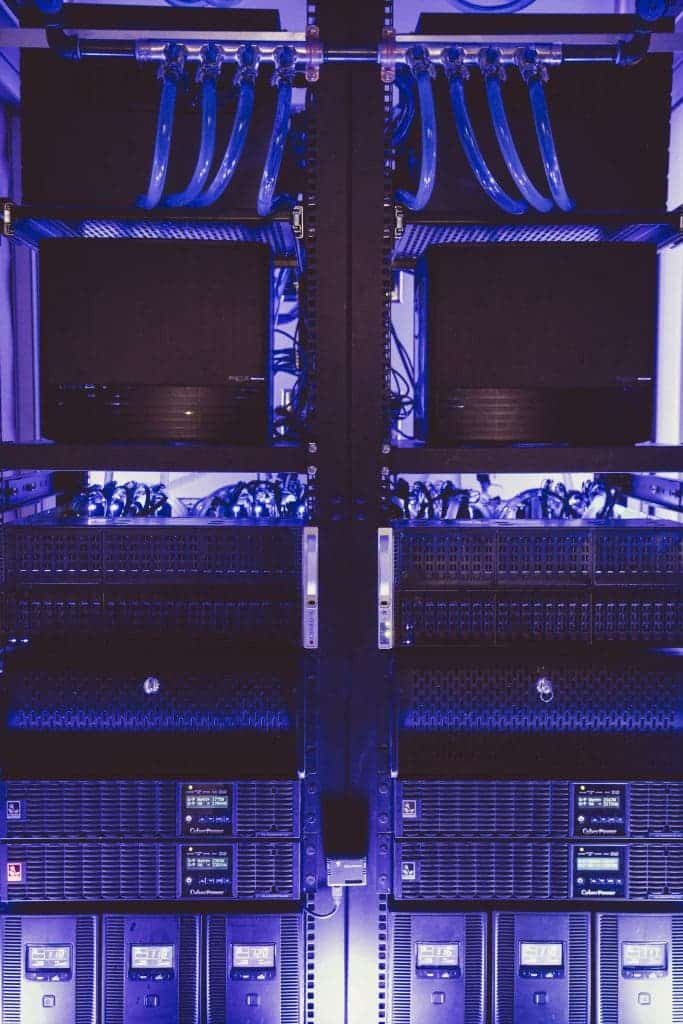

Squirrels used their own custom-built supercomputer, a system of 480.9 TFLOPS (2,400 times faster than your computer) which, however, only eats 24kW of power. A liquid cooling system helps the supercomputer stay in tip top shape, and any heat is recycled to warm the office instead of dumping it outside.

“The system is a cluster of dozens of high-end consumer graphics cards, but instead of playing games these cards are doing serious number crunching. A normal computer can do four to eight operations at once, where a graphics card can handle thousands of simultaneous actions in parallel,” Stanfill said.

“These cards aren’t meant to be piled 8 high into a system, the heat and demands on the host system is just too high, but Squirrels has tuned each card to act self-sufficiently, only interacting with the host computer when a task is starting or complete.”

“Doing one of these tests for a number of the size we verified takes around 34 quadrillion steps. A single error in any of those steps propagates quickly, and within just a few thousand steps the data you are working with is indistinguishable from the correct data. So we actually run our cards in parallel – every million steps or so three cards compare their results. If there is any mismatch, the cards back up and repeat the calculation until all three match.”

The prime was discovered by factoring 2 by itself millions of times, and subtracting one for each iteration. The methods used to both discover and verify the largest Mersenne prime number yet aren’t pure brute force, though. Algorithms are used to streamline the process and avoid unnecessary steps, from simple tweaks like ignoring any numbering ending in 5 (because any number ending in 5 is divisible by 5 and hence not a prime), to more complex algorithms like the Fast Fourier transform.

“FFTs are fundamental to many scientific and common computing algorithms, everything from image, audio, and video compression to advanced scientific analysis relys on the FFT. The FFT algorithm has been called most influential discovery in mathematics in the 20th century. NVIDIA and AMD both have research teams dedicated to putting out very fast FFT algorithms on their respective graphics card platforms and we use those in the core of the programs that search for large primes. The work and precision required for those large prime verifications has already identified and helped fix several issues with the precision of AMDs FFT library,” Stanfill said.

“This is the same kind of advantage this work has in discovering tiny flaws in hardware and software that was just revealed in the Intel Skylake bug,” he added.

“We are pressing these systems to the limit and everything has to be perfect. If anyone is going to find a bug or place to improve the FFT libraries or hardware involved, it is going to be those pushing the envelope with massive calculations that have no room for error.”

To verify the prime, Squirrels engineers tweaked the supercomputer for added accuracy by sacrificing speed. The risk of error is now infinitesimally small.

“We only utilized a fraction of our super computers raw power for this verification, but because of the intense scrutiny on this number, we make sure every calculation is perfect. We actually run the same calculation on each of those cards with a slightly different shift value to ensure different bits of the memory and processor wiring is used for each test. That’s like taking 456 * 456 on one card, 45600 * 45600 on another, and 4560000 * 4560000 on the final card, and then dividing the results by 1 , 10000, and 100000000 when we are done and making sure they all match,” Stanfill told ZME Science.

“All of the technology we developed and put in place makes those trade offs in realtime depending on the task at hand. It also manages the the error checking applied to any problem our cluster works on, not just prime searches. That’s what we mean when we say that this problem is a driving problem — the system and technology we improve to make sure this verification is perfect will also help ensure the results of tomorrows analysis of a possible cancer cure is just as correct,” Stanfill continued.

Next, Squirrels plans to help a landing hand using its expertise and hardware to other academic lines of research from protein folding analysis to help find cures for diseases, to deep learning algorithms for rapidly identifying cancerous cells in medical images, to extremely detailed physics and chemical simulations to identify potential improvements in battery and other technologies.

Was this helpful?