Self-driving cars are no longer a distant prospect. They’re already knocking at our doors, and while it will still take a few years before they become commonplace, their arrival seems a matter of ‘when’ rather than ‘if’. For the most part, the decisions smart cars have to make are fairly straightforward: stop at a red light, stay in lane, and do whatever a careful driver would do. But what about complex or extreme situations?

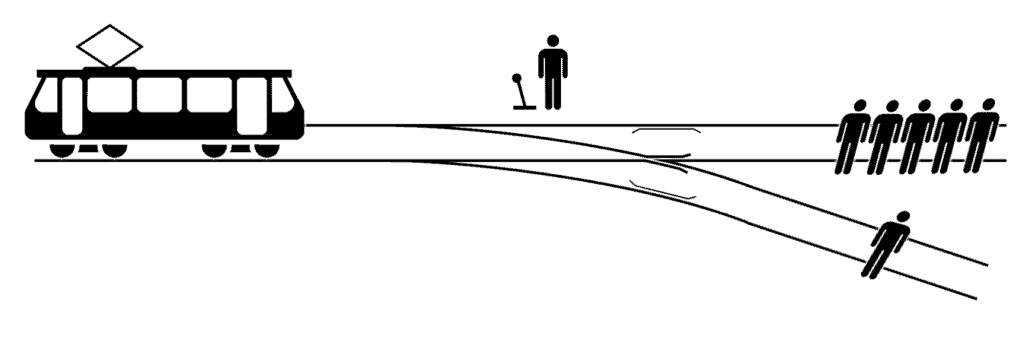

Take a variation of the already infamous trolley problem. Let’s say an empty self-driving car is traveling down a road and is about to run into a few pedestrians. If it can swerve onto the sidewalk and avoid hurting anyone, it should probably do that. But what if there are also people on the sidewalk? Or what if the car is not empty, and the maneuver would put its own passenger in danger? What would the decision process even look like?

Oftentimes, the moral framework of self-driving cars is discussed as one of two options: either selfish (do whatever it can to protect itself) or utilitarian (do whatever appears to cause the least overall damage). But that framing misses the point, because it doesn’t address the moral subtleties and dilemmas cars will face.

“Current approaches to ethics and autonomous vehicles are a dangerous oversimplification—moral judgment is more complex than that,” says Veljko Dubljević, an assistant professor in the Science, Technology & Society (STS) program at North Carolina State University and author of a paper outlining this problem and a possible path forward.

“For example, what if the five people in the car are terrorists? And what if they are deliberately taking advantage of the AI’s programming to kill the nearby pedestrian or hurt other people? Then you might want the autonomous vehicle to hit the car with five passengers.

“In other words, the simplistic approach currently being used to address ethical considerations in AI and autonomous vehicles doesn’t account for malicious intent. And it should.”

So what’s the alternative? Dubljević recommends implementing something called the Agent-Deed-Consequence (ADC) model. The model works on three basic questions to make a decision regarding an action:

- Is the agent’s intention good or bad?

- Is the action itself good or bad?

- Is the outcome of the action good or bad?

The ADC model is an attempt to explain moral judgments by breaking them down into positive or negative intuitive evaluations of the Agent, Deed, and Consequence in any given situation. This allows for moral complexities that other AIs aren’t equipped to deal with.

For instance, let’s say the action is running a red light. The action itself is bad, but the intention can be good if the car does it to avoid a collision. If the outcome is also good, the action would be judged acceptable on balance. This framework would give self-driving cars more tools for handling the gray-area problems they will likely encounter at some point.
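To make the red-light example concrete, here is a minimal Python sketch of the three-part evaluation. It is only an illustration: the article does not say how the three judgments are combined, so the equal weights, the simple sum, and the names `Evaluation`, `adc_score`, and `is_acceptable` are all assumptions made for this example, not part of Dubljević’s model.

```python
from dataclasses import dataclass

# Each component is judged as simply good (+1) or bad (-1),
# mirroring the three yes/no questions listed above.
GOOD, BAD = 1, -1


@dataclass(frozen=True)
class Evaluation:
    agent: int        # is the intention good or bad?
    deed: int         # is the action itself good or bad?
    consequence: int  # is the outcome good or bad?


def adc_score(e, weights=(1.0, 1.0, 1.0)):
    """Combine the three judgments into one number.

    ASSUMPTION: a weighted sum with equal weights. The article does not
    specify a combination rule; this one is purely illustrative.
    """
    w_agent, w_deed, w_consequence = weights
    return w_agent * e.agent + w_deed * e.deed + w_consequence * e.consequence


def is_acceptable(e, weights=(1.0, 1.0, 1.0)):
    """Hypothetical decision rule: accept when the combined score is positive."""
    return adc_score(e, weights) > 0


# Running a red light to avoid a collision:
# good intention, bad deed, good outcome.
red_light_to_avoid_crash = Evaluation(agent=GOOD, deed=BAD, consequence=GOOD)

# The same bad deed with a bad intention but a good outcome,
# for example a lucky escape after reckless driving.
red_light_reckless = Evaluation(agent=BAD, deed=BAD, consequence=GOOD)

print(is_acceptable(red_light_to_avoid_crash))  # True
print(is_acceptable(red_light_reckless))        # False
```

Under these assumed weights, two good components outweigh one bad one, which matches the red-light example. A purely outcome-based rule could not tell the two cases apart, since both end well; scoring the intention separately is what distinguishes them in this sketch.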

Of course, implementing this model is also rather difficult. For one, these moral decisions are hard even for humans, and there is often no universally agreed-upon framework for deciding whether outcomes are good or bad, which is why trolley-type problems remain so interesting and widely used in philosophy. Dubljević says the ADC model can work for self-driving cars, but more research is needed to ensure that it can be properly implemented and that nefarious actors don’t take advantage of it.

“I have led experimental work on how both philosophers and laypeople approach moral judgment, and the results were valuable. However, that work gave people information in writing. More studies of human moral judgment are needed that rely on more immediate means of communication, such as virtual reality, if we want to confirm our earlier findings and implement them in AVs. Also, vigorous testing with driving simulation studies should be done before any putatively ‘ethical’ AVs start sharing the road with humans on a regular basis. Vehicle terror attacks have, unfortunately, become more common, and we need to be sure that AV technology will not be misused for nefarious purposes.”

Journal Reference: Veljko Dubljević, Toward Implementing the ADC Model of Moral Judgment in Autonomous Vehicles, Science and Engineering Ethics (2020). DOI: 10.1007/s11948-020-00242-0
