Researchers at DeepMind, Google’s dedicated branch tasked with innovation in artificial intelligence, just trained a machine learning algorithm to find new, more efficient algorithms for matrix multiplications. Yes, that’s as meta as it gets.

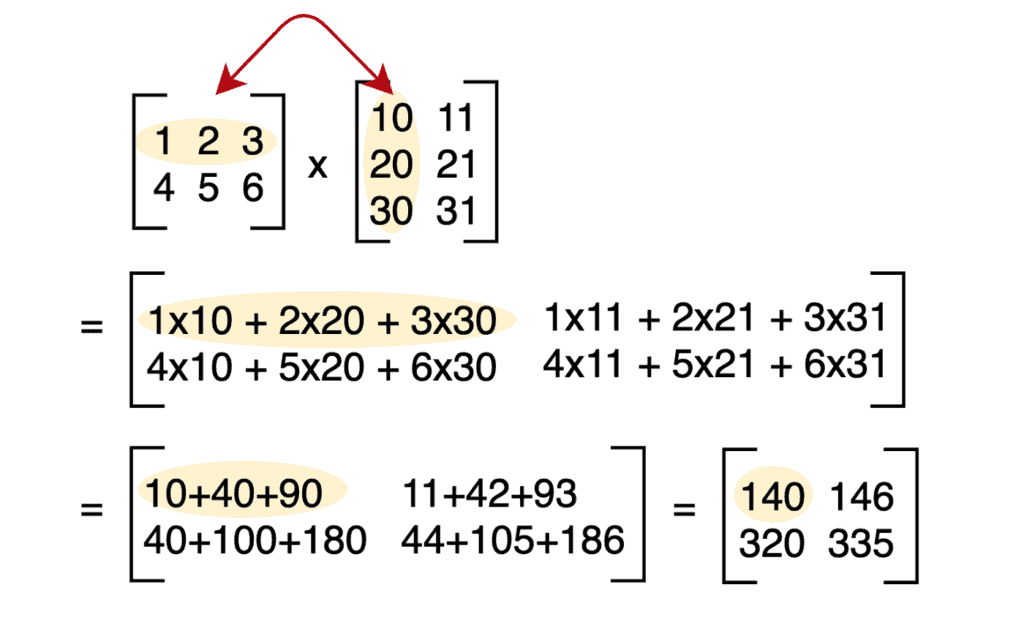

Several things are remarkable about this research, which could speed up many computing tasks and save energy. But first, a quick primer on matrix multiplication. A matrix is a fancy mathematical term for a grid of numbers arranged in rows and columns.

Matrices and matrix multiplication form a fundamental backbone of computing. A great deal of software, and even some hardware, relies on this basic operation. Your display screen, for instance, shows you this article because its pixels are represented as a grid, and these pixels refresh with new information faster than your eyes can track.

In linear algebra, matrix multiplication is a binary operation that produces a matrix from two matrices. This is typically done by multiplying the rows of one matrix with the columns of the other. You might remember this technique from high school algebra, but there are actually many other methods to multiply matrices together. In fact, there are trillions of trillions of ways to do it, and for a given grid size the fastest are the ones that take the fewest steps to compute.
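To make the "rows times columns" method concrete, here is a minimal Python sketch. The function name `naive_matmul` and the sample matrices are illustrative choices, not something from the DeepMind study. The function returns both the product and the number of scalar multiplications it used, which is the "step count" the rest of this article is about.

```python
def naive_matmul(a, b):
    """Multiply a (n x m) by b (m x p) the schoolbook way.

    Returns the product matrix and the number of scalar
    multiplications that were needed to compute it.
    """
    n, m, p = len(a), len(b), len(b[0])
    product = [[0] * p for _ in range(n)]
    mults = 0
    for i in range(n):          # each row of a
        for j in range(p):      # each column of b
            for k in range(m):  # pair up the entries and accumulate
                product[i][j] += a[i][k] * b[k][j]
                mults += 1
    return product, mults


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
result, mults = naive_matmul(a, b)
print(result, mults)  # [[19, 22], [43, 50]] 8
```

For two square grids of size n, this method always uses n × n × n multiplications: 8 for 2×2 grids and 64 for 4×4 grids.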

Faced with an almost infinite number of possibilities, how do you go about finding the most efficient one?

This is where DeepMind’s computer scientists came in. They tackled the puzzle by doing what they do best: turning AIs into champions at games.

Previously, DeepMind made headlines after its AlphaZero AI beat the best humans at board games like chess or Go. Now, they’ve tweaked AlphaZero into a new version that treats the matrix multiplication problems as a sort of 3D board game.

“Through a set of allowed moves, corresponding to algorithm instructions, the player attempts to modify the tensor and zero out its entries. When the player manages to do so, this results in a provably correct matrix multiplication algorithm for any pair of matrices, and its efficiency is captured by the number of steps taken to zero out the tensor,” DeepMind researchers wrote in a recent blog post.
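The game described in the quote can be sketched in a few lines of Python for the smallest case, 2×2 matrices. This is only an illustration under stated assumptions: the index convention and the helper names (`matmul_tensor`, `play_move`, `is_zero`) are hypothetical, and the seven moves listed are Strassen's 1969 algorithm written as vector triples, not output from AlphaTensor. Each move subtracts one "product" from the tensor, and a sequence of moves that leaves only zeros is a valid multiplication algorithm.

```python
from itertools import product


def matmul_tensor(n):
    """Build the n x n matrix multiplication tensor.

    Entry [a][b][c] is 1 when (entry a of A) * (entry b of B)
    contributes to entry c of the product C, with matrix entries
    flattened row by row. This convention is an assumption made here.
    """
    size = n * n
    t = [[[0] * size for _ in range(size)] for _ in range(size)]
    for i, j, k in product(range(n), repeat=3):
        t[n * i + k][n * k + j][n * i + j] = 1
    return t


def play_move(t, u, v, w):
    """One move: subtract the outer product of u, v and w from t."""
    size = len(t)
    for a, b, c in product(range(size), repeat=3):
        t[a][b][c] -= u[a] * v[b] * w[c]


def is_zero(t):
    return all(x == 0 for plane in t for row in plane for x in row)


# Strassen's seven products as (u, v, w) triples:
# u combines entries of A, v combines entries of B,
# w says which entries of C receive the product.
STRASSEN_MOVES = [
    ((1, 0, 0, 1), (1, 0, 0, 1), (1, 0, 0, 1)),
    ((0, 0, 1, 1), (1, 0, 0, 0), (0, 0, 1, -1)),
    ((1, 0, 0, 0), (0, 1, 0, -1), (0, 1, 0, 1)),
    ((0, 0, 0, 1), (-1, 0, 1, 0), (1, 0, 1, 0)),
    ((1, 1, 0, 0), (0, 0, 0, 1), (-1, 1, 0, 0)),
    ((-1, 0, 1, 0), (1, 1, 0, 0), (0, 0, 0, 1)),
    ((0, 1, 0, -1), (0, 0, 1, 1), (1, 0, 0, 0)),
]

tensor = matmul_tensor(2)
for u, v, w in STRASSEN_MOVES:
    play_move(tensor, u, v, w)
print(is_zero(tensor))  # True: seven moves clear the board
```

The schoolbook method corresponds to clearing the same board in eight moves, one per nonzero entry, so the number of moves is exactly the number of multiplications the resulting algorithm needs.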

“This game is incredibly challenging – the number of possible algorithms to consider is much greater than the number of atoms in the universe, even for small cases of matrix multiplication. Compared to the game of Go, which remained a challenge for AI for decades, the number of possible moves at each step of our game is 30 orders of magnitude larger (above 10^33 for one of the settings we consider).”

The new AI, known as AlphaTensor, started off as a blank slate, meaning it had no prior knowledge of any solutions to matrix multiplication. It was simply tasked with finding an algorithm for matrix multiplication that takes the fewest steps. That’s it.

It’s the same approach that helped AlphaZero become a near-unbeatable champion at chess and Go, even though the AI started out knowing nothing about the games beyond their rules. Its secret is a type of machine learning called reinforcement learning, in which the AI interacts with an environment to achieve a goal set out by the programmers, receiving a ‘reward’ each time it performs an action that inches it closer to the goal.

AlphaTensor also uses tree search, in which it evaluates the outcomes of a stupendous number of branching possibilities, like where the pieces could be ten moves later in a chess game, and chooses which path to prioritize for optimum efficiency in meeting its goal.

A 50-year record in computer science shattered with ease

Imagine two matrices, each comprising two rows and two columns. It’s the most basic configuration there is and if you were to multiply the two the conventional way we were taught in school, you’d end up with eight multiplications. But in 1969, mathematician Volker Strassen found a nifty trick to cut down the number of multiplications to 7.
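Strassen's trick can be written out directly. The sketch below follows the standard textbook formulas for his 2×2 method; the function name `strassen_2x2` and the sample matrices are illustrative. It trades one multiplication for extra additions and subtractions, using exactly seven products (`m1` to `m7`).

```python
def strassen_2x2(a, b):
    """Multiply two 2x2 matrices with 7 multiplications (Strassen, 1969)."""
    (a11, a12), (a21, a22) = a
    (b11, b12), (b21, b22) = b

    # The seven products: each line contains exactly one multiplication.
    m1 = (a11 + a22) * (b11 + b22)
    m2 = (a21 + a22) * b11
    m3 = a11 * (b12 - b22)
    m4 = a22 * (b21 - b11)
    m5 = (a11 + a12) * b22
    m6 = (a21 - a11) * (b11 + b12)
    m7 = (a12 - a22) * (b21 + b22)

    # Only additions and subtractions are needed to assemble the result.
    return [
        [m1 + m4 - m5 + m7, m3 + m5],
        [m2 + m4, m1 - m2 + m3 + m6],
    ]


print(strassen_2x2([[1, 2], [3, 4]], [[5, 6], [7, 8]]))
# [[19, 22], [43, 50]]
```

Saving a single multiplication looks minor, but the same formulas work when the entries are themselves blocks of a larger matrix, so the saving compounds as matrices grow.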

In the new study, AlphaTensor managed to multiply two 4×4 matrices using only 47 multiplications, rather than the 64 it takes if you painstakingly multiply each row of one matrix with each column of the other. That’s also two steps fewer than the 49 obtained by applying Strassen’s method recursively, which had held the record for 4×4 matrices for more than 50 years. The 47-step algorithm applies to modular arithmetic, where entries are treated as 0s and 1s.
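The figures 64 and 49 both follow from simple counting, which the short Python sketch below reproduces. The function names are illustrative, and the recursive count assumes the matrix size is a power of two, so that the grid can be split into four equal blocks again and again.

```python
def schoolbook_count(n):
    """Multiplications used by the row-by-column method on n x n matrices."""
    return n ** 3


def strassen_count(n):
    """Multiplications used when Strassen's 2x2 method is applied recursively.

    Assumes n is a power of two: each level splits the matrices into
    2x2 grids of blocks and needs 7 block products instead of 8.
    """
    if n < 1 or n & (n - 1):
        raise ValueError("n must be a power of two")
    if n == 1:
        return 1
    return 7 * strassen_count(n // 2)


for n in (2, 4, 8):
    print(n, schoolbook_count(n), strassen_count(n))
# 2 8 7
# 4 64 49
# 8 512 343
```

For 4×4 matrices the recursion gives 7 × 7 = 49, the number AlphaTensor's 47-multiplication algorithm improves on.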

AlphaTensor looked for multiplication algorithms for more than 70 different sizes of matrices. In every instance, it either beat the existing best algorithm or matched the fastest algorithm currently known for that matrix size. For instance, AlphaTensor multiplied two 9×9 matrices in 498 steps rather than the previous record of 511, and two 11×11 matrices in 896 steps instead of 919.

When the DeepMind team put these new algorithms to work in Nvidia V100 GPUs and Google TPU processors, two chipsets that are routinely used to train neural networks, they found that the algorithms were 10% to 20% faster than what these chips typically use for multiplying matrices.

It’s not clear whether consumer processors and graphics cards like the ones powering your smartphone or tablet could reap the same gains, but researchers are ready to explore this question. Nevertheless, researchers who use supercomputers to model the climate, make weather forecasts, or compute complex dynamic systems could see their work speed up as a result of these developments.

And, using this success as a proof of concept, there’s nothing stopping AlphaTensor from developing new, better algorithms for a host of computational tasks.

“Our research also shows that AlphaZero is a powerful algorithm that can be extended well beyond the domain of traditional games to help solve open problems in mathematics. Building upon our research, we hope to spur on a greater body of work – applying AI to help society solve some of the most important challenges in mathematics and across the sciences,” DeepMind researchers wrote.

The findings were reported today in the journal Nature.
